Boston is about to bet big on the future, and it’s a future written in code. Starting with the graduating class of 2028, all students in Boston Public Schools will need to demonstrate proficiency in artificial intelligence to earn their diplomas. The move, announced late last week, has sparked a predictably lively debate online – and not just about the merits of AI education itself. A Reddit thread, surfacing just hours ago, pointed to a nagging question: are we rushing into this without acknowledging a growing body of evidence suggesting more tech in the classroom doesn’t automatically equal better outcomes?

Beyond the Hype: Why Boston’s AI Mandate Matters Now

This isn’t simply about adding another line item to the graduation requirements. Boston’s decision reflects a fundamental shift in how we view education in the 21st century. The labor market is undergoing a seismic transformation, driven by advancements in AI and automation. Jobs that exist today may be obsolete tomorrow, and the skills needed to thrive are rapidly evolving. Boston is attempting to proactively equip its students with the tools they’ll need to navigate this new landscape – and, crucially, to shape it. But the timing is fraught. The Reddit comment about recent studies isn’t off-base. Research consistently shows a complex relationship between technology integration and student performance. Simply throwing devices and software at the problem doesn’t guarantee improvement and can, in some cases, actively hinder learning.

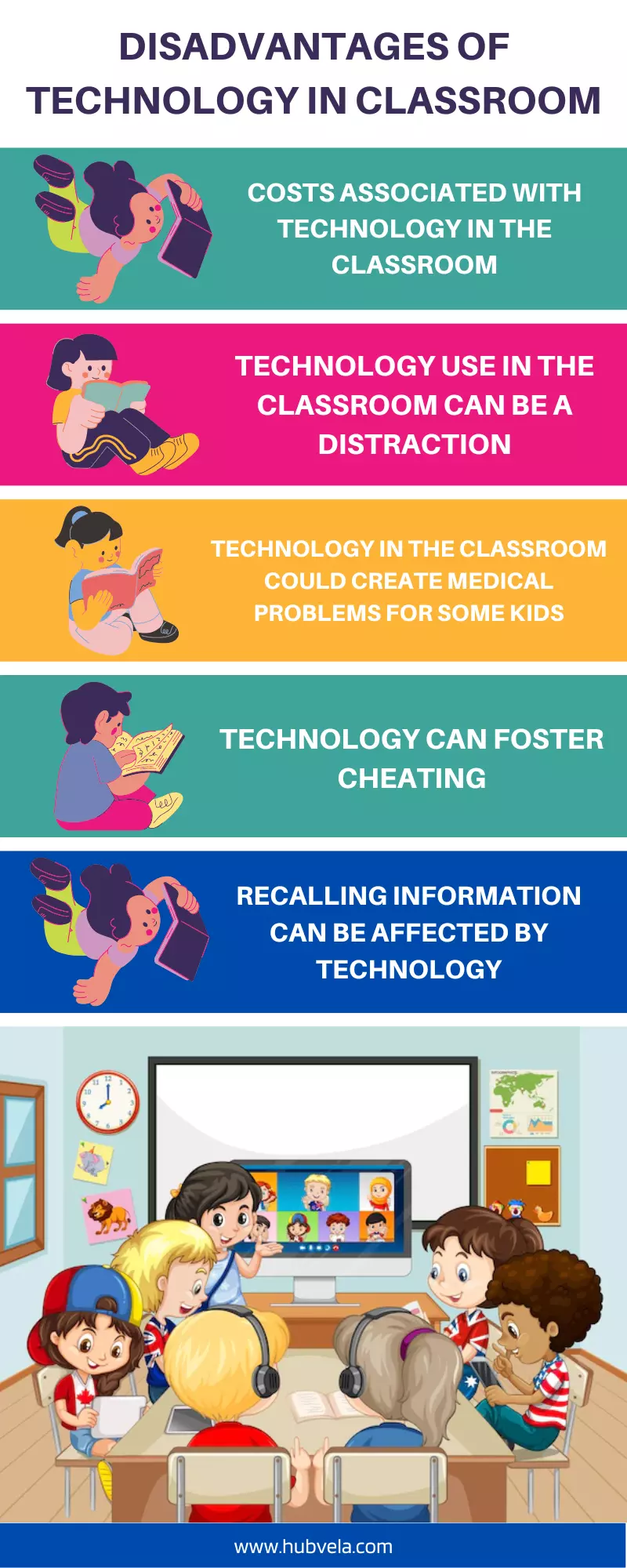

The Paradox of Progress: When More Tech Means Less Learning

The concern raised on Reddit taps into a growing body of research highlighted by organizations like the Organisation for Economic Co-operation and Development (OECD). Its 2015 study, “Students, Computers and Learning: Making the Connection”, found little improvement in reading, mathematics, or science scores in countries that had invested heavily in computer technology in schools. In some cases, scores actually declined. The issue isn’t the technology itself, but how it’s implemented. Passive consumption of digital content, replacing teacher-led instruction with online modules, and a lack of adequate teacher training are all contributing factors. The fear is that a rushed AI curriculum, implemented without careful consideration of these pitfalls, could exacerbate existing inequalities and leave students even further behind.

A Historical Echo: The Typewriter and the Curriculum

This isn’t the first time education has faced a technological upheaval. In the late 19th and early 20th centuries, the introduction of the typewriter sparked a similar debate. Should schools prioritize teaching typing skills? Would it prepare students for the future, or simply train them for a specific, potentially fleeting, profession? The debate ultimately led to the widespread inclusion of typing in school curricula, but it also highlighted the importance of balancing practical skills with foundational knowledge. The parallel is striking. AI, like the typewriter, is a tool. And like any tool, its effectiveness depends on the skill of the user – and the underlying understanding of the principles at play.

What Does “AI Proficiency” Even Mean? Defining the Curriculum

The biggest unanswered question surrounding Boston’s mandate is: what exactly will “AI proficiency” entail? Will it be coding skills? An understanding of AI ethics? The ability to critically evaluate AI-generated content? The details are still being hammered out, but officials have indicated a focus on foundational concepts rather than specialized programming. “We’re not trying to turn every student into an AI engineer,” explains Dr. Sheila Lirio Marcelo, founder and CEO of CareMessage, a non-profit leveraging AI to improve healthcare access. “The goal is to ensure that all students have a basic understanding of how AI works, its potential benefits and risks, and how to use it responsibly.”

“AI literacy is becoming as essential as traditional literacy. Students need to be able to understand, interpret, and critically evaluate the information they encounter in an increasingly AI-driven world.”

This approach aligns with a growing movement towards “AI literacy” – a broader concept that emphasizes critical thinking, problem-solving, and ethical reasoning. However, even a focus on foundational concepts presents significant challenges. Teachers need to be adequately trained, curriculum materials need to be developed, and assessments need to be designed to accurately measure student understanding. Boston is allocating $500,000 to professional development for teachers, but whether that will be enough remains to be seen. The district is also partnering with local universities and tech companies to develop curriculum resources, a move applauded by industry leaders.

The Economic Ripple Effect: Will Boston’s Graduates Have an Edge?

Boston’s decision is likely to have a ripple effect beyond the city limits. Other school districts may follow suit, creating a demand for AI-trained educators and curriculum developers. The tech sector, already facing a shortage of skilled AI professionals, will undoubtedly benefit. According to a recent report by Brookings, demand for AI-related jobs has increased by 74% over the past five years. This trend is expected to continue, making AI skills increasingly valuable in the labor market. However, there’s a risk that this mandate could widen the gap between students from affluent and disadvantaged backgrounds. Access to technology, quality internet connectivity, and supplemental educational resources are all factors that can influence student success. Boston needs to ensure that all students have equal opportunities to acquire the skills they need to thrive in the age of AI.

The curriculum must also address the ethical implications of AI. Bias in algorithms, data privacy concerns, and the potential for job displacement are all critical issues that students need to grapple with. Simply teaching students how to use AI tools without addressing these ethical considerations would be a disservice. As Meredith Whittaker, president of the Signal Foundation, recently stated:

“We need to move beyond a purely technical understanding of AI and embrace a more critical and socially aware approach. AI is not neutral; it reflects the values and biases of its creators.”

Looking Ahead: A Calculated Risk or a Missed Opportunity?

Boston’s AI mandate is a bold move, one that carries both significant risks and potential rewards. It’s a calculated gamble on the future, a recognition that the world is changing and that education must adapt. Whether it succeeds will depend on careful planning, adequate funding, and a commitment to equity. The Reddit thread that sparked this exploration wasn’t simply a cynical dismissal of progress; it was a legitimate question about the best way to prepare students for an uncertain future. The answer, it seems, lies not just in embracing new technologies, but in understanding their limitations and ensuring that they are used to enhance, not replace, the core principles of a well-rounded education. What do *you* think? Is Boston getting ahead of the curve, or is it rushing into a future it hasn’t fully considered?
