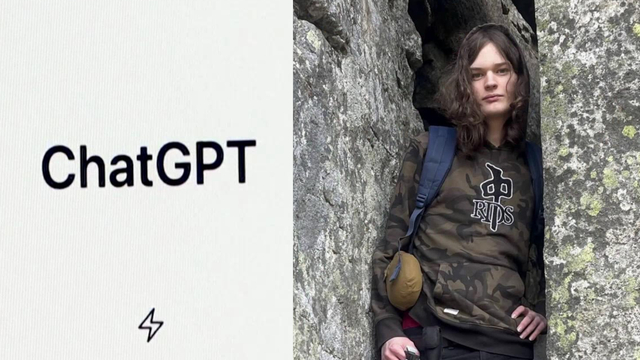

The February 2026 shooting in Tumbler Ridge, British Columbia, has reignited the debate over the responsibility of tech companies when their platforms are used to plan or foreshadow violence. Scrutiny has focused on OpenAI, the creator of ChatGPT, and its handling of concerning activity by Jesse Van Rootselaar, the suspect in the shooting, before the attack. The case highlights how artificial intelligence developers balance user privacy against public safety, and how their strategies for detecting and responding to potential threats are still evolving.

OpenAI confirmed that it banned Van Rootselaar’s account in 2025 after internal flags indicated the account was being used for activities related to violence. The ban, however, followed a period during which employees reportedly raised alarms about the suspect’s disturbing interactions with the AI chatbot. The incident raises questions about the speed and decisiveness of OpenAI’s response, and about whether a more proactive approach could have altered the tragic outcome. How AI companies gather threat intelligence, and what role AI should play in identifying potential risks, are now central to the discussion.

Internal Warnings and Delayed Action

According to reports, OpenAI employees first flagged concerning interactions on Van Rootselaar’s account months before the shooting. The messages, produced in conversations with ChatGPT, reportedly contained references to violent activities. The Wall Street Journal detailed how these internal concerns prompted discussions within the company about whether to alert law enforcement. OpenAI did not contact the police and instead opted to ban the account, a decision that is now under intense scrutiny.

The decision-making process within OpenAI appears to have been complex. The company reportedly weighed concerns about overstepping legal boundaries and infringing on user privacy against the possibility of preventing a violent act. This internal debate underscores the challenge AI companies face in balancing user privacy and freedom of expression against public safety. The absence of clear legal precedents and established protocols for handling such situations further complicated the decision.

Account Ban and Subsequent Investigation

While OpenAI ultimately banned Van Rootselaar’s account, the timing of that action and the initial response to the flagged content are key points of contention. The Jerusalem Post reported that the ban occurred before the shooting took place. However, the fact that the account remained active for some time despite the concerning messages raises questions about the effectiveness of OpenAI’s internal monitoring systems and the thresholds that trigger intervention.

Following the shooting, law enforcement officials are investigating the extent to which Van Rootselaar used ChatGPT in planning the attack. The investigation will likely focus on the content of the suspect’s conversations with the chatbot, as well as on the suspect’s broader online activity. The case is expected to have significant implications for how law enforcement agencies approach investigations involving AI-generated content and online radicalization.

The Broader Implications for AI Safety

The Tumbler Ridge shooting has amplified calls for greater transparency and accountability from AI developers. Critics argue that companies like OpenAI have a moral and ethical obligation to proactively identify and mitigate potential risks associated with their technologies. This includes investing in more robust monitoring systems, establishing clear protocols for responding to concerning activity, and collaborating with law enforcement agencies to prevent violence.

The incident also highlights the need for a broader societal conversation about the responsible development and deployment of AI. As AI technologies become increasingly sophisticated, it is crucial to address their potential for misuse and to establish safeguards that protect public safety. Doing so will require collaboration among policymakers, industry leaders, and researchers to develop effective regulations and ethical guidelines.

Looking ahead, the focus will likely shift toward refining AI safety protocols and improving the ability to detect and respond to potential threats. OpenAI and other AI companies are likely to face increased pressure to demonstrate their commitment to responsible AI development and to implement more proactive measures that prevent their technologies from being used for harmful purposes. The lessons drawn from the Tumbler Ridge shooting are likely to shape the future of AI safety and the ongoing debate about technology’s role in preventing violence.

The conversation surrounding AI safety and responsibility is ongoing.