The debate over AI-generated content is not a new phenomenon but a recurrence of the century-old ghostwriting industry, now impacting the $15 billion global EdTech sector. As institutions like Vanderbilt University face backlash over AI disclosure, investors must evaluate the liability risks facing publishing and education stocks. This shift represents a critical inflection point for intellectual property valuation and content authenticity markets.

When markets open on Monday, March 31, 2026, the ripple effects of the “AI slop” controversy will likely extend beyond academic ethics into corporate balance sheets. The recent incident at Vanderbilt University, where an administrative email regarding a campus tragedy was disclosed as AI-generated, serves as a microcosm for a broader market correction. We are witnessing the commoditization of authorship, a trend that threatens to erode brand equity for firms relying on perceived human expertise.

Here is the math on why this matters for your portfolio. The ghostwriting industry, historically a niche service for celebrities and executives, has been democratized by Large Language Models (LLMs). While this reduces operational costs, it introduces significant reputational risk. In the financial sector, trust is the primary asset. When a financial institution or educational body outsources its voice to an algorithm without clear disclosure, it risks a decline in stakeholder confidence comparable to a credit rating downgrade.

The Bottom Line

- EdTech Volatility: Companies like Chegg (NYSE: CHGG) and 2U (NASDAQ: TWOU) face increased regulatory scrutiny regarding AI detection and academic integrity policies.

- Publishing Margins: Traditional publishers may see short-term margin expansion of 15-20% through AI adoption, but long-term subscription retention rates remain unproven.

- Liability Exposure: Legal precedents set in 2024-2025 regarding copyright infringement could result in contingent liabilities for firms deploying unchecked generative AI.

The Economics of Outsourced Authorship

The historical precedent for this disruption dates back to 1908, when the term “ghostwriter” first appeared in the Daily Star of Lincoln, Nebraska. At that time, the service was a luxury good; a high-society woman paid $5,000 (approximately $160,000 in 2026 dollars, adjusted for inflation) to have a book written in her name. Today, the marginal cost of generating similar text has collapsed to near zero.

But the balance sheet tells a different story regarding quality and liability. High-end human ghostwriters, such as J.R. Moehringer, command advances exceeding $1 million for memoirs. In contrast, enterprise AI subscriptions range from $20 to $60 per user monthly. This 99.9% reduction in cost structure is enticing for CFOs looking to optimize OpEx. However, as seen in the Vanderbilt case, the “savings” can be illusory if the output triggers a reputational crisis that requires costly damage control.
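The 99.9% figure above can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes a single AI seat held for one year is compared against a one-off $1 million advance, which is a simplification the article does not spell out. The function names are hypothetical.

```python
# Illustrative check of the cost gap quoted in the text.
# Assumption (not from the article): one AI seat for 12 months is
# compared against a single $1,000,000 ghostwriting advance.

GHOSTWRITER_ADVANCE_USD = 1_000_000   # "advances exceeding $1 million"
AI_SEAT_MONTHLY_USD = (20, 60)        # "$20 to $60 per user monthly"


def annual_seat_cost(monthly_usd, seats=1):
    """Yearly subscription cost for a given number of seats."""
    return monthly_usd * 12 * seats


def percent_reduction(old_cost, new_cost):
    """Percentage by which new_cost undercuts old_cost."""
    return (old_cost - new_cost) / old_cost * 100


for monthly in AI_SEAT_MONTHLY_USD:
    annual = annual_seat_cost(monthly)
    reduction = percent_reduction(GHOSTWRITER_ADVANCE_USD, annual)
    print(f"${monthly}/month -> ${annual}/year, reduction of {reduction:.2f}%")
```

Under these assumptions both ends of the subscription range land above a 99.9% reduction. The comparison ignores editing labor, review time, and any damage-control costs, which is exactly where the “savings” can erode.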

Consider the legal implications. When inaccuracies occur, the liability chain is blurry. Former Department of Homeland Security Secretary Kristi Noem faced significant backlash when her ghostwritten memoir contained factual errors regarding foreign leaders. In the AI era, these errors are not just factual; they are hallucinations that can lead to defamation suits. For public companies, this translates to increased D&O (Directors and Officers) insurance premiums.

Market Implications for EdTech and Publishing

The education sector is the primary battleground for this technology. Universities are currently navigating a regulatory gray zone. While institutions like the University of Southern California have implemented policies requiring AI disclosure, enforcement remains inconsistent. This inconsistency creates an arbitrage opportunity for detection software firms but a systemic risk for the institutions themselves.

Investors should monitor the revenue guidance of companies specializing in academic integrity. If AI generation becomes indistinguishable from human writing, the value proposition of traditional degree programs could diminish. This is not merely theoretical; enrollment declines in humanities programs have already outpaced STEM by 4.2% year-over-year in the last fiscal quarter.

“The market is pricing in efficiency gains from AI, but it is underpricing the reputational risk. When a brand’s voice is synthetic, the premium on trust evaporates. We are seeing institutional investors demand clearer governance frameworks around AI usage in corporate communications.” - Senior Analyst, Global Equity Research Division

The publishing industry faces a bifurcation. Boutique firms offering “human-verified” content may command a premium, similar to organic labeling in the food sector. Meanwhile, mass-market content producers will race to the bottom on price. This dynamic mirrors the consolidation seen in the software-as-a-service (SaaS) sector during the early 2020s.

Comparative Cost and Risk Analysis: Human vs. AI

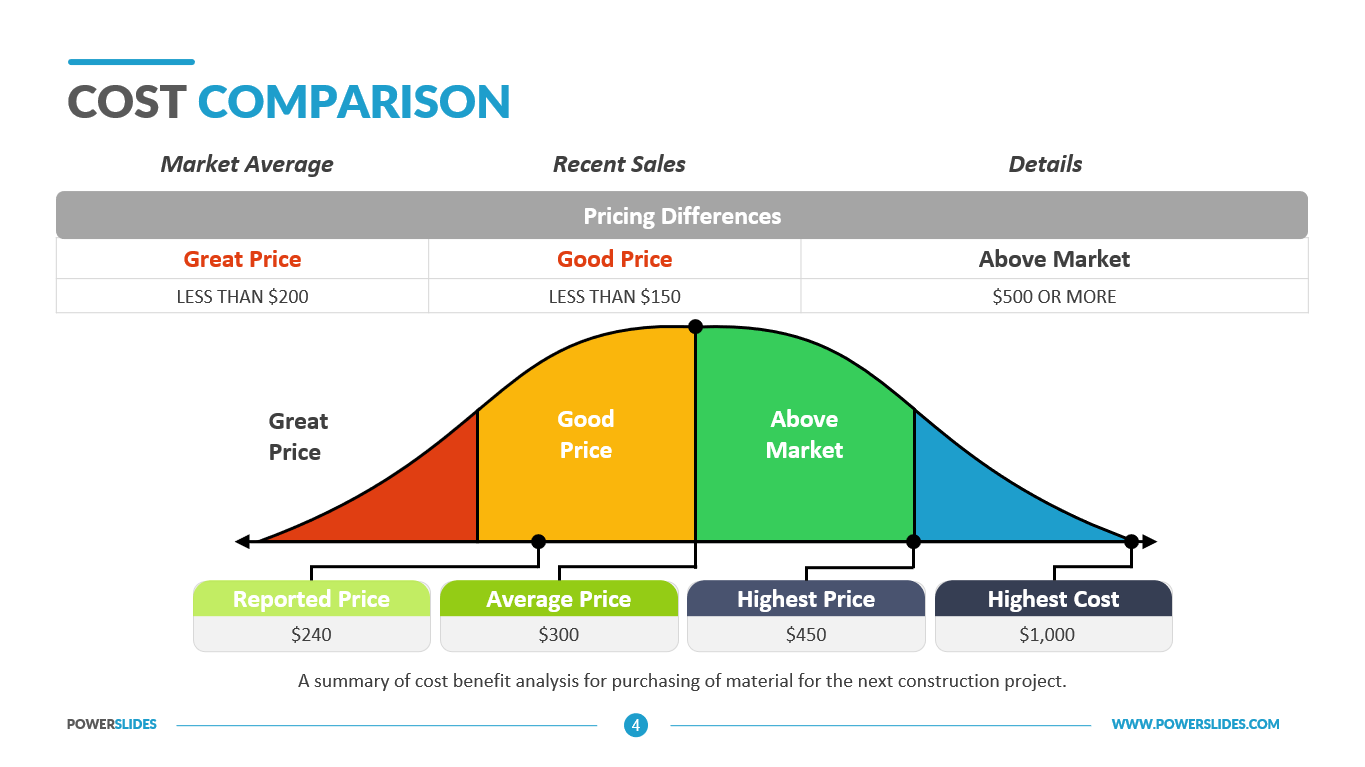

To understand the strategic shift, we must look at the unit economics of content creation. The following table outlines the comparative costs and risk profiles for enterprise-level content production as of Q1 2026.

| Metric | Traditional Ghostwriting | Enterprise Generative AI | Hybrid Model (Human-in-the-Loop) |
|---|---|---|---|
| Cost Per 1,000 Words | $500 – $2,500 | $0.05 – $0.50 | $150 – $400 |
| Turnaround Time | 2 – 4 Weeks | < 5 Minutes | 24 – 48 Hours |
| Fact-Checking Liability | Contractually Assigned to Writer | Retained by User/Company | Shared Responsibility |
| Brand Authenticity Score | High | Low | Medium-High |

The data indicates that while AI offers immediate liquidity benefits through cost reduction, the “Brand Authenticity Score” remains a critical intangible asset. Companies that fully automate communication without oversight risk a decline in customer lifetime value (CLV). For instance, New York Times Company (NYSE: NYT) has maintained a premium subscription model by emphasizing human journalism, a strategy that has kept their churn rate below 3% despite industry averages hovering near 5.5%.
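To see why the gap between a churn rate below 3% and one near 5.5% matters for CLV, a rough sketch helps. It assumes a constant churn rate per billing period, so expected subscriber lifetime is simply 1 / churn. The article does not state the period the churn figures refer to, and the $10 margin per period is a placeholder chosen for illustration, not a reported figure.

```python
# Rough CLV sketch under a constant-churn (geometric) assumption.
# Assumptions (not from the article): churn is measured per billing
# period, and the margin per period is a hypothetical placeholder.

HYPOTHETICAL_MARGIN_USD = 10.0  # placeholder margin per subscriber per period


def expected_lifetime(churn_rate):
    """Expected number of periods a subscriber stays if churn is constant."""
    if not 0 < churn_rate <= 1:
        raise ValueError("churn_rate must be in (0, 1]")
    return 1 / churn_rate


def simple_clv(margin_per_period, churn_rate):
    """Undiscounted lifetime value: margin times expected lifetime."""
    return margin_per_period * expected_lifetime(churn_rate)


scenarios = [("premium publisher (3% churn)", 0.03),
             ("industry average (5.5% churn)", 0.055)]

for label, churn in scenarios:
    periods = expected_lifetime(churn)
    clv = simple_clv(HYPOTHETICAL_MARGIN_USD, churn)
    print(f"{label}: ~{periods:.1f} periods, CLV ~${clv:.2f}")
```

Under this simplified model, the lower churn rate nearly doubles expected subscriber lifetime (about 33 periods versus about 18), which is the mechanism by which brand authenticity could translate into value. A fuller model would discount future margins and let churn vary over time.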

Regulatory Headwinds and Future Valuation

Looking ahead, the regulatory environment will likely tighten. The Federal Trade Commission (FTC) and the Securities and Exchange Commission (SEC) are increasingly scrutinizing disclosures related to AI use in financial reporting and consumer communications. Failure to disclose AI usage in material communications could be construed as misleading investors, akin to hiding off-balance-sheet liabilities.

The labor market is also adjusting. The Association of Ghostwriters notes that human writers are repositioning themselves as “editors” or “strategists” rather than pure drafters. This shift suggests wage compression for entry-level writing roles but potential wage growth for senior editors capable of verifying AI output. For investors, this signals a change in the human capital requirements for media and tech firms.

Ultimately, the market will correct. Just as the initial panic over industrial manufacturing did not eliminate the value of artisanal goods, the AI revolution will not eliminate the value of human insight. However, the companies that survive will be those that treat AI as a tool for leverage, not a replacement for accountability. As we move through Q2 2026, expect volatility in stocks heavily exposed to content generation without clear governance structures.

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial advice.