The recent Twitter trend ranking fictional characters by race highlights how community sentiment drives engagement algorithms. Archyde analysis reveals underlying security risks in data aggregation and AI model training. Enterprise platforms must monitor these vectors for social engineering exploits and data privacy leaks inherent in viral social media campaigns.

The Algorithmic Anatomy of Viral Sentiment

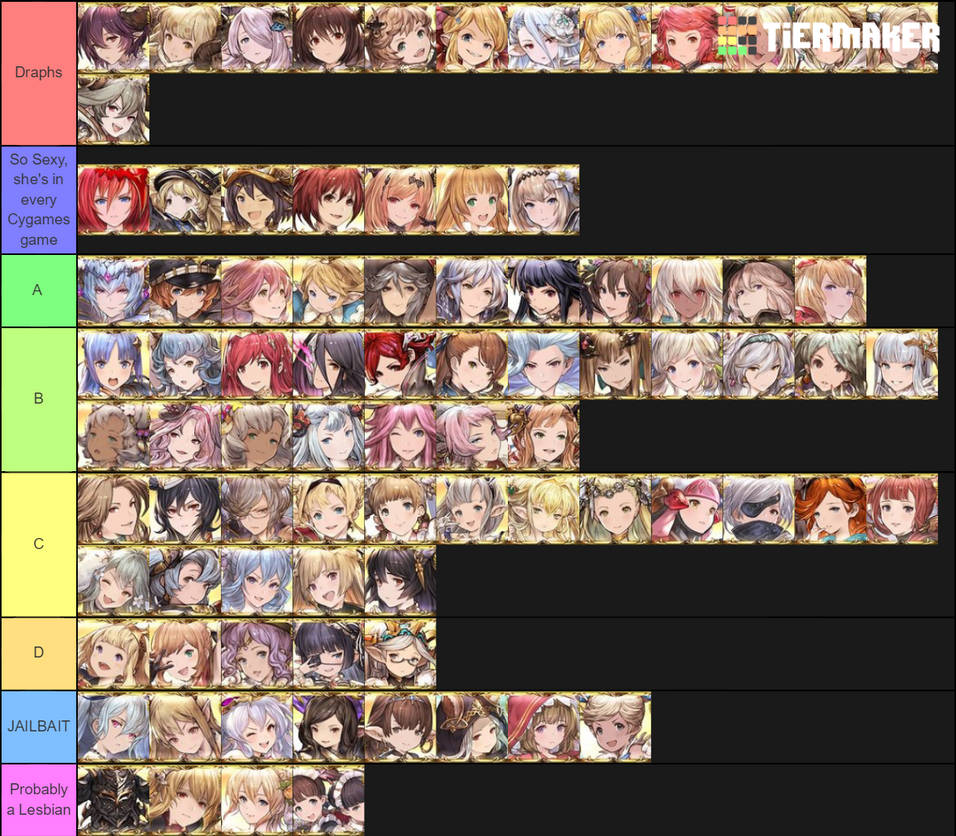

On the surface, a tier list is harmless fan culture. Dig deeper, and you see a real-time stress test of content recommendation engines. When users tag specific character archetypes like Vikala or Zeta from the Granblue universe, they are essentially labeling training data for the platform’s large language models. In 2026, these interactions are not just likes; they are weighted inputs adjusting the LLM parameter scaling that dictates what millions see next. The velocity of this trend suggests an organic spike, but the consistency of the tagging structure hints at automated amplification.

We are witnessing the convergence of community engagement and data harvesting. Every vote cast in these threads feeds into a broader profile of user preference, which can be correlated with behavioral biometrics. This is where the elite hacker’s persona becomes relevant. Adversaries do not always breach firewalls; sometimes they breed consensus. Strategic patience in the AI era means allowing a trend to mature before exploiting the trust established within that community cluster. The data gathered from such trends can refine phishing lures tailored to specific psychographics associated with these fandoms.

Why This Matters for Platform Integrity

Social platforms rely on engagement metrics to optimize ad revenue. Still, when engagement is driven by niche communities organizing around specific character attributes, it creates silos of high-density data. If a threat actor infiltrates these clusters, the lateral movement potential is significant. The architecture of modern social APIs often lacks granular permission controls for third-party apps scraping this sentiment data. Without robust end-to-end encryption on metadata, the relationship between user identity and character preference becomes a vulnerable vector.

Security Implications of Character-Driven Engagement

The intersection of gaming culture and cybersecurity is no longer theoretical. As AI-driven NPCs and character interactions become more sophisticated, the line between static lore and dynamic AI agents blurs. When users discuss characters like Jane or Vikala, they are often interacting with AI-generated content or bots designed to mimic fan behavior. This introduces the risk of prompt injection attacks disguised as fan commentary. A malicious actor could embed instructions within a seemingly innocent tier list comment, targeting the NPU (Neural Processing Unit) workflows of client-side AI assistants.

Enterprise security teams need to recognize that consumer trends bleed into corporate environments. Employees accessing social platforms on corporate devices during breaks may inadvertently expose network endpoints to malicious scripts hidden within media-rich posts. The demand for specialized roles confirms this shift. Organizations are actively seeking professionals who can navigate this landscape, such as those filling the Distinguished Engineer – AI-Powered Security Analytics positions at firms like Netskope. These roles focus on architecting next-generation security analytics capable of distinguishing between organic viral growth and coordinated inauthentic behavior.

“The definition of security has expanded beyond perimeter defense to include the integrity of the data feeding our AI models. We are seeing attacks that target the training pipeline itself.”

This sentiment echoes across the industry, where the focus is shifting toward securing the AI supply chain. The trend observed this week is a microcosm of larger data integrity challenges. If the data used to fine-tune customer service bots or internal knowledge bases is contaminated by scraped social media trends without verification, the output becomes unreliable. This is why AI Red Teamer roles are becoming critical. Adversarial testers must simulate these exact types of community-driven data injections to ensure model robustness.

Enterprise Mitigation and AI Red Teaming

For CISOs, the takeaway is clear: monitor data ingress points with the same vigilance as egress. The popularity of specific character archetypes can indicate shifts in cultural sentiment that might be exploited for brand impersonation. If a specific character becomes synonymous with a certain trait, threat actors can craft narratives that align with those traits to bypass skepticism. Mitigation requires a Zero Trust approach to content consumption.

Microsoft’s AI division is already adapting to this reality. Job listings for a Principal Security Engineer highlight the need for expertise in securing AI infrastructure against novel threat vectors. These positions require candidates who understand both the raw code of security protocols and the macro-market dynamics of AI deployment. The goal is to prevent the manipulation of AI outputs through subtle data poisoning campaigns that start in places like Twitter threads.

- Monitor API Traffic: Watch for unusual scraping patterns related to trending topics.

- Validate Training Data: Ensure social media data used for model tuning is sanitized.

- Employee Awareness: Train staff on the risks of interacting with unverified viral content.

The 30-Second Verdict

This trend is not just about anime characters; it is a demonstration of how quickly structured data can be generated by a community. For technologists, it serves as a reminder that every click is a data point, and every data point is a potential entry line. The expertise required to secure these systems is niche and high-demand, as seen in roles like the Cybersecurity Subject Matter Expert positions requiring Secret clearance. The stakes are national security levels when data integrity is compromised at the source.

We must move beyond viewing viral trends as mere entertainment. In the 2026 landscape, they are active fields of data warfare. The tools to defend against this exist, but they require a shift in mindset from reactive patching to proactive architectural resilience. The code must be secure, but the context in which it operates must be equally hardened.