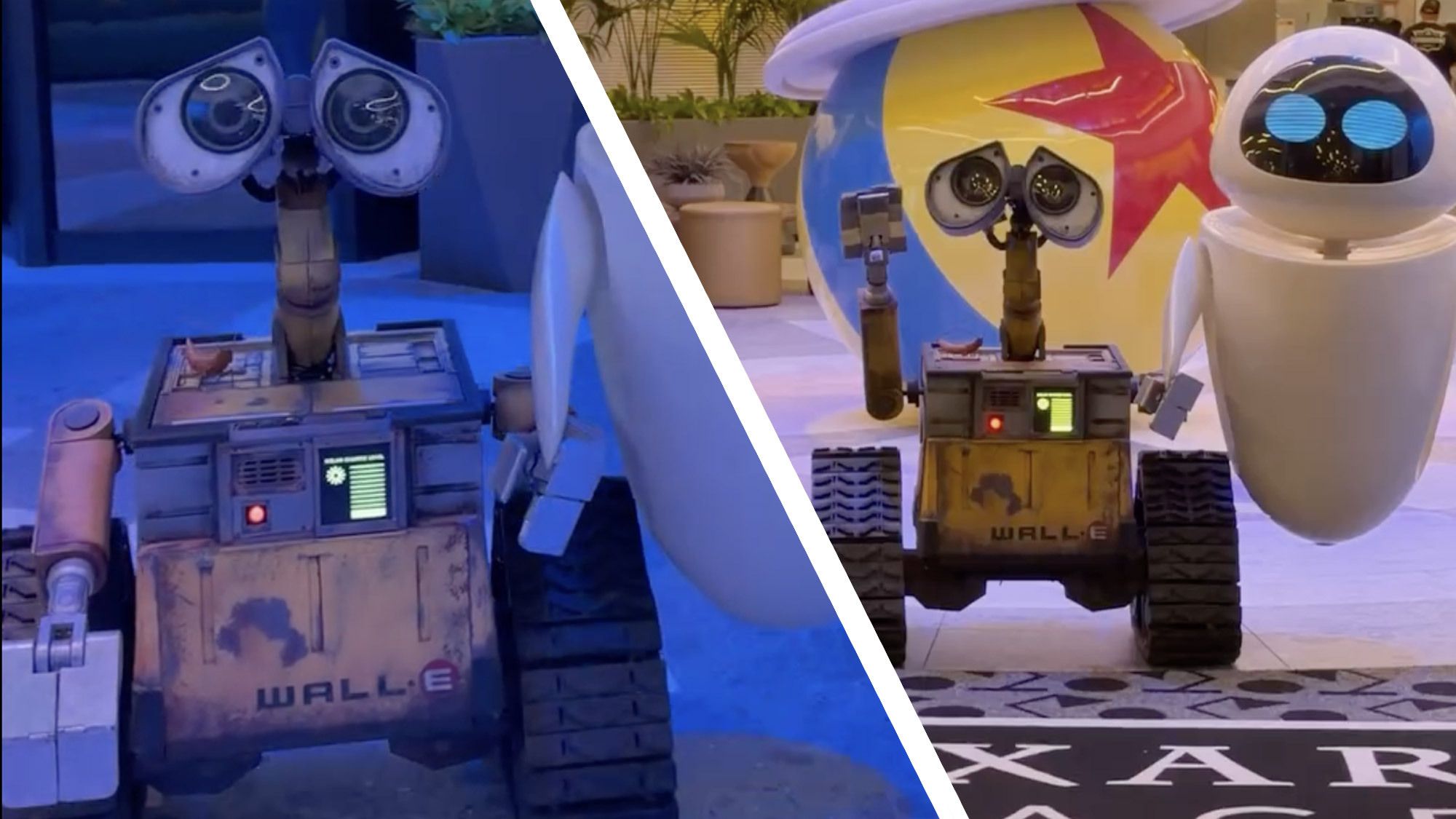

Wall-E and Eve are appearing at Disneyland’s Pixar Place Hotel throughout April 2026. The appearance signals Disney’s continued investment in high-fidelity interactive robotics, an effort to bridge the gap between static animatronics and autonomous AI agents in themed environments and to test the boundaries of human-robot interaction (HRI) in high-traffic consumer spaces.

For the casual tourist, it is a photo op. For those of us who live in the terminal, it is a deployment. When I look at Wall-E and Eve, I don’t see characters; I see a sophisticated intersection of actuators, computer vision, and the slow death of the pre-recorded dialogue loop. The return of these specific bots isn’t just a nostalgia play—it’s a signal that Imagineering is refining the “Character Bot” as a platform.

The industry has reached a tipping point. We are moving away from the era of the PLC (Programmable Logic Controller), where a robot simply repeats a sequence of movements triggered by a sensor, and toward the era of neural inference at the edge. To make a robot like Wall-E feel “alive” in 2026, you cannot rely on a script. You need a stack that integrates real-time spatial awareness with a generative personality layer.

The Shift from Scripted Loops to Neural Inference

The “magic” of modern character robotics lies in the NPU (Neural Processing Unit). In previous iterations, character bots were essentially puppets with high-end servos. Today, the goal is a seamless integration of an LLM (Large Language Model) with a physical chassis. This requires massive parameter scaling to ensure the robot can process a guest’s query, determine the emotional valence, and trigger a corresponding physical gesture—all within a latency window of under 200 milliseconds to avoid the “uncanny valley” lag.
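To make the 200-millisecond window concrete, here is a minimal sketch of a latency budget check. The stage names and per-stage timings are hypothetical placeholders, not measurements from any Disney system; only the 200 ms figure comes from the text above.

```python
from dataclasses import dataclass

BUDGET_MS = 200.0  # the latency window cited in the article


@dataclass
class Stage:
    name: str
    latency_ms: float  # hypothetical, illustrative value


def check_budget(stages, budget_ms=BUDGET_MS):
    """Return (total latency, remaining headroom, whether the budget is met)."""
    total = sum(s.latency_ms for s in stages)
    return total, budget_ms - total, total <= budget_ms


# Assumed pipeline: hear the guest, classify valence, generate a reply, move.
pipeline = [
    Stage("speech_to_text", 60.0),
    Stage("valence_classifier", 15.0),
    Stage("response_generation", 90.0),
    Stage("gesture_dispatch", 20.0),
]

total, headroom, ok = check_budget(pipeline)
print(f"total={total:.0f} ms, headroom={headroom:.0f} ms, within budget: {ok}")
```

With these assumed numbers the pipeline fits with little room to spare, which is why any single slow stage (typically generation) can push the whole interaction past the threshold.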

If Disney is eyeing the next wave of robots, they aren’t looking at more puppets. They are looking at embodied AI. Imagine a Baymax bot that doesn’t just wave, but uses computer vision to detect a guest’s mood or a child’s height and adjusts its vocal frequency and posture accordingly. This requires a move toward IEEE standards for robotic safety and sophisticated haptic feedback systems to ensure that a 300-pound piece of machinery doesn’t accidentally crush a toddler during a hug.

It’s a high-stakes game of hardware optimization.

The 30-Second Verdict: Why This Matters for Tech

- Edge Intelligence: Disney is essentially running a massive beta test on how LLMs perform in noisy, unpredictable physical environments.

- Actuator Precision: The move toward “fluid” movement suggests a shift toward harmonic drives and high-torque brushless DC motors.

- Platform Lock-in: By creating a proprietary ecosystem of interactive bots, Disney is building a moat around “experiential AI” that Big Tech (Google, Meta) cannot replicate without a physical theme park infrastructure.

Navigating the Chaos: SLAM and the Disney Floor

The biggest technical hurdle for any autonomous character bot is SLAM—Simultaneous Localization and Mapping. Navigating a crowded hotel lobby is a nightmare for a robot. You have dynamic obstacles (children), reflective surfaces (marble floors), and unpredictable lighting. To solve this, the next generation of Disney bots will likely move beyond simple LiDAR to a multi-modal sensor fusion approach, combining depth cameras with ultrasonic sensors.
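One common way to combine two range sensors is inverse-variance weighting, sketched below. This is an illustration of sensor fusion in general, not Disney's method; the function name, the variance values, and the convention that a dropout is reported as `None` are all assumptions.

```python
def fuse_ranges(readings):
    """Inverse-variance weighted fusion of (distance_m, variance_m2) pairs.

    Readings whose distance is None (sensor dropout) are skipped, so the
    estimate degrades gracefully instead of failing outright.
    Returns (fused_distance, fused_variance), or (None, None) if nothing is valid.
    """
    valid = [(d, v) for d, v in readings if d is not None and v > 0]
    if not valid:
        return None, None
    weights = [1.0 / v for _, v in valid]
    fused = sum(w * d for w, (d, _) in zip(weights, valid)) / sum(weights)
    return fused, 1.0 / sum(weights)


# Both sensors see the obstacle; the lower-variance reading dominates.
depth_camera = (2.0, 0.04)  # hypothetical reading: metres, variance
ultrasonic = (2.2, 0.01)
print(fuse_ranges([depth_camera, ultrasonic]))

# Glass door: the depth camera returns nothing, the ultrasonic still reports.
print(fuse_ranges([(None, 0.04), (1.5, 0.01)]))
```

The second call is the redundancy argument in miniature: losing one modality leaves a usable, if less certain, estimate.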

This is where the “tech war” gets interesting. While Tesla is pushing the “Vision Only” approach for Optimus, Disney needs redundancy. A robot that freezes because it can’t “see” a glass door is a failure in the guest experience. They are likely leveraging frameworks similar to ROS 2 (Robot Operating System) to handle the middleware between the high-level AI brain and the low-level motor controllers.

“The challenge isn’t making a robot move; it’s making a robot move with intent. When you merge generative AI with physical actuators, you’re no longer programming a machine—you’re choreographing an intelligence.”

The choreography is the hard part. To achieve the “clunky but cute” movement of Wall-E, engineers actually have to program imperfections. This is inverse kinematics at its most artistic: calculating the joint angles to ensure a movement looks accidental rather than mechanical.
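As a rough illustration of “programmed imperfection,” the sketch below solves closed-form inverse kinematics for a planar two-link arm and nudges the target slightly before each solve. The two-link geometry, the link lengths, and the `wobbly_target` helper are assumptions chosen for simplicity; a real character rig has far more joints and a much richer animation pipeline.

```python
import math
import random


def two_link_ik(x, y, l1, l2):
    """Closed-form IK for a planar two-link arm (positive-elbow solution).

    Returns (shoulder, elbow) angles in radians; raises ValueError if the
    target lies outside the arm's reach.
    """
    d2 = x * x + y * y
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target out of reach")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward(shoulder, elbow, l1, l2):
    """Forward kinematics, used here to confirm the IK solution."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


def wobbly_target(x, y, amplitude, rng):
    """Offset the target by a small random amount to mimic an imprecise reach."""
    return (
        x + rng.uniform(-amplitude, amplitude),
        y + rng.uniform(-amplitude, amplitude),
    )


rng = random.Random(42)  # seeded so the "accident" is repeatable
L1, L2 = 0.25, 0.25      # hypothetical link lengths in metres
for _ in range(3):
    tx, ty = wobbly_target(0.30, 0.20, 0.01, rng)
    shoulder, elbow = two_link_ik(tx, ty, L1, L2)
    print(f"target=({tx:.3f}, {ty:.3f}) "
          f"shoulder={math.degrees(shoulder):.1f} deg elbow={math.degrees(elbow):.1f} deg")
```

The joint angles are still computed exactly; the imperfection lives in the target, which is one plausible way to keep motion looking loose while staying mechanically safe.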

The “Optimus” Effect: Entertaining the General Purpose Robot

We cannot discuss character robots without acknowledging the shadow of the general-purpose humanoid. With the rapid advancement of Figure AI and Tesla’s Optimus, the barrier between “theme park bot” and “utility bot” is evaporating. Disney is in a unique position to pioneer the social application of these technologies.

If we look at the hardware trajectory, the next character robots will likely incorporate “soft robotics”—polymers that can change shape or feel, moving away from the hard plastic and metal shells of Wall-E. This would be essential for a character like Baymax, where the “squish” is a core part of the IP.

| Feature | Legacy Animatronics | Next-Gen Character Bots (2026+) |
|---|---|---|
| Control Logic | Pre-programmed sequences (PLC) | Neural Inference / LLM-driven |
| Navigation | Fixed tracks or remote op | Autonomous SLAM / Sensor Fusion |
| Interaction | Triggered audio clips | Real-time Natural Language Processing (NLP) |
| Hardware | Hydraulic/Pneumatic actuators | High-torque Electric / Soft Robotics |

Latency, Edge Computing, and the Death of the Pre-Record

The ultimate goal for Imagineering is the elimination of the “loop.” Currently, most character interactions are based on a finite set of responses. But as we move toward 2027, the integration of 5G (and eventually 6G) allows for a hybrid compute model. The “heavy lifting” of the LLM can happen in a local edge data center, while the immediate reactive movements are handled by an onboard NPU.
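The hybrid split can be sketched as a small dispatcher: reflexes resolve onboard immediately, while conversational requests go to the edge with a deadline and an onboard fallback. Everything here is hypothetical, including the event shape, the `REFLEXES` table, the 0.2-second timeout, and the stand-in `fast_edge` and `slow_edge` functions that simulate an edge LLM call.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

# Hypothetical reflex table handled entirely onboard.
REFLEXES = {"bump": "stop_and_back_up", "wave_detected": "wave_back"}


def handle_event(event, edge_llm, executor, timeout_s=0.2):
    """Route an event: reflexes stay onboard, dialogue goes to the edge.

    If the edge call misses its deadline, fall back to an onboard filler
    behaviour so the character never visibly freezes.
    """
    if event["type"] in REFLEXES:
        return ("onboard", REFLEXES[event["type"]])
    future = executor.submit(edge_llm, event["text"])
    try:
        return ("edge", future.result(timeout=timeout_s))
    except FutureTimeout:
        return ("onboard", "play_thinking_animation")


def fast_edge(text):
    """Stand-in for an edge LLM that answers in time."""
    return f"reply to: {text}"


def slow_edge(text):
    """Stand-in for a congested edge link that answers too late."""
    time.sleep(0.6)
    return "too late"


with ThreadPoolExecutor(max_workers=2) as pool:
    r1 = handle_event({"type": "bump"}, fast_edge, pool)
    r2 = handle_event({"type": "speech", "text": "hello"}, fast_edge, pool)
    r3 = handle_event({"type": "speech", "text": "hello"}, slow_edge, pool)

print(r1, r2, r3, sep="\n")
```

The design choice worth noting is that the fallback is itself a behaviour, not an error: a delayed answer becomes a moment of in-character “thinking.”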

This architecture solves the thermal throttling problem. If you try to run a 70B parameter model locally on a robot, the chassis becomes a space heater. By offloading the cognitive load to the edge, Disney can keep the robots slim and the battery life viable for an eight-hour shift.
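A quick back-of-the-envelope calculation shows why a 70B model is a poor fit for an onboard computer. The sketch below counts only the memory needed to hold the weights at a given precision; it ignores activations, KV cache, and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(params_billion, bytes_per_param):
    """Memory needed just to store model weights, in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bytes_per_param / 1e9


for label, bytes_per_param in [("FP16", 2.0), ("INT8", 1.0), ("4-bit", 0.5)]:
    print(f"70B @ {label}: {weight_memory_gb(70, bytes_per_param):.0f} GB")
```

Even at 4-bit precision the weights alone occupy roughly 35 GB, and the compute needed to serve them at conversational speed brings a power and heat budget that is hard to fit inside a slim, battery-powered chassis.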

However, this introduces a cybersecurity vulnerability. An autonomous robot connected to a network is an endpoint. If a bad actor gains access to the control layer, you don’t just have a data breach; you have a several-hundred-pound kinetic object moving through a crowd. This is why end-to-end encryption and hardware-level root-of-trust are no longer optional; they are fundamental to the safety of the park.
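One small piece of that defense can be sketched with Python's standard library: authenticating each motion command with an HMAC and rejecting replays with a counter. Note that this shows message authentication and integrity only, not encryption or a hardware root-of-trust, and the hard-coded key, message format, and function names are illustrative assumptions. In practice the key would live in secure hardware rather than in source code.

```python
import hashlib
import hmac
import json

DEMO_KEY = b"demo-key-do-not-use"  # hypothetical; never hard-code real keys


def sign_command(key, command, counter):
    """Serialize a command with a monotonically increasing counter and tag it."""
    payload = json.dumps({"cmd": command, "ctr": counter}, sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload, tag


def verify_command(key, payload, tag, last_counter):
    """Return the decoded command if authentic and fresh, otherwise None."""
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, tag):
        return None  # forged or tampered payload
    message = json.loads(payload)
    if message["ctr"] <= last_counter:
        return None  # replayed or stale command
    return message


payload, tag = sign_command(DEMO_KEY, "raise_left_arm", counter=7)
print(verify_command(DEMO_KEY, payload, tag, last_counter=6))   # accepted
print(verify_command(DEMO_KEY, payload.replace(b"left", b"both"), tag, 6))  # rejected
print(verify_command(DEMO_KEY, payload, tag, last_counter=7))   # replay rejected
```

The robot-side check is deliberately fail-closed: anything that does not verify is dropped, so a compromised network segment cannot inject or replay motion commands without the key.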

For more on the vulnerabilities of embodied AI, the Ars Technica archives on IoT security provide a sobering look at what happens when “smart” devices lack rigorous perimeter defense.

Wall-E and Eve are the vanguard. They are the friendly faces of a much more complex transition. As we move toward a world where AI has a physical presence, Disney isn’t just selling tickets—they are mapping the psychological and technical blueprint for how humans will coexist with autonomous machines. The next robot won’t just be a character; it will be a companion.