A viral sentiment from developer Ryan Caton, “At least it’s not Microsoft Teams,” has crystallized into a broader industry critique of “bloatware” in the AI era. As enterprises integrate LLM-driven agents into their workflows this April 2026, the focus has shifted from raw capability to the cognitive load of the interface.

Let’s be clear: this isn’t just a joke about a clunky chat app. It is a visceral reaction to the “Everything App” philosophy that Microsoft has aggressively pushed. When we talk about the “Teams-ification” of the enterprise, we are talking about the erosion of the focused workspace. We are seeing a trend where the tool designed to facilitate work becomes the primary obstacle to actually getting it done.

The irony is that while Microsoft integrates Copilot into every conceivable surface area, the actual power users—the ones pushing GitHub Copilot to its limits or architecting complex RAG (Retrieval-Augmented Generation) pipelines—are retreating toward leaner, decoupled environments. They want the intelligence of a 1-trillion parameter model without the telemetry and UI friction of a corporate ecosystem that treats the user as a data point.

The Architecture of Friction: Why “Integrated” is Often “Obstructive”

From a systems engineering perspective, the “Teams” experience is a masterclass in resource mismanagement. We are talking about Electron-based shells that devour RAM while attempting to synchronize state across a dozen different API endpoints. When you add an AI layer on top of this, you aren’t just adding a feature; you are adding latency. In the world of high-frequency development, a 200ms delay in a UI response is an eternity.

The industry is currently pivoting toward Agentic Workflows. Unlike a chatbot embedded in a sidebar, these agents operate autonomously on the backend, interacting via IEEE standardized protocols or lightweight REST APIs. The goal is “invisible AI.” If the AI is doing its job, you shouldn’t have to open a bloated application to see it happening.
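To make “invisible AI” a little more concrete, here is a minimal sketch of a headless agent that exposes nothing but a small HTTP endpoint. Everything in it is an assumption made for illustration: the `/tasks` path, the `run_task` stand-in (which only counts words where a real agent would call a model), and the JSON shape. It uses only the Python standard library.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


def run_task(payload):
    """Hypothetical stand-in for the agent's work; a real agent would call a model here."""
    text = payload.get("text", "")
    return {"status": "done", "word_count": len(text.split())}


class AgentHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the JSON request body (an empty body is treated as {}).
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(run_task(payload)).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # suppress per-request console logging


def call_agent(port, payload):
    """Send one task to the local agent and return its decoded JSON reply."""
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    conn.request("POST", "/tasks", body=json.dumps(payload),
                 headers={"Content-Type": "application/json"})
    reply = json.loads(conn.getresponse().read())
    conn.close()
    return reply


if __name__ == "__main__":
    server = HTTPServer(("127.0.0.1", 0), AgentHandler)  # port 0: the OS picks a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    print(call_agent(server.server_port, {"text": "ship the fix today"}))
    server.shutdown()
```

There is no window, sidebar, or tab anywhere in this sketch. Any client that can speak HTTP, whether a terminal script, a CI job, or another agent, can hand it work and read the result.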

Consider the shift toward NPUs (Neural Processing Units) in the latest silicon. We are moving the inference from the cloud, where it’s subject to the whims of a corporate SaaS wrapper, directly onto the edge. When the model runs locally on your hardware, the need for a “Teams-like” orchestration layer vanishes. You get raw, low-latency interaction with the model’s weights, bypassing the corporate middleware entirely.

“The next phase of productivity isn’t about adding more buttons to a dashboard; it’s about the total disappearance of the dashboard. We are moving toward a ‘headless’ enterprise where the UI is generated on-the-fly based on the specific intent of the user, rather than a static, bloated suite.”

The Rise of the “Attack Helix” and the Security Paradox

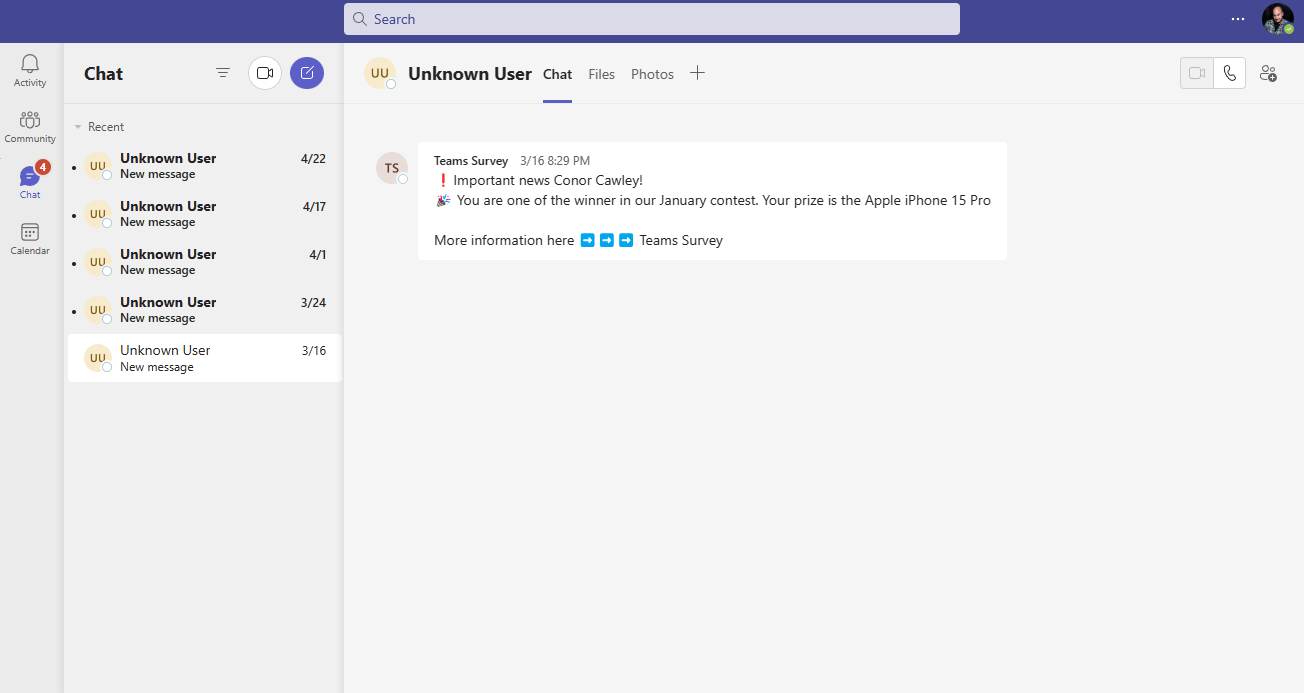

While the average user is complaining about UI bloat, the elite tier of security researchers is weaponizing the very integration Microsoft prizes. The “Attack Helix” architecture, recently highlighted in offensive security circles, demonstrates how AI can be used to map the internal dependencies of a corporate ecosystem. Because Teams integrates with everything from SharePoint to Azure Active Directory, it creates a massive, interconnected attack surface.

If an attacker gains a foothold in a “unified” environment, they aren’t just in a chat app; they are in the nervous system of the company. This is the dark side of the ecosystem lock-in. The more “integrated” the tool, the more catastrophic the single point of failure.
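The single-point-of-failure argument can be sketched as a graph problem: treat each service as a node, each trust relationship as an edge, and count what is reachable from the compromised node. The two topologies below are hypothetical and invented for illustration; they are not a map of any real Microsoft deployment.

```python
from collections import deque


def blast_radius(graph, start):
    """Return every service reachable from a compromised starting node (breadth-first search)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# Hypothetical "integrated" topology: the chat client can reach everything.
integrated = {
    "chat": ["files", "identity", "calendar"],
    "files": ["identity"],
    "calendar": ["identity"],
    "identity": ["files", "calendar"],
}

# Hypothetical "modular" topology: each tool talks only to its own scoped auth service.
modular = {
    "chat": ["chat-auth"],
    "files": ["files-auth"],
    "calendar": ["calendar-auth"],
}

print(len(blast_radius(integrated, "chat")))  # 4
print(len(blast_radius(modular, "chat")))     # 2
```

In the toy integrated graph, a foothold in the chat client reaches all four services. In the modular one, the same foothold reaches only the chat tool and its own auth service.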

The 30-Second Verdict: Integration vs. Interoperability

- Integration (The Teams Way): Forced bundling, proprietary silos, high resource overhead, single point of failure.

- Interoperability (The Elite Way): Modular tools, open APIs, local inference (NPU), decoupled security layers.

This isn’t just a preference; it’s a survival strategy for the modern engineer. The “Strategic Patience” mentioned by elite hackers in the AI era is essentially a wait-and-see approach: watch which proprietary wrappers fail first. They understand that the underlying LLM parameter scaling will eventually outpace the ability of a company like Microsoft to wrap it in a user-friendly (read: restrictive) GUI.

The Hardware War: ARM, x86, and the Death of the Bloat-Shell

We cannot discuss the “Teams” problem without discussing the hardware. The industry is seeing a divergence in how we handle AI workloads. On one side, you have the x86 legacy—powerful but often bogged down by the software layers designed for backward compatibility. On the other, the ARM-based revolution is pushing a tighter integration between the OS and the silicon.

When you run a streamlined, AI-native OS on ARM, the “Teams-style” bloat becomes an evolutionary dead end. The efficiency of the SoC (System on Chip) allows for background agents to handle the “coordination” that Teams attempts to do through a GUI. Why have a meeting to discuss a project when an AI agent can synthesize the delta between two Git branches and present the solution in a terminal?
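As a rough sketch of the first half of that idea, the snippet below gathers the delta between two Git branches and prints it in a terminal-friendly form. It deliberately stops short of the “AI” part: feeding this summary to a model is left out, and the function names (`parse_numstat`, `branch_delta`, `render`) are hypothetical helpers, not part of any tool. It relies on `git diff --numstat`, which reports added and deleted line counts per file.

```python
import subprocess


def parse_numstat(output):
    """Parse `git diff --numstat` output into (path, added, deleted) tuples."""
    rows = []
    for line in output.splitlines():
        if not line.strip():
            continue
        added, deleted, path = line.split("\t", 2)
        # Git reports "-" for both counts when a file is binary.
        rows.append((path,
                     0 if added == "-" else int(added),
                     0 if deleted == "-" else int(deleted)))
    return rows


def branch_delta(base, head, repo="."):
    """Run git and return per-file change counts on `head` since it diverged from `base`."""
    result = subprocess.run(
        ["git", "-C", repo, "diff", "--numstat", f"{base}...{head}"],
        capture_output=True, text=True, check=True,
    )
    return parse_numstat(result.stdout)


def render(rows):
    """Format the per-file counts plus a total line for terminal output."""
    lines = [f"{path}: +{added} -{deleted}" for path, added, deleted in rows]
    total_added = sum(row[1] for row in rows)
    total_deleted = sum(row[2] for row in rows)
    lines.append(f"total: +{total_added} -{total_deleted}")
    return "\n".join(lines)


# Demonstration on canned numstat text, so the sketch runs without a repository.
sample = "12\t3\tsrc/app.py\n-\t-\tassets/logo.png\n"
print(render(parse_numstat(sample)))
```

Inside a real repository, `print(render(branch_delta("main", "feature")))` would produce the same report for two actual branches; the branch names here are placeholders.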

| Metric | Legacy Integrated Suite (SaaS) | Modular AI Stack (Edge/Local) |
|---|---|---|
| Cold Start Latency | High (Seconds) | Low (Milliseconds) |
| RAM Overhead | Gigs (Electron-based) | MBs (C++/Rust binaries) |
| Data Sovereignty | Cloud-dependent | Local/Private Cloud |
| Attack Surface | Monolithic/Broad | Granular/Isolated |

The Path Forward: Decoupling the Intelligence from the Interface

The sentiment “At least it’s not Microsoft Teams” is a canary in the coal mine. It signals a growing resentment toward the “Suite” mentality. The future of technology isn’t a bigger box that holds more tools; it’s a set of invisible, highly specialized tools that emerge only when needed.

For the CTOs and architects currently designing their 2026-2027 roadmaps, the lesson is simple: stop building dashboards. Start building APIs. The value is in the logic, not the layout. If your “AI transformation” involves adding another tab to a corporate communication tool, you aren’t innovating—you’re just adding to the noise.

The real winners of this era will be those who embrace the “headless” philosophy. By applying Ars Technica-level scrutiny to performance benchmarks and prioritizing raw throughput over “user experience” (as defined by a marketing department), we can finally move past the era of the bloat-shell.

In short: Give us the NPU power, give us the open weights, and for the love of all that is holy, keep it out of the chat app.