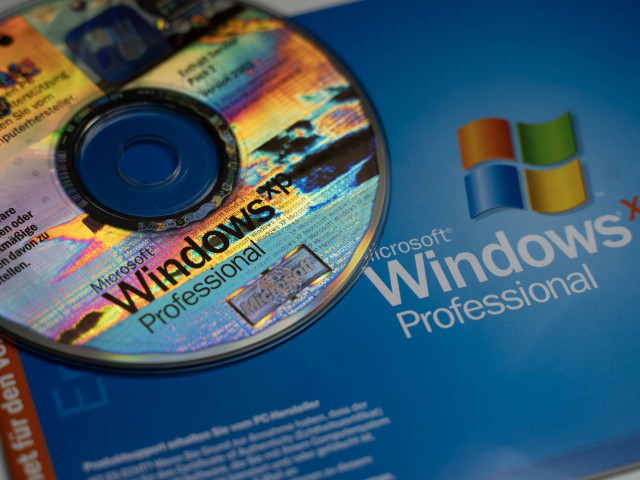

Windows XP, Microsoft’s NT 5.1 kernel masterpiece, officially reached end-of-life on April 8, 2014. Despite the subsequent security vacuum, millions of users and enterprises clung to its lean architecture and stability for years, illustrating a fundamental tension between software evolution and the brutal reality of technical debt in global computing.

Twelve years after the official “death” of XP, we aren’t just reminiscing about a blue taskbar and green start button. We are analyzing a case study in software endurance. For many, the transition to Windows 7, 8, or 10 wasn’t a seamless upgrade—it was a forced migration that often broke specialized hardware drivers and inflated system requirements without providing a proportional leap in utility. This is the “XP Effect”: the psychological and technical refusal to migrate when the cost of change exceeds the perceived risk of vulnerability.

It is a pattern we are seeing repeat in 2026, albeit in different forms. As we roll out this week’s beta for the latest neural-OS integrations, the industry is grappling with the same legacy bottlenecks. We are now seeing “frozen” AI pipelines—companies refusing to update their LLM weights because the current prompt-engineering equilibrium is too fragile to risk a version bump. Technical debt is a circle.

The NT 5.1 Architecture: Why Stability Trumped Innovation

To understand why users stayed until 2014 and beyond, you have to look beneath the friendly interface. Windows XP was the first consumer release in which Microsoft successfully merged the friendliness of the 9x series with the industrial-strength stability of the NT (New Technology) kernel and its Hardware Abstraction Layer (HAL), which keeps hardware-specific code out of the rest of the system. For the average user, this meant the “Blue Screen of Death” became a rarity rather than a daily ritual.

XP’s resource footprint was an anomaly of efficiency compared to today’s telemetry-heavy environments. It operated with a level of transparency that modern OSs—riddled with background processes, cloud-syncing daemons, and invasive data harvesting—simply cannot match. When you ran a process on XP, the CPU cycles went to the application, not to a dozen hidden “experience improvement” services.

The tragedy of its demise wasn’t a lack of capability, but the architectural shift toward x86-64. XP did have a 64-bit edition, but it was hobbled by driver incompatibilities. Most users stayed on the 32-bit version, capped at a 4GB address space (with less than that usable as RAM in practice), because the software ecosystem built on the Win32 API was so well tuned for that environment that moving felt like a downgrade in reliability.
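The 4GB ceiling follows directly from pointer width. The arithmetic can be sketched in a few lines of Python; `addressable_bytes` is a hypothetical helper name, and the 48-bit figure reflects the virtual address width commonly implemented by x86-64 CPUs rather than anything specific to XP.

```python
GIB = 2 ** 30  # bytes in one gibibyte
TIB = 2 ** 40  # bytes in one tebibyte


def addressable_bytes(pointer_bits: int) -> int:
    """Number of distinct byte addresses a pointer of this width can name."""
    return 2 ** pointer_bits


# 32-bit pointers: the hard ceiling 32-bit XP lived under.
xp_ceiling_gib = addressable_bytes(32) // GIB

# 48-bit virtual addresses, as commonly implemented on x86-64.
x64_virtual_tib = addressable_bytes(48) // TIB
```

Here `xp_ceiling_gib` evaluates to 4 and `x64_virtual_tib` to 256, which is why the jump to 64-bit mattered far more for memory-hungry workloads than for the typical XP desktop.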

The 30-Second Technical Verdict

- The Win: Unprecedented stability via the NT kernel; minimal overhead.

- The Fail: Poor 64-bit transition and no modern exploit mitigations such as ASLR (DEP arrived only with Service Pack 2).

- The Legacy: Established the blueprint for the modern desktop OS but created a decade of “legacy lock-in.”

The Air-Gap Fallacy and the Security Debt

From a cybersecurity perspective, continuing to use Windows XP after 2014 was essentially an invitation to every script kiddie and state-sponsored actor on the planet. Without security patches, the OS became a playground for exploits. XP never shipped Address Space Layout Randomization (ASLR), and Data Execution Prevention (DEP) arrived only with Service Pack 2, so buffer overflows were far easier to turn into working exploits than on later Windows releases.
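On later Windows releases, a binary opts in to ASLR and DEP through flags in the `DllCharacteristics` field of its PE header. The following is a minimal sketch of reading those two flags, assuming a well-formed image; `mitigation_flags` and `fake_image` are hypothetical helper names, and `fake_image` builds a header-only stand-in for illustration rather than a real executable.

```python
import struct

# Flag values from the PE/COFF specification (DllCharacteristics field).
DYNAMIC_BASE = 0x0040  # image opts in to ASLR
NX_COMPAT = 0x0100     # image opts in to DEP


def mitigation_flags(image: bytes) -> dict:
    """Report which exploit mitigations a PE image opts in to."""
    if image[:2] != b"MZ":
        raise ValueError("not a PE image: missing MZ signature")
    # e_lfanew at offset 0x3C holds the file offset of the "PE\0\0" signature.
    (pe_offset,) = struct.unpack_from("<I", image, 0x3C)
    if image[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("not a PE image: missing PE signature")
    # The optional header follows the 4-byte signature and 20-byte COFF header.
    optional_header = pe_offset + 4 + 20
    # DllCharacteristics sits at offset 70 in both PE32 and PE32+ layouts.
    (flags,) = struct.unpack_from("<H", image, optional_header + 70)
    return {"aslr": bool(flags & DYNAMIC_BASE), "dep": bool(flags & NX_COMPAT)}


def fake_image(flags: int) -> bytes:
    """Build a header-only stand-in for a PE file (illustration only)."""
    pe_offset = 0x40
    image = bytearray(pe_offset + 4 + 20 + 72)
    image[:2] = b"MZ"
    struct.pack_into("<I", image, 0x3C, pe_offset)
    image[pe_offset:pe_offset + 4] = b"PE\0\0"
    struct.pack_into("<H", image, pe_offset + 24 + 70, flags)
    return bytes(image)
```

Calling `mitigation_flags(fake_image(0))` reports both mitigations as off, which is roughly what an audit of XP-era binaries tends to show. The flags are only a request: they help only on an OS that implements the mitigation, and XP never implemented ASLR.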

Many enterprise users justified this by claiming their systems were “air-gapped”—physically disconnected from the internet. This is a dangerous myth. In the modern threat landscape, “air-gapped” usually just means “not connected to Wi-Fi,” while still being vulnerable to USB-borne malware or lateral movement within a local area network (LAN).

“The persistence of legacy systems like Windows XP in industrial control environments isn’t a choice; it’s a hostage situation. When a multi-million dollar CNC machine only speaks a driver language written for NT 5.1, the business accepts the security risk because the alternative is a total capital expenditure overhaul.”

This creates a systemic vulnerability. We saw this with the WannaCry ransomware outbreak of May 2017, which leveraged the EternalBlue exploit against the SMBv1 file-sharing protocol. Most infected machines were running unpatched Windows 7, but the legacy XP machines still sitting in hospitals and factories were exposed enough that Microsoft issued an emergency patch for an OS it had stopped supporting three years earlier. A software flaw had become a real-world operational crisis. For a deeper dive into how these exploits function, the IEEE Xplore archives provide extensive documentation on kernel-level vulnerabilities in legacy NT systems.
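EternalBlue targeted SMBv1, which listens on TCP port 445, so one rough way to test an “air-gapped” claim is to check whether a legacy host answers on that port from elsewhere on the LAN. The sketch below is an assumption-laden illustration, not an audit tool: `is_port_open` is a hypothetical helper, and a successful connection shows only that the port is reachable, not that the host is vulnerable.

```python
import socket

SMB_PORT = 445  # TCP port used by SMB, the protocol EternalBlue attacked


def is_port_open(host: str, port: int = SMB_PORT, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Running `is_port_open("192.0.2.10")` against each legacy machine (the address here is a documentation placeholder) from an ordinary office workstation is a quick sanity check. Any `True` means the machine is reachable for lateral movement, whatever the network diagram says.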

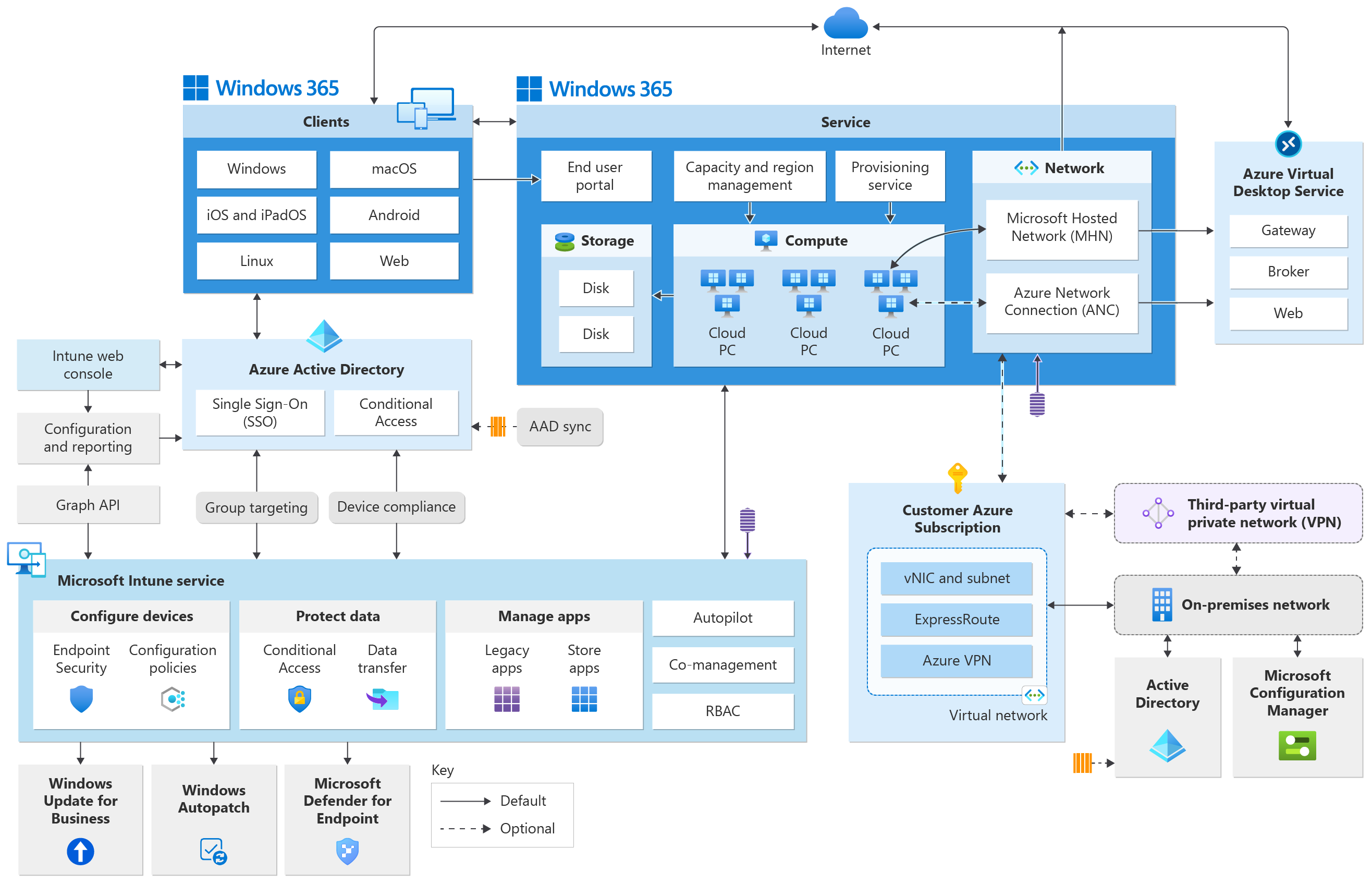

Bridging the Gap: From Bare Metal to Containerization

The industry eventually found a way to kill XP without killing the workflows that depended on it. The shift didn’t happen through better OS updates, but through virtualization and containerization. Instead of running XP on bare metal, teams began wrapping legacy apps in virtual machines (VMs) or, where an application could run on a newer kernel, using container tools like Docker to isolate the environment. Containers share the host’s kernel, so software that truly needs NT 5.1 still calls for a full VM.

This decoupled the software from the hardware. You could finally run that critical 2004-era accounting software on modern hardware, even an ARM-based SoC (System on a Chip) given an emulation layer, without compromising the security of the host OS. This evolution mirrors the current trend in open-source emulation and compatibility layers, where projects like DOSBox and Wine allow us to preserve digital history without the risk of running unpatched kernels.
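As one hedged illustration of the VM approach, the sketch below assembles a QEMU command line for a legacy guest with no network device and a throwaway disk overlay. QEMU is an assumption here (the article names no specific hypervisor), and `legacy_vm_command` and the `xp.qcow2` image name are hypothetical.

```python
def legacy_vm_command(disk_image: str, memory_mb: int = 512) -> list[str]:
    """Build a QEMU argv for an isolated 32-bit legacy guest."""
    return [
        "qemu-system-i386",                           # 32-bit x86 system emulator
        "-m", str(memory_mb),                         # guest RAM in MiB
        "-drive", f"file={disk_image},format=qcow2",  # guest disk image
        "-snapshot",                                  # discard disk writes on exit
        "-nic", "none",                               # no network device at all
    ]
```

Passing the result to `subprocess.run(legacy_vm_command("xp.qcow2"), check=True)` would launch the guest on a machine with QEMU installed. Dropping the network device is the design choice that matters: the unpatched kernel keeps running, but it has nothing to talk to.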

| Feature | Windows XP (NT 5.1) | Modern OS (Win 11 / macOS) | Impact of Shift |
|---|---|---|---|
| Kernel Architecture | Hybrid NT (Lean) | Hybrid NT/XNU (Heavy/Service-oriented) | Increased overhead for background telemetry. |
| Memory Addressing | Predominantly 32-bit (4GB limit) | 64-bit (Terabytes) | Essential for LLMs and high-res rendering. |
| Security Model | Permissive / Admin-centric | Zero Trust / Sandbox-centric | Massive reduction in “one-click” system compromises. |
| Driver Model | Static / Hardware-specific | Dynamic / Universal (USB-C/Thunderbolt) | Easier hardware swaps, less “Blue Screen” volatility. |

The Final Analysis: The Ghost in the Machine

The story of Windows XP isn’t about a piece of software; it’s about the human resistance to friction. We abandoned XP not because it stopped working, but because the world around it changed. The web moved from static HTML to heavy JavaScript frameworks; hardware moved from spinning platters to NVMe SSDs; and the threat model moved from “avoiding viruses” to “defending against APTs (Advanced Persistent Threats).”

As we navigate 2026, the lesson is clear: the more “perfect” a tool feels in its moment, the harder it is to leave. Whether it’s an OS from 2001 or a deprecated API from 2023, the cost of stability is often a slow slide into obsolescence. The goal of any elite technologist isn’t to find the “perfect” system, but to build a stack that is modular enough to be replaced without bringing the whole house down.

If you’re still running a legacy environment for the sake of “stability,” you aren’t avoiding risk—you’re just deferring the bill. And in cybersecurity, the interest rates on technical debt are predatory.