Xilinx, now a pivotal arm of AMD, is redefining programmable hardware by integrating AI-native engines into Field Programmable Gate Arrays (FPGAs). By blurring the line between fixed ASICs and flexible software, Xilinx enables real-time, low-latency hardware acceleration for the most demanding AI workloads across data centers and edge devices.

Let’s be clear: we are witnessing the death of the “general purpose” era. For decades, we accepted the compromise of the von Neumann architecture—the constant shuffling of data between the CPU and memory. But as LLM parameter scaling hits a wall of power inefficiency, the industry is pivoting toward Adaptive Compute Acceleration Platforms (ACAPs). This isn’t just a spec bump; it’s a fundamental rewrite of how silicon handles logic.

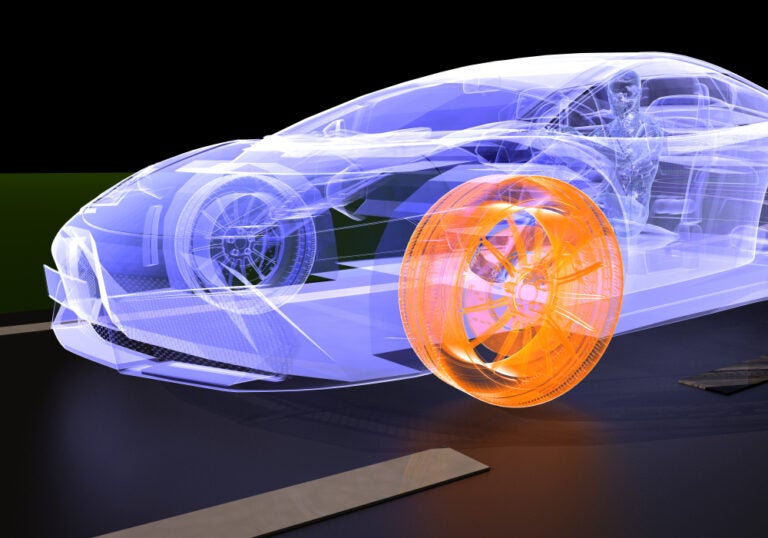

The core of the Xilinx evolution lies in the transition from traditional FPGAs, which were essentially “blank slates” of look-up tables (LUTs), to a heterogeneous architecture. The key addition is the AI Engine (AIE) array: a grid of vector processors designed for the heavy lifting of tensor mathematics. By offloading matrix multiplication to these dedicated engines while keeping the programmable logic for custom data routing, Xilinx is taking direct aim at the “dark silicon” problem, where large portions of a chip sit idle during specific tasks.
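To make the offloading idea concrete, here is a minimal Python sketch of tiled matrix multiplication. It is an illustration of the general blocking technique only, not AMD's AI Engine programming model: the function name `matmul_tiled` and the `tile` parameter are hypothetical, and the assumption is simply that each tile-sized block product is the kind of independent work unit a single vector engine could take on.

```python
def matmul_tiled(a, b, tile):
    """Multiply matrices a (n x k) and b (k x m) block by block.

    Each (i0, j0, k0) iteration is one tile-sized block product. In this
    sketch the blocks run one after another; the assumption is that on an
    array of vector engines, independent blocks could run side by side.
    """
    n, k, m = len(a), len(b), len(b[0])
    c = [[0] * m for _ in range(n)]
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            for k0 in range(0, k, tile):
                # Accumulate the partial product of one block into c.
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = c[i][j]
                        for kk in range(k0, min(k0 + tile, k)):
                            acc += a[i][kk] * b[kk][j]
                        c[i][j] = acc
    return c


a = [[1, 2, 3], [4, 5, 6]]
b = [[7, 8], [9, 10], [11, 12]]
product = matmul_tiled(a, b, tile=2)
```

The tile size changes how the work is partitioned, not the result. Choosing it is where the real engineering lives, because it decides how much data each engine has to hold locally.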

The War on Latency: Why ACAP Beats the GPU at the Edge

While NVIDIA dominates the training phase with H100s and B200s, the inference game, especially at the edge, is a different beast. GPUs are throughput monsters, but batching and scheduling can introduce “jitter” and non-deterministic latency. In a high-frequency trading environment or an autonomous vehicle’s sensor fusion system, a 10 ms delay can be a catastrophic failure.
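Why does average latency mislead here? The sketch below summarizes two sets of latency samples against a hard deadline. Every number in it is made up for illustration and is not a measurement of any real GPU or FPGA; the helper name `latency_summary` and the 10 ms deadline are assumptions as well.

```python
import statistics


def latency_summary(samples_ms, deadline_ms):
    """Summarize latency samples (in milliseconds) against a hard deadline."""
    ordered = sorted(samples_ms)
    # Nearest-rank 99th percentile: ceil(0.99 * n), computed with integers.
    rank = max(1, -(-99 * len(ordered) // 100))
    return {
        "mean_ms": statistics.mean(ordered),
        "p99_ms": ordered[rank - 1],
        "worst_ms": ordered[-1],
        "jitter_ms": ordered[-1] - ordered[0],
        "missed": sum(1 for s in ordered if s > deadline_ms),
    }


# Hypothetical samples: a steady pipeline versus a faster but bursty one.
steady = [2.0] * 100
bursty = [1.0] * 98 + [12.0, 15.0]

steady_stats = latency_summary(steady, deadline_ms=10.0)
bursty_stats = latency_summary(bursty, deadline_ms=10.0)
```

In this toy data the bursty system wins on mean latency (1.25 ms against 2.0 ms) yet misses the deadline twice. For a hard real-time workload, the tail is the number that matters.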

Xilinx’s approach utilizes spatial computing. Instead of instructions flowing through a fixed pipeline, the data flows through a circuit configured specifically for that algorithm. In the programmable logic, this removes instruction-fetch overhead, because there are no instructions to fetch. When you combine this with Software Defined Infrastructure (SDI), the hardware becomes nearly as malleable as a Python script, yet retains much of the raw speed of hard-wired circuitry.
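A rough software analogy for this dataflow style is a chain of Python generators, where each stage consumes a stream and emits a stream. This is only an analogy under stated assumptions: the stage names (`scale`, `moving_sum`, `threshold`) are hypothetical, and generators still execute one step at a time on a CPU, whereas stages laid out in hardware would all operate concurrently on different samples.

```python
def scale(stream, gain):
    """Stage 1: multiply every sample by a constant gain."""
    for x in stream:
        yield x * gain


def moving_sum(stream, window):
    """Stage 2: emit the sum of the most recent `window` samples."""
    buf = []
    for x in stream:
        buf.append(x)
        if len(buf) > window:
            buf.pop(0)
        if len(buf) == window:
            yield sum(buf)


def threshold(stream, limit):
    """Stage 3: flag every value that exceeds the limit."""
    for x in stream:
        yield x > limit


def build_pipeline(source):
    # Wiring the stages together is the "configuration" step; samples
    # then stream through without any central loop dispatching work.
    return threshold(moving_sum(scale(source, gain=2), window=3), limit=15)


flags = list(build_pipeline(iter([1, 2, 3, 4, 5])))
```

Swapping a stage or changing its parameters means rewiring the pipeline rather than rewriting a central loop, which is loosely what reconfiguring the fabric does.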

The technical brilliance here is the NoC (Network-on-Chip). By implementing a dedicated high-speed interconnect, Xilinx has decoupled the compute engines from the memory controllers. This prevents the “bottlenecking” common in older FPGA generations where routing congestion would limit the maximum clock frequency (Fmax).

The 30-Second Verdict: Hardware Malleability

- The Win: Deterministic latency and massive parallelization without the power draw of a 700W GPU.

- The Friction: The learning curve. Even with Vitis, moving from C++ to RTL (Register Transfer Level) is a steep climb for most devs.

- The Bottom Line: If your workload requires sub-millisecond response times and high energy efficiency, ACAPs are among the strongest options available.

Bridging the Gap: From VHDL to AI-Driven Synthesis

For years, the “barrier to entry” for Xilinx hardware was the grueling requirement of knowing VHDL or Verilog. It was a priesthood of hardware engineers. However, the shift toward High-Level Synthesis (HLS) and the integration of AI into the toolchain is democratizing the silicon. We are now seeing toolchains that can accept a PyTorch model and compile it for the device with little or no hand-written RTL.

This creates a massive ecosystem shift. By lowering the friction, Xilinx is attacking the “platform lock-in” held by proprietary AI chips. If a developer can deploy a custom neural network architecture to an FPGA in hours rather than months, the incentive to buy a fixed-function ASIC shrinks considerably.

“The convergence of adaptive hardware and AI-driven synthesis is turning silicon into software. We are no longer designing chips; we are designing fluid architectures that evolve as the models do.”

This fluidity is critical when considering current interconnect standards. As CXL (Compute Express Link), an open standard maintained by the CXL Consortium, gains traction, the ability for a Xilinx chip to act as a transparent accelerator-as-a-service layer within a server rack becomes a dominant market strategy.

The Silicon Chessboard: AMD, NVIDIA, and the Chip Wars

The acquisition of Xilinx by AMD wasn’t just about adding a product line; it was about survival. Intel is struggling with its foundry transition, and NVIDIA is attempting to move “up” into the CPU space with Grace. AMD, by owning the programmable logic layer, can offer a “Swiss Army Knife” of compute. Imagine a server where the CPU handles the OS, the GPU handles the bulk training, and the Xilinx FPGA handles the real-time pre-processing and security encryption—all on the same substrate.

Here’s where the “Chip War” gets interesting. We are moving toward a Chiplet-based architecture. Instead of one giant monolithic die, we have smaller, specialized dies (chiplets) connected by a high-speed fabric. Xilinx’s expertise in programmable interconnects makes them the glue that holds these heterogeneous systems together.

| Feature | Traditional FPGA | Xilinx ACAP (Versal) | General Purpose GPU |
|---|---|---|---|
| Compute Logic | LUT-based (Flexible) | AI Engines + Programmable Logic | Fixed CUDA Cores |
| Latency | Ultra-Low (Deterministic) | Ultra-Low (Deterministic) | Variable (Batch-dependent) |
| Power Efficiency | Medium | High (Per-Operation) | Low (High TDP) |
| Development Cycle | Very Slow (RTL) | Moderate (HLS/Vitis) | Fast (CUDA/PyTorch) |

Security at the Gate: The Hardware Root of Trust

In an era of “Agentic SOCs” and AI-driven offensive security, software-level encryption is no longer enough. If the kernel is compromised, the encryption keys are gone. Xilinx is pushing the security perimeter down into the silicon itself. By implementing hardware-level isolation and a “Root of Trust” (RoT) directly in the device, they can create secure enclaves that are physically separated from the main processor.

This is one of the stronger defenses against side-channel attacks and zero-day exploits that target the CPU’s speculative execution. When the security logic is baked into the hardware gates, it is far harder to bypass with a software-only exploit. For enterprise IT, this means the ability to verify the integrity of the hardware bitstream before a single line of code executes.
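The “verify before execute” idea can be sketched in a few lines of Python. This is a conceptual illustration, not Xilinx's boot flow: it uses an HMAC-SHA256 tag from the standard library as a stand-in for whatever authentication scheme the device actually implements (real hardware typically relies on asymmetric signatures and keys held in on-chip storage). The names `sign_bitstream` and `load_if_authentic` are hypothetical, as are the key and payload.

```python
import hashlib
import hmac


def sign_bitstream(bitstream: bytes, key: bytes) -> bytes:
    """Produce an authentication tag for a bitstream (vendor side)."""
    return hmac.new(key, bitstream, hashlib.sha256).digest()


def load_if_authentic(bitstream: bytes, tag: bytes, key: bytes) -> bool:
    """Refuse to 'load' a bitstream whose tag does not verify."""
    expected = hmac.new(key, bitstream, hashlib.sha256).digest()
    # compare_digest runs in constant time, avoiding a timing side channel.
    if not hmac.compare_digest(expected, tag):
        raise ValueError("bitstream authentication failed; refusing to load")
    # Placeholder: a real device would configure the fabric at this point.
    return True


# Hypothetical key and payload, for illustration only.
device_key = b"example-key-not-for-production"
bitstream = b"\x00\x01\x02 pretend configuration data"
tag = sign_bitstream(bitstream, device_key)

loaded = load_if_authentic(bitstream, tag, device_key)
```

The point is the ordering: the check happens before anything is configured, so a tampered image never reaches the fabric.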

For those tracking the open-source movement, the rise of RISC-V is the wild card. If Xilinx continues to integrate open-instruction set architectures with their programmable fabric, we could see a total collapse of the proprietary x86/ARM hegemony in the data center.

The Final Takeaway

The evolution of programmable hardware at Xilinx is a signal that the industry has reached the limits of “brute force” scaling. We cannot simply add more transistors or increase clock speeds without melting the silicon. The future belongs to architectural efficiency. By making hardware as flexible as software, Xilinx isn’t just selling chips; they are selling the ability to pivot your entire infrastructure at the speed of a firmware update. That is the ultimate competitive advantage in the AI era.