Saturday Edition

Stories shaping the day across news, world, money, culture, health, tech, and sport.

Stay updated with Archyde – your source for breaking news, global headlines, economy, entertainment, health, technology, and sports. Fresh stories daily.

Continuous Coverage

Latest Dispatches

The Devil Wears Prada Sequel Leads Box Office With $77 Million Debut

The Devil Wears Prada 2 has made…

World Cup 2026: Extreme Ticket Prices and US Logistics Warnings

New York City’s World Cup 2026 ticket pricing has reached a breaking point, forcing FIFA and local organizers…

Weekly Entertainment Roundup: Michael Jackson, Oscars, and K-Pop News

The Michael Jackson biopic, Michael, is under scrutiny after director Antoine Fuqua spent $15 million on reshoots to…

Omega Constellation Observatory: World’s First 2-Hand Chronometer

Omega has launched the Constellation “Observatory,” the first-ever two-hand chronometer to receive official certification. By stripping the dial…

Microgravity weakens astronaut hearts but accelerates organoid growth

Astronauts’ hearts undergo measurable changes in space, including structural weakening and altered shape due to fluid redistribution and…

Pistons Dominate Magic in Game 7 to Complete Comeback

The Detroit Pistons have done it. After a playoff series that defied logic, erased history, and rewrote the…

Global Affairs

World Desk

Jailed Iranian Nobel Laureate Narges Mohammadi in Critical Condition

Narges Mohammadi, the Nobel Peace Prize-winning activist, is currently hospitalized in critical condition in Iran. Her family and…

Markets And Money

Economy Desk

US-Iran Tensions: Trump’s Strategy and the Global Energy Surge

President Donald Trump has announced that the United States will move to free shipping vessels in the Strait…

Digital Culture

Technology Desk

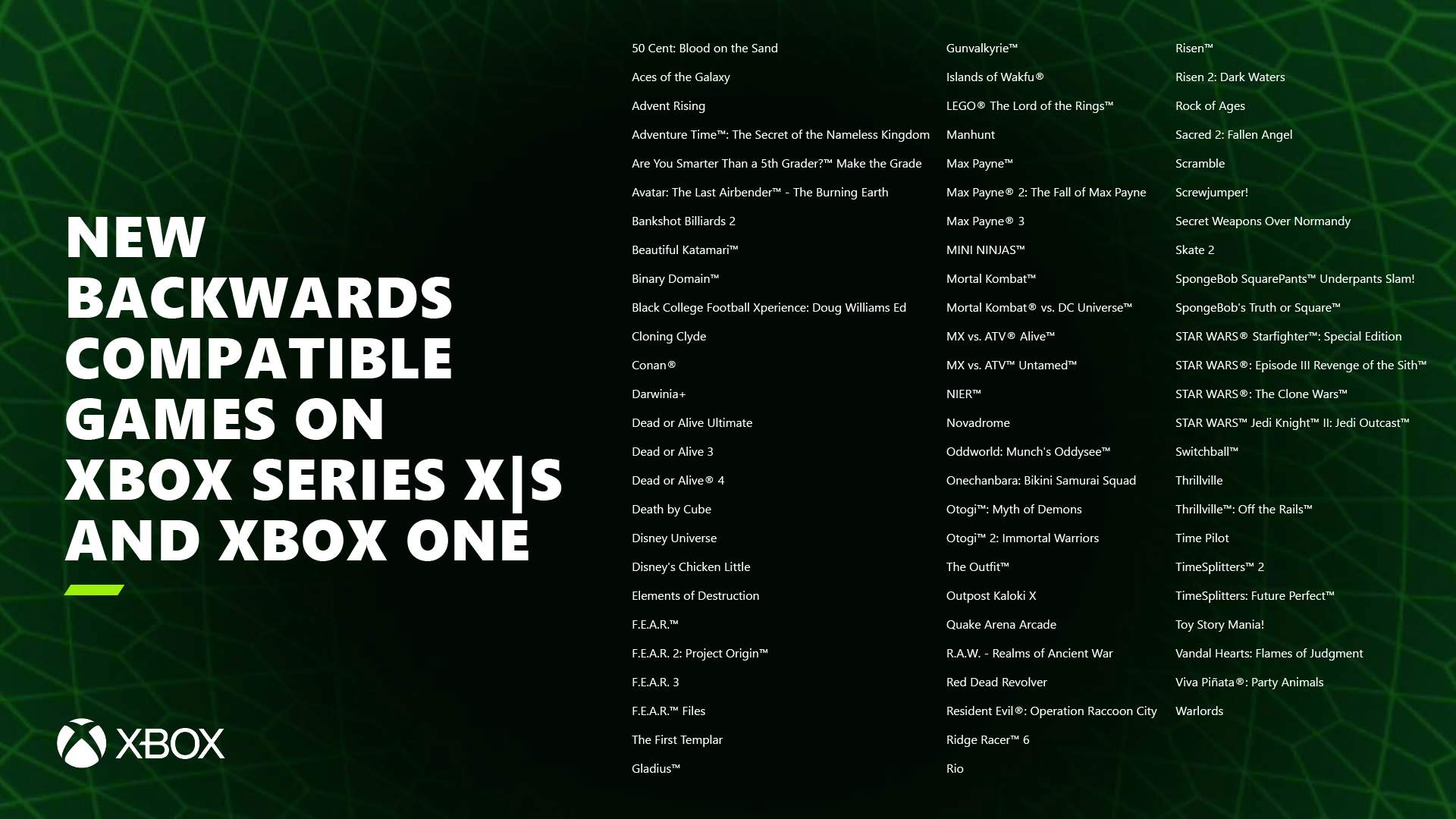

Korean Xbox Community Petitions for Original & 360 Backward Compatibility

Microsoft is quietly reviving its Xbox backward compatibility program, with over 50,000 players now voting to restore original…

Science And Wellbeing

Health Desk

Screen And Sound

Entertainment Desk

Theatre Performance at Casino d’Aix-les-Bains: May 7

Sandrine Quétier headlines a theatrical production at the Casino d’Aix-les-Bains on Thursday, May 7, at 8:30 PM. Joining…

Fixtures And Form

Sport Desk

WWE to Continue Celebrity Involvement After WrestleMania 42

WWE is doubling down on celebrity integration following a successful WrestleMania 42, with internal sources confirming the company…