Intruder, a GCHQ-accelerated cybersecurity firm, has launched AI pentesting agents that automate complex vulnerability assessments. By replicating human methodologies in minutes rather than weeks, they are disrupting the $50,000 manual pentest model, enabling enterprises to move from static, point-in-time audits to continuous, autonomous security validation.

For years, the enterprise security playbook has been a cycle of expensive, periodic rituals. You hire a boutique firm, pay a premium—often between $10,000 and $50,000—and wait weeks for a curated PDF that tells you exactly how you were vulnerable three weeks ago. It’s a legacy model designed for a world where software updates happened quarterly, not hourly.

The problem is simple: the “point-in-time” audit is a lie. In a CI/CD environment where code is pushed to production multiple times a day, a penetration test is obsolete before the ink dries. Intruder’s move to deploy AI agents this May isn’t just a cost-saving measure; it is a fundamental architectural shift in how we perceive the attack surface.

Beyond the Scanner: The Agentic Reasoning Loop

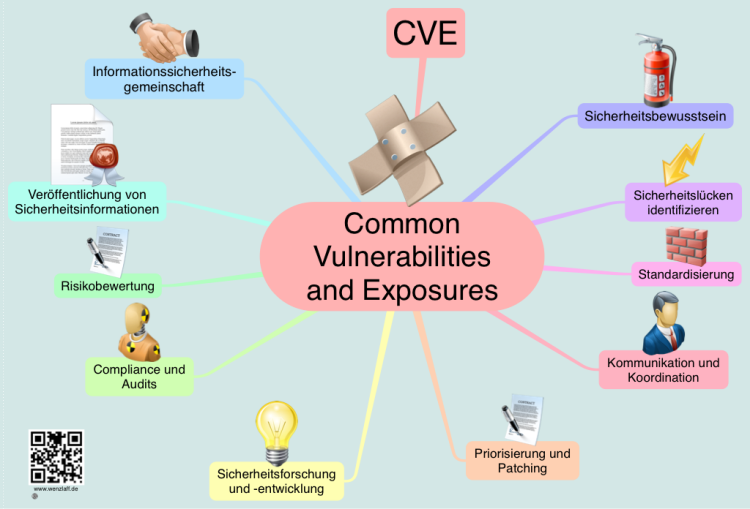

To understand why this matters, we have to distinguish between a “scanner” and an “agent.” Most companies already use automated vulnerability scanners. These tools are essentially digital checklists—they ping a port, see if it’s open, and check if the version number matches a known CVE (Common Vulnerabilities and Exposures) entry. They are loud, rigid, and prone to false positives.
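The checklist behavior described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the advisory IDs, product names, and banner format (`product/1.2.3`) are hypothetical placeholders, and no network access is involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    advisory_id: str            # placeholder ID, not a real CVE
    product: str
    fixed_in: tuple[int, ...]   # first version that is no longer affected


# Hypothetical advisory table standing in for a real vulnerability feed.
ADVISORIES = [
    Advisory("EXAMPLE-0001", "exampled", (2, 4, 0)),
    Advisory("EXAMPLE-0002", "demo-httpd", (1, 9, 3)),
]


def parse_banner(banner: str) -> tuple[str, tuple[int, ...]]:
    """Split an assumed 'product/1.2.3' banner into (product, version tuple)."""
    product, _, version = banner.strip().partition("/")
    return product.lower(), tuple(int(part) for part in version.split("."))


def checklist_scan(banners: dict[int, str]) -> list[tuple[int, str]]:
    """Flag every port whose reported version is older than the fixed version."""
    findings = []
    for port, banner in sorted(banners.items()):
        product, version = parse_banner(banner)
        for adv in ADVISORIES:
            if adv.product == product and version < adv.fixed_in:
                findings.append((port, adv.advisory_id))
    return findings


if __name__ == "__main__":
    observed = {8080: "exampled/2.3.9", 80: "demo-httpd/1.9.3"}
    print(checklist_scan(observed))
```

The match is purely on the version string. A host with a backported patch would still be flagged, which is one plausible source of the false positives mentioned above.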

Intruder’s AI agents operate on a different plane: agentic reasoning. Instead of a linear checklist, these agents utilize a “ReAct” (Reason + Act) pattern. The AI doesn’t just see an open port; it hypothesizes. It thinks: “I see an exposed Jenkins instance on port 8080. Based on the header, it’s an outdated version. I will now attempt a known remote code execution (RCE) payload to see if I can gain a shell.”

This mimics the intuition of a human red-teamer. It involves a feedback loop where the output of one tool, perhaps an Nmap scan, becomes the prompt for the next action. By adapting LLMs specifically to security telemetry, Intruder has effectively compressed the “discovery-to-exploitation” timeline from days to seconds.
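A toy version of that feedback loop might look like the sketch below. It assumes nothing about Intruder's actual system: the `reason` function is a hard-coded stand-in for an LLM call, the tools are stubs returning canned strings, and the target hostname is hypothetical. The point is only the shape of the loop, where each observation feeds the next decision.

```python
from typing import Callable


# Stub tools returning canned observations; a real agent would wrap
# actual scanners here. Nothing in this sketch touches the network.
def port_scan(target: str) -> str:
    return "8080/tcp open http"


def grab_banner(target: str) -> str:
    return "service=ci-server version=1.0"


TOOLS: dict[str, Callable[[str], str]] = {
    "port_scan": port_scan,
    "grab_banner": grab_banner,
}

# One step of the trace: (thought, action, observation)
Step = tuple[str, str, str]


def reason(history: list[Step]) -> tuple[str, str]:
    """Stand-in for the LLM: map what has been seen so far to (thought, action)."""
    if not history:
        return "Nothing known yet; enumerate open ports.", "port_scan"
    _, last_action, last_observation = history[-1]
    if last_action == "port_scan" and "open" in last_observation:
        return "An HTTP port is open; identify the service behind it.", "grab_banner"
    return "Enough evidence gathered; hand off for review.", "finish"


def react_loop(target: str, max_steps: int = 5) -> list[Step]:
    """Alternate reasoning and acting until the reasoner finishes or steps run out."""
    history: list[Step] = []
    for _ in range(max_steps):
        thought, action = reason(history)
        if action == "finish":
            break
        observation = TOOLS[action](target)  # act, then feed the result back in
        history.append((thought, action, observation))
    return history


if __name__ == "__main__":
    for thought, action, observation in react_loop("staging.example.test"):
        print(f"THOUGHT: {thought}\nACTION: {action}\nOBSERVATION: {observation}\n")
```

The `max_steps` cap is a deliberate design choice in this sketch: an agent that decides its own next action needs a hard bound so that a confused reasoner cannot loop indefinitely.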

It is ruthless efficiency.

The 30-Second Verdict: Manual vs. AI Pentesting

| Metric | Manual Penetration Test | Intruder AI Agents |
|---|---|---|
| Average Cost | $10,000 – $50,000 per engagement | Subscription-based / Per-scan |
| Execution Time | 2 – 4 Weeks | Minutes to Hours |
| Frequency | Annual or Bi-Annual | Continuous / On-Demand |
| Methodology | Heuristic & Intuitive | Agentic LLM Orchestration |
| Output | Static PDF Report | Live Dashboard & Remediation |

The Erosion of the “Human Moat”

The cybersecurity industry has long relied on the “human moat”—the idea that only a skilled operator can chain together multiple low-severity vulnerabilities to create a high-severity exploit. We believed that AI could handle the “low-hanging fruit” (SQL injections, outdated libraries) but that the complex logic flaws required a human brain.

That moat is evaporating. As LLMs become more adept at understanding systemic dependencies and proprietary codebases, the ability to “chain” exploits becomes a matter of compute, not just creativity. When an AI can parse thousands of lines of documentation and correlate them with real-time network responses, the “creative” edge of the human pentester shrinks.
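One way to see why chaining becomes “a matter of compute” is to treat findings as edges in a graph and search for a path. The sketch below is an assumption-laden illustration rather than a description of any real tool: the findings, node names, and the choice of breadth-first search are all hypothetical.

```python
from collections import deque
from typing import Optional

# Hypothetical low-severity findings. Each one lets an attacker move from
# one position to another: (from_position, to_position, description).
FINDINGS = [
    ("internet", "web-app", "verbose error page leaks internal hostname"),
    ("web-app", "build-server", "default credentials on internal build UI"),
    ("build-server", "prod-secrets", "deploy key readable by build jobs"),
    ("internet", "mail-gateway", "outdated TLS configuration"),
]


def find_chain(start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search for the shortest chain of findings from start to goal."""
    graph: dict[str, list[tuple[str, str]]] = {}
    for src, dst, description in FINDINGS:
        graph.setdefault(src, []).append((dst, description))

    queue = deque([(start, [])])
    seen = {start}
    while queue:
        position, chain = queue.popleft()
        if position == goal:
            return chain
        for next_position, description in graph.get(position, []):
            if next_position not in seen:
                seen.add(next_position)
                queue.append((next_position, chain + [description]))
    return None  # no combination of findings reaches the goal


if __name__ == "__main__":
    for hop in find_chain("internet", "prod-secrets") or []:
        print("->", hop)
```

No single finding here would rate as critical on its own, yet the search links three of them into a route from the public internet to production secrets.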

“The democratization of offensive AI is a double-edged sword. While tools like Intruder allow defenders to find holes before the bad guys do, we are essentially providing a blueprint for how autonomous attack agents will operate. The window between vulnerability discovery and weaponization is closing to near zero.”

This shift forces a move toward OWASP-aligned continuous security. If the attacker is using an AI agent to probe your perimeter 24/7, defending with a yearly manual audit is like bringing a knife to a railgun fight.

Systemic Risks and the AI Arms Race

We cannot discuss the rise of AI pentesting without addressing the “Shadow AI” problem. If a legitimate company can build an agent that does this in minutes, the adversarial community—state-sponsored actors and ransomware cartels—is already doing the same. We are entering an era of “Machine vs. Machine” warfare.

The technical danger here is “hallucinated exploits.” If an AI agent misinterprets a system response, it could potentially trigger a Denial of Service (DoS) by attempting a payload that crashes a legacy service. This is why the integration of these agents into a production environment requires strict guardrails and a deep understanding of the target’s fragility.
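As a rough sketch of what such guardrails could look like, the policy check below sits between the agent's decision and the tool that executes it. Every name here (the `Guardrails` class, its fields, the action labels, and the hostnames) is a hypothetical choice for illustration, not part of any product's API.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    in_scope: set[str]                              # hosts the agent may touch at all
    fragile: set[str] = field(default_factory=set)  # hosts known to crash easily
    intrusive_actions: frozenset[str] = frozenset({"exploit", "fuzz"})
    max_actions_per_host: int = 20                  # crude rate limit per run
    _counts: dict[str, int] = field(default_factory=dict)

    def check(self, host: str, action: str) -> tuple[bool, str]:
        """Return (allowed, reason); an allowed call consumes one unit of budget."""
        if host not in self.in_scope:
            return False, "host is out of scope"
        if host in self.fragile and action in self.intrusive_actions:
            return False, "intrusive action blocked on fragile host"
        used = self._counts.get(host, 0)
        if used >= self.max_actions_per_host:
            return False, "per-host action budget exhausted"
        self._counts[host] = used + 1
        return True, "allowed"


if __name__ == "__main__":
    rails = Guardrails(
        in_scope={"app.example.test", "legacy.example.test"},
        fragile={"legacy.example.test"},
    )
    print(rails.check("legacy.example.test", "exploit"))
    print(rails.check("app.example.test", "port_scan"))
```

Keeping the check outside the model matters in this design: a misread system response can change what the agent wants to do, but not what the policy layer permits.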

This technology also accelerates platform lock-in. As security becomes an integrated, AI-driven service, companies may find themselves tethered to the ecosystem that has the best “training data” on the latest exploits. The “data moat” becomes more valuable than the software itself.

What This Means for Enterprise IT

- Budget Realignment: The “big ticket” annual pentest budget will likely shift toward continuous security platforms.

- Skill Shift: Security engineers will move from “finding” bugs to “orchestrating” the AI that finds them and managing the remediation pipeline.

- Compliance Evolution: Regulators and auditors (like those assessing SOC 2 or HIPAA compliance) will need to accept continuous AI validation as a substitute for the traditional “signed PDF” from a human auditor.

The Bottom Line: From Audit to Immunity

Intruder isn’t just selling a cheaper version of a human pentester. They are selling a shift in philosophy. The goal is no longer to “pass the test” but to achieve a state of continuous immunity.

By leveraging agentic workflows, we are finally moving away from the theater of security—where we pretend a snapshot of a network is a guarantee of safety—and toward a reality where the defense evolves as fast as the attack. For the CISO, the message is clear: if you are still scheduling your 2026 pentests via email and spreadsheets, you are already obsolete.

The bots are already inside the wire. You might as well be the one controlling them.