The Power Grid Under Strain: AI Data Centers and the Rising Cost of Electricity

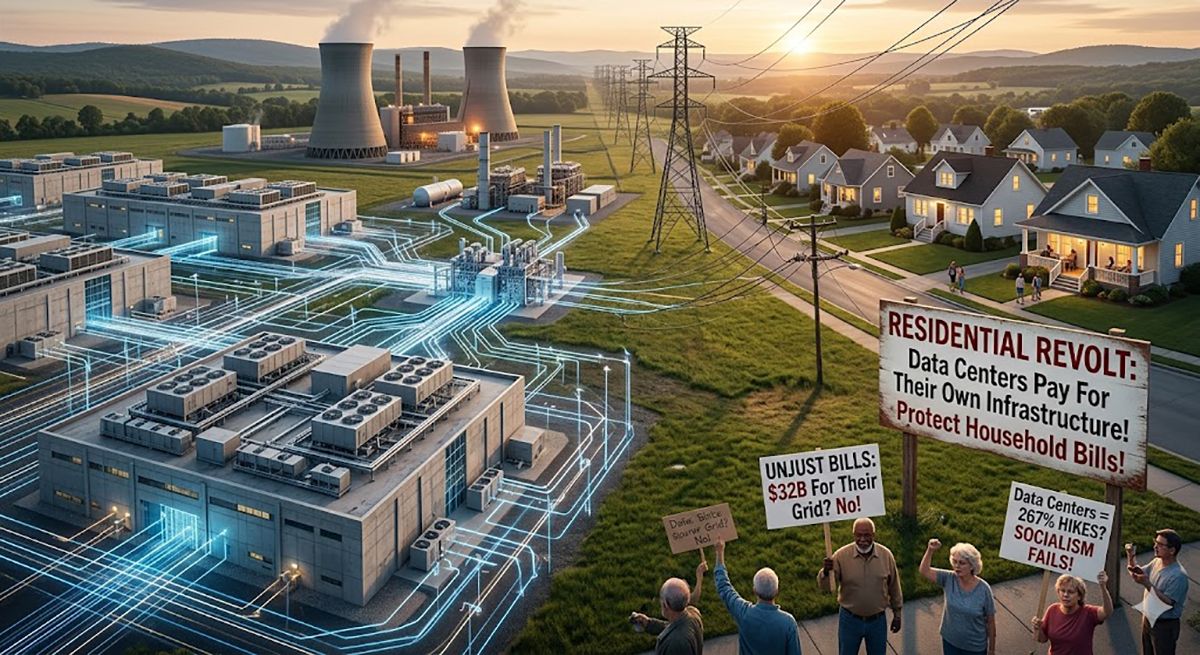

Across the United States, residents are seeing their electricity bills surge, an increase widely attributed to the rapid growth of AI data centers. These facilities, ravenous for power to fuel the training and operation of large language models (LLMs) and other AI applications, are placing unprecedented demands on local grids. While the long-term benefits of AI are widely touted, the immediate financial burden is sparking a revolt in several states, challenging the current infrastructure and cost allocation models. This isn’t simply about increased demand; it’s about a fundamental mismatch between how electricity is priced and who ultimately bears the cost of supporting these power-intensive operations.

The situation isn’t merely anecdotal. Reports from states like Virginia, North Carolina, and Texas demonstrate a clear correlation between the influx of data centers – driven by companies like Microsoft, Amazon, and Google – and escalating electricity rates for consumers. The core issue lies in the fact that many data centers benefit from discounted electricity rates negotiated directly with utility providers, while residential and small business customers are left to absorb the costs of grid upgrades and increased overall demand. This creates a situation where the benefits of AI innovation are disproportionately enjoyed by a few large corporations, while the financial burden falls on the broader public.

The NPU Arms Race: Why AI Needs So Much Power

Understanding the power demands requires a dive into the hardware. The current AI boom was initially fueled by Graphics Processing Units (GPUs), but it increasingly relies on dedicated AI accelerators, often called Neural Processing Units (NPUs). While GPUs excel at general parallel processing, accelerators such as Google’s TPU v5e, or the Neural Engine in Apple’s M3 series, are architected specifically for the matrix multiplications at the heart of deep learning. The efficiency gains of NPUs are significant, but even these specialized chips consume massive amounts of power, especially during model training. LLM parameter scaling is a key driver here. Each step up in model size (think 7 billion to 13 billion to 70 billion parameters in the Llama 2 family) requires substantially more computational resources and, in turn, energy. The trend isn’t slowing down; we’re rapidly approaching trillion-parameter models, demanding even more power.
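To put rough numbers on that scaling, here is a minimal sketch using a widely cited rule of thumb for dense transformer models: training compute is approximately 6 × parameters × training tokens. The constant, the fixed 2-trillion-token budget, and the function name are assumptions made for illustration, not figures from the reporting above. Under this approximation, compute grows in proportion to parameter count when the token budget is held fixed, and faster when the dataset grows alongside the model.

```python
# Rule-of-thumb estimate of training compute for dense transformer models.
# Assumption: total training FLOPs ~= 6 * parameters * training tokens.

FLOPS_PER_PARAM_PER_TOKEN = 6  # rule-of-thumb constant, not a measured value
TOKENS = 2e12                  # assumption: fixed budget of 2 trillion tokens


def training_flops(params: float, tokens: float) -> float:
    """Approximate total floating-point operations needed for training."""
    return FLOPS_PER_PARAM_PER_TOKEN * params * tokens


# Model sizes in the Llama 2 family: 7B, 13B, and 70B parameters.
for params in (7e9, 13e9, 70e9):
    flops = training_flops(params, TOKENS)
    print(f"{params / 1e9:>4.0f}B params -> {flops:.2e} FLOPs")
```

Turning FLOPs into energy additionally depends on hardware throughput and utilization, which vary widely between deployments, so this sketch stops at compute.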

Consider the power density. A single NVIDIA H100 GPU, a workhorse in many data centers, can draw up to 700 watts. A rack containing dozens of these GPUs can easily exceed 20 kW. Multiply that by hundreds or thousands of racks within a single data center, and the power requirements become astronomical. This isn’t just about the chips themselves; it’s also about the cooling infrastructure required to dissipate the heat they generate. Liquid cooling, while more efficient than traditional air cooling, still adds to the overall energy consumption.
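The arithmetic behind that claim can be sketched in a few lines. Only the 700-watt figure comes from the text; the GPUs-per-rack count, the rack count, and the power usage effectiveness (PUE) multiplier for cooling and other overhead are illustrative assumptions.

```python
# Back-of-the-envelope facility power estimate.
# Only GPU_WATTS comes from the article; every other value is an assumption.

GPU_WATTS = 700      # peak draw of one NVIDIA H100, per the article
GPUS_PER_RACK = 32   # assumption: one reading of "dozens" of GPUs per rack
RACKS = 1_000        # assumption: a large facility
PUE = 1.3            # assumption: overhead multiplier for cooling and losses


def rack_power_kw(gpu_watts: float, gpus_per_rack: int) -> float:
    """GPU-only power per rack in kW (ignores CPUs, networking, and fans)."""
    return gpu_watts * gpus_per_rack / 1_000


def facility_power_mw(rack_kw: float, racks: int, pue: float) -> float:
    """Total facility power in MW, scaling the IT load by PUE."""
    return rack_kw * racks * pue / 1_000


rack_kw = rack_power_kw(GPU_WATTS, GPUS_PER_RACK)
total_mw = facility_power_mw(rack_kw, RACKS, PUE)
print(f"Per rack: {rack_kw:.1f} kW")
print(f"Facility: {total_mw:.1f} MW")
```

With these assumed inputs, a rack lands a little above the 20 kW mark mentioned above, and a thousand such racks reach the tens of megawatts.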

Beyond the Megawatts: The Grid Modernization Challenge

The problem extends beyond simply generating enough electricity. The existing power grid, in many areas, isn’t equipped to handle the concentrated power demands of these data centers. Substations need to be upgraded, transmission lines need to be reinforced, and distribution networks need to be modernized. These upgrades are expensive, and the costs are often passed on to consumers through higher electricity rates. The current grid infrastructure, largely built decades ago, wasn’t designed for the localized, high-density power loads created by AI data centers.

Moreover, the intermittent nature of renewable energy sources such as solar and wind adds another layer of complexity. Data centers require a stable and reliable power supply, and relying solely on renewables can be challenging. This often necessitates the use of backup power sources, such as natural gas generators, which further contribute to carbon emissions and increase costs. The ideal solution involves a combination of renewable energy sources, energy storage systems (like large-scale batteries), and a smart grid capable of dynamically managing power flow.

What This Means for Enterprise IT

For businesses, the rising cost of electricity translates directly into higher cloud computing costs. Cloud providers, facing increased energy expenses, are likely to pass those costs on to their customers. This could slow the adoption of AI-powered services, particularly among small and medium-sized businesses (SMBs) with limited budgets. It also incentivizes companies to explore on-premises AI solutions, but that comes with its own set of challenges, including the need for significant capital investment in hardware and infrastructure.

The situation also highlights the importance of energy-efficient AI algorithms and hardware. Researchers are actively exploring techniques like model pruning, quantization, and knowledge distillation to reduce the computational complexity of AI models without sacrificing accuracy. These techniques can significantly reduce the energy consumption of AI applications, making them more sustainable and affordable.
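As an illustration of one of these techniques, the sketch below applies symmetric per-tensor int8 quantization to a small list of weights. It is a simplified toy, not how any particular framework implements quantization; the function names and sample weights are hypothetical. The idea is that each 32-bit float is replaced by an 8-bit integer plus one shared scale factor, cutting storage to roughly a quarter at the cost of a small rounding error.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.03, 1.0]   # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("max rounding error:", max_error)
```

The rounding error per weight is bounded by half the scale factor, which is why quantization tends to work best when weights are not dominated by a few large outliers.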

The Regulatory Pushback and the “Socialist” Label

The backlash against the current electricity pricing model is gaining momentum. Several states are considering legislation to reform how data centers are charged for electricity, aiming to ensure that they contribute their fair share to grid upgrades and infrastructure maintenance. The term “socialist” being applied to the current system is a politically charged framing, but it reflects the sentiment that the costs are being unfairly distributed. The core argument is that data centers, as significant beneficiaries of the grid, should bear a greater proportion of the costs associated with maintaining and expanding it.

One proposed solution involves implementing a tiered pricing system, where data centers pay a higher rate for electricity during peak demand periods. Another approach is to require data centers to directly fund grid upgrades in the areas where they are located. These measures are facing resistance from the tech industry, which argues that they could stifle innovation and discourage investment. However, proponents argue that a more equitable system is essential for ensuring the long-term sustainability of the power grid and the affordability of electricity for all consumers.
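To make the tiered pricing idea concrete, here is a minimal sketch comparing a flat rate with a peak and off-peak tariff. The rates, the peak window, and the 20 MW constant load are all hypothetical values chosen for illustration; none come from an actual utility tariff or legislative proposal.

```python
# Hypothetical time-of-use tariff; all numbers are illustrative assumptions.
PEAK_HOURS = range(16, 21)   # assumption: 4 pm to 9 pm counts as peak
OFF_PEAK_RATE = 0.06         # assumption: USD per kWh
PEAK_RATE = 0.15             # assumption: USD per kWh


def daily_cost(hourly_load_kwh):
    """Cost in USD for 24 hourly energy readings under the tiered tariff."""
    if len(hourly_load_kwh) != 24:
        raise ValueError("expected exactly 24 hourly readings")
    cost = 0.0
    for hour, kwh in enumerate(hourly_load_kwh):
        rate = PEAK_RATE if hour in PEAK_HOURS else OFF_PEAK_RATE
        cost += kwh * rate
    return cost


# A constant 20 MW load consumes 20,000 kWh in every hour of the day.
flat_load = [20_000.0] * 24
tiered_cost = daily_cost(flat_load)
flat_cost = sum(flat_load) * OFF_PEAK_RATE

print(f"Flat rate:   ${flat_cost:,.0f} per day")
print(f"Tiered rate: ${tiered_cost:,.0f} per day")
```

Because a data center's load is close to constant around the clock, a peak surcharge raises its bill unless it can shift work to off-peak hours, which is the behavior such a tariff is meant to encourage.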

“The current model is unsustainable. We’re essentially subsidizing the AI revolution with the electricity bills of everyday citizens. A more equitable cost allocation is not anti-innovation; it’s a matter of fairness and long-term grid stability.” – Dr. Anya Sharma, CTO of GridNexus, a smart grid analytics firm.

The debate is also sparking discussions about the location of data centers. States with more favorable electricity rates and regulatory environments are likely to attract more data center investment, while states with stricter regulations may see a slowdown in growth. This could lead to a geographic concentration of AI infrastructure, potentially exacerbating regional disparities in electricity costs.

The 30-Second Verdict

AI’s power hunger is real, and you’re paying for it. Expect continued electricity price hikes unless states implement fairer cost allocation models. The NPU revolution is helping, but demand is still outpacing efficiency gains. This isn’t just a tech issue; it’s a political and economic one.

The Open-Source Alternative: Decentralizing the AI Compute

Interestingly, the rising cost of centralized AI compute is also fueling interest in decentralized AI platforms. Projects like SingularityNET and initiatives leveraging federated learning aim to distribute AI workloads across a network of smaller, independent compute nodes. This approach could reduce the reliance on massive data centers and lower overall energy consumption. However, challenges remain in terms of security, data privacy, and ensuring the reliability of the network. The incentive structures for participation also need to be carefully considered.
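For readers unfamiliar with federated learning, the sketch below shows one common aggregation step, often called federated averaging: each node trains locally and sends back only its model weights, and a coordinator combines them, weighting each node by how much data it trained on. This is a toy illustration with hypothetical names and values, not the protocol of SingularityNET or any other specific platform.

```python
def federated_average(client_weights, client_sizes):
    """Average client weight vectors, weighted by each client's sample count."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client weight vector")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Three hypothetical nodes, each holding a two-parameter local model.
client_weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
client_sizes = [100, 100, 200]   # training samples seen by each node

global_weights = federated_average(client_weights, client_sizes)
print(global_weights)
```

Raw training data never leaves the nodes in this scheme, which is part of its appeal, though the exchanged weights can still leak information and must be protected.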

Meanwhile, the open-source AI community is playing a crucial role in developing more efficient AI algorithms and hardware. Initiatives like the Hugging Face Transformers library provide developers with access to pre-trained models and tools for optimizing AI applications. The collaborative nature of open-source development fosters innovation and accelerates the pace of progress. This contrasts sharply with the closed ecosystems of some of the major tech companies, where innovation is often driven by proprietary interests.

The long-term solution likely involves a combination of technological advancements, regulatory reforms, and a shift towards more sustainable AI practices. The current situation is a wake-up call, highlighting the need for a more holistic and equitable approach to AI development and deployment. The storm is indeed here, but it doesn’t have to be a permanent blackout.

| Processor | Typical Power Draw (Watts) | Peak Performance (TFLOPS) | Power Efficiency (TFLOPS/Watt) |
|---|---|---|---|
| NVIDIA H100 | 700 | 1979 | 2.83 |
| Google TPU v5e | 450 | 3276 | 7.28 |
| Apple M3 Max | 120 | 91.4 | 0.76 |

These figures suggest that while the TPU v5e offers the best power efficiency of the three, the sheer scale of data center operations still necessitates massive power consumption. Apple’s M3 Max, while less powerful and less efficient per watt by these figures, draws far less power in absolute terms, which illustrates the potential for energy savings in edge computing applications.
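The efficiency column in the table is simply peak performance divided by power draw. The short sketch below reproduces it from the table's own numbers, which are taken as given here; they have not been checked against vendor datasheets, and the table does not state which numeric precision the peak figures refer to. The class and variable names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Processor:
    name: str
    watts: float    # typical power draw, as listed in the table
    tflops: float   # peak performance, as listed in the table

    @property
    def tflops_per_watt(self) -> float:
        """Power efficiency: peak TFLOPS delivered per watt drawn."""
        return self.tflops / self.watts


PROCESSORS = [
    Processor("NVIDIA H100", 700, 1979),
    Processor("Google TPU v5e", 450, 3276),
    Processor("Apple M3 Max", 120, 91.4),
]

# Rank from most to least efficient.
for p in sorted(PROCESSORS, key=lambda p: p.tflops_per_watt, reverse=True):
    print(f"{p.name:<16} {p.tflops_per_watt:.2f} TFLOPS/W")
```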