AI-Powered Risk Stratification for Childhood Cancer Survivors: A New Era in Long-Term Care

Researchers at St. Jude Children’s Research Hospital have demonstrated that artificial intelligence (AI), specifically large language models, can effectively analyze patient-physician conversations to identify childhood cancer survivors at higher risk of long-term health complications. This advancement, published in Communications Medicine, promises to improve targeted support and care for this vulnerable population by unlocking insights hidden within unstructured clinical data.

In Plain English: The Clinical Takeaway

- Better Risk Assessment: AI can help doctors quickly understand the challenges survivors face based on what they *say* about their health, not just test results.

- More Personalized Care: By pinpointing specific difficulties (pain, fatigue, social issues), doctors can tailor support services to each survivor’s needs.

- A Faster Process: AI can analyze lengthy conversation transcripts much faster than a human, freeing up doctors’ time to focus on patient care.

The Challenge of Late Effects in Pediatric Oncology

Childhood cancer treatment, while often life-saving, can have lasting effects on development and overall health. These “late effects” – ranging from cardiovascular disease and endocrine dysfunction to cognitive impairment and secondary cancers – can emerge years or even decades after treatment completion. Approximately 60% of childhood cancer survivors experience at least one chronic health condition by age 45, and the incidence is rising as survival rates improve. Identifying survivors at highest risk for these complications is crucial for proactive intervention, but current methods rely heavily on structured data (e.g., lab results, medical history) and often miss the nuances of a patient’s lived experience.

Decoding the Conversational Data: The Role of Large Language Models

A significant portion of a clinical encounter – estimated at 40-60% – involves patients describing their symptoms, functional limitations, and quality of life concerns. This information, captured in conversation transcripts and open-ended survey responses, represents a rich source of data that is often underutilized. Large language models (LLMs), a type of AI trained on massive datasets of text and code, offer a powerful tool for analyzing this unstructured data. The St. Jude study focused on two prominent LLMs: ChatGPT and Llama. The researchers hypothesized that more detailed “prompts” – the instructions given to the AI – would yield more accurate results. A prompt is akin to asking a very specific question: the more context provided, the better the AI can understand and respond.

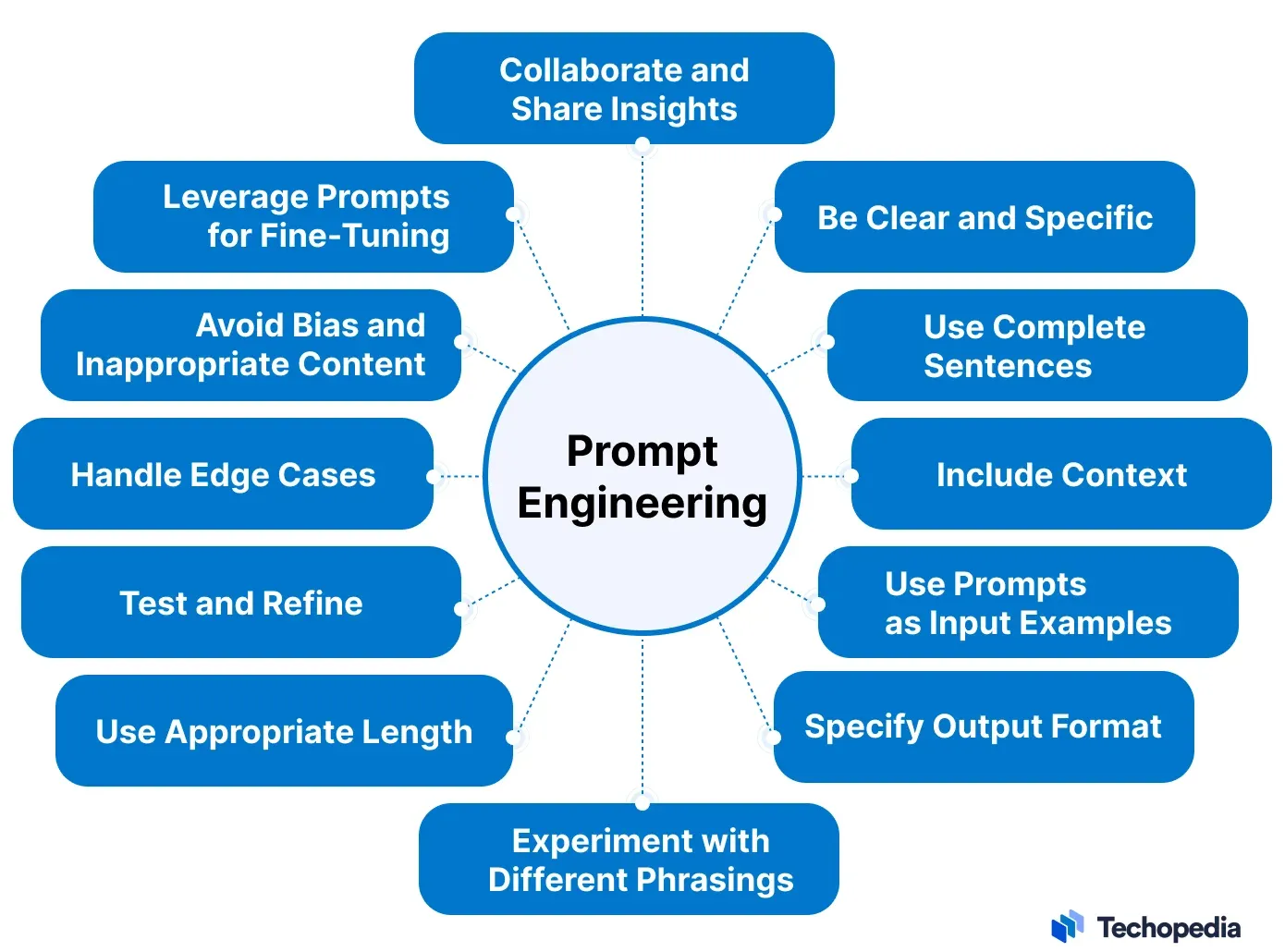

Prompt Engineering: From Zero-Shot to Chain-of-Thought

The study compared four prompting strategies. “Zero-shot” prompting provides minimal instruction, simply asking the AI to analyze the text. “Few-shot” prompting offers a few examples of the desired output. These simpler approaches proved unreliable. The more sophisticated strategies – “chain-of-thought” and “generated knowledge” – significantly improved performance. Chain-of-thought prompting guides the AI through a step-by-step reasoning process, mimicking human thought. Generated knowledge prompting instructs the AI to first generate relevant background information before analyzing the text. Both methods demonstrated a strong ability to identify the physical and cognitive impacts of symptoms, with moderate success in detecting social impacts. The underlying mechanism of action relies on the LLM’s ability to identify patterns and relationships within the text, leveraging its pre-trained knowledge base and the specific instructions provided in the prompt. This is distinct from traditional statistical modeling, which requires pre-defined variables and assumptions.
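To make the four strategies concrete, here is a minimal Python sketch of how such prompts might be assembled. It is illustrative only: the function name `build_prompt`, the domain labels, the example transcripts, and all prompt wording are assumptions made for this article, not the prompts used in the St. Jude study.

```python
# Hypothetical label set; the study's actual categories and wording may differ.
DOMAINS = ("physical", "cognitive", "social")

# Invented example transcripts for the few-shot case (not real patient data).
FEW_SHOT_EXAMPLES = (
    "Example 1\n"
    "Transcript: My legs hurt so much that I stopped playing soccer.\n"
    "Answer: physical=yes, cognitive=no, social=yes\n\n"
    "Example 2\n"
    "Transcript: I am too tired to concentrate on my homework.\n"
    "Answer: physical=no, cognitive=yes, social=no"
)


def build_prompt(strategy: str, transcript: str) -> str:
    """Assemble a prompt for one of four prompting strategies."""
    task = (
        "Decide whether the survivor's pain or fatigue affects each of these "
        f"domains: {', '.join(DOMAINS)}. Answer yes or no for each domain."
    )
    text = f"Transcript: {transcript}"

    if strategy == "zero_shot":
        # Minimal instruction: the task and the text, nothing else.
        parts = [task, text]
    elif strategy == "few_shot":
        # Adds a few worked examples of the desired output.
        parts = [task, FEW_SHOT_EXAMPLES, text]
    elif strategy == "chain_of_thought":
        # Asks the model to reason in explicit steps before answering.
        steps = (
            "Think step by step: first list each symptom mentioned, then "
            "describe how it limits daily activities, then give the answer."
        )
        parts = [task, steps, text]
    elif strategy == "generated_knowledge":
        # Asks the model to produce background knowledge first, then classify.
        knowledge = (
            "First, summarize what is generally known about how pain and "
            "fatigue affect daily functioning in childhood cancer survivors. "
            "Then use that background to complete the task below."
        )
        parts = [knowledge, task, text]
    else:
        raise ValueError(f"Unknown strategy: {strategy}")

    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_prompt("chain_of_thought", "I get tired walking to class."))
```

The resulting string would then be sent to whichever model is being evaluated. The only thing that changes between strategies is how much guidance surrounds the same transcript, which is the variable the study set out to test.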

Geographical Impact and Regulatory Considerations

The integration of AI into clinical workflows is gaining momentum globally. In the United States, the Food and Drug Administration (FDA) is actively developing a regulatory framework for AI-based medical devices. The FDA’s approach focuses on ensuring the safety, effectiveness, and transparency of these technologies. Similar regulatory bodies, such as the European Medicines Agency (EMA) in Europe and the Medicines and Healthcare products Regulatory Agency (MHRA) in the UK, are also grappling with the challenges of AI regulation. Access to this technology may initially be concentrated in academic medical centers and large pediatric oncology programs, potentially exacerbating existing health disparities. Efforts to ensure equitable access will be critical as AI-powered tools become more widespread.

Funding and Potential Biases

This research was supported by the National Cancer Institute (NCI) and the American Lebanese Syrian Associated Charities (ALSAC), the fundraising and awareness organization for St. Jude Children’s Research Hospital. It’s important to acknowledge that funding sources can potentially influence research outcomes, although the researchers have taken steps to mitigate bias through rigorous methodology and transparent reporting. A potential bias inherent in LLMs is their reliance on the data they were trained on. If the training data is not representative of all patient populations, the AI may exhibit biased performance. Ongoing monitoring and validation are essential to ensure fairness and accuracy.

Contraindications & When to Consult a Doctor

This technology is not intended to replace clinical judgment. AI-generated risk assessments should always be interpreted by a qualified healthcare professional. Survivors experiencing new or worsening symptoms should consult their oncologist or primary care physician immediately. This technology is not appropriate for individuals who are unable to communicate effectively or who have cognitive impairments that may affect their ability to participate in interviews. Reliance on AI-driven assessments without considering individual patient preferences and values could lead to suboptimal care.

| Prompting Strategy | Accuracy (vs. Human Experts) | Physical Impact Detection | Cognitive Impact Detection | Social Impact Detection |
|---|---|---|---|---|
| Zero-Shot | Low (30-40%) | Poor | Poor | Poor |
| Few-Shot | Moderate (50-60%) | Fair | Fair | Poor |
| Chain-of-Thought | High (80-90%) | Excellent | Excellent | Moderate |
| Generated Knowledge | High (75-85%) | Excellent | Excellent | Moderate |
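As a rough illustration of what "accuracy vs. human experts" can mean, the sketch below computes simple percent agreement between model labels and expert labels. This is an assumption about the metric for explanatory purposes; the study may have used a different measure, and the function name `agreement_rate` and the labels shown are invented.

```python
def agreement_rate(model_labels, expert_labels):
    """Return the fraction of cases where the model matches the expert label."""
    if len(model_labels) != len(expert_labels):
        raise ValueError("Label lists must have the same length")
    if not expert_labels:
        raise ValueError("Label lists must not be empty")
    matches = sum(m == e for m, e in zip(model_labels, expert_labels))
    return matches / len(expert_labels)


# Invented labels for five transcripts (not study data).
expert = ["yes", "no", "yes", "yes", "no"]
model = ["yes", "no", "no", "yes", "no"]

print(f"{agreement_rate(model, expert):.0%}")  # prints "80%"
```

Percent agreement is easy to read but does not correct for matches that occur by chance, so evaluations of this kind often report additional statistics alongside it.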

Expert Perspective

“The ability of these models to extract meaningful information from patient narratives is truly remarkable. It opens up the possibility of a more holistic and patient-centered approach to survivorship care,” says Dr. Emily Carter, a pediatric oncologist and AI researcher at the University of California, San Francisco. “However, we must proceed cautiously and ensure that these tools are used responsibly and ethically.”

The Future of AI in Pediatric Cancer Survivorship

The St. Jude study represents a significant step forward in leveraging AI to improve the lives of childhood cancer survivors. Future research will focus on validating these findings in larger, more diverse populations and integrating AI-powered tools into clinical workflows. The development of standardized prompts and the creation of curated datasets will be crucial for ensuring the reliability and generalizability of these technologies. The goal is to empower healthcare professionals with the information they need to provide the best possible care for this unique and deserving population. The potential for AI to personalize survivorship care and reduce the burden of late effects is immense, offering hope for a healthier future for childhood cancer survivors.

References

- Sim J-A, et al. Optimizing prompting strategies improves large language model classification of pain- and fatigue-related functional impact in childhood cancer survivors. Communications Medicine. 2026. DOI: 10.1038/s43856-026-01499-5.

- Oeffinger KC, et al. Long-term outcomes after childhood cancer. Lancet Oncol. 2013;14(10):999-1007.

- National Cancer Institute. Childhood Cancer Survivor Studies.

- FDA. Artificial Intelligence and Machine Learning (AI/ML) in Medical Devices.

- American Cancer Society. Late Effects of Childhood Cancer Treatment.