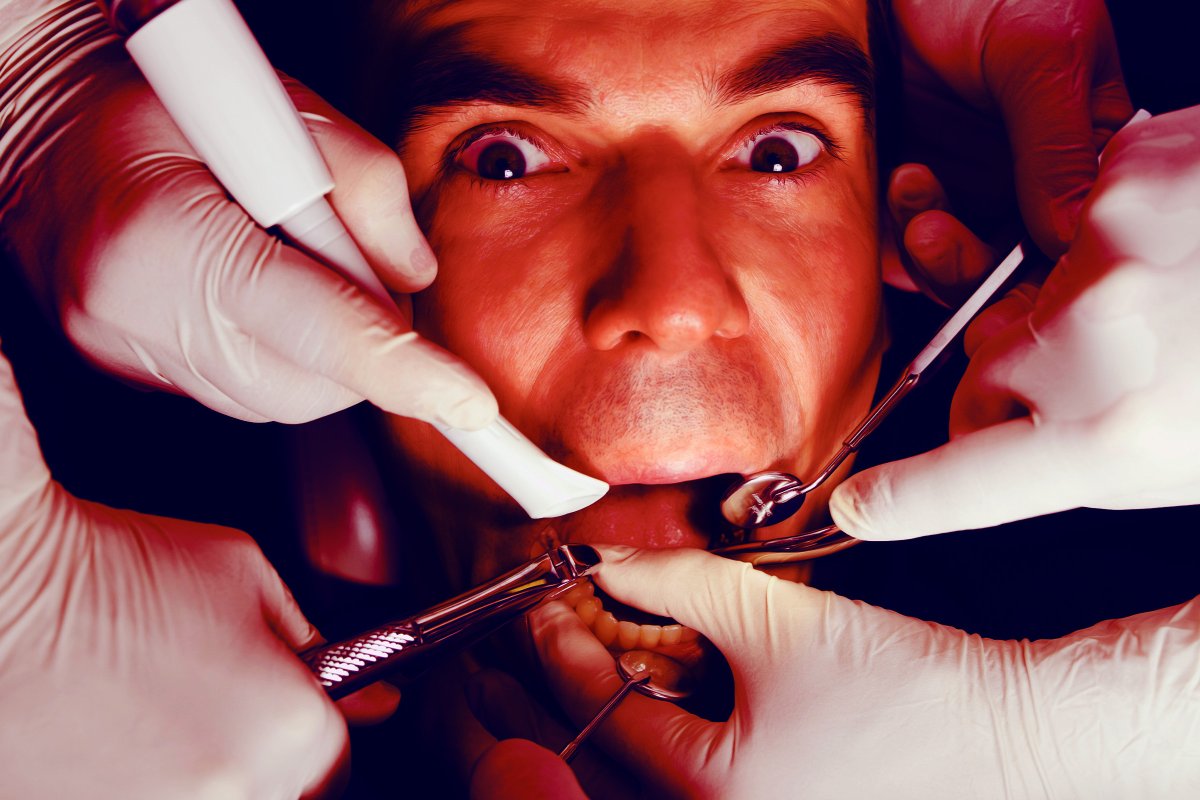

Artificial intelligence software integrated into dental imaging is increasingly being used to recommend restorative procedures that may not be clinically necessary. By leaning on algorithmic interpretations of radiographs, some dental practices are over-treating patients, raising significant ethical concerns about patient autonomy and the diagnostic accuracy of automated clinical decision-support systems.

In Plain English: The Clinical Takeaway

- Algorithmic Bias: AI tools are designed to flag potential anomalies, but they often lack the clinical context—such as patient history or physical examination findings—required to diagnose active disease.

- Diagnostic Overlap: What an AI identifies as a “lesion” may often be a dormant, non-progressive area of demineralization that requires observation, not immediate surgical intervention.

- Second Opinions: If a digital scan leads to a sudden recommendation for multiple fillings or crowns, request the raw imaging data and consult a second, independent practitioner.

The Mechanism of Algorithmic Over-Diagnosis

The integration of AI in dentistry primarily relies on deep learning, a subset of machine learning in which neural networks are trained on thousands of dental images to recognize patterns consistent with dental caries (cavities) or periodontal bone loss. While these tools can enhance efficiency, their output is inherently probabilistic: the system estimates the statistical likelihood that a pixel cluster represents pathology, based on the training data supplied by the software developer.

The clinical risk arises when the software’s detection threshold is set too low, pushing sensitivity up at the expense of specificity. In clinical diagnostics, sensitivity is a test’s ability to correctly identify those who have the disease; specificity is its ability to correctly clear those who do not. A tool tuned for maximum sensitivity catches more true lesions, but it also generates a high rate of “false positives.” In a commercial environment, these false positives are being weaponized to suggest restorative work for enamel demineralization that, according to established clinical guidelines, could be arrested through fluoride therapy or dietary modification rather than invasive drilling.
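The trade-off between sensitivity and false positives can be illustrated with a small sketch. The scores and labels below are invented for illustration only; they are not drawn from any real dental AI product, and the function name `confusion_metrics` is hypothetical. The sketch simply shows what happens to the counts when the same set of model scores is read against a stricter or a looser decision threshold.

```python
# Hypothetical model scores (0 to 1) for ten tooth surfaces; NOT real data.
# Label 1 = active caries confirmed on clinical exam, 0 = sound or arrested.
cases = [
    (0.95, 1), (0.85, 1), (0.60, 1), (0.35, 1),
    (0.70, 0), (0.55, 0), (0.45, 0), (0.30, 0), (0.20, 0), (0.10, 0),
]


def confusion_metrics(cases, threshold):
    """Return (sensitivity, specificity, false_positives) at a threshold.

    A surface is flagged as a lesion when its score is >= threshold.
    """
    tp = sum(1 for score, label in cases if label == 1 and score >= threshold)
    fn = sum(1 for score, label in cases if label == 1 and score < threshold)
    fp = sum(1 for score, label in cases if label == 0 and score >= threshold)
    tn = sum(1 for score, label in cases if label == 0 and score < threshold)
    return tp / (tp + fn), tn / (tn + fp), fp


# Compare a strict, a moderate, and a loose threshold on the same scores.
for threshold in (0.8, 0.5, 0.25):
    sens, spec, fp = confusion_metrics(cases, threshold)
    print(
        f"threshold={threshold:.2f} sensitivity={sens:.2f} "
        f"specificity={spec:.2f} false_positives={fp}"
    )
```

On this toy data, the strict threshold of 0.80 flags no sound surfaces but misses half of the active lesions, while the loose threshold of 0.25 catches all four lesions and also flags four of the six sound surfaces. Real systems differ in scale and detail, but the direction of the trade-off is the same.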

“The danger of AI in clinical practice is the ‘black box’ phenomenon. When a clinician abdicates diagnostic judgment to an algorithm without understanding the underlying training set, the patient becomes a revenue target rather than a recipient of evidence-based care.” — Dr. Elena Rossi, Senior Epidemiologist in Digital Health.

Geo-Epidemiological Impact and Regulatory Oversight

The regulatory landscape for these tools varies significantly. In the United States, the Food and Drug Administration (FDA) classifies many dental AI software packages as “Class II medical devices.” This designation requires the manufacturer to demonstrate substantial equivalence to an existing, legally marketed device (a process known as 510(k) clearance). However, this process often focuses on safety rather than the clinical efficacy of the software’s diagnostic suggestions in diverse patient populations.

In the European Union, the Medical Device Regulation (MDR) imposes stricter post-market surveillance requirements. Yet the rapid proliferation of these tools often outpaces the ability of local health authorities to audit the algorithms for bias. Patients in regions with privatized dental care, such as the US and parts of the UK, are at higher risk of “diagnostic upselling” because the software is frequently bundled with practice management systems designed to maximize billable procedures.

| Clinical Metric | Traditional Clinical Exam | AI-Enhanced Assessment |
|---|---|---|
| Diagnostic Basis | Clinical Exam + Radiographs | Radiographic Algorithmic Pattern |
| Primary Bias | Subjective Experience | Training Data/Sensitivity Tuning |
| Intervention Rate | Standard of Care | Increased Risk of Over-treatment |
| Verification | Peer-Reviewed Standards | Proprietary/Black-Box Algorithms |

Funding Transparency and Algorithmic Trust

A critical point of journalistic concern is the funding structure of the software developers. Many of the leading AI dental platforms are funded by private equity firms that also hold stakes in dental service organizations (DSOs). This creates a direct conflict of interest: the developer has a financial incentive to increase the volume of recommended procedures to ensure a return on investment for the parent company. As noted in the Lancet Digital Health, without independent, third-party validation of these algorithms, the medical community lacks the transparency required to ensure patient safety.

Contraindications & When to Consult a Doctor

Patients should exercise caution when presented with a “computer-generated” treatment plan that deviates from their previous history of oral health. You should seek a second opinion if:

- Sudden Diagnostic Shifts: A dentist who has previously monitored your teeth as healthy suddenly finds multiple urgent issues based solely on an AI scan.

- Asymptomatic Recommendations: You are told you need invasive procedures (e.g., crowns, root canals) for teeth that cause you no pain or sensitivity.

- Lack of Clinical Correlation: The dentist cannot explain the diagnosis using visual evidence or a physical probe, relying exclusively on the software’s screen output.

Always verify that your practitioner is using the AI as an adjunct—a supplementary tool—rather than the primary diagnostic authority. A responsible clinician will always prioritize the standard of care, which mandates that treatment must be necessary, evidence-based, and informed by a comprehensive physical examination.

The future of AI in medicine holds immense promise for early detection, but only if the technology remains a servant to clinical expertise. Until these algorithms are subjected to rigorous, independent, blinded comparative trials in which the comparator is a human-only diagnostic review, patients must remain the primary gatekeepers of their own health data.