Korea’s AI Sovereignty Push: Beyond Chips, Towards Integrated Infrastructure Control

South Korea is aggressively pursuing infrastructure sovereignty in the age of AI, recognizing that computational power, energy security, and resilient supply chains are now inextricably linked. This isn’t simply about domestic semiconductor production; it’s a holistic strategy to control the entire stack – from data center cooling to power grid stability – to avoid strategic vulnerabilities in a world increasingly defined by AI-driven competition. The initiative, gaining momentum this week, signals a shift from focusing solely on model development to securing the foundational layers that enable it.

The core realization driving this policy is brutally simple: AI’s insatiable appetite for compute isn’t just straining silicon fabrication capacity; it’s creating unprecedented demands on energy grids and exposing critical dependencies within global supply chains. Korea, heavily reliant on imported energy and components, views this as an existential risk. The current trajectory, if unchecked, could effectively outsource national AI capabilities to nations controlling these essential resources.

The Power Density Problem: Why Existing Infrastructure Fails

Current data center designs, even those employing cutting-edge liquid cooling solutions, are proving inadequate to handle the power density requirements of next-generation AI workloads. The shift toward ever-larger language models (LLMs), some with trillions of parameters, necessitates a fundamental rethink of data center architecture. Traditional power distribution units (PDUs) and cooling systems are hitting their limits. We’re seeing a clear bifurcation: hyperscalers with massive capital expenditure budgets are building bespoke facilities, while everyone else struggles to retrofit existing infrastructure. This leaves everyone outside the hyperscaler tier at a significant competitive disadvantage.

The problem isn’t just about raw wattage. It’s about *power quality* and *thermal management*. Fluctuations in the power grid can lead to data corruption and system instability, particularly in AI training runs that can span weeks or months. Inefficient cooling leads to thermal throttling, reducing performance and increasing energy consumption. The industry is increasingly looking at immersion cooling – submerging servers in dielectric fluid – as a viable solution, but even this has its challenges, including the cost of specialized fluids and the complexity of maintenance. Data Center Knowledge provides a comprehensive overview of these emerging cooling technologies.
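
To make the thermal-management point concrete, here is a minimal Python sketch of the steady-state energy balance commonly used to size a liquid-cooling loop, Q = ṁ·c_p·ΔT. It assumes that essentially all of a rack's electrical draw ends up as heat and that the coolant is water (c_p ≈ 4186 J/(kg·K)); the function name and the rack powers are hypothetical, chosen only for illustration, not figures from any Korean facility.

```python
# Steady-state energy balance for a liquid-cooling loop: Q = m_dot * c_p * dT.
# Assumptions (illustrative): all electrical power drawn by the rack becomes
# heat, the coolant is water, and heat lost to room air is ignored.

WATER_CP_J_PER_KG_K = 4186.0  # approximate specific heat of liquid water


def required_flow_kg_per_s(rack_power_w: float, delta_t_k: float,
                           cp_j_per_kg_k: float = WATER_CP_J_PER_KG_K) -> float:
    """Coolant mass flow (kg/s) that removes rack_power_w with a rise of delta_t_k."""
    if rack_power_w < 0 or delta_t_k <= 0 or cp_j_per_kg_k <= 0:
        raise ValueError("power must be >= 0; delta_t and c_p must be > 0")
    return rack_power_w / (cp_j_per_kg_k * delta_t_k)


if __name__ == "__main__":
    # Hypothetical rack powers, chosen only to show how the required flow scales.
    for kw in (10, 40, 100):
        flow = required_flow_kg_per_s(kw * 1000, delta_t_k=10)
        # 1 kg of water is roughly 1 litre, so kg/s is close to L/s.
        print(f"{kw:>4} kW rack -> {flow:.2f} kg/s of water at a 10 K rise")
```

Because the required flow scales linearly with power at a fixed temperature rise, a rack drawing ten times more power needs ten times the coolant flow (or a proportionally larger temperature rise), which helps explain why retrofitting facilities built for lower densities is so difficult.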

The Semiconductor-Energy Nexus: Korea’s Strategic Play

Korea’s strategy isn’t just about building more chip fabs, although that is a crucial component. It’s about co-locating these fabs with advanced energy storage facilities and integrating them directly into a modernized power grid. Shortening the distance between generation, storage, and load reduces transmission losses, while local storage helps buffer grid fluctuations; paired with domestic renewable generation, this also reduces reliance on imported energy. The government is actively incentivizing the development of microgrids powered by renewable energy sources, specifically designed to support AI infrastructure. This represents a direct response to the geopolitical risks of relying on imported fossil fuels and the potential for supply disruptions.
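
Why distance matters can be illustrated with the textbook resistive-loss relation P_loss = I²R, where I = P/V. The Python sketch below is a simplification under stated assumptions: a single DC-equivalent conductor with uniform resistance per kilometre, and hypothetical values for load, voltage, and line resistance. Real AC grids also involve reactive power, power factor, and three-phase effects that it ignores, and the function name is illustrative only.

```python
# Simplified resistive-loss model: P_loss = I^2 * R with I = P / V.
# Assumptions (illustrative): one DC-equivalent conductor, uniform resistance
# per km, and no reactive power, power-factor, or three-phase effects.


def line_loss_fraction(power_w: float, voltage_v: float,
                       ohms_per_km: float, length_km: float) -> float:
    """Fraction of transmitted power dissipated as heat in the line."""
    if voltage_v <= 0 or ohms_per_km < 0 or length_km < 0:
        raise ValueError("voltage must be positive; resistance and length non-negative")
    if power_w == 0:
        return 0.0
    current_a = power_w / voltage_v             # I = P / V
    resistance_ohm = ohms_per_km * length_km    # R grows with line length
    loss_w = current_a ** 2 * resistance_ohm    # P_loss = I^2 * R
    return loss_w / power_w                     # equivalent to P * R / V^2


if __name__ == "__main__":
    # Hypothetical values: a 50 MW load fed at 154 kV over a 0.05 ohm/km line.
    for km in (100, 5):
        frac = line_loss_fraction(50e6, 154e3, 0.05, km)
        print(f"{km:>4} km line -> {frac:.3%} of power lost")
```

Under this model the lost fraction equals P·R/V², so it grows linearly with line length: cutting the distance twentyfold cuts the resistive loss twentyfold, all else being equal.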

The focus on memory semiconductors – specifically DRAM and NAND flash – is particularly astute. These components are essential for AI training and inference, and Korea currently dominates the global market. However, maintaining that dominance requires continuous investment in advanced manufacturing processes and a secure supply of critical materials. The recent US CHIPS Act and similar initiatives in Europe are aimed at reshoring semiconductor manufacturing, but Korea is taking a more comprehensive approach by addressing the entire ecosystem.

What This Means for Enterprise IT

For enterprises, Korea’s push for infrastructure sovereignty has several implications. First, it will likely lead to increased competition in the AI infrastructure market. Korean companies are well-positioned to offer integrated solutions – from chips to cooling systems to power management – that are optimized for AI workloads. Second, it will accelerate the adoption of energy-efficient technologies. The demand for sustainable AI infrastructure will drive innovation in areas such as liquid cooling, renewable energy, and smart grid technologies. Third, it will highlight the importance of supply chain resilience. Enterprises will need to diversify their sourcing of critical components and build stronger relationships with suppliers.

The move also has implications for open-source AI development. Korea is actively promoting the use of open-source frameworks and tools, recognizing that this fosters innovation and reduces vendor lock-in. However, it’s also investing heavily in its own proprietary AI technologies, creating a potential tension between open-source collaboration and national strategic interests.

The Role of NPUs and Edge Computing in Korea’s Vision

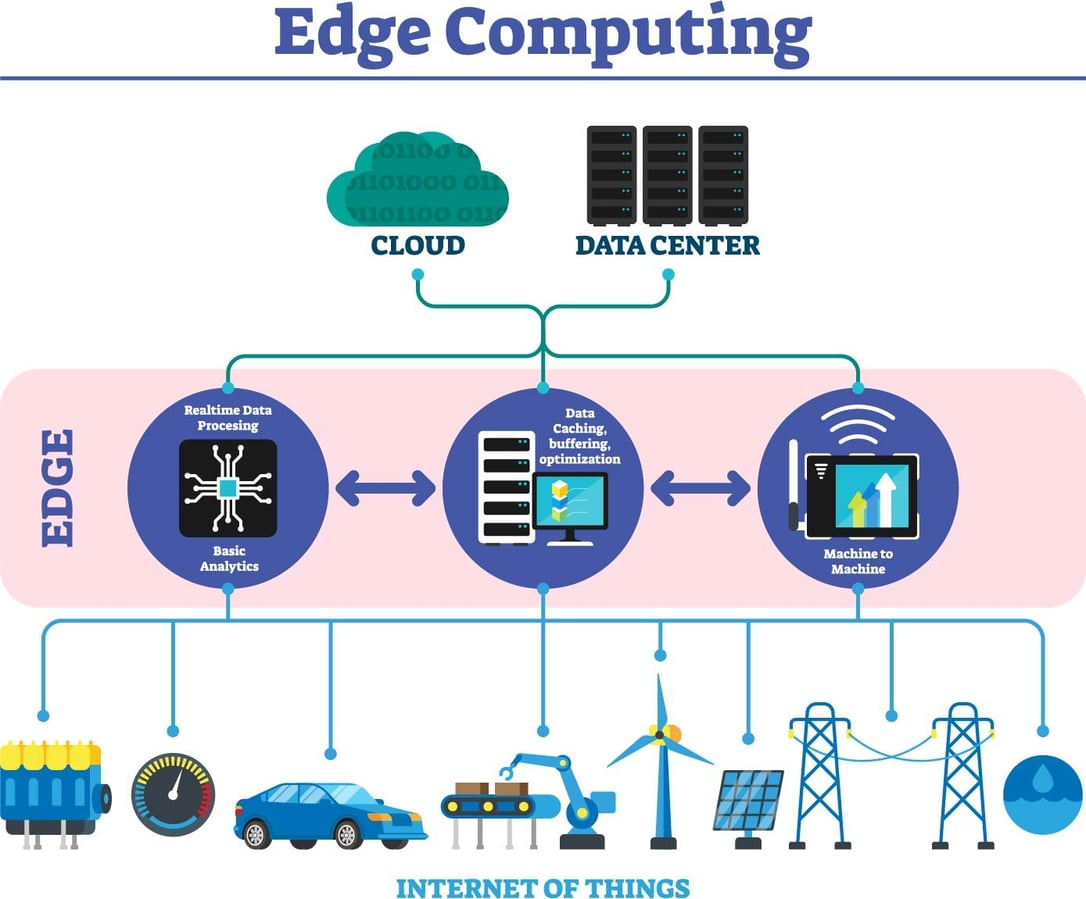

Korea isn’t solely focused on centralized AI infrastructure. It’s also investing heavily in edge computing and the development of Neural Processing Units (NPUs). NPUs are specialized processors designed to accelerate AI workloads, particularly inference. By deploying AI models closer to the data source – at the edge of the network – Korea aims to reduce latency, improve privacy, and conserve bandwidth. This is particularly important for applications such as autonomous vehicles, smart factories, and healthcare.
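
The latency and bandwidth arguments for edge inference can be bounded with simple arithmetic. This Python sketch assumes signals travel through optical fibre at roughly 200,000 km/s and ignores queuing, routing, and processing delays, so it gives a floor rather than a realistic latency. The distances, the uncompressed 1080p camera stream, and the function names are hypothetical examples, not measurements.

```python
# Back-of-envelope bounds on why edge inference saves latency and bandwidth.
# Assumptions (illustrative): fibre propagation at ~200,000 km/s, no queuing
# or processing delay, and an uncompressed camera stream as the worst case.

FIBRE_KM_PER_MS = 200.0  # ~200,000 km/s expressed as km per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip time (ms) over fibre; a lower bound."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2 * distance_km / FIBRE_KM_PER_MS


def raw_video_mbps(width: int, height: int, bytes_per_pixel: int, fps: int) -> float:
    """Bit rate (Mbit/s) of an uncompressed video stream."""
    return width * height * bytes_per_pixel * fps * 8 / 1e6


if __name__ == "__main__":
    # Hypothetical distances: on-site edge box, domestic data center, overseas region.
    for label, km in (("edge node", 1), ("domestic DC", 300), ("overseas DC", 9000)):
        print(f"{label:>12}: >= {min_round_trip_ms(km):.2f} ms round trip")
    # Shipping raw frames to a distant server vs. sending only inference results.
    print(f"raw 1080p30 stream: {raw_video_mbps(1920, 1080, 3, 30):.0f} Mbit/s")
```

An uncompressed 1080p stream at 30 frames per second works out to roughly 1.5 Gbit/s, whereas a device with an on-board NPU can send upstream only its much smaller inference results; the round-trip floor likewise grows with every kilometre between the sensor and the model.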

The integration of NPUs into edge devices requires a robust software stack and a secure development environment. Korea is actively working to establish standards for NPU programming and security, and it’s collaborating with international partners to ensure interoperability. The shift towards edge computing also necessitates a new approach to cybersecurity, as edge devices are often more vulnerable to attack than centralized servers.

“The biggest challenge isn’t building the hardware; it’s building the software and the security layers around it. We need to ensure that AI systems are not only powerful but also trustworthy and resilient.” – Dr. Ji-hoon Kim, CTO of AI Solutions Korea, speaking at the Korea AI Summit last month.

The architectural shift toward NPUs is also feeding into the ARM vs. x86 debate. While x86 has historically dominated server infrastructure, ARM-based processors are gaining traction in edge computing and AI applications thanks to their generally better power efficiency. Korea is actively supporting the development of ARM-based NPUs, seeing them as a way to improve performance per watt.

The 30-Second Verdict

Korea’s AI infrastructure strategy is a bold and ambitious attempt to secure its future in the age of artificial intelligence. It’s a comprehensive approach that addresses not only the hardware and software but also the energy and supply chain challenges. This isn’t just a national initiative; it’s a blueprint for other nations seeking to maintain their competitiveness in the global AI landscape.

The success of this strategy will depend on Korea’s ability to foster innovation, attract investment, and collaborate with international partners. Whatever the outcome, one thing is clear: the center of gravity in AI is shifting, and Korea is determined to be at the forefront of this transformation. IEEE Transactions on Power Systems offers detailed research on grid modernization and its impact on data center operations.

The implications extend beyond Korea. The global “chip war” is intensifying, and nations are increasingly viewing AI infrastructure as a strategic asset. The race to secure control of the AI stack is on, and the stakes are higher than ever. Ars Technica’s coverage of the US-China semiconductor conflict provides valuable context.

Finally, the ethical considerations surrounding AI infrastructure cannot be ignored. The environmental impact of AI training and inference is significant, and it’s crucial to develop sustainable solutions. The potential for bias in AI algorithms is also a concern, and it’s important to ensure that AI systems are fair and equitable. OpenAI’s Responsible AI principles, while originating from a specific company, highlight the broader industry conversation.