Phoenix’s Rising Tide of Animal Hoarding: A Data-Driven Crisis Demanding Predictive Analytics

Animal hoarding incidents are surging across the Phoenix metropolitan area, according to data compiled this week. The Humane Society of Arizona reports a 35% increase in cases over the past year, straining local resources and raising critical questions about the intersection of mental health, social isolation, and the potential for leveraging AI-powered predictive modeling to identify and intervene *before* conditions escalate. This isn’t simply an animal welfare issue; it’s a complex socio-technical problem ripe for data science intervention.

The current response is largely reactive, relying on neighbor complaints and overwhelmed animal control services. This approach is inefficient and, frankly, inhumane. The core issue isn’t a lack of compassion, but a lack of foresight. We’re missing the signal in the noise. The data exists – fragmented across various agencies, from property records and utility usage to social services interactions and even online purchasing patterns of bulk pet supplies – but it’s siloed and underutilized.

The Data Silos and the Promise of Federated Learning

The biggest hurdle isn’t the *amount* of data, but its accessibility. Privacy concerns and bureaucratic inertia prevent a centralized database. This is where federated learning (McMahan et al., 2017) offers a compelling solution. Federated learning trains a shared machine learning model across multiple decentralized devices or servers, each holding its own local data. Participants send back only model updates, never the raw records. Imagine a system where animal control, social services, and even local retailers (with appropriate consent and anonymization protocols) could contribute to a shared model without compromising individual privacy.
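To make the mechanics concrete, here is a minimal sketch of federated averaging in the spirit of McMahan et al. (2017), written in plain Python. The two agencies, their data, the one-feature linear model, and the function names `local_update` and `fed_avg` are all hypothetical. A real deployment would use a federated learning framework, a far richer model, and secure transport. The sketch only shows the core loop: each participant trains on data that never leaves it, and a coordinating server averages the returned parameters, weighted by how many samples each participant holds.

```python
from typing import List, Tuple

Params = Tuple[float, float]         # (weight, bias) of a one-feature linear model
Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave the client


def local_update(params: Params, data: Dataset,
                 lr: float = 0.01, epochs: int = 5) -> Params:
    """A few epochs of plain SGD on one client's private data (squared error)."""
    w, b = params
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y        # prediction error for this sample
            w -= lr * 2 * err * x        # gradient of err**2 with respect to w
            b -= lr * 2 * err            # gradient of err**2 with respect to b
    return w, b


def fed_avg(global_params: Params, clients: List[Dataset], rounds: int = 200) -> Params:
    """Each round: every client trains locally from the current global model,
    then the server averages the returned parameters weighted by sample count."""
    for _ in range(rounds):
        results = [(local_update(global_params, data), len(data)) for data in clients]
        total = sum(n for _, n in results)
        w = sum(p[0] * n for p, n in results) / total
        b = sum(p[1] * n for p, n in results) / total
        global_params = (w, b)
    return global_params


if __name__ == "__main__":
    # Hypothetical clients; both datasets happen to follow y = 2x + 1.
    agency_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
    agency_b = [(3.0, 7.0), (4.0, 9.0)]
    w, b = fed_avg((0.0, 0.0), [agency_a, agency_b])
    print(f"w = {w:.2f}, b = {b:.2f}")  # should approach w = 2, b = 1
```

Note that the server only ever receives `(w, b)` pairs. The raw `(x, y)` records stay inside `local_update`.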

The model itself wouldn’t be looking for “hoarders” directly. That’s a problematic and stigmatizing approach. Instead, it would identify patterns of behavior correlated with increased risk – a sudden spike in pet food purchases, unusually high water consumption, a history of social isolation flagged by social service agencies, or even changes in property maintenance detected through satellite imagery analysis. The key is to focus on *indicators*, not accusations.
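As a rough illustration of indicator-based (rather than person-based) flagging, the sketch below scores how far the latest weekly value of each signal sits above its own history, using a simple z-score. The indicator names, the sample numbers, and the 3.0 threshold are hypothetical and uncalibrated. A production system would need validated features and thresholds. The output is a list of anomalous indicators to route to a human, not a label on any individual.

```python
from statistics import mean, stdev
from typing import Dict, List


def spike_score(history: List[float], latest: float) -> float:
    """Number of standard deviations `latest` sits above the historical mean."""
    mu = mean(history)
    sigma = stdev(history)               # sample standard deviation; needs >= 2 points
    if sigma == 0:
        # A perfectly flat history: any increase is an unbounded spike.
        return float("inf") if latest > mu else 0.0
    return (latest - mu) / sigma


def flag_indicators(series: Dict[str, List[float]], threshold: float = 3.0) -> List[str]:
    """Return the names of indicators whose most recent value spikes above baseline.
    The threshold is an illustrative assumption, not a validated cutoff."""
    flagged = []
    for name, values in series.items():
        *history, latest = values
        if len(history) >= 2 and spike_score(history, latest) >= threshold:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    weekly = {  # hypothetical, already de-identified weekly aggregates
        "pet_food_units": [4, 5, 4, 6, 5, 30],
        "water_kgal": [3.1, 3.0, 3.2, 2.9, 3.1, 3.2],
    }
    print(flag_indicators(weekly))  # ['pet_food_units']
```

A z-score is deliberately crude. Its value here is that each flag is easy to explain to the person reviewing it.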

Beyond Correlation: The Role of LLMs in Behavioral Analysis

Simple correlation isn’t enough. We need to understand the *why* behind the data. This is where Large Language Models (LLMs) come into play. Analyzing free-text reports from social workers, animal control officers, and even online forum posts (again, with strict privacy safeguards) can reveal nuanced patterns of behavior that traditional statistical methods would miss. For example, an LLM could identify subtle linguistic cues indicative of increasing distress, social withdrawal, or a growing emotional attachment to animals as a substitute for human connection.

However, the ethical implications are significant. LLMs are prone to bias, and misinterpreting language could lead to false positives and unwarranted interventions. The training data must be carefully curated to mitigate these risks, and any AI-driven recommendations should always be reviewed by a human professional. We’re talking about people’s lives, and algorithmic accountability is paramount.
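One way to make the human-review requirement structural rather than aspirational is to ensure the model can only enqueue recommendations, never act on them. The following is a minimal sketch under that assumption. `Recommendation`, `ReviewQueue`, and the status strings are hypothetical names, and a real system would add persistence, access control, and tamper-evident logging. Every submission and decision is timestamped in an audit log so that each outcome can be traced back to a named reviewer.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Recommendation:
    """A model-generated flag. It is advisory only until a human signs off."""
    case_id: str
    risk_score: float
    rationale: str                       # model's explanation, shown to the reviewer
    status: str = "pending_review"
    reviewer: Optional[str] = None


@dataclass
class ReviewQueue:
    items: List[Recommendation] = field(default_factory=list)
    audit_log: List[str] = field(default_factory=list)

    def submit(self, rec: Recommendation) -> None:
        """Model output enters the queue; nothing is acted on at this point."""
        self.items.append(rec)
        self._log(f"submitted {rec.case_id} score={rec.risk_score:.2f}")

    def decide(self, case_id: str, reviewer: str, approve: bool) -> Recommendation:
        """A named human reviewer approves or rejects a pending item, exactly once."""
        if not reviewer:
            raise ValueError("a named reviewer is required")
        rec = next((r for r in self.items if r.case_id == case_id), None)
        if rec is None:
            raise KeyError(case_id)
        if rec.status != "pending_review":
            raise ValueError(f"{case_id} has already been decided")
        rec.status = "approved" if approve else "rejected"
        rec.reviewer = reviewer
        self._log(f"{rec.status} {case_id} by {reviewer}")
        return rec

    def actionable(self) -> List[Recommendation]:
        """Only human-approved items may trigger any follow-up."""
        return [r for r in self.items if r.status == "approved"]

    def _log(self, message: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} {message}")


if __name__ == "__main__":
    queue = ReviewQueue()
    queue.submit(Recommendation("case-001", 0.82, "repeated references to isolation"))
    queue.submit(Recommendation("case-002", 0.91, "large recurring supply purchases"))
    queue.decide("case-002", reviewer="caseworker_17", approve=False)
    print([r.case_id for r in queue.actionable()])  # []
    print(len(queue.audit_log))                     # 3
```

The design choice is that a high `risk_score` changes nothing by itself. Only `decide`, which requires a reviewer's name, can make an item actionable.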

“The challenge isn’t building the AI; it’s building the trust. We need to demonstrate that these systems are fair, transparent, and accountable, and that they prioritize human well-being above all else.”

Dr. Anya Sharma, CTO, Ethical AI Solutions

The Hardware Bottleneck: Edge Computing and NPU Acceleration

Running these workloads (federated training and LLM inference) requires significant computational power. Centralized cloud processing introduces latency and bandwidth limitations, especially in rural areas. The solution lies in edge computing: deploying AI models directly onto local devices such as smartphones, tablets, or dedicated edge servers at animal control facilities.

This is where Neural Processing Units (NPUs) become critical. NPUs, such as the Neural Engine in Apple’s A17 Pro chip or the Hexagon NPU in Qualcomm’s Snapdragon X Elite, are designed specifically to accelerate AI workloads. For tasks like image recognition, natural language processing, and model inference, they typically deliver much better energy efficiency than general-purpose CPUs and GPUs. The shift towards on-device AI isn’t just about speed; it’s about privacy and resilience. Processing data locally reduces the risk of data breaches and keeps services available even when internet connectivity is unreliable.

Consider the architectural differences. A typical cloud-based LLM inference pipeline involves a network round trip to a remote server, multiple layers of data serialization and deserialization, and the latency that comes with both. An NPU-accelerated edge deployment can run inference directly on the device, typically with a smaller or compressed model, with minimal latency and no reliance on external connectivity. The difference is stark.
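Edge deployments usually depend on model compression, and many NPUs are optimized for low-precision integer arithmetic. The sketch below shows symmetric per-tensor int8 quantization in plain Python to illustrate the general idea. Weights are mapped to integers in [-127, 127] with one floating-point scale, the heavy arithmetic runs on integers, and the result is rescaled once at the end. This is an assumption-laden illustration, not the toolchain of any particular vendor. Real NPU compilers often use per-channel scales, zero points, calibration data, and fused kernels.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: one scale maps max|w| to 127."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() to nearest, then clamp so every value fits the signed 8-bit range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate floats; per-element error is about scale / 2 at most."""
    return [v * scale for v in q]


def int8_dot(qa: List[int], sa: float, qb: List[int], sb: float) -> float:
    """Dot product accumulated in integers (as integer NPU kernels typically do),
    then rescaled once by the product of the two scales."""
    acc = sum(x * y for x, y in zip(qa, qb))
    return acc * sa * sb


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.03, 0.9]        # hypothetical layer weights
    activations = [1.0, 2.0, -0.4, 0.3]      # hypothetical input activations
    qw, sw = quantize_int8(weights)
    qx, sx = quantize_int8(activations)
    exact = sum(w * x for w, x in zip(weights, activations))
    approx = int8_dot(qw, sw, qx, sx)
    print(qw, round(exact, 4), round(approx, 4))
```

Storing one byte per weight instead of four (float32) cuts memory and bandwidth roughly fourfold, at the cost of the small rounding error the example prints.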

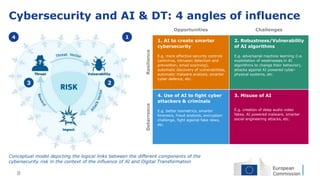

The Cybersecurity Angle: Protecting Sensitive Data in a Decentralized System

A federated learning system, by its very nature, introduces new cybersecurity vulnerabilities. Each participating node represents a potential attack surface. Protecting sensitive data requires a multi-layered approach, including end-to-end encryption, differential privacy, and robust access control mechanisms. Differential privacy adds carefully calibrated noise to the data, or to the model updates, so that individual records cannot be identified, while still allowing the model to learn meaningful aggregate patterns.
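The simplest instance of differential privacy is the Laplace mechanism applied to a count. Assume a node releases only an aggregate, such as a hypothetical number of welfare complaints in a ZIP code, rather than raw records. Adding or removing one person changes such a count by at most 1, so its sensitivity is 1. Adding noise drawn from Laplace(0, sensitivity / epsilon) then yields epsilon-differential privacy. The epsilon values below are illustrative only. In federated training, the more common pattern is to clip each model update and add noise to it, but the trade-off works the same way: smaller epsilon means stronger privacy and more noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two Exponential draws,
    each with mean `scale` (rate 1 / scale)."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Release a count via the Laplace mechanism with noise scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(2024)  # seeded only so the demo is repeatable
    for eps in (0.1, 1.0, 5.0):
        print(eps, round(private_count(12, eps, rng), 2))
```

This is a teaching sketch. A real deployment would draw noise from a cryptographically secure source rather than Python's `random` module, and would track the cumulative privacy budget across repeated releases.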

In addition, the communication channels between nodes must be secured against man-in-the-middle attacks and data tampering, for example with authenticated, encrypted transport. Secure aggregation protocols add a further layer: each node masks or encrypts its model update so that the server can recover only the combined result, never an individual contribution. Regular security audits and penetration testing are also essential to identify and address vulnerabilities.
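The core idea behind secure aggregation can be shown with pairwise masking, a heavily simplified version of the approach used in practical secure aggregation protocols (Bonawitz et al., 2017). Each pair of nodes shares a random mask. The lower-numbered node adds it and the higher-numbered node subtracts it, so every mask cancels in the sum. The server therefore sees only random-looking vectors, yet recovers the exact total. This toy version assumes integer (fixed-point encoded) updates modulo 2^32. It omits key agreement, dropout recovery, and authentication, all of which a real protocol needs.

```python
import random
from typing import Dict, List, Tuple

MOD = 2 ** 32  # updates are assumed to be fixed-point encoded integers mod 2**32


def pairwise_masks(n_nodes: int, dim: int, seed: int = 0) -> Dict[Tuple[int, int], List[int]]:
    """One shared random mask per pair (i < j). In a real protocol each pair would
    derive this from a key agreement, and the server would never see it."""
    rng = random.Random(seed)
    return {(i, j): [rng.randrange(MOD) for _ in range(dim)]
            for i in range(n_nodes) for j in range(i + 1, n_nodes)}


def mask_update(node: int, update: List[int],
                masks: Dict[Tuple[int, int], List[int]], n_nodes: int) -> List[int]:
    """Add the mask for each higher-numbered peer, subtract it for each lower one."""
    masked = list(update)
    for other in range(n_nodes):
        if other == node:
            continue
        pair = (min(node, other), max(node, other))
        sign = 1 if node < other else -1
        masked = [(m + sign * r) % MOD for m, r in zip(masked, masks[pair])]
    return masked


def aggregate(masked_updates: List[List[int]]) -> List[int]:
    """Server-side sum: the pairwise masks cancel, leaving the true total mod MOD."""
    return [sum(col) % MOD for col in zip(*masked_updates)]


if __name__ == "__main__":
    updates = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]  # hypothetical node updates
    masks = pairwise_masks(n_nodes=3, dim=3)
    masked = [mask_update(i, u, masks, 3) for i, u in enumerate(updates)]
    print(aggregate(masked))  # [111, 222, 333]
```

Any single masked vector is indistinguishable from random noise on its own. Only the sum across all participating nodes is meaningful, which is why real protocols must also handle nodes that drop out mid-round.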

What This Means for Enterprise IT

The lessons learned from this application of AI to animal hoarding extend far beyond animal welfare. The challenges of data siloing, privacy concerns, and the need for edge computing are common across many industries, including healthcare, public safety, and environmental monitoring. The federated learning and NPU-acceleration strategies outlined here can be adapted to address similar problems in other domains.

The rise of edge AI is fundamentally changing the landscape of enterprise IT. Organizations are increasingly moving compute power closer to the data source, reducing latency, improving security, and enabling new applications that were previously impractical. This requires a shift in mindset, from centralized cloud-based architectures to distributed, edge-centric models.

“We’re seeing a massive investment in edge infrastructure, driven by the demand for real-time AI and the need to protect sensitive data. The future of computing is distributed, and NPUs are the key enabler.”

Kenji Tanaka, Lead Architect, Arm Holdings

The situation in Phoenix isn’t just a local crisis; it’s a microcosm of a larger societal challenge. By embracing data-driven solutions and leveraging the power of AI, we can move from reactive crisis management to proactive prevention, not just for animal welfare, but for the well-being of our communities as a whole. The technology is here; the question is whether we have the will to use it responsibly and effectively.

The 30-Second Verdict: AI-powered predictive modeling, coupled with federated learning and edge computing, offers a viable path towards addressing the rising tide of animal hoarding, but ethical considerations and robust cybersecurity measures are paramount.