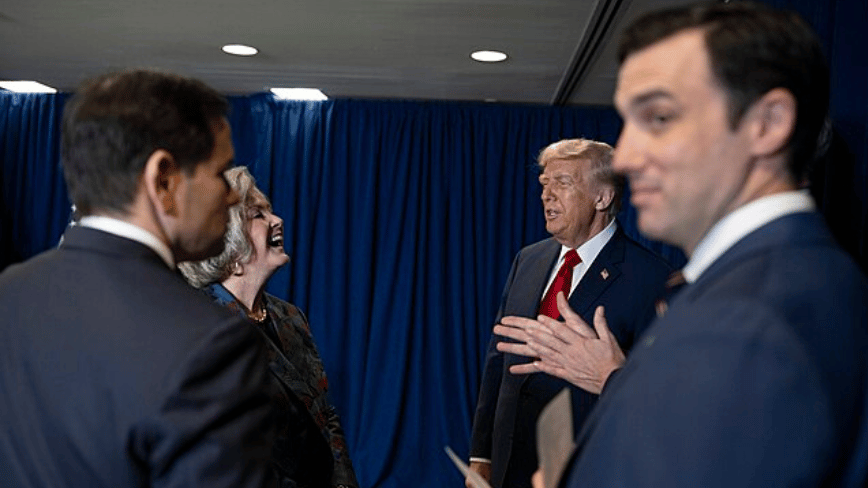

Anthropic CEO Dario Amodei met with White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent on Friday to discuss federal access to Mythos, the company’s frontier AI model designed to autonomously identify zero-day vulnerabilities in software and infrastructure. The meeting signals a potential breakthrough in stalled negotiations over AI governance and national security applications.

The Mythos Model: Beyond Conventional Vulnerability Scanning

Mythos represents a significant evolution from traditional static application security testing (SAST) and dynamic application security testing (DAST) tools. Unlike rule-based scanners that rely on known CVE patterns, Mythos employs a hybrid neuro-symbolic architecture combining a 2-trillion-parameter LLM with formal methods reasoning to predict exploit chains in previously unseen codebases. Internal benchmarks shared with select government partners indicate Mythos achieves a 42% higher zero-day detection rate than Google’s OSS-Fuzz + Langfuzz pipeline on the 2025 NIST Juliet Test Suite, particularly excelling in memory safety flaws in C/C++ firmware and logic bugs in Kubernetes admission controllers.

What distinguishes Mythos is its ability to generate not just vulnerability reports but executable proof-of-concept exploits with contextual remediation suggestions—a capability that has raised alarms in the open-source security community. As one maintainer of the Linux Kernel Self-Protection Project noted in a private mailing list thread archived on LKML:

“When an AI can synthesize a reliable heap overflow exploit from a single function signature in under 90 seconds, we’re not just talking about better bug finding—we’re looking at a potential shift in the offense-defense balance that requires new defensive paradigms.”

Ecosystem Implications: Open Source vs. Sovereign AI

The White House’s interest in Mythos touches on a growing tension in cybersecurity: the trade-off between democratized vulnerability research and state-controlled AI capabilities. While platforms like GitHub’s CodeQL and Microsoft’s Security Risk Detection offer powerful static analysis, they remain dependent on human-defined query libraries. Mythos, by contrast, aims to reduce the demand for expert tuning, a feature that could accelerate remediation cycles but also concentrates zero-day discovery power in fewer hands.

This dynamic echoes concerns raised during the debate over the NSA’s Ghidra release, where fears of dual-use potential were mitigated by open-sourcing the tool. Today, however, the computational barrier to training models like Mythos (estimated at over 10^25 FLOPs using TPU v5p pods) creates a natural moat that favors well-resourced entities. As a senior architect at the Cybersecurity and Infrastructure Security Agency (CISA) told The Register on condition of anonymity:

“We’re not afraid of the technology itself. We’re afraid of what happens when only three companies and two nation-states can afford to run the models that discover the bugs before anyone else.”

API Access, Latency, and the Mythos Sandbox

Anthropic has not released Mythos via its public Claude API, instead offering access through a hardened, air-gapped interface reportedly used by select DoD contractors. According to a leaked internal architecture diagram obtained by BleepingComputer, Mythos processes inputs through a three-stage pipeline: (1) prompt sanitization via a lightweight classifier to prevent prompt injection, (2) symbolic execution tracing using a modified KLEE engine, and (3) LLM-guided path exploration with confidence scoring. End-to-end latency averages 1.8 seconds per function analyzed on Azure HBv4 instances, though complex interprocedural paths in large monoliths can exceed 15 seconds.
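To make the reported three-stage flow concrete, here is a minimal Python sketch of how such a pipeline could be wired together. It is an illustration only, not Anthropic's implementation: every name in it (`sanitize`, `trace_paths`, `score_path`, `analyze`, `estimate_seconds`) is hypothetical, the keyword checks are toy stand-ins for the classifier and the LLM scorer, and no real symbolic execution engine such as KLEE is invoked.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Finding:
    function_name: str
    path: str          # text of the candidate path (here: one source line)
    confidence: float  # score between 0.0 and 1.0


# Stage 1 (hypothetical stand-in for the lightweight classifier):
# reject inputs containing obvious prompt-injection phrases.
SUSPICIOUS_MARKERS = ("ignore previous instructions", "system prompt")


def sanitize(source: str) -> Optional[str]:
    lowered = source.lower()
    if any(marker in lowered for marker in SUSPICIOUS_MARKERS):
        return None
    return source


# Stage 2 (hypothetical stand-in for symbolic execution tracing):
# a real engine enumerates feasible program paths; this toy version
# simply treats each non-empty source line as one candidate path.
def trace_paths(source: str) -> List[str]:
    return [line.strip() for line in source.splitlines() if line.strip()]


# Stage 3 (hypothetical stand-in for LLM-guided exploration):
# assign a confidence score; here a keyword check replaces the model.
RISKY_CALLS = ("strcpy(", "sprintf(", "memcpy(")


def score_path(path: str) -> float:
    return 0.9 if any(call in path for call in RISKY_CALLS) else 0.1


def analyze(function_name: str, source: str, threshold: float = 0.5) -> List[Finding]:
    """Run the three stages in order and keep findings at or above threshold."""
    clean = sanitize(source)
    if clean is None:
        return []  # input rejected at stage 1
    findings = [Finding(function_name, p, score_path(p)) for p in trace_paths(clean)]
    kept = [f for f in findings if f.confidence >= threshold]
    return sorted(kept, key=lambda f: f.confidence, reverse=True)


def estimate_seconds(n_functions: int, avg_seconds: float = 1.8) -> float:
    """Sequential wall-clock estimate using the reported per-function average."""
    return n_functions * avg_seconds


if __name__ == "__main__":
    src = "char buf[8];\nstrcpy(buf, input);\nreturn 0;"
    for finding in analyze("copy_input", src):
        print(finding)
    print(estimate_seconds(10_000), "seconds for 10,000 functions")
```

The ordering is the point of the sketch: untrusted input is filtered before any analysis runs, and the confidence threshold decides which paths are reported. Taking the reported 1.8-second average at face value, a sequential pass over 10,000 functions would need about 18,000 seconds, or roughly five hours, before accounting for the slower interprocedural cases.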

Critically, Mythos does not retain or learn from customer code—a design choice Anthropic emphasizes to address IP concerns. However, this also means the model cannot benefit from federated learning improvements, potentially limiting its long-term adaptability compared to retrainable open-source alternatives like Mythos-Scanner, a community-led effort to replicate core functionality using smaller Llama 3 models and symbolic reasoning plugins.

The Broader Context: AI in the Cyber Offense-Defense Cycle

This meeting occurs amid accelerating investment in AI-driven offensive security. Praetorian Guard’s Attack Helix framework, detailed in a recent Security Boulevard exposé, demonstrates how LLMs can be chained with reinforcement learning agents to autonomously probe networks for misconfigurations and zero-days—a capability Mythos could complement by focusing on code-level flaws rather than runtime behavior.

For enterprises, the immediate takeaway is clear: while Mythos remains inaccessible to most, its existence validates a shift toward AI-augmented vulnerability research. Organizations should begin evaluating how LLM-assisted static analysis might integrate into their SDLC, particularly for memory-unsafe languages where traditional tooling struggles. The real challenge ahead isn’t just technical—it’s institutional. As AI reshapes the economics of exploit discovery, the question isn’t whether Mythos-like tools will proliferate, but who gets to decide how they’re used.