Apple has discontinued the entry-level Mac Mini, removing the 256GB storage configuration and raising the starting price to $799. This strategic pivot follows an unexpected surge in AI-driven demand for high-spec Mac hardware, effectively eliminating the budget gateway to the macOS ecosystem to prioritize high-margin, AI-capable configurations.

For years, the Mac Mini served as the industry’s most efficient “price-to-performance” sleeper. It was the go-to for home-lab enthusiasts, developers building lightweight CI/CD pipelines, and users who preferred their own peripherals. By killing the cheapest SKU, Apple isn’t just adjusting a price tag; it is fundamentally redefining the Mac Mini’s role from a budget desktop to a professional AI workstation.

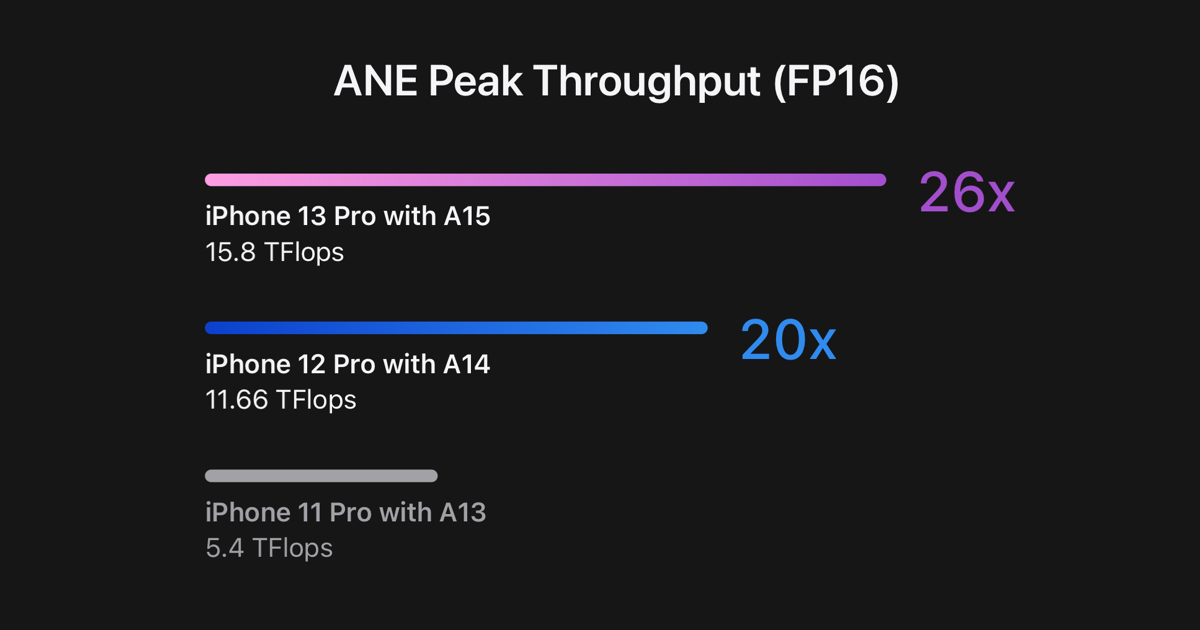

The catalyst here is the “AI Tax.” As local large language models (LLMs) and generative AI workflows move from the cloud to the edge, the hardware requirements have shifted. The 256GB storage and base-level unified memory configurations, once sufficient for general productivity, are now bottlenecks. Apple silicon relies on a unified memory architecture (UMA), in which the CPU, GPU, and Neural Engine (Apple’s NPU) share a single pool of high-bandwidth memory. When you run a local model, its weights occupy that shared pool, leaving less for the operating system and every other app. The entry-level machine simply couldn’t keep up with the appetite of the new AI-centric developer.

The Architecture of an AI Bottleneck

The shift to a $799 floor is a direct response to what TechCrunch identifies as AI-driven demand that caught Apple by surprise. From a technical standpoint, this is about the Neural Engine’s efficiency. While Apple’s NPUs are world-class in terms of TOPS (tera operations per second), they are nothing without the memory bandwidth to feed them. Local AI execution requires significant VRAM (or, in Apple’s case, unified memory) to hold model weights.
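To put rough numbers on that memory appetite, the sketch below estimates how much space model weights alone occupy at a given quantization level. It is a back-of-the-envelope calculation, not a measurement: the function names and the 25% headroom reserved for macOS and other apps are assumptions chosen for illustration, and the estimate ignores the KV cache and activation memory, which add to the total.

```python
def weights_footprint_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_unified_memory(
    params_billions: float,
    bits_per_weight: float,
    unified_memory_gib: float,
    reserved_fraction: float = 0.25,  # assumed headroom for the OS and other apps
) -> bool:
    """Return True if the weights fit in the memory left after the reserved share."""
    usable_gib = unified_memory_gib * (1 - reserved_fraction)
    return weights_footprint_gib(params_billions, bits_per_weight) <= usable_gib


if __name__ == "__main__":
    # Illustrative case: an 8-billion-parameter model on a 16 GiB machine.
    for bits in (16, 8, 4):
        size = weights_footprint_gib(8, bits)
        ok = fits_in_unified_memory(8, bits, unified_memory_gib=16)
        print(f"{bits:>2}-bit weights: {size:5.1f} GiB, fits in 16 GiB: {ok}")
```

Under these assumptions, an 8-billion-parameter model needs about 14.9 GiB at 16-bit precision and about 3.7 GiB when quantized to 4 bits, which is why quantization and memory capacity dominate the conversation around local inference.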

By forcing users into higher-tier storage and memory configurations, Apple is ensuring that the “baseline” experience for Apple Intelligence and third-party AI tools remains performant. A 256GB drive, which often suffers from slower read/write speeds due to fewer NAND chips working in parallel, is a liability in a world of multi-gigabyte model weights.

The Price Floor Shift

| Configuration Detail | Previous Entry-Level | New Entry-Level |
|---|---|---|
| Starting Price | $599 / $699 | $799 |
| Base Storage | 256GB SSD | 512GB SSD |
| Primary Target | General Consumer / Student | Prosumer / AI Developer |
| Availability | Widely Available | Limited / Backordered |

This isn’t happening in a vacuum. It’s a calculated move in the broader “chip wars.” While Intel and AMD are pushing “AI PCs” with dedicated NPUs, Apple is leveraging the vertical integration of its SoC (System on Chip) to lock users into a higher spending bracket. If you want the efficiency of ARM-based computing for your AI workloads, you now have to pay a premium entrance fee.

Supply Chain Friction and the ‘Several Months’ Gap

The discontinuation of the cheap model coincides with a worrying trend in availability. Reports from WIRED and Ars Technica indicate that getting a Mac Mini, or even a Mac Studio, has become an exercise in patience, with some lead times stretching into several months.

This suggests a twofold problem: a sudden spike in demand for the remaining high-spec units and a potential transition in the manufacturing pipeline. We are likely seeing the tail end of one silicon cycle and the friction of ramping up the next. When demand for AI-capable hardware exceeds the production capacity of TSMC’s fabrication plants, the first thing to go is the low-margin product. Apple appears to be allocating its limited silicon wafers to the machines that generate the most profit per unit.

“The industry is seeing a massive migration toward local inference. When developers realize they can run a quantized Llama-3 model locally on a Mac rather than paying per-token API costs, the demand for unified memory skyrockets. Apple is simply pruning the product line to match the actual usage patterns of the current market,” says Marcus Thorne, Lead Systems Architect at NeuralFlow.
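The trade-off between per-token API costs and a one-time hardware purchase can be sketched as a simple break-even calculation. This is a minimal illustration under stated assumptions: the function name is hypothetical, the API price is a placeholder rather than any provider’s real rate, and the model ignores electricity, maintenance, and differences in model quality or speed.

```python
def break_even_tokens(hardware_cost_usd: float, api_price_per_million_usd: float) -> float:
    """Tokens you would need to process before local hardware pays for itself."""
    if api_price_per_million_usd <= 0:
        raise ValueError("API price must be positive")
    return hardware_cost_usd / api_price_per_million_usd * 1_000_000


if __name__ == "__main__":
    hardware_cost = 799.0  # new Mac Mini starting price cited in the article
    api_price = 1.0        # HYPOTHETICAL price in USD per million tokens
    tokens = break_even_tokens(hardware_cost, api_price)
    print(f"Break-even after roughly {tokens / 1e6:.0f} million tokens")
```

The higher the per-token rate or the heavier the daily usage, the sooner the hardware pays for itself, which is the dynamic the quote describes.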

The Developer’s Dilemma: Home Labs and ARM

For the community of developers who use the Mac Mini as a headless server or a GitHub Actions runner, this price hike is a bitter pill. The Mac Mini was the most affordable way to get a low-power, high-performance ARM server into a home rack. By raising the entry price, Apple is effectively pushing the “hobbyist” out of the garden.

This creates a strategic opening for the RISC-V community and other ARM-based alternatives. But the software moat (macOS and its tight integration with Xcode) remains a powerful tether. Most developers will pay the $799 because the cost of switching their entire toolchain to Linux on ARM is higher than the $100 to $200 price increase.

The 30-Second Verdict

- The Move: Apple killed the 256GB Mac Mini; the floor is now $799.

- The Cause: AI workloads demand more memory and faster storage than the base model could provide.

- The Result: Lower-end consumers are priced out; prosumers face massive shipping delays.

- The Strategy: Prioritize high-margin, AI-ready hardware over budget market share.

The Macro Play: Margin Protection in the AI Era

This is about the bottom line. Apple has never been a budget brand, but the Mac Mini was a rare exception: a loss leader that brought users into the ecosystem. In the AI era, the cost of components (specifically high-bandwidth memory) has risen. Maintaining a $599 price point would have squeezed margins to an unacceptable degree.

By repositioning the Mac Mini as a “Pro” device, Apple is aligning its hardware strategy with its software ambitions. If Apple Intelligence is to be the centerpiece of the next five years of macOS, the hardware must be capable of running it without thermal throttling or memory swapping. A 256GB machine is no longer a tool; it’s a liability to the brand’s image of “seamless” AI integration.

We are witnessing the death of the budget Mac. In its place, we get a streamlined, expensive, and powerful AI appliance. For the power user, it’s a logical evolution. For everyone else, the door to the Apple ecosystem just got a lot heavier.