Apple has increased the Mac Mini’s starting price to $799, discontinuing its budget entry-level model. This price hike follows an unexpected surge in demand from AI developers and researchers leveraging Apple Silicon’s unified memory for local LLM inference, which has severely depleted global inventory and triggered secondary market markups.

This is not a standard inflationary adjustment. When Tim Cook admits that the Mac Mini is being snapped up for AI work ‘faster than we predicted’, he is acknowledging a fundamental shift in how the market perceives Apple’s smallest desktop. The Mac Mini has evolved from a consumer-grade “home office” box into a high-density, energy-efficient node for local AI development.

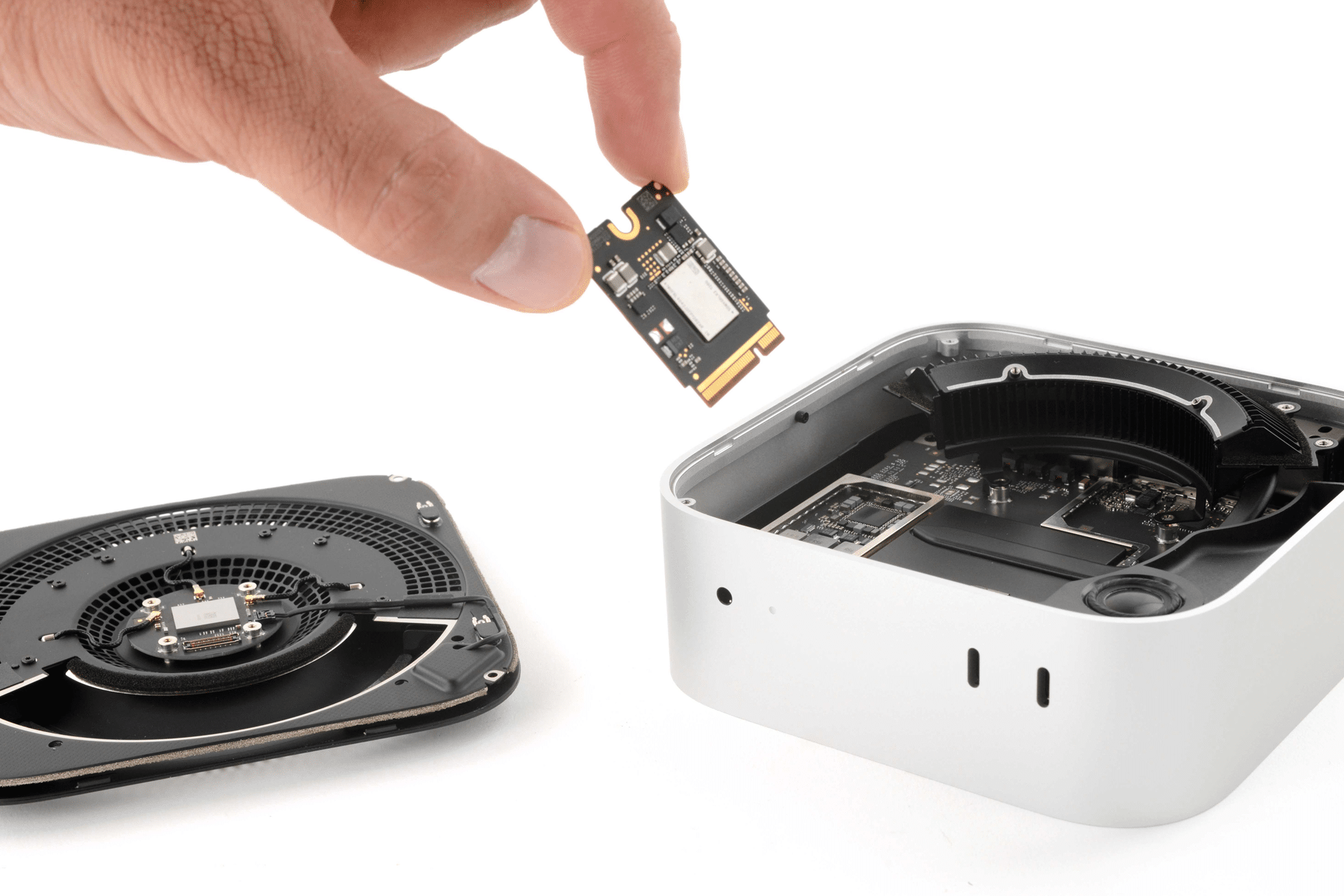

For the uninitiated, the Mac Mini’s appeal to the AI community isn’t about the form factor; it’s about the silicon. Specifically, it is about the Unified Memory Architecture (UMA). In a traditional PC, the CPU and GPU have separate pools of memory. If you want to run a Large Language Model (LLM), you are typically limited by the VRAM on your graphics card. An NVIDIA RTX 4090 is a beast, but with 24GB of VRAM, it hits a hard wall when trying to load larger, high-parameter models.

Apple Silicon flips the script. Because the CPU and GPU share a single pool of high-bandwidth memory, a Mac Mini configured with higher RAM capacities can allocate a massive portion of that memory to the GPU. This allows developers to run quantized versions of models that would normally require enterprise-grade A100 or H100 GPUs, all while sipping power from a wall outlet.
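
The VRAM wall described above comes down to simple arithmetic. As a rough sketch, the snippet below estimates the size of a model's weights at a given quantization level and checks whether they fit in a memory budget. The 64GB configuration and the 0.75 GPU share are illustrative assumptions, not Apple specifications, and the estimate ignores the KV cache and runtime overhead.

```python
"""Back-of-envelope check: do quantized weights fit in GPU-addressable memory?"""


def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal GB.

    Ignores KV cache, activations, and runtime overhead.
    """
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, gpu_memory_gb: float) -> bool:
    """True if the weights alone fit in the given GPU-addressable memory."""
    return weight_footprint_gb(params_billion, bits_per_weight) <= gpu_memory_gb


# ASSUMPTION: share of unified memory the GPU may use; illustrative only.
GPU_SHARE_OF_UNIFIED_MEMORY = 0.75

if __name__ == "__main__":
    discrete_vram_gb = 24  # the RTX 4090 figure quoted in the article
    unified_ram_gb = 64    # hypothetical high-memory Mac Mini configuration
    unified_budget_gb = unified_ram_gb * GPU_SHARE_OF_UNIFIED_MEMORY
    for params in (7, 30, 70):
        size = weight_footprint_gb(params, 4)
        print(
            f"{params}B @ 4-bit: {size:.1f} GB | "
            f"fits 24GB VRAM: {fits(params, 4, discrete_vram_gb)} | "
            f"fits unified budget: {fits(params, 4, unified_budget_gb)}"
        )
```

Under these assumptions, a 70B model at 4 bits needs about 35GB for weights alone, which is past a 24GB card but within reach of a high-memory unified pool.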

The Unified Memory Arbitrage

The “AI frenzy” mentioned by Bloomberg is essentially a massive arbitrage play. Developers have realized that for the price of a mid-to-high-end Mac Mini, they can get a machine capable of local inference on models that would otherwise require a subscription to a cloud provider or a $10,000 server rack. This has led to a supply chain collapse for the entry-level models, which were likely being bought in bulk to be used as distributed inference clusters.

To understand the technical bottleneck, one must look at memory bandwidth. LLM performance is often limited not by raw TFLOPS, but by how quickly data can move from memory to the processor. Apple’s M-series chips provide a tight integration that reduces latency. When you combine this with llama.cpp and other open-source frameworks that optimize for Apple’s Metal API, the Mac Mini becomes a lethal weapon for edge AI.
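
To make the bandwidth argument concrete, here is a minimal sketch of a common rule of thumb: during single-stream decoding of a dense model, every generated token has to read roughly all of the weights once, so memory bandwidth divided by model size gives a ceiling on tokens per second. The bandwidth figures in the example are placeholders, not measured values for any specific chip, and real throughput will land below this bound.

```python
def decode_tokens_per_sec_upper_bound(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on decode speed for a memory-bandwidth-bound dense model.

    ASSUMPTION: batch size 1, and each token reads all weights exactly once.
    Compute time, KV-cache reads, and framework overhead are ignored.
    """
    if bandwidth_gb_s <= 0 or weights_gb <= 0:
        raise ValueError("bandwidth and model size must be positive")
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    weights_gb = 35.0  # e.g. a 70B model quantized to 4 bits
    for bandwidth in (100.0, 300.0, 1000.0):  # placeholder GB/s values
        bound = decode_tokens_per_sec_upper_bound(bandwidth, weights_gb)
        print(f"{bandwidth:.0f} GB/s -> at most {bound:.1f} tokens/s")
```

The same formula explains why capacity and bandwidth pull in different directions: a machine that can hold a larger model still generates tokens more slowly with it, because each token has more bytes to read.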

“The ability to run 30B or 70B parameter models locally without spending five figures on hardware is a game-changer for privacy-centric AI development. Apple didn’t build the Mac Mini as an AI workstation, but the architecture makes it one by default.” - Marcus Thorne, Lead Systems Architect at NeuralEdge

The consequence? The “cheapest” Mac Mini is gone. By raising the floor to $799, Apple is effectively pruning the casual consumer from the queue and forcing the AI crowd toward higher-margin configurations. It is a classic supply-side correction.

Hardware Economics vs. LLM Parameter Scaling

The market is currently obsessed with parameter scaling. As models grow, the memory footprint increases. A 7B parameter model quantized to 4 bits needs roughly 3.5GB for its weights and might fit comfortably in 8GB of RAM, but as we move toward more complex agents and larger context windows, 16GB becomes the bare minimum. The discontinued entry-level model was likely too underpowered for serious AI work, yet its availability made it a target for those building “farms” of compact devices.
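
Larger context windows cost memory because of the key-value (KV) cache, which grows linearly with the number of tokens held in context. The sketch below uses the standard size formula for a transformer KV cache. The layer and head counts in the example are assumptions chosen to resemble a typical 7B-class model; they are not taken from any model named in this article.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    seq_len: int,
    bytes_per_elem: int = 2,  # 2 bytes = fp16 cache entries
) -> int:
    """Size of the KV cache for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


if __name__ == "__main__":
    # ASSUMPTION: 32 layers, 32 KV heads, head dimension 128 (7B-class shape).
    for seq_len in (4096, 32768):
        size_gib = kv_cache_bytes(32, 32, 128, seq_len) / 1024**3
        print(f"context {seq_len}: {size_gib:.1f} GiB of KV cache")
```

With those assumed dimensions, a 4,096-token context adds about 2 GiB on top of the weights, and the cost scales in direct proportion as the context grows.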

The following breakdown illustrates why the AI community is fighting over these machines compared to traditional x86 setups:

| Feature | Traditional PC (Discrete GPU) | Apple Silicon (UMA) | AI Impact |
|---|---|---|---|
| Memory Access | PCIe Bus (Latency bottleneck) | Unified Fabric (Low latency) | Faster token generation |
| VRAM Limit | Fixed by GPU Hardware (e.g., 12-24GB) | Dynamic (Up to total system RAM) | Ability to load larger models |
| Power Draw | High (300W – 600W+ per GPU) | Ultra-Low (approx. 20W – 100W) | Lower operational cost for 24/7 clusters |
| Thermal Profile | Requires heavy active cooling | Efficient, low heat output | Higher density in server racks |
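
The power-draw row translates directly into running costs for a machine left on around the clock. A minimal sketch of that arithmetic follows. The wattages are picked from the ranges in the table, and the electricity price is a hypothetical flat rate rather than a quoted tariff.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_kwh(avg_watts: float) -> float:
    """Energy used in a year by a device running 24/7 at a constant draw."""
    return avg_watts * HOURS_PER_YEAR / 1000


def annual_cost(avg_watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost at a flat price per kWh."""
    return annual_energy_kwh(avg_watts) * price_per_kwh


if __name__ == "__main__":
    price = 0.15  # ASSUMPTION: hypothetical flat rate in dollars per kWh
    for label, watts in (("UMA node", 50), ("discrete GPU", 450)):
        kwh = annual_energy_kwh(watts)
        print(f"{label}: {kwh:.0f} kWh/year, about ${annual_cost(watts, price):.0f}")
```

The gap compounds across a cluster, since every extra node multiplies the per-device difference, and cooling costs for the higher-wattage hardware come on top.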

This efficiency is why we are seeing marked-up Mac Minis flooding eBay. Scalpers aren’t targeting gamers this time; they are targeting the “Local AI” crowd who need a specific RAM-to-price ratio that Apple is now actively adjusting.

The Strategic Pivot to High-Margin Compute

Apple’s move is a calculated risk. By raising the starting price, the company is signaling that the Mac Mini is moving upmarket. This aligns with a broader trend in the “chip wars,” where the value is shifting from the processor itself to the memory subsystem. Ars Technica has reported a similar trend: Mac Studio units are becoming increasingly difficult to procure.

If the Mac Mini is “too good” for the price, it cannibalizes the sales of the Mac Studio and the Mac Pro. By removing the budget option, Apple nudges the professional user toward the M-Max or M-Ultra chips, which offer even wider memory buses and higher totals of unified memory.

However, this creates a friction point with the open-source community. Much of the current AI innovation is happening in the “small model” space, where models are optimized to run on consumer hardware. When the entry price for that hardware rises, the barrier to entry for independent developers rises with it.

The 30-Second Verdict

- The Cause: AI developers are using Mac Minis as cheap, high-memory inference nodes.
- The Result: Entry-level models are discontinued; starting price is now $799.
- The Tech: Unified Memory Architecture (UMA) allows the GPU to use system RAM, bypassing the VRAM limits of NVIDIA consumer cards.
- The Outlook: Expect further price adjustments as Apple aligns its hardware tiers with AI demand.

For the developer, the math is still relatively favorable. Even at $799, a Mac Mini provides a performance-per-watt ratio that is nearly impossible to match in the x86 ecosystem without spending significantly more on electricity and cooling. But the era of the “budget” AI workstation is closing. Apple has realized that its silicon is too valuable to be sold at a discount.

As we move deeper into 2026, the real question isn’t whether the Mac Mini is expensive—it’s whether Apple will eventually release a “headless” Mac Mini specifically designed for AI clusters, stripping away the HDMI ports and focusing entirely on memory bandwidth and interconnect speeds. Until then, the developers will keep hunting for stock and the scalpers will keep raising the price.