AWS is integrating agentic AI into Amazon Connect to automate complex workflows in healthcare, hiring, supply chain, and customer service. By leveraging LLM-driven orchestration, these tools handle multi-step tasks while maintaining human-in-the-loop oversight, aiming to reduce operational latency and overhead for enterprise clients globally.

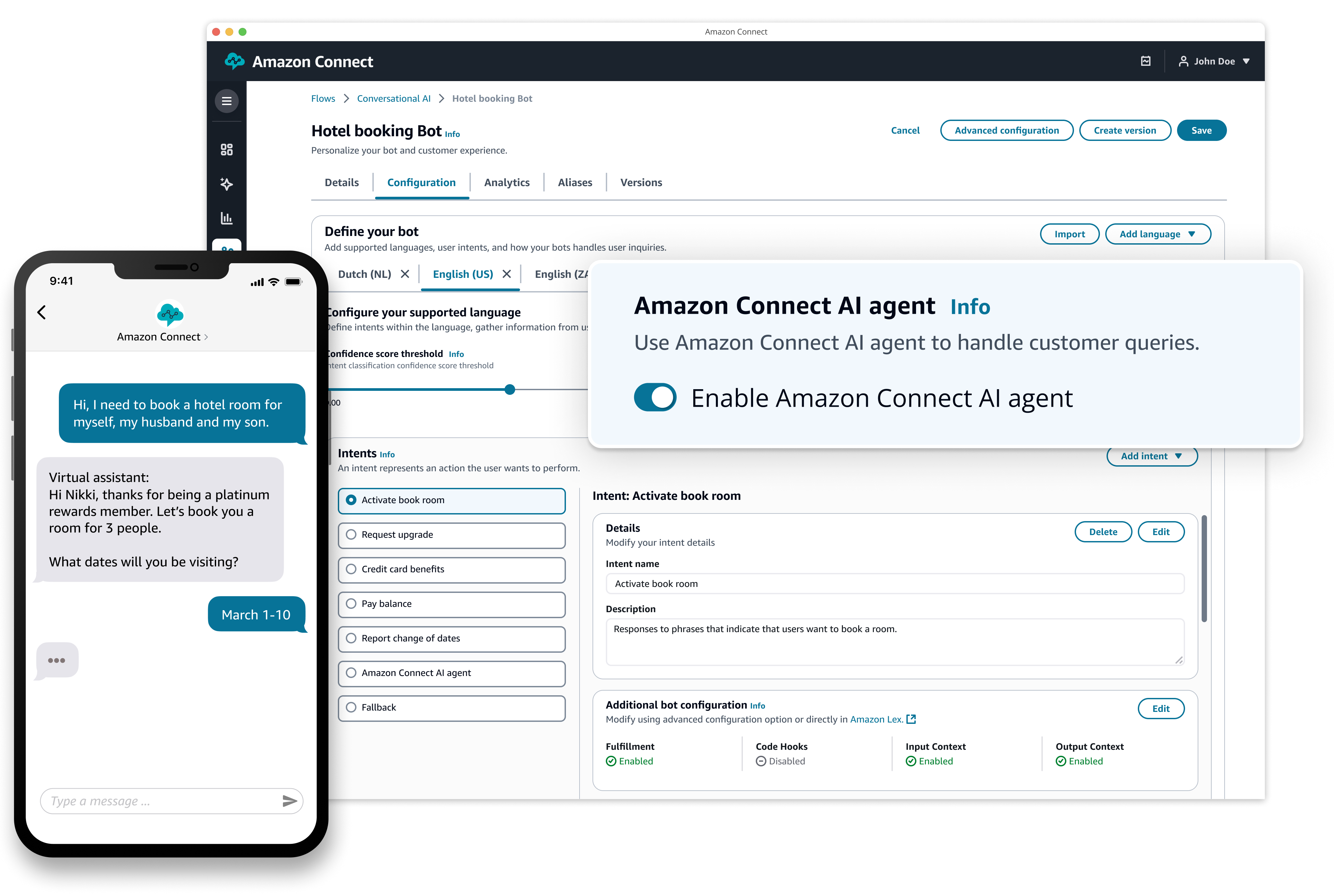

For years, the “AI assistant” has been a glorified FAQ search bar—a thin wrapper around a decision tree that frustrates users the moment they deviate from a pre-scripted path. But this week’s rollout of agentic tools within Amazon Connect marks a fundamental shift in the paradigm. We are moving from conversational AI, which focuses on the dialogue, to agentic AI, which focuses on the execution.

The distinction is critical. A conversational bot tells you your package is delayed. An agentic bot identifies the logistics bottleneck in the supply chain, contacts the carrier via API, reroutes the shipment, and emails the customer a revised delivery window, all before a human manager even opens their dashboard. This isn’t just a feature update; it is an attempt to turn the cloud contact center into an autonomous operating system for enterprise operations.

Beyond the Chatbot: The Shift to Agentic Orchestration

Under the hood, this expansion relies heavily on Amazon Bedrock, the managed service that allows developers to swap between different foundation models (FMs). By utilizing a technique known as “tool use” or “function calling,” these agents can now interact with external software. Instead of merely predicting the next token in a sentence, the LLM identifies when a specific goal requires an external action—such as querying a SQL database for a candidate’s availability in a hiring workflow or checking a patient’s record in a healthcare EHR (Electronic Health Record) system.
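To make the “function calling” idea concrete, here is a minimal sketch of the dispatch step that sits between a model and an external system. It does not call Bedrock or any AWS API. The JSON message shape, the `get_candidate_availability` tool, and the in-memory table standing in for a SQL database are all assumptions made for illustration.

```python
import json
from typing import Callable, Dict

# Hypothetical tool: stands in for a SQL query against a scheduling database.
def get_candidate_availability(candidate_id: str) -> dict:
    fake_table = {"c-101": ["2026-03-02T10:00", "2026-03-03T14:00"]}
    return {"candidate_id": candidate_id, "slots": fake_table.get(candidate_id, [])}

# Registry of the tools the agent is allowed to invoke.
TOOLS: Dict[str, Callable[..., dict]] = {
    "get_candidate_availability": get_candidate_availability,
}

def dispatch(model_output: str) -> dict:
    """Run the tool named in the model's structured output, or pass plain text through."""
    message = json.loads(model_output)
    if message.get("type") != "tool_call":
        return {"type": "text", "content": message.get("content", "")}
    name = message["name"]
    if name not in TOOLS:
        # Never execute a tool the registry does not know about.
        return {"type": "error", "content": f"unknown tool: {name}"}
    result = TOOLS[name](**message.get("arguments", {}))
    return {"type": "tool_result", "name": name, "content": result}

# Simulated model output (assumed format): the model asks for a tool instead of answering.
simulated = json.dumps({
    "type": "tool_call",
    "name": "get_candidate_availability",
    "arguments": {"candidate_id": "c-101"},
})
reply = dispatch(simulated)
print(reply)
```

In a real agent loop, the tool result would be fed back to the model so it can decide on the next step or compose a final answer. The registry check is the important design choice here: the model proposes an action, but only code you control decides whether it runs.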

To mitigate the persistent problem of hallucinations, AWS is leaning into Retrieval-Augmented Generation (RAG). By grounding the agent’s responses in a company’s own proprietary data, stored as vector embeddings and retrieved in real time, AWS aims to keep the AI from “inventing” a healthcare policy or a hiring requirement. Grounding reduces that risk rather than eliminating it. The architecture essentially creates a closed-loop system: the agent perceives the user’s intent, retrieves the factual context from a secure knowledge base, plans the sequence of actions, and executes them via API.
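The retrieve-then-plan part of that loop can be sketched in a few lines. This is a toy under stated assumptions: the three-dimensional vectors are hand-made rather than produced by an embedding model, the knowledge base entries are invented, and the `min_score` threshold with its fallback to a human is an illustrative policy, not something the rollout specifies.

```python
import math
from typing import List

# Toy knowledge base: (document text, hand-made embedding).
# A real system would compute embeddings with a model and store them in a vector index.
KNOWLEDGE_BASE = [
    ("Telehealth visits are covered for in-network providers.", [0.9, 0.1, 0.0]),
    ("Interviews are scheduled within five days of screening.", [0.1, 0.9, 0.1]),
    ("Warehouse B ships within two business days.", [0.0, 0.2, 0.9]),
]

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def run_turn(query_vec: List[float], min_score: float = 0.5) -> dict:
    """Retrieve the closest document, then plan: answer only when grounded."""
    best_text, best_vec = max(KNOWLEDGE_BASE, key=lambda item: cosine(query_vec, item[1]))
    score = cosine(query_vec, best_vec)
    if score < min_score:
        # Nothing relevant was retrieved, so the agent should not improvise.
        return {"action": "escalate_to_human", "context": None}
    return {"action": "answer_with_context", "context": best_text}

grounded = run_turn([1.0, 0.0, 0.0])   # query vector close to the telehealth policy
ungrounded = run_turn([0.0, 0.0, 0.0])  # query with no usable signal
print(grounded["action"], "|", ungrounded["action"])
```

The point of the threshold is that retrieval quality, not model size, decides whether the agent is allowed to answer at all.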

It is a sophisticated orchestration layer that hides a massive amount of complexity.

The 30-Second Verdict: Enterprise Impact

- Operational Velocity: Drastic reduction in “swivel-chair” tasks where employees manually move data between systems.

- The Human Element: “Human-in-the-loop” isn’t just a safety feature; it’s a regulatory necessity for HIPAA and EEOC compliance.

- Technical Debt: Integration requires clean API documentation; legacy systems with “spaghetti code” will struggle to implement these agents.

The Latency Tax and the Inferentia Advantage

In the world of real-time voice and chat, latency is the enemy. A two-second delay in an AI’s response creates an “uncanny valley” effect that makes human users uncomfortable. To combat this, AWS is optimizing the inference path. By leveraging AWS Trainium and Inferentia chips, they are attempting to lower the cost and time of token generation compared to relying solely on general-purpose NVIDIA GPUs.

The goal is to move the “reasoning” closer to the edge. When an agent in a supply chain workflow needs to calculate the optimal shipping route, the latency between the LLM’s reasoning step and the API call to the logistics provider must be negligible. If the orchestration layer adds 500ms of overhead per turn, the user experience collapses.
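A back-of-the-envelope model shows why that overhead matters once an agent chains several reasoning and action steps. Only the 500ms overhead and the two-second discomfort threshold come from the discussion above. The model time, tool time, step count, and the choice to charge the overhead on every step are assumptions picked for illustration.

```python
def turn_latency_ms(steps: int, model_ms: int, tool_ms: int, overhead_ms: int) -> int:
    """Total latency when each step pays for inference, one tool call, and orchestration."""
    return steps * (model_ms + tool_ms + overhead_ms)

BUDGET_MS = 2000  # the two-second threshold mentioned earlier

# Assumed workload: 3 reason-then-act steps, 300 ms inference, 150 ms per API call.
lean = turn_latency_ms(steps=3, model_ms=300, tool_ms=150, overhead_ms=50)
heavy = turn_latency_ms(steps=3, model_ms=300, tool_ms=150, overhead_ms=500)

print(f"lean: {lean} ms, within budget: {lean <= BUDGET_MS}")
print(f"heavy: {heavy} ms, within budget: {heavy <= BUDGET_MS}")
```

Under these assumed numbers, the same workflow fits inside the budget with 50ms of orchestration overhead per step and misses it with 500ms, even though the model and the downstream API are unchanged.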

“The real battle in agentic AI isn’t about who has the largest parameter count, but who can minimize the ‘time-to-action.’ The winner will be the platform that integrates the model most tightly with the underlying compute and API gateway.”

This is where the “chip war” manifests in the software layer. By controlling the silicon (Inferentia) and the platform (Connect), AWS can optimize the entire stack for speed, something third-party LLM wrappers cannot do.

Compliance Walls in Healthcare and Hiring

Deploying AI in healthcare and hiring isn’t just a technical challenge; it’s a legal minefield. In healthcare, the stakes are clinical accuracy and HIPAA compliance. In hiring, the risk is algorithmic bias—where an agent might inadvertently filter out candidates based on patterns that violate equal opportunity laws.

AWS’s approach is to implement “guardrails.” These are essentially programmatic filters that sit between the LLM and the output. If an agent attempts to suggest a medical diagnosis or use a protected characteristic to rank a job applicant, the guardrail intercepts the response and forces a human intervention. This “human-in-the-loop” architecture is the only way to deploy these tools in highly regulated sectors without risking catastrophic litigation.
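As a rough illustration of the intercept-and-escalate pattern, the sketch below checks a draft response before it reaches the user. It is not how AWS implements guardrails. The keyword patterns, the `GuardrailResult` type, and the hand-off message are hypothetical, and production systems rely on far more than regular expressions.

```python
import re
from dataclasses import dataclass

# Crude, assumed patterns; real guardrails use classifiers and policy engines.
BLOCKED_PATTERNS = {
    "medical_diagnosis": re.compile(
        r"\b(you (likely |probably )?have|diagnos(is|ed|e))\b", re.IGNORECASE
    ),
    "protected_characteristic": re.compile(
        r"\b(age|gender|race|religion|pregnan\w*)\b", re.IGNORECASE
    ),
}

@dataclass
class GuardrailResult:
    allowed: bool
    reason: str
    output: str

def apply_guardrail(draft: str) -> GuardrailResult:
    """Return the draft unchanged if it is clean; otherwise replace it with a hand-off."""
    for reason, pattern in BLOCKED_PATTERNS.items():
        if pattern.search(draft):
            return GuardrailResult(
                False, reason, "This request has been routed to a human reviewer."
            )
    return GuardrailResult(True, "", draft)

safe = apply_guardrail("Your package arrives Tuesday.")
diagnosis = apply_guardrail("Based on your symptoms, you likely have influenza.")
biased = apply_guardrail("Rank this applicant lower because of their age.")
print(safe.allowed, diagnosis.reason, biased.reason)
```

The filter runs on the model's output rather than its input, so the original draft never reaches the user once a rule fires. That placement is what turns the guardrail into a forced hand-off instead of a suggestion.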

But the tension remains: the more guardrails you add, the more “robotic” and limited the agent becomes. The engineering challenge for 2026 is maintaining the fluidity of a generative agent while enforcing the rigidity of legal compliance.

The Lock-In Trap: Enterprise Gravity vs. Open Source

We must address the macro-market dynamic: platform lock-in. When a company builds its entire hiring and supply chain orchestration on Amazon Connect’s agentic tools, they aren’t just buying a service; they are migrating their business logic into the AWS ecosystem.

Once your “Agentic Workflows” are defined within the AWS environment, the cost of switching to Google Cloud’s Vertex AI or Microsoft’s Copilot Studio becomes astronomical. This is “enterprise gravity.” AWS is effectively building a moat made of integrated workflows. While they offer Bedrock to allow different models, the orchestration—the actual “how” of the task execution—remains proprietary.

| Feature | Traditional IVR/Chatbot | Conversational AI | Agentic AI (Amazon Connect) |
|---|---|---|---|
| Logic | Hard-coded Decision Trees | Intent Recognition (NLP) | Dynamic Reasoning & Planning |
| Capability | Information Retrieval | Natural Dialogue | Cross-Platform Execution |
| Integration | Static API calls | Limited Webhooks | Autonomous Tool Use (Function Calling) |
| User Experience | Frustrating/Rigid | Fluent but Passive | Proactive/Problem-Solving |

For developers, this creates a dichotomy. On one hand, the speed of deployment is unmatched. On the other, the reliance on a closed ecosystem stifles the kind of flexibility found in open-source frameworks like LangChain or AutoGPT. The industry is currently split between those who want the “it just works” experience of a cloud giant and those who fear the loss of architectural sovereignty.

The success of these tools will not be measured by how “smart” the AI feels, but by how much manual labor it actually deletes from the enterprise balance sheet. If AWS can prove that their agents reduce the cost-per-resolution in healthcare and supply chains by 30% or more, the lock-in will be a price most CTOs are more than willing to pay.