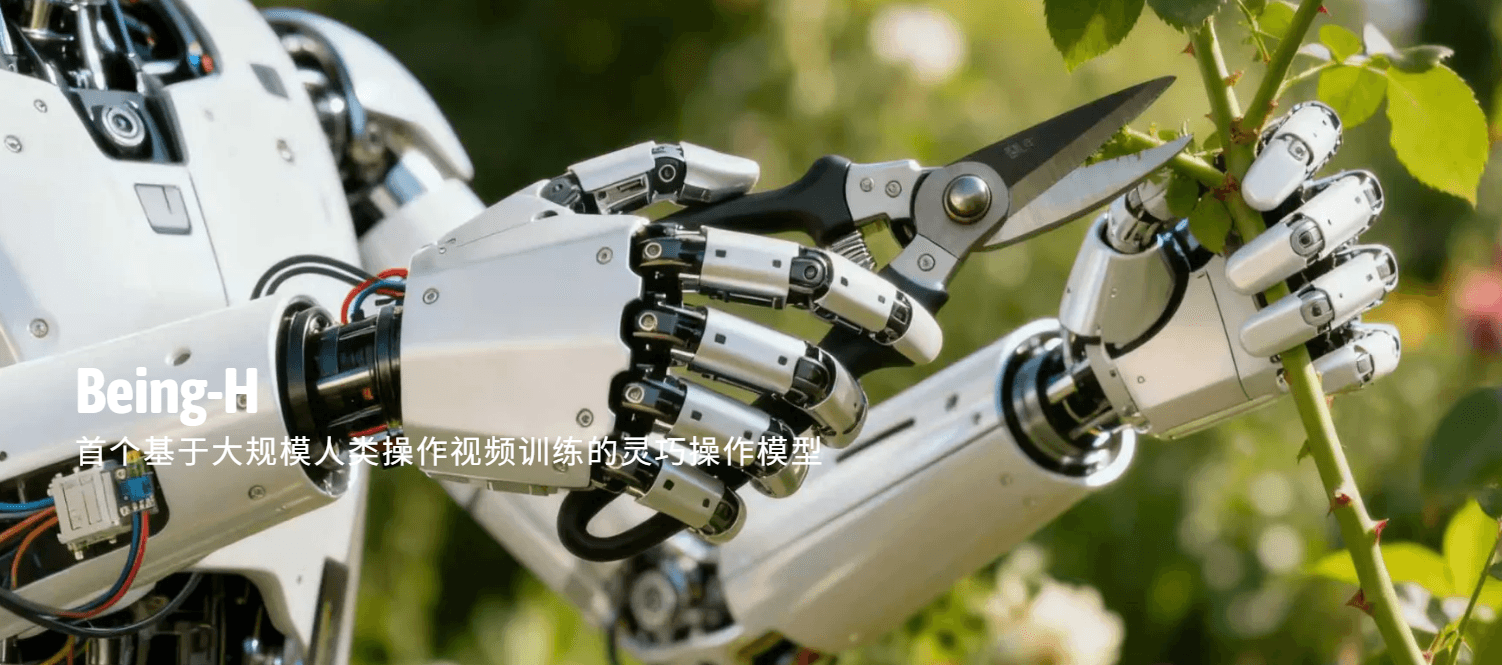

BeingBeyond has launched Being-H0.7, an embodied world model trained on 200,000 hours of human video to bridge the gap between digital perception and physical action. By mapping human kinematics to robotic control, H0.7 enables AI agents to predict physical outcomes and execute complex tasks in unstructured, real-world environments.

For years, the AI industry has been obsessed with the “brain”—the Large Language Model (LLM) that can recite poetry or write Python. But a brain without a body is just a sophisticated autocomplete engine. Being-H0.7 represents a pivot toward the “nervous system.” We are moving from generative AI that creates images of a kitchen to embodied AI that understands how to navigate one without knocking over a vase.

The shift is profound. While previous iterations of world models focused on video synthesis—creating visually plausible clips—H0.7 is designed for actionable intelligence. It doesn’t just predict the next pixel; it predicts the next physical state. This is the difference between watching a movie of someone riding a bike and actually understanding the balance, torque, and friction required to stay upright.

From Pixels to Physics: The Mechanics of H0.7

At its core, Being-H0.7 utilizes a transformer-based architecture optimized for spatio-temporal tokens. By ingesting 200,000 hours of human video, the model has developed a latent representation of “common sense physics.” In engineering terms, this is a massive exercise in behavioral cloning. The model observes a human hand grasping a door handle and translates those visual cues into a series of joint-angle commands for a robotic actuator.
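To make "behavioral cloning" concrete, here is a minimal sketch of the idea: treat the demonstrator's actions as labels and fit a policy by ordinary supervised regression. Everything in it is an assumption for illustration. The two-number "observation" stands in for features extracted from video, the linear policy stands in for a transformer, and names such as `make_demonstrations` and `fit_policy` are hypothetical. It is not BeingBeyond's training code.

```python
import random

def make_demonstrations(n, seed=0):
    """Synthetic (observation, expert_action) pairs.

    Hypothetical stand-in for features extracted from human video and the
    joint angles a demonstrator produced in response.
    """
    rng = random.Random(seed)
    true_w = [[0.5, -0.2], [0.1, 0.8]]  # assumed expert mapping: 2 features -> 2 joints
    demos = []
    for _ in range(n):
        obs = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        act = [sum(w * o for w, o in zip(row, obs)) for row in true_w]
        demos.append((obs, act))
    return demos, true_w

def predict(weights, obs):
    """Linear policy: one output (joint angle) per weight row."""
    return [sum(w * o for w, o in zip(row, obs)) for row in weights]

def fit_policy(demos, epochs=200, lr=0.1):
    """Behavioral cloning as regression: SGD on squared error to expert actions."""
    weights = [[0.0, 0.0], [0.0, 0.0]]
    for _ in range(epochs):
        for obs, act in demos:
            pred = predict(weights, obs)
            for j in range(2):
                err = pred[j] - act[j]
                for i in range(2):
                    weights[j][i] -= lr * err * obs[i]
    return weights

demos, true_w = make_demonstrations(200)
policy = fit_policy(demos)
```

The policy never sees a reward signal; it only imitates. That is why the quality of the demonstrations bounds the quality of the robot.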

The real breakthrough here is the reduction of the “sim-to-real” gap. Traditionally, robots are trained in simulators (like NVIDIA Isaac Sim) where the physics is perfect and predictable. But the real world is messy. H0.7 bypasses the sterile simulator by learning directly from the chaos of human movement. The goal is zero-shot generalization: a robot encounters a tool it has never seen before and infers its use from the human patterns it learned during training.

However, this isn’t magic; it’s parameter scaling. The model’s ability to handle complex trajectories depends on its capacity to map high-dimensional video data into a low-dimensional action space. If the tokenization is too coarse, the robot is clumsy. If it’s too fine, the compute cost skyrockets, leading to unacceptable latency in real-time environments.
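The coarse-versus-fine trade-off can be seen in a toy example. The sketch below assumes the simplest possible scheme, uniform binning of a single joint angle into discrete tokens, and measures the worst-case error after converting a token back to an angle. The scheme, the function names, and the angle range are illustrative assumptions; the article does not say how H0.7 actually tokenizes actions.

```python
def tokenize(angle, low, high, bins):
    """Map a continuous angle to a token index in [0, bins - 1]."""
    clipped = min(max(angle, low), high)
    idx = int((clipped - low) / (high - low) * bins)
    return min(idx, bins - 1)  # the upper edge belongs to the last bin

def detokenize(token, low, high, bins):
    """Map a token back to the center of its bin."""
    width = (high - low) / bins
    return low + (token + 0.5) * width

def max_roundtrip_error(low, high, bins, samples=10_000):
    """Worst observed |decode(encode(x)) - x| over an even sweep of the range."""
    worst = 0.0
    for k in range(samples + 1):
        angle = low + (high - low) * k / samples
        token = tokenize(angle, low, high, bins)
        err = abs(detokenize(token, low, high, bins) - angle)
        worst = max(worst, err)
    return worst

# Assumed joint range of roughly one full rotation, in radians.
LOW, HIGH = -3.14, 3.14
coarse_error = max_roundtrip_error(LOW, HIGH, bins=8)
fine_error = max_roundtrip_error(LOW, HIGH, bins=256)
```

The worst-case error is half a bin width, so halving it means doubling the vocabulary for every joint. The clumsiness shrinks, but the model has more tokens to predict and more compute to spend on each control step.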

The Latency Tax and the NPU Bottleneck

Shipping a world model is one thing; running it on a bipedal robot in a warehouse is another. The primary enemy here is latency. For a robot to react to a slipping object, the loop from perception to action must happen in milliseconds. This is where the hardware handshake becomes critical.

Being-H0.7 is designed to leverage dedicated NPUs (Neural Processing Units) rather than relying on general-purpose GPUs. By offloading the tensor operations to specialized silicon, BeingBeyond aims to minimize the “inference lag” that plagues earlier embodied models. We are seeing a convergence where the model architecture is being dictated by the chip’s memory bandwidth. If the weights of H0.7 cannot be loaded into the NPU’s local SRAM quickly enough, the robot will experience “stutter,” a digital hesitation that can be catastrophic in industrial settings.
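A back-of-the-envelope calculation shows why memory bandwidth ends up dictating architecture. The sketch below assumes a worst case in which every weight must be streamed from memory once per inference step, and compares that time with the period of the control loop. All figures (3 billion parameters, 200 GB/s, a 50 Hz loop) are hypothetical; BeingBeyond has not published H0.7's size or target hardware.

```python
def weight_stream_ms(params, bytes_per_param, bandwidth_gb_s):
    """Milliseconds needed to read every weight once at the given bandwidth."""
    total_bytes = params * bytes_per_param
    return total_bytes / (bandwidth_gb_s * 1e9) * 1e3

def control_budget_ms(control_hz):
    """Time available per perception-to-action cycle."""
    return 1000.0 / control_hz

def fits_budget(params, bytes_per_param, bandwidth_gb_s, control_hz):
    """True if one full pass over the weights fits inside one control period."""
    stream = weight_stream_ms(params, bytes_per_param, bandwidth_gb_s)
    return stream <= control_budget_ms(control_hz)

# Hypothetical model and hardware figures, chosen only to illustrate the arithmetic.
PARAMS = 3e9
BANDWIDTH_GB_S = 200
CONTROL_HZ = 50

fp16_ms = weight_stream_ms(PARAMS, 2, BANDWIDTH_GB_S)  # 16-bit weights
int8_ms = weight_stream_ms(PARAMS, 1, BANDWIDTH_GB_S)  # 8-bit quantized weights
fp16_ok = fits_budget(PARAMS, 2, BANDWIDTH_GB_S, CONTROL_HZ)
int8_ok = fits_budget(PARAMS, 1, BANDWIDTH_GB_S, CONTROL_HZ)
```

Under these assumed numbers the 16-bit model needs about 30 ms per pass against a 20 ms budget, while the 8-bit version needs about 15 ms and fits. Real systems cache, batch, and overlap work, so treat this as an upper-bound intuition rather than a performance model.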

“The transition from generative world models to embodied ones is the hardest leap in AI. We are no longer dealing with the forgiveness of a chat window; we are dealing with the laws of thermodynamics and gravity. A hallucination in a chatbot is a funny mistake; a hallucination in an embodied model is a broken arm or a crashed drone.”

This quote from a leading robotics researcher highlights the stakes. The industry is currently locked in a war over who can create the most robust “foundation model for robotics.” While Google’s RT-2 has made strides, Being-H0.7’s reliance on massive human video datasets suggests a move toward a more organic, intuitive form of machine learning.

Data Provenance in the Age of Behavioral Cloning

We need to talk about the 200,000 hours. In the gold rush for training data, the “how” often gets buried under the “how much.” Where did this video come from? While BeingBeyond remains tight-lipped, the industry standard has shifted toward a mix of curated YouTube datasets and proprietary teleoperation data, in which humans wear VR suits to “drive” robots.

This raises significant ethical and technical questions regarding data provenance. If the model learns from humans, it also learns human errors, biases, and inefficient movements. The privacy implications of using large-scale human video are immense. We are essentially creating a digital mirror of human physicality. As these models move toward IEEE standards for robotic safety, the auditability of the training set will become a regulatory flashpoint.

The reliance on human video also creates a “ceiling.” A robot can only be as good as the humans it observes. To truly transcend, H0.7 will eventually need to move beyond behavioral cloning and into reinforcement learning, where the AI discovers better ways to move than humans ever did.

The Competitive Landscape: The War for the Physical Layer

Being-H0.7 isn’t operating in a vacuum. It is a direct shot across the bow of Tesla’s Optimus and OpenAI’s rumored robotics partnerships. The battle is no longer about who has the best LLM, but who controls the “Physical Layer” of AI.

- Tesla: Leverages a massive fleet of cars as “world sensors,” using a similar end-to-end neural net approach.

- Google DeepMind: Focuses on multi-modal versatility, blending language understanding with robotic manipulation.

- BeingBeyond: Betting heavily on the “human-as-teacher” model, prioritizing the fluidity of movement over raw logical reasoning.

This competition is driving a rapid evolution in open-source robotics. We are seeing a surge in repositories on GitHub dedicated to “Action Tokens,” as developers try to standardize how physical movements are encoded across different hardware platforms.

The 30-Second Verdict

Being-H0.7 is a sophisticated leap forward in embodied AI, trading the “hallucinations” of generative video for the utility of physical prediction. It proves that human video is a viable shortcut to teaching robots common sense. However, until BeingBeyond solves the NPU latency bottleneck and provides transparency on its data sourcing, H0.7 remains a brilliant prototype rather than a plug-and-play industrial solution.

The era of the “thinking machine” is ending. The era of the “doing machine” has officially begun.