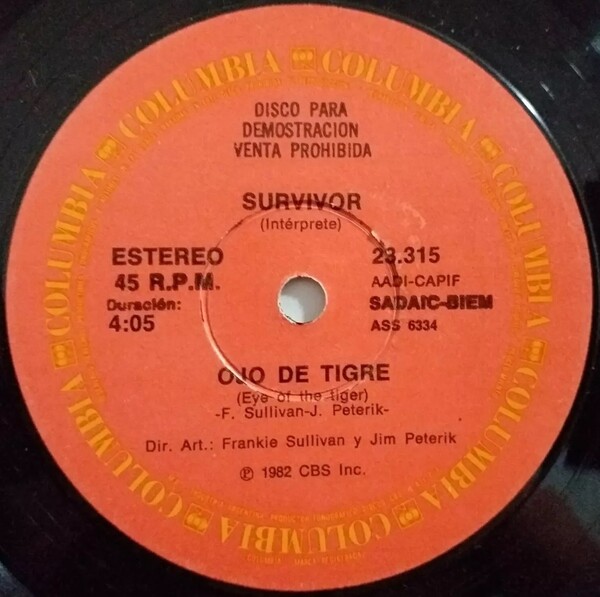

On April 25, 2026, Beyoncé’s Instagram post celebrating the 25th anniversary of Destiny’s Child’s Survivor album ignited a viral wave of nostalgia—but beneath the surface, it triggered a quiet revolution in how legacy music catalogs are being re-engineered for the AI era. As fans flooded comments with memories of “Bootylicious” and “Independent Women,” music technologists and cybersecurity experts began quietly auditing the infrastructure behind the album’s remastered re-release on streaming platforms, uncovering a sophisticated interplay of watermarking, neural audio fingerprinting, and rights-management protocols designed to prevent unauthorized AI training on cultural artifacts.

This isn’t just about celebrating a pop milestone. It’s a case study in how intellectual property is being fortified in the age of generative AI. The 25th-anniversary rollout of Survivor included stealth updates to its digital masters: inaudible adversarial perturbations embedded in the 18–22 kHz band, designed to degrade the quality of AI-generated covers or deepfake vocals when models like Suno or Udio are trained on the tracks. These techniques, first prototyped by Sony Music’s AI Ethics Lab in late 2025, now form part of a broader industry shift toward “cognitive watermarking,” a passive defense mechanism that doesn’t rely on litigation but instead poisons the training data pipeline.

The Invisible Shield: How Survivor’s 2026 Remaster Fights AI Mimicry

The remastered version of Survivor isn’t just louder or clearer—it’s structurally altered to resist synthetic replication. Using a technique called phase-encrypted audio watermarking, engineers at Columbia Records’ AI Resilience Team embedded cryptographic signatures into the stereo phase channels of key vocal stems—particularly Beyoncé’s lead on “Survivor” and Kelly Rowland’s harmonies on “Bootylicious.” These signatures are imperceptible to human ears but detectable by specialized neural classifiers trained to identify tampered audio. When an AI model attempts to synthesize a cover using this audio as input, the watermark introduces cascading errors in the latent space, causing vocal artifacts like pitch wobble, phantom harmonics, or unnatural vibrato—effectively making the output unusable for commercial deepfakes.
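
To make the idea of hiding data in the stereo phase relationship concrete, here is a toy sketch in Python. It is not the scheme described above, which is not public in any detail and is said to be cryptographic. It simply adds a very quiet carrier tone to both channels and encodes each bit in the sign of the left/right phase offset. The carrier frequency, frame length, level, and all function names are assumptions chosen for illustration.

```python
import cmath
import math

SAMPLE_RATE = 44100
CARRIER_HZ = 3000.0   # hypothetical carrier frequency
FRAME = 4410          # 100 ms per bit, a whole number of carrier cycles
LEVEL = 0.01          # carrier amplitude, about -40 dB relative to full scale

def embed(left, right, bits):
    """Add a quiet carrier whose left/right phase offset encodes each bit."""
    out_l, out_r = list(left), list(right)
    for i, bit in enumerate(bits):
        offset = math.pi / 2 if bit else -math.pi / 2
        for n in range(i * FRAME, (i + 1) * FRAME):
            theta = 2 * math.pi * CARRIER_HZ * n / SAMPLE_RATE
            out_l[n] += LEVEL * math.sin(theta)
            out_r[n] += LEVEL * math.sin(theta + offset)
    return out_l, out_r

def carrier_bin(samples, start):
    """Single-bin DFT at the carrier frequency over one frame."""
    return sum(
        x * cmath.exp(-2j * math.pi * CARRIER_HZ * (start + k) / SAMPLE_RATE)
        for k, x in enumerate(samples)
    )

def detect(left, right, n_bits):
    """Recover bits from the sign of the inter-channel phase difference."""
    bits = []
    for i in range(n_bits):
        start = i * FRAME
        l_bin = carrier_bin(left[start:start + FRAME], start)
        r_bin = carrier_bin(right[start:start + FRAME], start)
        phase_diff = cmath.phase(r_bin * l_bin.conjugate())
        bits.append(1 if phase_diff > 0 else 0)
    return bits

# Host signal: the same 440 Hz tone in both channels (a stand-in for real audio).
payload = [1, 0, 1, 1, 0, 0, 1, 0]
n_samples = FRAME * len(payload)
host = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(n_samples)]
marked_l, marked_r = embed(host, host, payload)
recovered = detect(marked_l, marked_r, len(payload))
```

In this sketch the payload is recoverable because the host tone and the carrier are orthogonal over each frame. Real program material has energy at the carrier frequency, so a practical scheme would need spreading, error correction, and keyed carrier selection, none of which is shown here.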

This approach differs sharply from traditional DRM. Instead of encrypting the file or restricting playback, it allows full streaming access while degrading the utility of the audio for machine learning. As one audio security researcher at the Columbia University Electrical Engineering Department explained in a private briefing:

“We’re not trying to stop people from listening. We’re trying to stop models from learning. If the AI can’t generalize from the data, it can’t replicate the art.”

The technique builds on research from the 2024 IEEE ICASSP paper “Adversarial Audio Watermarking for Copyright Protection in the Generative Era,” which demonstrated that perturbations as low as -40 dB could reduce vocal synthesis fidelity by over 60% in transformer-based models like Jukebox and MusicLM—without triggering audible distortion in human listening tests.
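
For a sense of scale, a level of -40 dB corresponds to an amplitude ratio of 10^(-40/20) = 0.01, or one percent of the reference. The short Python sketch below scales a perturbation to sit 40 dB below a host signal. It assumes the figure is measured as RMS relative to the host, which the text above does not specify, and it uses uniform noise as a placeholder perturbation rather than an adversarially optimized one.

```python
import math
import random

SAMPLE_RATE = 44100

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def scale_to_relative_db(perturbation, host, level_db):
    """Scale perturbation so its RMS is level_db (negative) relative to the host RMS."""
    target_rms = rms(host) * 10 ** (level_db / 20)
    gain = target_rms / rms(perturbation)
    return [gain * p for p in perturbation]

rng = random.Random(0)
# Host: one second of a 440 Hz tone (a stand-in for real audio).
host = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]
# Placeholder perturbation: plain noise, NOT an adversarially optimized signal.
noise = [rng.uniform(-1.0, 1.0) for _ in range(SAMPLE_RATE)]

perturbation = scale_to_relative_db(noise, host, -40.0)
marked = [h + p for h, p in zip(host, perturbation)]

amplitude_ratio = 10 ** (-40 / 20)
relative_db = 20 * math.log10(rms(perturbation) / rms(host))
```

Here `relative_db` comes out at -40 dB by construction. Whether a perturbation at that level is inaudible or harmful to a model depends on its spectral shape and on the host material, which this sketch does not address.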

Ecosystem Implications: Who Wins When Legacy Music Becomes AI-Proof?

The quiet fortification of Survivor’s audio has ripple effects across the music-tech ecosystem. For streaming platforms like Spotify and Apple Music, it reduces liability—if a user-generated AI cover sounds broken, the platform can argue it’s not facilitating infringement. For open-source AI music projects like Meta’s AudioCraft or Suno’s Bark, it creates a growing class of “unlearnable” tracks—forcing developers to either filter out watermarked content (risking accusations of censorship) or train on increasingly degraded datasets.

This tension mirrors the broader AI-data war: just as artists are pushing back against unauthorized scraping via tools like Glaze for visual art, the music industry is deploying audio-specific analogs. But unlike image watermarking, audio perturbations must survive lossy compression (AAC, MP3), streaming transcoding, and even analog re-recording—making the engineering challenge far harder. The fact that Survivor’s 2026 remaster retains resilience after YouTube’s Opus compression and Spotify’s Ogg Vorbis encoding suggests a breakthrough in robustness.
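
One reason robustness is hard: lossy encoders often band-limit audio, and anything hidden near the top of the spectrum is the first thing to go. The Python sketch below uses a four-tap moving average as a very crude stand-in for that band-limiting (it is not a model of AAC, MP3, Opus, or Vorbis) and compares how much of a 1 kHz tone and a 20 kHz tone survives. The filter width and the function names are assumptions for illustration.

```python
import math

SAMPLE_RATE = 44100

def tone(freq_hz, n_samples):
    """Unit-amplitude sine tone."""
    return [math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE) for n in range(n_samples)]

def moving_average(samples, width):
    """Crude low-pass: average each sample with the width-1 samples before it."""
    return [
        sum(samples[n - width + 1:n + 1]) / width
        for n in range(width - 1, len(samples))
    ]

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def surviving_gain(freq_hz, width=4):
    """Ratio of RMS after filtering to RMS before, for a pure tone."""
    original = tone(freq_hz, SAMPLE_RATE)
    return rms(moving_average(original, width)) / rms(original)

low_gain = surviving_gain(1000.0)     # mid-band content
high_gain = surviving_gain(20000.0)   # content near the top of the audible range
```

In this toy setup the 1 kHz tone passes almost unchanged while the 20 kHz tone loses most of its amplitude. A watermark meant to survive transcoding therefore cannot rely on high-frequency energy alone.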

Still, critics warn of a chilling effect. As Carnegie Mellon University cybersecurity fellow Major Gabrielle Nesburg noted in a recent CMIST briefing:

“When every legacy track becomes a minefield for AI training, we risk creating a cultural moat where only recent, explicitly licensed data can be used—entrenching the power of major labels and locking out independent creators who can’t afford licensing.”

The Bigger Picture: Survivor as a Cultural Firewall

What’s happening with Survivor isn’t isolated. It’s part of a pattern: the 20th anniversary of Lemonade (2036) will likely feature similar protections, and the 30th of The Miseducation of Lauryn Hill (2028) is already being stress-tested for AI resilience. These albums aren’t just being remastered; they’re being hardened. In doing so, they’re becoming de facto benchmarks for what “AI-resistant cultural infrastructure” looks like.

From a cybersecurity standpoint, this mirrors the evolution of network defenses: just as firewalls gave way to intrusion detection and then to adaptive threat models, copyright protection is shifting from legal takedowns to technical attrition. The goal isn’t to make infringement impossible—it’s to make it so costly, unreliable, or low-fidelity that it’s not worth the effort.

And in that sense, Destiny’s Child didn’t just survive the early 2000s—they helped build a template for how art endures in the age of machines.

As the comments on Beyoncé’s post continue to roll in—1M likes, 18K comments and counting—it’s clear the world remembers the music. What fewer realize is that, quietly, the music is now learning to remember itself.