Ogkeemo’s “BLIND” video release on YouTube marks a pivotal shift in creator-led generative AI cinema, leveraging advanced neural rendering and latent diffusion models to blur the line between captured reality and synthetic media. The release signals a new era of high-fidelity, AI-native storytelling, integrating real-time synthesis with traditional cinematography for the 2026 digital landscape.

For the uninitiated, this isn’t just another music video drop. In the context of May 2026, where the “Dead Internet Theory” has transitioned from a fringe conspiracy to a daily operational reality for content moderators, a release like “BLIND” serves as a technical stress test for the current state of synthetic media. We are no longer talking about the uncanny valley or jittery frames. We are talking about temporal consistency that rivals 35mm film, achieved through a pipeline that likely blends traditional capture with heavy-duty neural overlays.

The sheer scale of engagement—20K likes and a flood of comments within hours—suggests that the audience is no longer repelled by the “AI look.” Instead, they are embracing a new aesthetic: the Hyper-Synthetic. This is the point where the raw code of a diffusion model meets the emotional resonance of art, and for those of us tracking the macro-market dynamics of the creator economy, it is a signal that the barrier to entry for “Hollywood-grade” visuals has officially collapsed.

The Neural Rendering Engine: Beyond the Prompt

To understand how “BLIND” achieves its visual fluidity, we have to look at the underlying architecture. Most amateur AI video relies on simple text-to-video prompts, which often produce “hallucinations”: objects morphing into other objects mid-frame. The execution here, however, suggests a hybrid pipeline. It likely uses ControlNet-style conditioning on structure extracted from captured footage (depth, pose, or edge maps), possibly paired with a large language model that plans shots and keeps scene descriptions consistent.
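ControlNet's key trick is easy to miss. The trainable control branch feeds into the frozen base model through zero-initialized layers, so at the start of training it changes nothing and the conditioning is learned gradually. The toy below is a minimal sketch of that idea under simplifying assumptions: 1-D vectors and dense layers stand in for feature maps and 1x1 convolutions. The function names are hypothetical, and this is not the “BLIND” pipeline.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def linear(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """Dense layer: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def zero_linear(dim: int) -> Tuple[Matrix, Vector]:
    """Zero-initialized projection, a 1-D analogue of a 'zero convolution'."""
    return [[0.0] * dim for _ in range(dim)], [0.0] * dim


def controlled_block(x: Vector, control: Vector,
                     base: Tuple[Matrix, Vector],
                     ctrl: Tuple[Matrix, Vector],
                     zero: Tuple[Matrix, Vector]) -> Vector:
    """Frozen base path plus a trainable control path gated by `zero`."""
    base_out = linear(*base, x)  # frozen, pretrained path
    conditioned = [xi + ci for xi, ci in zip(x, control)]  # inject e.g. a depth/pose signal
    ctrl_features = linear(*ctrl, conditioned)  # trainable copy of the base layer
    residual = linear(*zero, ctrl_features)  # all zeros until training updates it
    return [b + r for b, r in zip(base_out, residual)]


# Usage: at initialization the control signal has no effect on the output.
base = ([[1.0, 2.0], [0.5, -1.0]], [0.0, 1.0])
ctrl = ([row[:] for row in base[0]], base[1][:])  # the control branch starts as a copy
x, control = [1.0, 1.0], [3.0, -2.0]
print(controlled_block(x, control, base, ctrl, zero_linear(2)))  # [3.0, 0.5]
```

Because the zero layer starts at exactly zero, fine-tuning begins from the base model's behavior instead of from noise, which is what makes this kind of conditioning stable to train.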

By using temporally consistent latent diffusion, the creators can keep spatial structure stable across frames, which prevents the “shimmering” common in early generative video. In practice this usually means the model denoises frames jointly, with temporal layers sharing information between them, rather than generating each frame in isolation. Essentially, the AI isn’t just guessing what the next frame looks like; it appears to reference a persistent latent representation of the environment. This is the difference between a dream-like sequence and a structured cinematic shot.
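One simple way to make frames “agree” with each other before denoising even starts is to correlate their initial noise. The sketch below assumes a mixed noise prior, a technique explored in some video diffusion research, in which each frame's noise blends one shared sample with one per-frame sample. The names and the `alpha` parameter are illustrative, and we do not know whether the “BLIND” team used anything like it.

```python
import math
import random
from typing import List


def correlated_frame_noise(num_frames: int, dim: int, alpha: float,
                           seed: int = 0) -> List[List[float]]:
    """Per-frame noise = sqrt(alpha) * shared + sqrt(1 - alpha) * independent.

    Each frame is still unit-variance Gaussian noise, but any two frames
    have an expected correlation of `alpha` (0 = independent, 1 = identical).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    rng = random.Random(seed)
    shared = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    frames = []
    for _ in range(num_frames):
        own = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        frames.append([math.sqrt(alpha) * s + math.sqrt(1.0 - alpha) * o
                       for s, o in zip(shared, own)])
    return frames


def correlation(a: List[float], b: List[float]) -> float:
    """Pearson correlation between two equal-length vectors."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


frames = correlated_frame_noise(num_frames=2, dim=20_000, alpha=0.8)
print(round(correlation(frames[0], frames[1]), 2))  # close to 0.8
```

Higher `alpha` gives steadier starting points but less frame-to-frame variety, so in a setup like this it is a trade-off against motion rather than a free win.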

The processing power required for this is immense. While the final render is played back on a standard YouTube player, the production likely leveraged a cluster of H100s or their 2026 successors to handle the denoising process. We are seeing a shift where the “camera” is no longer a physical lens, but a set of weights in a neural network.
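To put “immense” in rough numbers, a back-of-the-envelope estimate multiplies frames by denoising steps by the cost of each step. Every input below is a hypothetical placeholder, not a measured figure for “BLIND”. Real video models often denoise whole clips jointly, so a per-frame, per-step cost is a simplification.

```python
from typing import Tuple


def denoising_gpu_hours(duration_s: float, fps: int, steps: int,
                        gpu_s_per_frame_step: float, num_gpus: int) -> Tuple[float, float]:
    """Return (total GPU-hours, wall-clock hours) for one full render.

    All inputs are assumptions; the per-frame, per-step cost is a simplification.
    """
    frames = duration_s * fps
    total_gpu_seconds = frames * steps * gpu_s_per_frame_step
    gpu_hours = total_gpu_seconds / 3600
    wall_clock_hours = gpu_hours / num_gpus  # assumes perfect parallel scaling
    return gpu_hours, wall_clock_hours


print(denoising_gpu_hours(duration_s=180, fps=24, steps=50,
                          gpu_s_per_frame_step=0.5, num_gpus=8))  # (30.0, 3.75)
```

Under these made-up inputs, a single three-minute take costs 30 GPU-hours. An iterative workflow multiplies that by the number of takes, which is where the real compute bill comes from.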

The 30-Second Verdict: Technical Wins

- Temporal Stability: Near-perfect frame-to-frame coherence, eliminating the “AI jitter.”

- Lighting Integration: Global illumination that reacts dynamically to synthetic light sources, possibly pointing to tooling built on OpenAI’s evolving video frameworks.

- Delivery: Seamless streaming of high-bitrate 8K synthetic content through YouTube’s VP9 and AV1 codecs.
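A crude way to approximate “AI jitter” is to measure the average absolute pixel change between consecutive frames. The sketch below is an illustrative assumption, not a standard benchmark. It also penalizes legitimate motion, so serious evaluations typically compensate for motion (for instance with optical flow) before comparing frames.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # grayscale frame: rows of intensities in [0, 1]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two same-sized frames."""
    total, count = 0.0, 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def flicker_score(frames: List[Frame]) -> float:
    """Average change between consecutive frames; lower means steadier.

    Naive: real motion also raises the score, so this is only a rough proxy.
    """
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = [mean_abs_diff(f0, f1) for f0, f1 in zip(frames, frames[1:])]
    return sum(diffs) / len(diffs)


steady = [[[0.5, 0.5], [0.5, 0.5]]] * 3
jittery = [[[0.4, 0.4]], [[0.6, 0.6]], [[0.4, 0.4]]]
print(flicker_score(steady))              # 0.0
print(round(flicker_score(jittery), 3))   # 0.2
```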

Breaking the Temporal Consistency Barrier

The biggest hurdle in AI video has always been the model’s “memory.” How does the AI remember that a character’s jacket was red in frame 1 when it is rendering frame 240? The “BLIND” release appears to lean heavily on LoRA (Low-Rank Adaptation). By training a small set of low-rank weight updates on the specific visual identity of the artist and the environment, while leaving the base model frozen, the production team could keep the “digital twin” consistent throughout the narrative.
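In LoRA, a frozen weight matrix W (d × k) is adapted as W' = W + (α/r)·BA, where B is d × r, A is r × k, and the rank r is small. The sketch below shows the merge step and why the adapter is cheap to train. The helper names are hypothetical, and plain Python lists stand in for a real tensor library.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive matrix product of an (m x n) and an (n x p) matrix."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def lora_merge(w: Matrix, b_mat: Matrix, a_mat: Matrix, alpha: float) -> Matrix:
    """Return W + (alpha / r) * B @ A, where B is d x r and A is r x k.

    During training W stays frozen and only A and B are updated; B is usually
    zero-initialized so the merged weights start out equal to W.
    """
    r = len(a_mat)
    scale = alpha / r
    delta = matmul(b_mat, a_mat)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def lora_param_count(d: int, k: int, r: int) -> Tuple[int, int]:
    """(parameters in the full d x k matrix, trainable parameters in the rank-r adapter)."""
    return d * k, r * (d + k)


print(lora_merge([[1.0, 0.0], [0.0, 1.0]], [[1.0], [2.0]], [[3.0, 4.0]], alpha=1.0))
# [[4.0, 4.0], [6.0, 9.0]]
print(lora_param_count(4096, 4096, 8))  # (16777216, 65536)
```

For a 4096 × 4096 projection at rank 8, the adapter trains about 0.4% as many parameters as the full matrix. That is why a per-artist identity adapter is practical to train and to swap in and out.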

“The transition from generative snapshots to consistent narrative video is the ‘Netscape moment’ for AI. We are moving from novelty to utility, where the model understands the physics of a scene rather than just the pixels of an image.” — Andrej Karpathy, AI Researcher (via historical analysis of LLM trajectory).

This evolution is closely tied to the rise of the NPU (Neural Processing Unit) in consumer hardware. While the heavy lifting happens in the cloud, the “last mile” of refinement (upscaling, frame interpolation, and color grading) increasingly runs on the user’s device. The result is a division of labor between large cloud-hosted generative models and the NPU-equipped silicon, often ARM-based, in our laptops and phones.
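Frame interpolation is one of those “last mile” jobs. The simplest possible version, shown below as a hypothetical sketch, cross-fades neighboring frames to double the frame rate. Practical on-device interpolators estimate motion instead, because a plain cross-fade produces ghosting on anything that moves.

```python
from typing import List

Frame = List[List[float]]


def blend_frames(f0: Frame, f1: Frame, t: float) -> Frame:
    """Linear cross-fade between two frames at time t in [0, 1]."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return [[(1.0 - t) * a + t * b for a, b in zip(r0, r1)] for r0, r1 in zip(f0, f1)]


def double_frame_rate(frames: List[Frame]) -> List[Frame]:
    """Insert one blended frame between each pair: n frames become 2n - 1."""
    if not frames:
        return []
    out: List[Frame] = []
    for f0, f1 in zip(frames, frames[1:]):
        out.append(f0)
        out.append(blend_frames(f0, f1, 0.5))
    out.append(frames[-1])
    return out


print(double_frame_rate([[[0.0]], [[1.0]]]))  # [[[0.0]], [[0.5]], [[1.0]]]
```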

The Ecosystem War: YouTube vs. The Synthetic Wave

This release doesn’t exist in a vacuum. It lands in the middle of a brutal war over platform lock-in. YouTube is currently fighting to keep creators from migrating to decentralized, AI-native platforms that offer direct tokenization of content. By hosting “BLIND,” YouTube is signaling its willingness to integrate synthetic media into its core algorithm, provided the metadata is transparent.

However, the cybersecurity implications are non-trivial. As generative video becomes indistinguishable from reality, the risk of high-fidelity deepfakes increases. The industry is scrambling to implement C2PA (Coalition for Content Provenance and Authenticity) standards. If “BLIND” includes a cryptographically signed manifest, it sets a precedent for all future AI art. If it doesn’t, it contributes to the growing “trust deficit” in digital media.
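At its core, a signed provenance manifest binds a hash of the media to a set of claims and signs both. The sketch below illustrates only that idea. It is not the C2PA manifest format, and it swaps in a symmetric HMAC for the certificate-based public-key signatures C2PA actually uses, so anyone holding the key could forge a signature. The claim fields are hypothetical.

```python
import hashlib
import hmac
import json


def build_manifest(video_bytes: bytes, claims: dict) -> dict:
    """Bind claims (e.g. 'AI-generated') to a SHA-256 hash of the media."""
    return {"content_sha256": hashlib.sha256(video_bytes).hexdigest(), "claims": claims}


def sign_manifest(manifest: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding (stand-in for a real signature)."""
    payload = json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(video_bytes: bytes, manifest: dict, signature: str, key: bytes) -> bool:
    """True only if the media is unchanged and the manifest is unaltered."""
    if hashlib.sha256(video_bytes).hexdigest() != manifest["content_sha256"]:
        return False  # media was edited after signing
    return hmac.compare_digest(sign_manifest(manifest, key), signature)


key = b"demo-key"  # hypothetical shared secret
video = b"placeholder video bytes"
manifest = build_manifest(video, {"ai_generated": True, "tool": "hypothetical-model"})
signature = sign_manifest(manifest, key)
print(verify(video, manifest, signature, key))         # True
print(verify(video + b"x", manifest, signature, key))  # False
```

Editing either the pixels or the claims breaks verification, which is the property that makes a manifest useful against the “trust deficit.”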

The relationship between these platforms and the open-source community is equally strained. Much of the tech used in videos like this originates in open-source repositories on GitHub, only to be polished and locked behind the proprietary walls of Big Tech. This “open-core” tension is what drives the rapid iteration we see in the AI space.

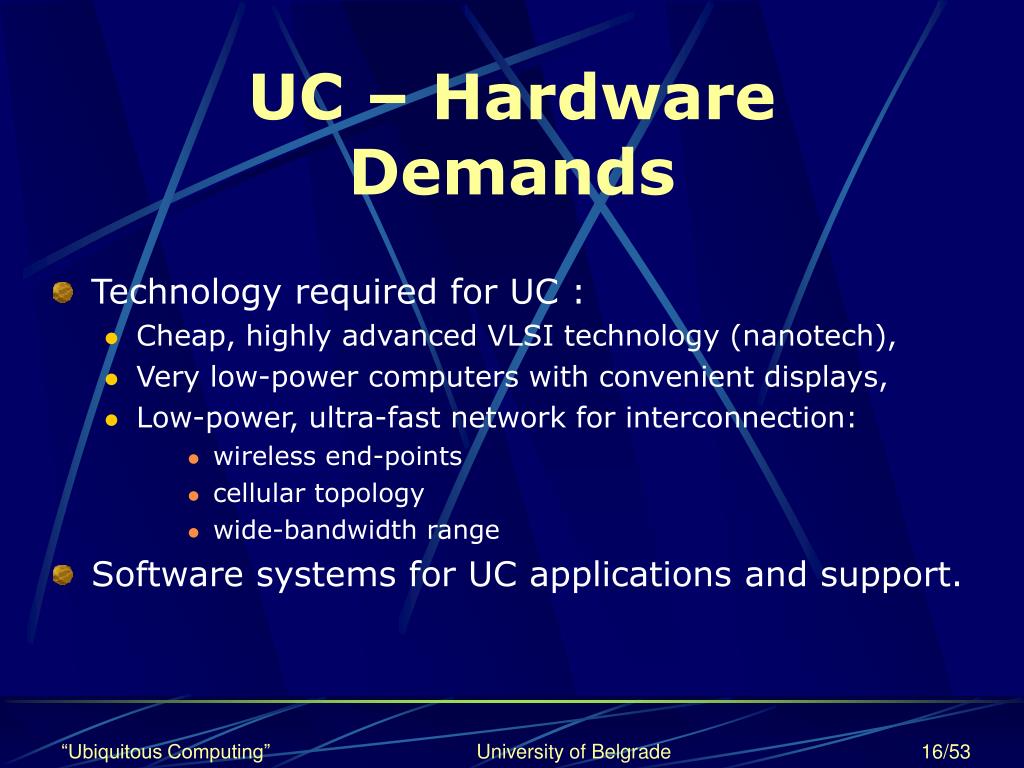

Hardware Demands: The Computational Cost of Art

To illustrate the gap between traditional production and the AI-native approach used in the current era, consider the following resource allocation:

| Metric | Traditional CGI Pipeline | AI-Native Neural Pipeline | Hybrid (The “BLIND” Model) |
|---|---|---|---|
| Render Time | Weeks (Farm-based) | Hours (GPU-intensive) | Days (Iterative) |
| Human Labor | High (Manual Keyframing) | Low (Prompt Engineering) | Medium (Curatorial) |
| Hardware | x86 CPU/GPU Clusters | H100/B200 Tensor Cores | Mixed Cloud/Local NPU |
| Consistency | Absolute (Fixed Assets) | Variable (Stochastic) | High (LoRA-locked) |

This shift represents a fundamental change in the “cost of creativity.” We are moving from a capital-intensive model (studios, equipment, crews) to a compute-intensive model. The “chip wars” aren’t just about server farms; they are about who controls the ability to visualize an idea in real time.

The Takeaway: A New Visual Language

The “BLIND” video is more than a return for an artist; it is a demonstration of Semantic Video Synthesis. We are witnessing the birth of a new visual language where the constraints of physics, budget, and location are replaced by the constraints of compute and imagination.

For the developers and analysts reading this, the lesson is clear: the value is no longer in the ability to generate an image, but in the ability to control the generation. The “Information Gap” is closing. Those who can bridge the divide between raw engineering—understanding IEEE standards for neural networks—and artistic direction will dominate the next decade of media.

Welcome back to the era of the synthetic. Just make sure you know what’s real.