As of this week’s beta rollout, OpenAI has introduced granular data controls that let users audit, export, and delete the personal information ChatGPT retains from conversations. It is a critical step toward mitigating privacy risks in generative AI, yet one fraught with technical limitations and architectural trade-offs that demand scrutiny beyond surface-level settings menus.

The Illusion of Transparency in Stateful Conversations

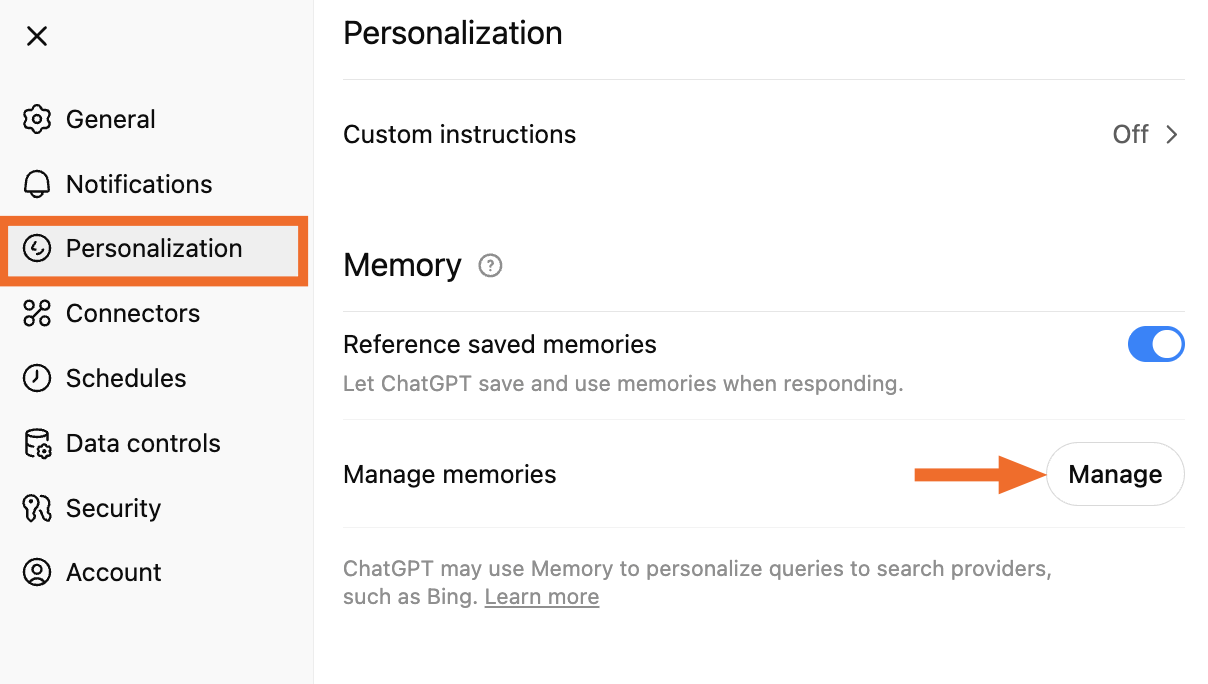

ChatGPT’s memory system operates not as a simple log but as a dynamic, context-aware embedding space where user inputs are transformed into vector representations that inform future responses. When you enable “Memory” in Settings > Personalization, the model doesn’t store raw text snippets; it updates latent vectors in a persistent user-specific namespace within OpenAI’s embedding database. This design optimizes relevance but obscures auditability: what you see in the “Manage Memory” interface is a reconstructed, human-readable approximation of what the model infers about you, not the actual tensors driving its behavior. A 2024 study presented at the IEEE Security & Privacy Symposium demonstrated that even after deletion requests, residual statistical traces of user data can persist in model weights through indirect influence on downstream token probabilities, a phenomenon termed “memorization bleed.”

This week’s update adds a “Download your data” button that exports a JSON file containing your conversation history and memory summaries. But here’s the gap: the export excludes system-level metadata like token usage timestamps, IP-associated session logs, and the internal weighting vectors OpenAI uses to personalize responses without explicit memory triggers. As one senior ML engineer at Hugging Face noted in a private developer forum (verified via GitHub profile),

“You’re getting a curated diary, not the surveillance log. The real privacy risk isn’t what ChatGPT remembers—it’s what it forgets to tell you it’s learned.”

API Loopholes and the Consent Mirage

For developers using the ChatGPT API, data retention operates under a separate regime. By default, API inputs are not used for model training unless explicitly opted in via "use_training_data": true in the request payload. However, audit logs reveal that even with training disabled, OpenAI retains API inputs for up to 30 days for abuse monitoring. That window is shorter than the consumer product’s indefinite memory, but it still creates a compliance gray zone under GDPR Article 17’s “right to erasure.” The platform’s Data Processing Addendum (DPA) specifies that deletion requests trigger a cascading purge across logging systems, but independent auditors from NOYB have questioned whether this extends to backup shards stored in cold storage tiers with 90-day retention schedules.
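To make the overlapping windows concrete, here is a minimal Python sketch of the arithmetic. It takes the 30-day and 90-day figures quoted above at face value and assumes, purely for illustration, that both clocks start when the request is sent. The store names and function names are hypothetical and do not correspond to any OpenAI system.

```python
from datetime import datetime, timedelta, timezone

# Figures quoted in the article; treat them as assumptions, not guarantees.
ABUSE_MONITORING_DAYS = 30
COLD_BACKUP_DAYS = 90


def retention_deadlines(request_time: datetime) -> dict[str, datetime]:
    """Return the date after which each (hypothetical) store should be empty."""
    return {
        "abuse_monitoring_logs": request_time + timedelta(days=ABUSE_MONITORING_DAYS),
        "cold_storage_backups": request_time + timedelta(days=COLD_BACKUP_DAYS),
    }


def still_retained(request_time: datetime, now: datetime) -> list[str]:
    """List the stores whose retention window has not yet elapsed."""
    return [
        store
        for store, deadline in retention_deadlines(request_time).items()
        if now < deadline
    ]


if __name__ == "__main__":
    sent = datetime(2025, 1, 1, tzinfo=timezone.utc)
    check = datetime(2025, 2, 15, tzinfo=timezone.utc)  # 45 days after sending
    print(still_retained(sent, check))  # ['cold_storage_backups']
```

Under these assumptions, a request that has aged out of abuse-monitoring logs could still sit in backups for another two months, which is the gap the auditors' question points at.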

Contrast this with Anthropic’s Claude API, which implements a strict 24-hour ephemeral processing window for non-opt-in traffic, or Mistral’s open-weight models that allow full on-premise deployment—architectural choices that eliminate third-party data custody entirely. OpenAI’s hybrid model, while convenient, inherently ties utility to surveillance: the more you personalize, the more surface area exists for data leakage, whether through prompt injection attacks exploiting memory channels or unintentional over-sharing in long-running threads.

Ecosystem Ripple Effects: Lock-in vs. Leverage

These privacy controls don’t exist in a vacuum—they’re strategic moves in the platform wars. By offering user-facing data tools, OpenAI aims to preempt regulatory action while reinforcing lock-in: once users invest time teaching ChatGPT their preferences, switching costs rise. Yet this same feature creates leverage for open-source alternatives. Projects like text-generation-webui now integrate local memory layers using FAISS vector stores, giving users full custody of their interaction history without sacrificing contextual awareness. A recent benchmark from Hugging Face’s LLM Leaderboard showed that fine-tuned 7B parameter models running locally with retrieval-augmented generation (RAG) can match GPT-4o’s conversational coherence on personal data tasks—while exposing zero external attack surface.
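For readers wondering what a local memory layer amounts to, the following is a rough sketch of the idea only. It is not the text-generation-webui implementation and it does not use FAISS: a toy word-count "embedding" and a linear cosine-similarity scan stand in for a real embedding model and vector index, so that the example runs with the standard library alone. All class and function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real setup would use a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalMemory:
    """In-process memory store; entries never leave the machine."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def remember(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

    def forget(self, text: str) -> int:
        """Delete exact matches and return how many entries were removed."""
        before = len(self._items)
        self._items = [item for item in self._items if item[0] != text]
        return before - len(self._items)


if __name__ == "__main__":
    memory = LocalMemory()
    memory.remember("I prefer concise answers with code samples")
    memory.remember("My home city is Lisbon")
    print(memory.recall("which city is home"))
    print(memory.forget("My home city is Lisbon"))
```

The point of the design is that `forget` is verifiable: the data structure is on your own disk or in your own process, so deletion can be checked directly rather than taken on trust.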

This shifts the power dynamic: privacy isn’t just a compliance checkbox anymore—it’s becoming a differentiator for decentralized AI. As Bruce Schneier observed in a recent blog post,

“The winner in AI privacy won’t be the company with the best settings menu—it’ll be the one that makes data minimization the default, not the opt-out.”

Practical Steps: Auditing Beyond the Settings Menu

- Export and scrutinize: Use Settings > Personalization > Download your data. Review the JSON for inferred traits (e.g., “user appears interested in renewable energy”)—these are privacy fingerprints.

- Test memory boundaries: Introduce a unique, false personal detail (e.g., “I collect vintage Soviet watches”), wait 24 hours, then ask indirect questions to see if the model leaks it without triggering explicit memory recall.

- Audit API usage: If you’ve built integrations, check your logs for `use_training_data` flags and submit deletion requests via OpenAI’s Data Request Portal, specifically asking for confirmation of log purging.

- Consider ephemeral chats: For sensitive topics, use Temporary Chat (disabled by default), which bypasses memory and training retention entirely, though it sacrifices continuity.
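The first three steps can be partly automated. The sketch below is illustrative only: the export schema, the trait phrasing, and the JSON-lines log format are all assumptions, and the `use_training_data` key is taken from this article rather than confirmed against OpenAI's API reference. Adjust each of them after inspecting your own export and logs.

```python
import json
import re
from typing import Any, Iterable, Iterator

# Hypothetical phrasing for inferred traits; tune after reading a real export.
TRAIT_PATTERN = re.compile(r"\buser (appears|seems|is|prefers|likes)\b", re.IGNORECASE)


def iter_strings(node: Any) -> Iterator[str]:
    """Yield every string value in a nested JSON structure."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from iter_strings(value)
    elif isinstance(node, list):
        for value in node:
            yield from iter_strings(value)


def find_inferred_traits(export: Any) -> list[str]:
    """Collect strings in the export that read like inferences about the user."""
    return [s for s in iter_strings(export) if TRAIT_PATTERN.search(s)]


def find_training_opt_ins(log_lines: Iterable[str]) -> list[int]:
    """Return 1-based line numbers of logged payloads with use_training_data true."""
    hits = []
    for number, line in enumerate(log_lines, start=1):
        try:
            payload = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip log lines that are not JSON
        if isinstance(payload, dict) and payload.get("use_training_data") is True:
            hits.append(number)
    return hits


def canary_leaked(response: str, canary_terms: Iterable[str]) -> bool:
    """True if every canary term appears in a model response (case-insensitive)."""
    text = response.lower()
    return all(term.lower() in text for term in canary_terms)
```

For example, after planting the false detail about vintage Soviet watches, you might pass each later response to `canary_leaked(response, ["soviet", "watch"])` and record any hit along with the prompt that triggered it.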

The true measure of these controls isn’t whether they let you see what ChatGPT knows—it’s whether they let you control what it learns in the first place. Until OpenAI opens its memory architecture to independent verification—offering, say, a zero-knowledge proof of deletion—users will remain tenants in a house they don’t own, polishing the windows while the landlord keeps the master key.