Moonshot AI, a premier Chinese LLM developer, has secured $2 billion in fresh capital, driving its valuation to a staggering $20 billion. The firm is scaling its Kimi chatbot, leveraging an industry-leading long-context window to disrupt the generative AI landscape and challenge the dominance of Western incumbents in the high-stakes race for cognitive computing.

This isn’t just another venture capital windfall. It is a strategic signal. In a geopolitical climate where the “chip wars” have turned high-end GPUs into the new oil, Moonshot is attempting to pivot the battleground from raw compute power to architectural efficiency.

While the industry has spent the last two years obsessed with parameter scaling—the “bigger is better” philosophy—Moonshot is doubling down on the context window. For the uninitiated, the context window is the amount of data a model can “keep in mind” during a single session. Most models suffer from “lost in the middle” syndrome, where they forget information buried in the center of a long prompt. Moonshot’s Kimi is designed to ingest millions of tokens without the typical cognitive decay.

The Engineering Behind the Long-Context Bet

To achieve this, Moonshot isn’t just throwing more H100s at the problem; they are optimizing the KV (Key-Value) cache. In standard Transformer architectures, the memory requirement for the KV cache grows linearly with the sequence length, while the compute cost of full attention grows quadratically. At millions of tokens, the cache alone can exceed the memory of a single accelerator, whether GPU or NPU (Neural Processing Unit), which limits batch sizes and drives up latency.
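The linear growth of the KV cache can be put in rough numbers. The sketch below uses the standard sizing formula (two tensors per layer, one for keys and one for values); the layer count, head count, head dimension, and 16-bit precision are hypothetical values chosen for illustration, not Kimi's actual configuration.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2, batch_size=1):
    """Bytes needed to cache keys and values for `seq_len` tokens."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Hypothetical model shape (an assumption, not a published Kimi spec).
CONFIG = dict(n_layers=32, n_kv_heads=8, head_dim=128)

for seq_len in (128_000, 2_000_000):
    gib = kv_cache_bytes(seq_len, **CONFIG) / 2**30
    print(f"{seq_len:>9,} tokens -> {gib:,.1f} GiB of KV cache")
```

Under these assumptions each token costs 128 KiB of cache, so a 2-million-token context needs roughly 244 GiB for a single request, far more than one accelerator holds. That is why cache compression, eviction, or sparsity becomes unavoidable at this scale.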

Moonshot appears to be implementing a variation of sparse attention and potentially a Mixture-of-Experts (MoE) architecture. The two techniques address different costs. Sparse attention limits how many earlier tokens each token attends to, which cuts attention cost at long sequence lengths. MoE activates only a fraction of the model’s parameters for any given token, which reduces the FLOPs (floating-point operations) required per token. Together, they would allow Kimi to handle massive documents, such as entire codebases or legal libraries, without exhausting the inference server.
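To make the "fraction of the parameters" idea concrete, here is a minimal sketch of generic top-k expert routing, the mechanism most MoE designs share. It is not Moonshot's implementation; the expert count, the value of k, and the random router scores are all assumptions for illustration.

```python
import math
import random


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    # Softmax over the chosen experts only, so their weights sum to 1.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Hypothetical sizes: 8 experts, 2 active per token.
N_EXPERTS, TOP_K = 8, 2

random.seed(0)
logits = [random.gauss(0, 1) for _ in range(N_EXPERTS)]  # stand-in router scores
routes = top_k_route(logits, TOP_K)

for expert, weight in routes:
    print(f"expert {expert}: weight {weight:.3f}")
print(f"expert parameters touched per token: {TOP_K / N_EXPERTS:.0%}")
```

Only the selected experts run their feed-forward computation for that token, so per-token compute scales with k rather than with the total number of experts. Note that this saves FLOPs, not KV-cache memory; the long-context memory problem still has to be handled on the attention side.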

It’s a lean approach to intelligence.

However, the real challenge is the hardware. With US export controls restricting access to the latest NVIDIA Blackwell chips, Moonshot is operating in a compute-starved environment. This forces a level of engineering discipline rarely seen in the “burn-rate” culture of Silicon Valley. They are optimizing for the hardware they have, likely utilizing a hybrid of legacy A100s and domestic accelerators like the Huawei Ascend series.

The 30-Second Verdict: Why This Matters for Enterprise IT

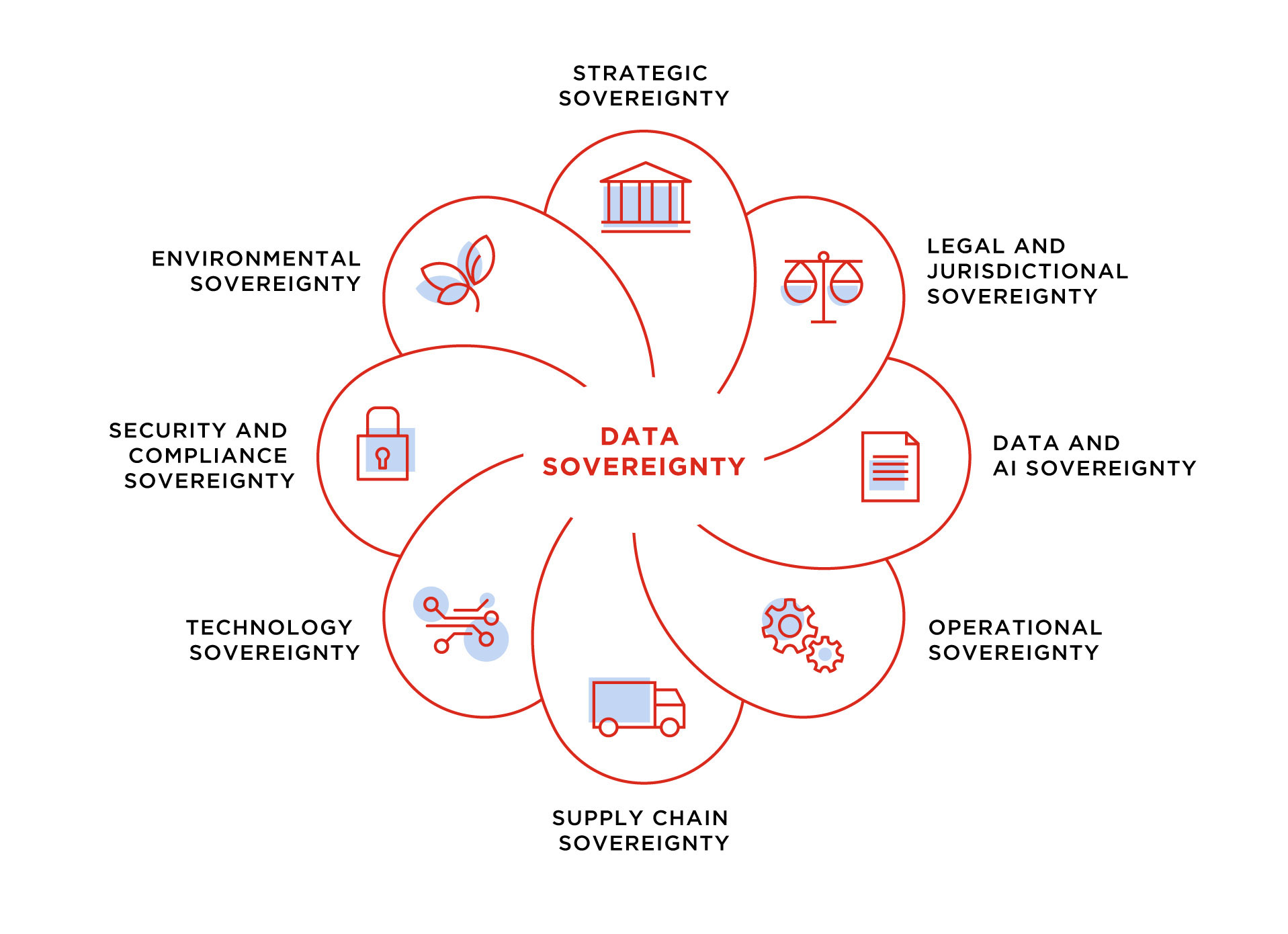

- Data Sovereignty: Moonshot provides a viable, high-performance alternative for firms that cannot migrate data to US-based clouds.

- RAG Obsolescence: By expanding the native context window, Moonshot reduces the reliance on complex Retrieval-Augmented Generation (RAG) pipelines.

- API Competition: Expect a price war in token pricing as Moonshot attempts to undercut OpenAI and Anthropic to capture the Asian market.

The Geopolitical Compute Paradox

The $20 billion valuation is a bet on China’s ability to innovate around sanctions. If Moonshot can prove that architectural elegance can compensate for a lack of top-tier silicon, the entire logic of the US “chip blockade” shifts.

“The focus on long-context windows is a brilliant strategic pivot. When you can’t out-compute your opponent in raw training flops, you out-engineer them in inference efficiency and utility. Moonshot is essentially building a more efficient engine for a car that has a limited fuel supply.”

This shift impacts the open-source community significantly. We are seeing a divergence between the “dense” models favored by Meta’s Llama series and the “sparse/long-context” models emerging from the East. This creates a fragmented ecosystem where developers must choose their stack based on whether they prioritize general reasoning or massive data ingestion.

The risk? Training-data bias. The training sets for Kimi are inherently tied to the Chinese digital ecosystem, creating a linguistic and cultural bias that may limit its global adoption but solidify its lock-in within the domestic market.

Competitive Benchmarking: Context vs. Reasoning

To understand where Moonshot sits in the 2026 hierarchy, we have to look at the trade-offs between context window size and actual reasoning capabilities (MMLU scores). While GPT-4o and Claude 3.5 remain the gold standard for complex logical reasoning, Kimi is carving out a niche in “needle-in-a-haystack” retrieval.

| Metric | Moonshot (Kimi) | GPT-4o (Standard) | Claude 3.5 (Sonnet) |
|---|---|---|---|
| Effective Context Window | 2M+ Tokens | 128K Tokens | 200K Tokens |
| Inference Latency | Moderate (Optimized) | Low | Low |
| Primary Strength | Long-form Analysis | Multimodal Reasoning | Coding/Nuance |
| Hardware Dependency | Hybrid/Domestic | NVIDIA H100/B200 | NVIDIA H100/B200 |
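For readers unfamiliar with the "needle-in-a-haystack" retrieval mentioned above, the test plants one distinctive fact at varying depths in a long filler document and checks whether the model can recall it. The sketch below shows the general shape of such a harness. The `ask_model` callable is a placeholder for a real API call, and `truncating_model` is a toy stand-in that only "reads" the tail of its prompt; neither reflects any vendor's actual interface.

```python
def build_haystack_prompt(filler_sentence, needle, n_sentences, depth):
    """Place `needle` at a relative depth (0.0 = start, 1.0 = end) in filler text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences = [filler_sentence] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def retrieval_score(ask_model, question, expected, prompts):
    """Fraction of prompts for which the model's answer contains `expected`."""
    hits = sum(expected in ask_model(f"{p}\n\n{question}") for p in prompts)
    return hits / len(prompts)


NEEDLE = "The vault code is 7421."
DEPTHS = (0.0, 0.25, 0.5, 0.75, 1.0)
prompts = [
    build_haystack_prompt("The sky was grey that day.", NEEDLE, 1000, d)
    for d in DEPTHS
]


def truncating_model(prompt):
    """Toy stand-in for a model with a short effective context."""
    window = prompt[-2000:]  # only the last 2,000 characters are "seen"
    return "7421" if "7421" in window else "unknown"


score = retrieval_score(truncating_model, "What is the vault code?", "7421", prompts)
print(f"retrieval score: {score:.0%}")
```

The toy model only finds the needle when it sits near the end of the prompt. A real evaluation would swap in an actual model call and sweep both depth and total document length, which is the kind of test a multi-million-token claim needs to pass.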

The “Great Firewall” of Innovation

Moonshot isn’t operating in a vacuum. It is competing with Baidu’s Ernie and Alibaba’s Qwen. But while the giants are bogged down by legacy corporate structures, Moonshot is acting like a pure-play AI lab. This agility allows them to iterate on their API capabilities faster than the conglomerates.

For developers, the appeal is simple: lower latency for massive inputs. If you are building an AI tool to analyze 500-page regulatory filings, a 128K window is a nuisance. A 2-million-token window is a superpower.
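A back-of-the-envelope calculation shows why. The sketch below estimates the token count of a 500-page filing and how many separate prompts each window size would require. The words-per-page and tokens-per-word figures are rough assumptions (real values vary by document and tokenizer), as is the number of tokens reserved for instructions and the model's answer.

```python
import math


def estimate_tokens(pages, words_per_page=500, tokens_per_word=1.3):
    """Rough token estimate; both ratios are assumptions, not measured values."""
    return round(pages * words_per_page * tokens_per_word)


def chunks_needed(total_tokens, context_window, reserved_tokens=4_000):
    """Number of separate prompts needed to cover the whole document."""
    usable = context_window - reserved_tokens  # leave room for question and answer
    if usable <= 0:
        raise ValueError("context window is smaller than the reserved budget")
    return math.ceil(total_tokens / usable)


filing_tokens = estimate_tokens(500)
print(f"estimated filing size: {filing_tokens:,} tokens")

for window in (128_000, 2_000_000):
    n = chunks_needed(filing_tokens, window)
    print(f"{window:>9,}-token window -> {n} prompt(s)")
```

Under these assumptions the filing comes to about 325,000 tokens: three separate prompts with a 128K window, which means chunking logic and cross-chunk stitching, versus a single prompt with a 2-million-token window.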

But let’s be clear: the valuation is inflated. A $20 billion price tag in a market where compute is a weaponized resource is speculative. Moonshot is essentially being valued as a sovereign utility, not just a software company.

The next six months will be telling. As they roll out this week’s latest beta updates, the industry will be watching for one thing: does the reasoning hold up as the context grows, or is Kimi just a remarkably expensive search engine with a chat interface?

Final Takeaway: The Shift to Efficiency

The Moonshot funding round confirms that the “Brute Force Era” of AI is ending. We are entering the “Efficiency Era.” Whether you are a CTO in San Francisco or a developer in Shenzhen, the goal is no longer just about who has the most GPUs, but who can do the most with the fewest. Moonshot is betting the house on that thesis.

For more on the underlying mathematics of attention, check the latest research on IEEE Xplore or explore the latest LLM optimization frameworks on GitHub.