Anthropic has transitioned Claude Code from a terminal-based assistant to a comprehensive orchestration hub with the launch of its redesigned desktop app and “Routines” research preview. Released mid-April 2026, these updates shift the developer’s role from writing line-by-line code to managing autonomous, cloud-executed agentic workflows for the enterprise.

The industry is currently obsessed with “copilots,” but Anthropic is pivoting toward “workforce” architecture. For years, we’ve seen LLMs act as sophisticated autocomplete engines living inside the IDE. This latest iteration acknowledges a fundamental shift in agentic work: the move from single-threaded conversations to high-concurrency orchestration. We are no longer just asking a bot to fix a function; we are deploying a fleet of agents to refactor a legacy codebase, triage a Linear backlog, and verify CI/CD pipelines—all simultaneously.

The Orchestration Layer: Mission Control vs. The Terminal

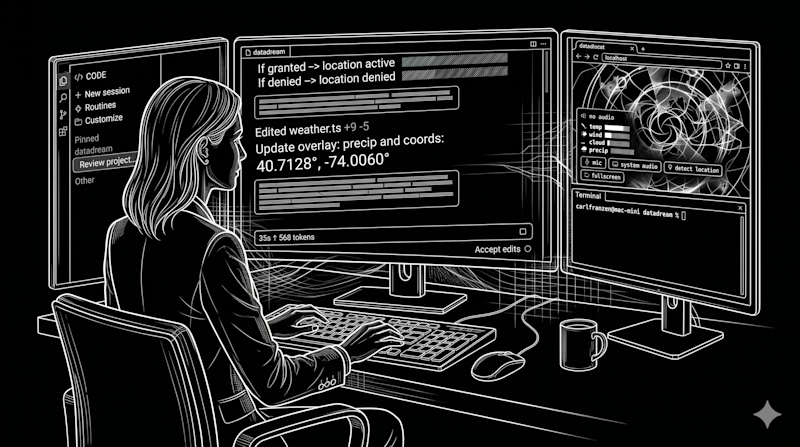

The new desktop GUI is essentially a “Mission Control” for your codebase. The central innovation here is the sidebar, which allows developers to monitor multiple active sessions across disparate repositories. In a standard CLI environment, managing four different AI agents is a cognitive nightmare of tab-switching and context-loss. The GUI solves this by providing a drag-and-drop grid where the terminal, preview pane, and diff viewer coexist.

One standout feature is the “Side Chat” (⌘ + ;). This allows a developer to ask clarifying questions, essentially a “meta-conversation,” without polluting the primary task’s prompt history. In long-running agentic sessions, maintaining a clean context window is everything. By separating the “how” from the “do,” Anthropic reduces the risk of the agent drifting off-task due to conversational noise.

Still, the “walled garden” effect is palpable. While the CLI remains a flexible, agnostic tool, the desktop app is strictly optimized for Anthropic’s ecosystem. For engineers who utilize a poly-model strategy—switching between Claude for architectural reasoning and GPT-4o or open-source Llama 3 variants to bypass rate limits—the GUI creates a friction point. The terminal remains the only place where a diverse, resilient AI stack can truly live.

The 30-Second Verdict: GUI vs. CLI

- Desktop GUI: Superior for “Review and Ship” phases, high-concurrency visibility, and visual diffing of large changesets.

- Terminal CLI: Essential for raw speed, shell-based automation, and model-agnostic workflows.

- The Friction: Integrated terminal latency in the GUI remains a bottleneck for those used to native zsh or bash responsiveness.

Routines: Decoupling Execution from Hardware

The introduction of “Routines” is the most significant architectural shift in this release. Previously, AI automation was tethered to the user’s local machine—if your laptop closed, the agent stopped. Routines move the execution to Anthropic’s web infrastructure. This effectively turns Claude into a headless service that operates on a schedule, regardless of your hardware state.

For an enterprise, this means the AI is no longer a tool you use, but a process you deploy. The infrastructure is split into three distinct integration paths:

| Routine Type | Trigger Mechanism | Enterprise Use Case |
|---|---|---|
| Scheduled | Cron-style cadence | Nightly docs-drift scanning; automated backlog grooming. |
| API | HTTP Requests / Auth Tokens | Triggering Claude via Datadog alerts or CI/CD failures. |
| Webhook | Repository Events (GitHub) | Automatically addressing PR comments or resolving linting errors. |
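To make the three paths concrete, here is a minimal sketch of how a team might model them on its own side before wiring anything up. Every name in it (`Routine`, `Trigger`, `routines_for`, the cron string, the endpoint path) is a hypothetical illustration and not Anthropic's actual configuration format or API.

```python
from dataclasses import dataclass
from enum import Enum


class Trigger(Enum):
    SCHEDULED = "scheduled"  # cron-style cadence
    API = "api"              # authenticated HTTP request
    WEBHOOK = "webhook"      # repository event, e.g. from GitHub


@dataclass(frozen=True)
class Routine:
    name: str
    trigger: Trigger
    source: str  # cron expression, endpoint path, or event name (all hypothetical)
    prompt: str


def routines_for(trigger: Trigger, source: str, registry: list[Routine]) -> list[Routine]:
    """Return the routines whose trigger type and source match an incoming event."""
    return [r for r in registry if r.trigger is trigger and r.source == source]


# Hypothetical registry mirroring the use cases in the table above.
REGISTRY = [
    Routine("docs-drift", Trigger.SCHEDULED, "0 2 * * *", "Scan docs for drift against the code."),
    Routine("ci-triage", Trigger.API, "/hooks/ci-failure", "Investigate the failing pipeline."),
    Routine("pr-comments", Trigger.WEBHOOK, "pull_request_review_comment", "Address the review comment."),
]

matched = routines_for(Trigger.WEBHOOK, "pull_request_review_comment", REGISTRY)
print([r.name for r in matched])
```

Keeping the trigger type explicit in the data model makes it easier to audit which routines can be started by outside events and which only run on a schedule.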

The pricing and limit structure is tiered, with Enterprise users capped at 25 routines per day. While this seems restrictive for a massive organization, it reflects the immense compute cost of maintaining long-running, stateful agentic sessions in the cloud.
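With a hard daily cap, teams will likely want to budget runs on their own side rather than discover the limit at 3:00 AM. The sketch below is one way to do that; `DailyBudget` is a hypothetical helper, and the assumption that the cap resets per calendar day is mine, not something the announcement confirms.

```python
from datetime import date
from typing import Optional


class DailyBudget:
    """Client-side counter for a per-day run cap (reset rule is an assumption)."""

    def __init__(self, limit: int = 25) -> None:
        self.limit = limit
        self.day: Optional[date] = None
        self.used = 0

    def try_consume(self, today: date) -> bool:
        """Record one run if budget remains for `today`; return whether it was allowed."""
        if today != self.day:
            # New day: start counting from zero again.
            self.day, self.used = today, 0
        if self.used >= self.limit:
            return False
        self.used += 1
        return True


budget = DailyBudget(limit=25)
allowed = [budget.try_consume(date(2026, 4, 20)) for _ in range(26)]
print(allowed.count(True), allowed.count(False))
```

A gate like this also gives a natural place to prioritize: low-value triggers can be dropped once the remaining budget falls below a threshold.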

The Security Gap: Agentic Autonomy and the Attack Surface

From a cybersecurity perspective, moving execution from a local CLI to cloud-based “Routines” changes the threat model. We are moving from a “Human-in-the-Loop” (HITL) model to a “Human-on-the-Loop” (HOTL) model. When an agent can autonomously listen to a GitHub webhook and push code to a repository at 3:00 AM, the risk of “prompt injection” or “agent hijacking” becomes a critical enterprise concern.

“The shift toward autonomous AI routines in the dev cycle creates a new vector for supply chain attacks. If an attacker can influence a PR comment that triggers an automated Routine, they aren’t just fooling a human; they are potentially manipulating an agent with write-access to the master branch.”
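One partial mitigation is to gate the trigger itself. The sketch below assumes a self-hosted relay that sits between GitHub and whatever starts the Routine: it checks GitHub's `X-Hub-Signature-256` HMAC header against the raw request body, then only proceeds if the comment author is on an allowlist. The helper names, the secret, and the allowlist are hypothetical, and this narrows who can trigger a run without doing anything about malicious instructions inside a trusted author's comment.

```python
import hashlib
import hmac
import json


def signature_is_valid(secret: bytes, body: bytes, header: str) -> bool:
    """Compare a GitHub-style 'sha256=<hex>' signature header to the raw body's HMAC."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison on bytes avoids timing leaks.
    return hmac.compare_digest(expected.encode(), header.encode())


def should_trigger(secret: bytes, body: bytes, header: str, trusted_authors: set[str]) -> bool:
    """Allow a run only for authentic payloads whose comment author is allowlisted."""
    if not signature_is_valid(secret, body, header):
        return False
    payload = json.loads(body)
    author = payload.get("comment", {}).get("user", {}).get("login")
    return author in trusted_authors


SECRET = b"demo-secret"  # hypothetical; load from a secret store in practice
body = json.dumps({"comment": {"user": {"login": "alice"}, "body": "please fix lint"}}).encode()
header = "sha256=" + hmac.new(SECRET, body, hashlib.sha256).hexdigest()
print(should_trigger(SECRET, body, header, {"alice"}))
```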

This is particularly dangerous when combined with the “vibe coding” trend, where developers rely more on the visual “preview pane” and less on rigorous manual code audits. The integrated preview pane in the Claude desktop app is a productivity win, but it encourages a “looks good to me” (LGTM) culture that can overlook subtle logic bombs or security regressions.

Bridging the Ecosystem: The End of the Solo Coder

This update signals the end of the “solo practitioner” era. The developer is evolving into an orchestrator. By integrating an in-app file editor and a high-performance diff viewer, Anthropic is positioning Claude Code not as a plugin for an IDE, but as a replacement for the traditional development workflow for certain classes of work.

This creates a massive platform lock-in risk. Once an enterprise integrates their Linear backlog and GitHub webhooks into Anthropic’s proprietary Routine infrastructure, the cost of switching to a competitor becomes astronomical. We are seeing the “SaaS-ification” of the compiler and the debugger.

What This Means for Enterprise IT

The transition to agentic workflows requires a new set of guardrails. IT departments must move beyond simple API key management and start implementing “Agent Permissions” frameworks. The fact that the desktop app requires specific subfolder access—rather than the broad user-folder access of the CLI—is a step in the right direction for the principle of least privilege, but it’s a surface-level fix for a deeper architectural challenge.
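As a rough illustration of what a least-privilege check looks like at the file level, the sketch below resolves a requested path and allows it only if it falls inside an approved subfolder. `is_allowed` and the example roots are hypothetical; this is not how the desktop app enforces its folder access, only the kind of rule an “Agent Permissions” layer might start from.

```python
from pathlib import Path


def is_allowed(target: str, allowed_roots: list[Path]) -> bool:
    """Return True only if `target` resolves to a location inside an allowed root."""
    # resolve() normalizes '..' segments and follows symlinks, so a path like
    # 'src/../../secrets' cannot escape the allowed root by traversal.
    resolved = Path(target).resolve()
    return any(resolved.is_relative_to(root.resolve()) for root in allowed_roots)


# Hypothetical grant: the agent may touch only this one subfolder.
ROOTS = [Path("/srv/repos/app/src")]

print(is_allowed("/srv/repos/app/src/main.py", ROOTS))
print(is_allowed("/srv/repos/app/src/../../secrets/key", ROOTS))
```

`Path.is_relative_to` requires Python 3.9 or later. A real framework would also need to cover network access, credentials, and which branches an agent may push to.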

The redesigned Claude Code is a bet on the future of “Parallel Work.” If you are a lead engineer managing a fleet of contributors, the GUI is your new cockpit. If you are a hardcore tinkerer who lives in the shell, the CLI remains your sanctuary. But for the enterprise, the move toward cloud-executed Routines is the real story: it’s the moment AI stopped being a chatbot and started being an employee.