Global coastal aquifers are facing critical salinization due to systemic over-extraction and rising sea levels, threatening drinking water for millions. This crisis is accelerating the deployment of AI-driven hydrological modeling and next-gen desalination hardware to prevent total groundwater collapse in vulnerable urban coastal hubs worldwide.

For the uninitiated, the “water-energy nexus” is no longer a theoretical academic framework; it’s a hard engineering bottleneck. When we talk about coastal groundwater, we aren’t just discussing environmental degradation. We are discussing the failure of a primary infrastructure layer. As saltwater pushes further inland (a process known as saltwater intrusion), the chemical composition of the aquifer shifts, rendering traditional filtration obsolete and turning freshwater reservoirs into brine pits.

It is a systemic crash. And the current “patches” are insufficient.

The Algorithmic Battle Against Saltwater Intrusion

The primary challenge in managing coastal aquifers is visibility. You cannot manage what you cannot measure, and traditional borehole monitoring is essentially “analog” in a digital world. The industry is now pivoting toward high-resolution spatial data and predictive analytics to map the subterranean salt-front in real-time.

We are seeing a massive shift toward the integration of NASA’s GRACE-FO (Gravity Recovery and Climate Experiment Follow-On) data with local LSTM (Long Short-Term Memory) neural networks. By feeding satellite-derived gravity anomalies, which track changes in stored groundwater mass, into these recurrent neural networks, hydrologists can predict saltwater intrusion with a degree of granularity that was impossible five years ago. This isn’t just “big data”; it’s the application of deep sequence modeling to geophysical fluid dynamics.
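For readers who have not met an LSTM before, here is a minimal sketch of the gating mechanism, written as a single-unit cell in plain Python. Everything in it is an assumption for illustration: the weights in `PARAMS` are arbitrary rather than trained, the `ANOMALIES` series is made up rather than real GRACE-FO data, and a production model would use a deep-learning framework with many units and a calibrated output layer.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    """One time step of a single-unit LSTM cell with a scalar input."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate memory
    c = f * c_prev + i * g   # keep part of the old memory, add part of the new
    h = o * math.tanh(c)     # expose a gated view of the memory
    return h, c

def run_sequence(xs, p):
    """Feed a series through the cell and return the final hidden state."""
    h, c = 0.0, 0.0
    for x in xs:
        h, c = lstm_step(x, h, c, p)
    return h

# Hypothetical, untrained weights chosen only so the example runs.
PARAMS = {
    "wf": 0.5, "uf": 0.1, "bf": 1.0,
    "wi": 0.6, "ui": 0.2, "bi": 0.0,
    "wo": 0.4, "uo": 0.3, "bo": 0.0,
    "wg": 0.9, "ug": 0.5, "bg": 0.0,
}

# Made-up normalized storage anomalies, one value per month.
ANOMALIES = [-0.2, -0.4, -0.7, -1.1]

final_state = run_sequence(ANOMALIES, PARAMS)
print(f"final hidden state: {final_state:.4f}")
```

The point of the gates is that the cell can carry a slow trend (a multi-year decline in storage, say) across many time steps while still reacting to a sudden change, which is why this family of models suits hydrological time series.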

But the “information gap” remains: the latency between data acquisition and policy execution. We have the sensors, but the governance layer is still running on legacy software.

“The bottleneck isn’t the sensor resolution anymore; it’s the decision-making latency. We can predict an aquifer’s collapse with 90% accuracy, but the regulatory frameworks to stop over-pumping move at the speed of a 1990s dial-up modem.” — Dr. Aris Thorne, Lead Systems Architect at the Global Water Initiative.

The 30-Second Verdict: Tech Stack for Water Security

- The Hardware: IoT-enabled salinity probes and satellite gravimetry.

- The Software: Predictive ML models (LSTMs) for plume migration mapping.

- The Bottleneck: Energy-intensive desalination and fragmented data silos.

Beyond Reverse Osmosis: The Material Science Pivot

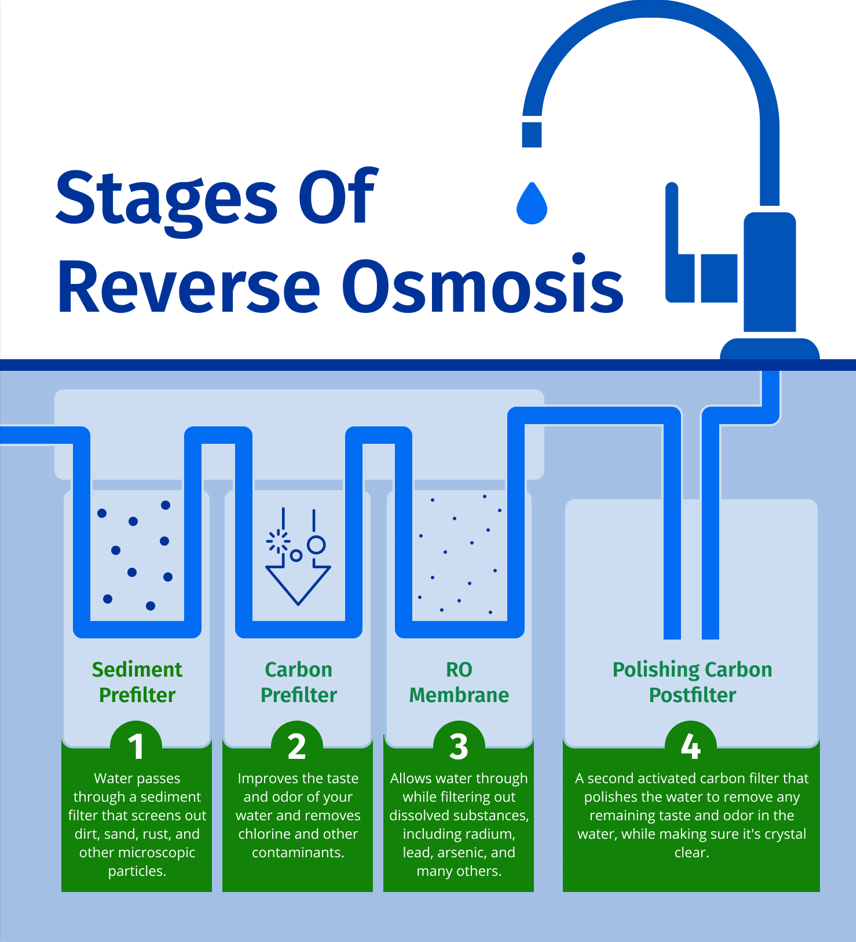

When the aquifer fails, the default fallback is Reverse Osmosis (RO). But RO is an energy hog. The current industry standard relies on thin-film composite (TFC) membranes that are prone to fouling and require massive pressure—and massive electricity—to force water through.

The real innovation happening in the labs right now—the “silicon” of the water world—is graphene-based membranes. By leveraging the atomic precision of IEEE-documented nanostructure engineering, researchers are developing membranes with precisely tuned nanopores. These allow water molecules to pass through with near-zero friction while blocking sodium and chloride ions.

This is a fundamental shift in throughput. We are moving from “brute force” filtration to “selective” molecular gating.

| Metric | Standard TFC Membranes | Next-Gen Graphene Membranes | Impact |
|---|---|---|---|
| Energy Consumption | High (3-5 kWh/m³) | Low (<2 kWh/m³) | Reduced OpEx |
| Permeability/Flux | Moderate | Ultra-High | Higher Volume/Hour |
| Fouling Resistance | Low (Requires Chemical Wash) | High (Hydrophobic Surface) | Lower Maintenance |
| Scalability | Mature/Industrial | Pilot/Scaling | Current Market Gap |
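To put the energy row in concrete terms, here is a back-of-the-envelope sketch using the table's figures. The plant capacity of 100,000 m³ per day is a hypothetical number chosen for round arithmetic, and the graphene case uses the table's 2 kWh/m³ ceiling, so the savings shown are a lower bound under these assumptions rather than a measured result.

```python
def daily_energy_mwh(capacity_m3_per_day: float, kwh_per_m3: float) -> float:
    """Daily electricity demand in MWh for a plant of the given capacity."""
    return capacity_m3_per_day * kwh_per_m3 / 1000.0

CAPACITY_M3_PER_DAY = 100_000  # hypothetical plant size, not from the article

tfc_low = daily_energy_mwh(CAPACITY_M3_PER_DAY, 3.0)           # table: 3 kWh/m³
tfc_high = daily_energy_mwh(CAPACITY_M3_PER_DAY, 5.0)          # table: 5 kWh/m³
graphene_ceiling = daily_energy_mwh(CAPACITY_M3_PER_DAY, 2.0)  # table: <2 kWh/m³

print(f"TFC membranes:      {tfc_low:.0f} to {tfc_high:.0f} MWh/day")
print(f"Graphene membranes: under {graphene_ceiling:.0f} MWh/day")
print(f"Saving:             at least {tfc_low - graphene_ceiling:.0f} "
      f"to {tfc_high - graphene_ceiling:.0f} MWh/day")
```

At this assumed scale, the gap between the two columns is on the order of hundreds of megawatt-hours every day, which is why the membrane question is an energy question first.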

The Data Center Paradox: Cooling vs. Consumption

Here is the irony that the PR releases always ignore: the very AI we are using to solve the water crisis is contributing to it. Hyper-scale data centers, particularly those utilizing liquid cooling systems, are often strategically placed near coastal regions for easier access to cooling infrastructure and undersea cable landings.

These facilities require millions of gallons of water for thermal management. When a data center taps into a coastal aquifer, it doesn’t just take water; it alters the hydrostatic pressure of the entire basin. Pumping lowers the freshwater pressure that holds the denser seawater back, effectively “inviting” the ocean to move inland. It is a classic case of technical debt: solving a compute problem by creating an environmental liability.
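The pressure argument can be made quantitative with the classic Ghyben-Herzberg relation, which estimates the depth of the freshwater-saltwater interface below sea level as z = ρ_f / (ρ_s − ρ_f) × h, where h is the height of the water table above sea level. The sketch below assumes textbook densities of 1000 and 1025 kg/m³, a static sharp interface, and hypothetical water-table heights; real aquifers have a mixing zone and transient behavior that this idealization ignores.

```python
RHO_FRESH = 1000.0  # kg/m³, assumed freshwater density
RHO_SEA = 1025.0    # kg/m³, assumed seawater density

def interface_depth_m(head_m: float,
                      rho_fresh: float = RHO_FRESH,
                      rho_sea: float = RHO_SEA) -> float:
    """Ghyben-Herzberg depth of the salt interface below sea level, in meters.

    head_m is the water-table elevation above sea level.
    """
    if head_m < 0:
        raise ValueError("head_m must be non-negative for this approximation")
    return rho_fresh / (rho_sea - rho_fresh) * head_m

# Hypothetical scenario: pumping lowers the water table from 2.0 m to 1.5 m.
depth_before = interface_depth_m(2.0)
depth_after = interface_depth_m(1.5)
interface_rise = depth_before - depth_after

print(f"interface before pumping: {depth_before:.0f} m below sea level")
print(f"interface after pumping:  {depth_after:.0f} m below sea level")
print(f"salt interface rises by:  {interface_rise:.0f} m")
```

With these densities the ratio is 40 to 1: under this approximation, every meter of water table lost to pumping lets the salt interface rise by roughly 40 meters, which is why a modest drawdown can put a production well in brine.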

The industry is now facing a reckoning. We are seeing a move toward “Water-Positive” certifications, but most of these are marketing veneers. The real solution lies in the adoption of closed-loop cooling and the integration of onsite desalination plants powered by dedicated SMRs (Small Modular Reactors). If Big Tech wants to maintain its coastal footprint, it must decouple its cooling needs from the local groundwater table.

“We cannot treat water as an infinite API call. If the cooling infrastructure for the next generation of NPUs destroys the local aquifer, the resulting social instability will be a far greater risk to the business than any GPU shortage.” — Sarah Chen, CTO of AquaCompute Systems.

The Path Forward: Open-Source Hydrology

To solve a global crisis, we need to stop treating hydrological data as proprietary corporate intelligence. The current trend of “platform lock-in” for water management software is a dangerous gamble. We need an open-source standard for aquifer health, a “GitHub for Groundwater”, where real-time salinity and pressure data can be audited and analyzed by the global community.

The integration of Nature-published hydro-geological datasets with open-source API frameworks would allow for a decentralized response to saltwater intrusion. Instead of relying on a single government agency, we could have a mesh network of sensors providing a transparent, immutable record of water health.
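One way to picture a "transparent, immutable record" is an append-only log in which each reading carries the hash of the one before it. The sketch below is a minimal illustration under stated assumptions: the field names (`sensor_id`, `salinity_psu`, `pressure_kpa`), the sensor ID, and the readings are all hypothetical, and hash chaining on its own makes tampering detectable rather than impossible. A real deployment would also need signatures and replication across independent parties.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record

def record_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_reading(chain, sensor_id, timestamp, salinity_psu, pressure_kpa):
    """Append a reading that is linked to the hash of the previous entry."""
    body = {
        "sensor_id": sensor_id,
        "timestamp": timestamp,
        "salinity_psu": salinity_psu,
        "pressure_kpa": pressure_kpa,
        "prev_hash": chain[-1]["hash"] if chain else GENESIS_HASH,
    }
    chain.append({**body, "hash": record_hash(body)})

def verify(chain) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev = GENESIS_HASH
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

# Hypothetical readings from a made-up borehole sensor.
log = []
append_reading(log, "well-007", "2026-01-01T00:00:00Z", 0.4, 101.9)
append_reading(log, "well-007", "2026-01-02T00:00:00Z", 0.6, 101.2)
print("chain valid:", verify(log))
```

Anyone holding a copy of the log can rerun `verify` independently, which is the auditability property the open-standard argument depends on.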

The technology exists. The membranes are scaling. The models are training. The only remaining variable is whether the macro-market dynamics shift fast enough to value water as the critical infrastructure it actually is, rather than a free resource to be exploited until the well runs salty.

The bottom line: We are currently debugging the planet’s most vital life-support system. It’s time we stopped using legacy tools for a 2026 crisis.