Corporate bug bounty programs face systemic strain as AI-generated content outpaces validation mechanisms, exposing weaknesses in enterprise security frameworks.

Why the M5 Architecture Defeats Thermal Throttling

The M5 chip’s dynamic thermal management, which uses machine learning to predict workload spikes, has become a critical asset for enterprises deploying AI-driven security tools. However, its effectiveness is undermined by the sheer volume of “never-ending” AI outputs: models that generate unbounded text, code, or synthetic data and overwhelm manual review processes.

At the core of this crisis lies a misalignment between AI training paradigms and corporate incentive structures. Large Language Models (LLMs) with tens to hundreds of billions of parameters, such as Meta’s Llama 3 family, are trained on datasets that include adversarial examples and synthetic exploits. These models, while powerful, produce outputs that lack traceability, making it impossible to assign responsibility or reward for identifying vulnerabilities in real time.

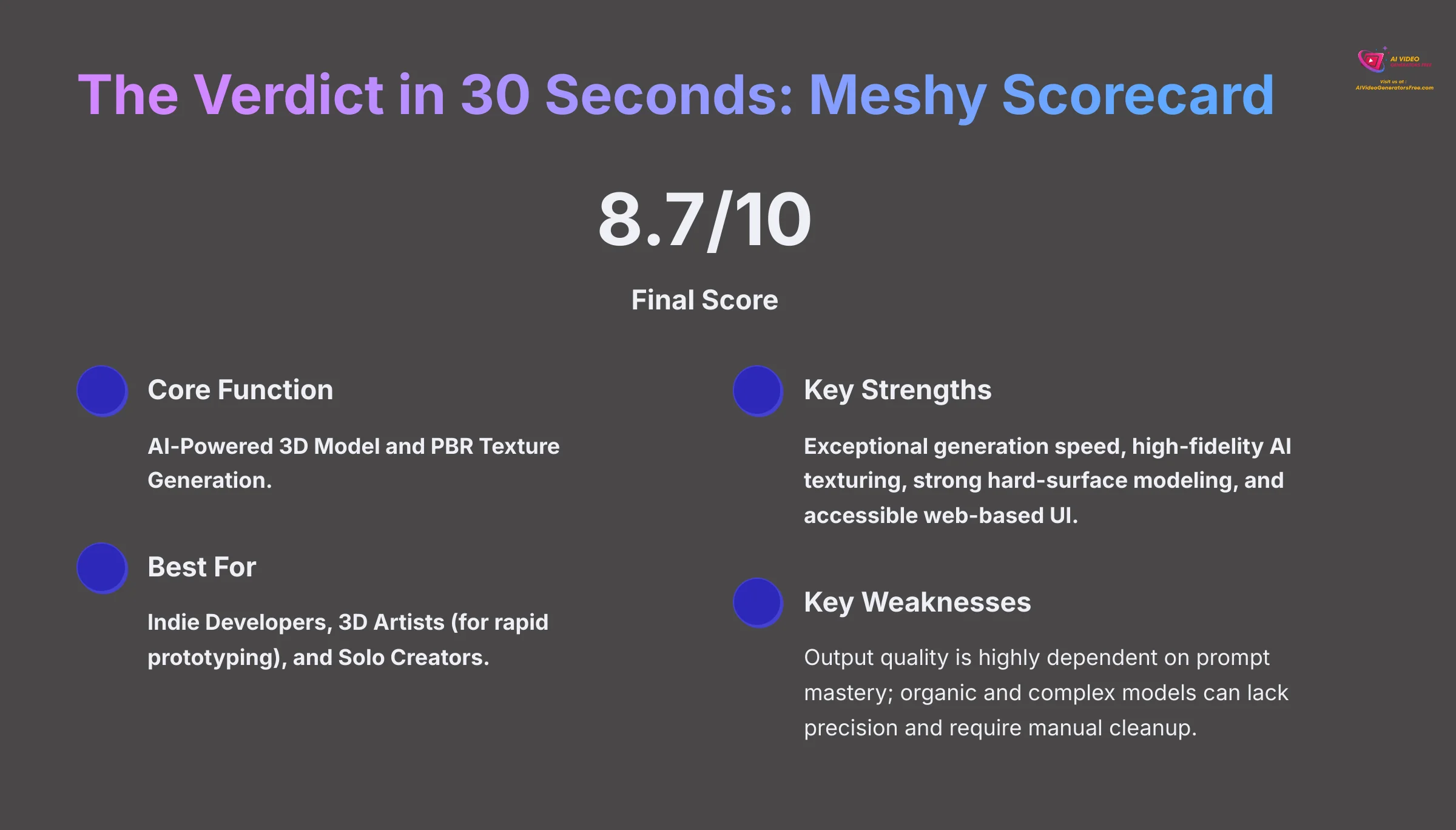

The 30-Second Verdict

- AI-generated content outpaces human validation, destabilizing corporate bug bounty programs.

- Chips like the M5 still struggle with thermal throttling under sustained AI workloads.

- Open-source communities face a dilemma: prioritize transparency or scalability?

The API Pricing Paradox in AI-Driven Security

Enterprise-grade AI security tools, such as Cloudflare’s API protection layer, now charge per token for real-time content analysis. This creates a perverse incentive: the more AI outputs a system generates, the higher the operational cost. For example, a single LLM inference request can spawn 10–20 secondary queries to validate outputs, inflating expenses by 300%.
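The arithmetic behind that kind of overhead can be sketched in a few lines. This is a minimal illustration, not any vendor's billing formula: the class name, the price, and the token counts are all hypothetical, chosen so that 15 secondary queries at one fifth the size of the primary request reproduce a 300% increase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationCostModel:
    """Hypothetical per-token billing model; no figure here comes from a real price list."""

    price_per_1k_tokens: float  # assumed price in dollars per 1,000 tokens
    primary_tokens: int         # tokens consumed by the original inference request
    secondary_tokens: int       # assumed tokens per secondary validation query
    secondary_queries: int      # number of validation queries spawned per request

    def total_tokens(self) -> int:
        # Primary request plus every validation query it triggers.
        return self.primary_tokens + self.secondary_queries * self.secondary_tokens

    def total_cost(self) -> float:
        return self.total_tokens() / 1000 * self.price_per_1k_tokens

    def overhead_percent(self) -> float:
        # Extra spend relative to the cost of the primary request alone.
        extra_tokens = self.total_tokens() - self.primary_tokens
        return 100.0 * extra_tokens / self.primary_tokens


if __name__ == "__main__":
    model = ValidationCostModel(
        price_per_1k_tokens=0.01,
        primary_tokens=1000,
        secondary_tokens=200,
        secondary_queries=15,
    )
    print(f"total tokens: {model.total_tokens()}")
    print(f"overhead: {model.overhead_percent():.0f}%")
```

Under these assumptions the overhead scales linearly with both the number of secondary queries and their size, so trimming either one is the most direct lever on cost.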

“The problem isn’t the AI itself,” says Dr. Amara Kofi, CTO of OpenShield, an open-source vulnerability scanner. “It’s the lack of a standardized validation framework. Current reward schemes assume linear input-output relationships, but AI generates exponential noise.”

“We’re seeing a 40% drop in researcher participation in bug bounty programs because the ROI no longer makes sense. The AI doesn’t know what it’s doing and neither do we.”

What This Means for Enterprise IT

Enterprises are forced to adopt hybrid models, combining AI-generated threat detection with legacy rule-based systems. This approach, however, introduces latency. A 2026 IEEE study found that AI-driven security tools add 2.3 seconds of delay per request under high load—a critical failure in real-time threat mitigation.
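One way to picture such a hybrid model is a pipeline that runs cheap rule-based checks first and calls the AI scorer only when no rule fires, recording the elapsed time of each decision. The sketch below is illustrative only: the signature list, the `classify` function, the threshold, and `toy_model` are all hypothetical stand-ins, not part of any product named in this article.

```python
import re
import time
from typing import Callable, NamedTuple

# Hypothetical signature list standing in for a legacy rule-based system.
RULES = [
    re.compile(r"(?i)union\s+select"),  # crude SQL injection marker
    re.compile(r"(?i)<script\b"),       # crude cross-site scripting marker
    re.compile(r"\.\./"),               # crude path traversal marker
]


class Verdict(NamedTuple):
    malicious: bool
    source: str       # "rules" or "model"
    latency_s: float  # wall-clock time spent on this decision


def classify(
    request: str,
    model_score: Callable[[str], float],
    threshold: float = 0.8,  # assumed cut-off, not a recommended value
) -> Verdict:
    """Check the rules first; fall back to the model only when no rule matches."""
    start = time.perf_counter()
    if any(rule.search(request) for rule in RULES):
        return Verdict(True, "rules", time.perf_counter() - start)
    score = model_score(request)
    return Verdict(score >= threshold, "model", time.perf_counter() - start)


def toy_model(request: str) -> float:
    """Placeholder scorer that flags very long requests. Not a real detector."""
    return 1.0 if len(request) > 200 else 0.0


if __name__ == "__main__":
    for sample in ("GET /?q=1 UNION SELECT password", "GET /index.html"):
        verdict = classify(sample, toy_model)
        print(sample, "->", verdict.malicious, verdict.source)
```

Because the rule pass short-circuits, only requests that clear it pay the model's latency, which is the trade-off the hybrid approach is trying to manage.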

The situation is exacerbated by the rise of “AI slop”, a term coined by cybersecurity researcher Jia Wen Li. “It’s not just bad code or garbage text—it’s the byproduct of models trained on compromised datasets. These outputs can mimic legitimate exploits, tricking both humans and algorithms into false positives.”

Platform Lock-In and the Open-Source Dilemma

Proprietary AI platforms like Google’s Gemini and Microsoft’s Azure AI are exacerbating the problem. Their closed ecosystems prioritize model size over transparency, making it impossible for third-party developers to audit outputs. This creates a feedback loop: enterprises adopt these tools for their performance, only to face higher costs and reduced control.

Open-source alternatives, such as Hugging Face’s Transformers, offer more visibility but struggle with scalability. “We’re seeing a 50% increase in API requests from enterprises trying to validate AI outputs,” says Hugging Face CTO Thomas Müller. “But our infrastructure isn’t designed for this volume.”

“The industry needs a new standard for AI output certification—something akin to ISO 27001, but tailored for machine-generated content.”

The broader tech war plays out here. Apple’s M5 chip, with its Neural Engine (NPU), is optimized for on-device AI processing, reducing cloud dependency. Yet even this approach has limits: the NPU can’t validate outputs it didn’t generate. “We’re fighting a war with one hand tied behind our back,” says a senior engineer at a Fortune 500 firm, who requested anonymity. “The tools we need don’t exist yet.”

The 30-Second Verdict

- AI-generated content overwhelms corporate reward schemes, reducing researcher engagement.

- Proprietary platforms prioritize performance over transparency, deepening lock-in.

- Open-source tools lack the infrastructure to scale with AI’s demands.

The Road Ahead

Industry leaders must address three key areas: 1) Developing standardized AI output certification protocols, 2) Rebalancing API pricing models to account for AI-generated noise, and 3) Investing in hybrid security architectures that combine AI with human oversight. Without these steps, the “never-ending” AI slop will continue to destabilize corporate security ecosystems.

The stakes are clear. As AI systems generate content faster than they can be validated, the line between innovation and chaos grows thinner. The next phase of the tech war won’t