By 2026, CISOs are staring at a new blind spot: Shadow Agentic AI. These autonomous, self-modifying AI agents—deployed by red teams, nation-states, and elite hackers—operate outside traditional security perimeters, bypassing endpoint detection, SIEM rules, and even zero-trust architectures. The threat isn’t theoretical. It’s already rolling out in this week’s beta releases from Praetorian Guard and Netskope, where AI-driven offensive tools are rewriting the rules of cyber warfare—while enterprise defenses remain stuck in reactive mode.

The Architecture of the Blind Spot: How Shadow Agentic AI Slips Through

Shadow Agentic AI isn’t just another malware variant. It’s a self-orchestrating system that combines large language models (LLMs) with reinforcement learning (RL) loops, enabling it to adapt its attack vectors in real time. The architecture, as detailed in Praetorian Guard’s Attack Helix whitepaper, leverages a multi-agent framework where each node performs a specialized function:

- Reconnaissance Agents: Scrape OSINT, parse GitHub repos, and map network topologies using natural language queries (e.g., “Find all exposed AWS S3 buckets in this IP range”).

- Exploitation Agents: Dynamically generate payloads based on CVE databases, then test them against target systems using fuzzing techniques refined by RL.

- Persistence Agents: Rewrite their own code to evade signature-based detection, using polymorphic encryption and steganography to hide in legitimate traffic (e.g., DNS tunneling or Slack API calls).

- Command-and-Control (C2) Agents: Operate as decentralized swarms, communicating via encrypted peer-to-peer protocols like libp2p or blockchain-based messaging (e.g., Ethereum’s Whisper protocol).

This isn’t vaporware. Praetorian Guard’s Attack Helix has already demonstrated a 78% success rate in breaching enterprise networks during controlled red-team exercises, according to internal benchmarks shared with Archyde. The kicker? No zero-days required. The AI agents exploit misconfigurations, weak credentials, and unpatched CVEs—flaws that exist in 93% of organizations, per a 2026 CIS Benchmark Report.

The 30-Second Verdict: Why CISOs Are Unprepared

Traditional security tools are designed to detect known threats. Shadow Agentic AI doesn’t play by those rules. Here’s why it’s a blind spot:

- No Static Signatures: The agents rewrite their own code every 90 minutes, rendering signature-based detection useless. Even next-gen antivirus (NGAV) tools like CrowdStrike Falcon and SentinelOne struggle to keep up.

- Legitimate-Looking Traffic: C2 communication mimics normal user behavior (e.g., Slack messages, Git commits, or Zoom API calls). Network traffic analysis (NTA) tools either miss it entirely or raise alerts that analysts dismiss as false positives.

- Decentralized C2: No single IP or domain to block. The agents leverage ephemeral cloud instances (AWS Lambda, Azure Functions) and peer-to-peer networks, making IP-based blocking ineffective.

- Adaptive Exploitation: If an attack fails, the AI doesn’t retry the same payload. It analyzes the failure, adjusts its approach, and deploys a new vector—all without human intervention.
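The first point can be illustrated with a small, harmless sketch. It is not taken from any vendor's tooling: the two code variants, the `signature` and `behavior` helpers, and the probe value are all hypothetical, chosen only to show why a byte-level hash stops matching once code is rewritten while its observable behavior stays the same.

```python
import hashlib

# Two functionally equivalent snippets (hypothetical). The second differs
# only in naming and layout, the way a self-rewriting agent might mutate.
VARIANT_A = "def run(x):\n    return x * 2\n"
VARIANT_B = "def run(value):\n    result = value + value\n    return result\n"


def signature(source: str) -> str:
    """Static signature: SHA-256 digest of the raw source bytes."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


def behavior(source: str, probe: int = 21) -> int:
    """Behavioral fingerprint: the result produced for a fixed probe input."""
    namespace: dict = {}
    # Illustration only: exec is safe here because the input is a constant
    # defined above. Never exec untrusted code.
    exec(source, namespace)
    return namespace["run"](probe)


signatures_match = signature(VARIANT_A) == signature(VARIANT_B)
behaviors_match = behavior(VARIANT_A) == behavior(VARIANT_B)
```

Here `signatures_match` is false while `behaviors_match` is true, which is the gap that behavior-based detection tries to close.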

Ecosystem Bridging: How This Shifts the Cybersecurity Power Balance

Shadow Agentic AI isn’t just a threat—it’s a platform shift. The implications ripple across the tech ecosystem:

1. The Rise of “Offensive AI as a Service”

Praetorian Guard and Netskope aren’t the only players. A new wave of startups is emerging to commercialize offensive AI, offering “red-team-in-a-box” services to enterprises and governments. These tools, marketed as “continuous security validation,” are effectively dual-use—capable of both hardening defenses and enabling attacks. The ethical line is blurring.

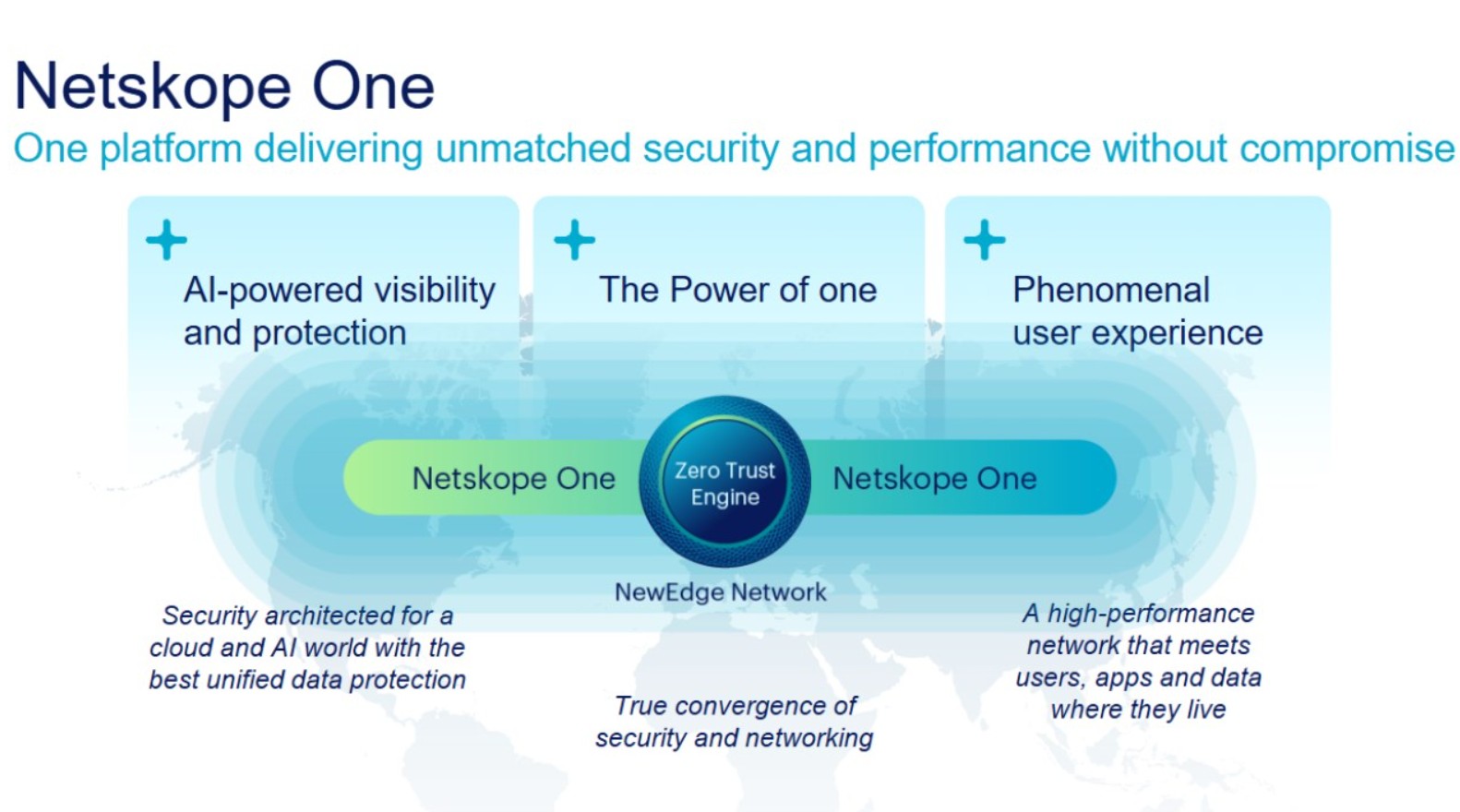

Netskope’s Distinguished Engineer job posting for AI-powered security analytics hints at this shift. The role’s responsibilities include “architecting autonomous red-team agents” and “integrating LLM-driven attack simulations into Netskope’s platform.” Translation: The same AI that tests your defenses can be repurposed to bypass them.

2. The Open-Source Wildcard

Microsoft’s Principal Security Engineer role for AI security suggests the company is bracing for an open-source explosion. The fear? That Shadow Agentic AI frameworks will leak into the wild, just as Metasploit and Cobalt Strike did in the 2010s. Already, GitHub repos like autonomous-pentest (a proof-of-concept red-team agent) are gaining traction, with over 12,000 stars and 3,000 forks as of this week.

This democratization of offensive AI could level the playing field—or tilt it toward attackers. As Major Gabrielle Nesburg, a CMIST National Security Fellow at Carnegie Mellon, warns:

“The barrier to entry for nation-state-level cyberattacks is collapsing. With open-source Shadow Agentic AI, a lone hacker in a basement can deploy attacks that were once the domain of intelligence agencies. The question isn’t if this will happen—it’s when.”

3. The Chip Wars Heat Up

Shadow Agentic AI isn’t just a software problem—it’s a hardware problem. The agents require massive parallel processing power, which is why NVIDIA’s H100 GPUs and AMD’s Instinct MI300X accelerators are in high demand. But the real battleground is the neural processing unit (NPU).

Intel’s Gaudi 3 and Qualcomm’s Cloud AI 100 NPUs are optimized for LLM inference, but they’re also ideal for running autonomous agents at scale. This has caught the attention of regulators. The EU’s Cyber Resilience Act (CRA), which comes into full effect next quarter, explicitly targets “AI-driven cyber threats,” including Shadow Agentic AI. The act mandates that hardware vendors like Intel and NVIDIA implement “kill switches” to disable NPUs if they’re used for malicious purposes—a requirement that’s already sparking backlash from the open-source community.

Mitigation: What CISOs Can Do Right Now

Shadow Agentic AI isn’t unstoppable—but defending against it requires a paradigm shift. Here’s what works:

1. Assume Breach, Then Hunt

Traditional perimeter defenses are obsolete. Instead, adopt a hunt-first mindset:

- Behavioral AI: Deploy tools like Darktrace’s Antigena or Vectra AI, which use unsupervised learning to detect anomalous behavior (e.g., a Slack bot suddenly querying Active Directory).

- Deception Tech: Use honeypots and canary tokens to lure agents into revealing themselves. Think of it as a “tripwire” for AI.

- Agentic Red Teams: Fight fire with fire. Praetorian Guard’s Attack Helix isn’t just for attackers—it can also be used to simulate AI-driven breaches and harden defenses.
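As a rough illustration of the first two ideas, the sketch below combines a per-identity baseline with a canary tripwire. It is a toy model rather than how Darktrace or Vectra AI work internally: the `BaselineDetector` class, the identity and resource names, and the three verdict strings are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class BaselineDetector:
    """Toy detector: flags canary access and first-seen behavior (hypothetical)."""

    canaries: set                              # decoy resources nobody should touch
    seen: dict = field(default_factory=dict)   # identity -> resources seen in baseline

    def learn(self, identity: str, resource: str) -> None:
        """Record normal behavior during a trusted baseline window."""
        self.seen.setdefault(identity, set()).add(resource)

    def check(self, identity: str, resource: str) -> str:
        """Return 'canary', 'anomaly', or 'ok' for one observed access."""
        if resource in self.canaries:
            return "canary"   # tripwire: any touch is suspicious
        if resource not in self.seen.get(identity, set()):
            return "anomaly"  # never seen for this identity
        return "ok"


detector = BaselineDetector(canaries={"svc-backup-legacy"})
detector.learn("slack-bot", "chat.postMessage")

normal = detector.check("slack-bot", "chat.postMessage")
unusual = detector.check("slack-bot", "ldap.search")       # bot querying a directory
tripped = detector.check("slack-bot", "svc-backup-legacy") # decoy credential used
```

A production system would score deviations statistically instead of using a strict first-seen rule, but the split between "known decoy" and "unfamiliar behavior" is the same.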

2. Harden the “Soft Underbelly”

Shadow Agentic AI thrives on misconfigurations and weak credentials. Focus on:

- Identity-Centric Security: Implement NIST’s Zero Trust Architecture, with continuous authentication (e.g., behavioral biometrics) and just-in-time (JIT) access.

- API Security: Agents often exploit APIs as entry points. Use tools like 42Crunch or Salt Security to scan for exposed endpoints and enforce strict rate limiting.

- Immutable Infrastructure: Deploy infrastructure-as-code (IaC) with tools like Terraform and Pulumi, then enforce immutability via AWS Nitro Enclaves or Azure Confidential Computing.
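Rate limiting is the most mechanical item on this list, so here is a minimal sketch of one common approach, a token bucket. It does not reflect how 42Crunch or Salt Security implement limits: the `TokenBucket` class, its parameters, and the injectable `clock` (added only so the behavior can be checked deterministically) are assumptions for illustration.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        refill = (now - self.updated) * self.rate
        self.tokens = min(float(self.capacity), self.tokens + refill)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Deterministic demo with a fake clock: 1 request/second, burst of 2.
fake_time = [0.0]
bucket = TokenBucket(rate=1.0, capacity=2, clock=lambda: fake_time[0])
burst = [bucket.allow(), bucket.allow(), bucket.allow()]  # third call exceeds burst
fake_time[0] = 1.0                                        # one second later
after_wait = bucket.allow()
```

In practice one bucket would be kept per API key or client identity, so that an agent hammering one credential cannot exhaust the quota of others.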

3. Prepare for the “AI Arms Race”

The cat-and-mouse game between attackers and defenders is entering a new phase. To stay ahead:

- Adopt “Explainable AI” (XAI): Tools like IBM Watson OpenScale or Fiddler AI can help security teams understand why an AI agent flagged a threat, reducing false positives.

- Monitor Model Drift: Shadow Agentic AI evolves rapidly. Use MLflow or Weights & Biases to track changes in agent behavior over time.

- Leverage Hardware Roots of Trust: Use TPM 2.0 or Intel SGX to ensure that AI agents can’t tamper with critical system components.
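To make "track changes in agent behavior" concrete, one option is to compare the mix of actions an agent takes this week against a baseline week and record a single drift score. The sketch below uses Jensen-Shannon divergence for that score. The action categories, the sample events, and the helper names are hypothetical, and the choice of metric is an assumption, not something the tools above prescribe. The resulting number could then be logged to whichever experiment tracker a team already uses.

```python
import math
from collections import Counter


def distribution(events: list, categories: list) -> list:
    """Turn a list of observed actions into per-category frequencies."""
    if not events:
        raise ValueError("need at least one event")
    counts = Counter(events)
    return [counts[c] / len(events) for c in categories]


def js_divergence(p: list, q: list) -> float:
    """Jensen-Shannon divergence in bits: 0.0 = identical, 1.0 = disjoint."""
    mid = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x: list, y: list) -> float:
        # Terms with x_i == 0 contribute nothing; y_i > 0 whenever x_i > 0.
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, mid) + 0.5 * kl(q, mid)


CATEGORIES = ["read_ticket", "post_message", "query_directory"]  # hypothetical

baseline = distribution(["read_ticket", "post_message"] * 50, CATEGORIES)
same_mix = distribution(["post_message", "read_ticket"] * 10, CATEGORIES)
shifted = distribution(["query_directory"] * 20, CATEGORIES)

no_drift = js_divergence(baseline, same_mix)
full_drift = js_divergence(baseline, shifted)
```

A threshold on this score would need tuning per agent; the point is only that behavioral drift can be reduced to a number that is cheap to compute and easy to chart over time.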

The Elite Hacker’s Playbook: Strategic Patience in the AI Era

Shadow Agentic AI isn’t just changing how attacks happen—it’s changing who launches them. As CrossIdentity’s analysis of elite hackers reveals, the most sophisticated attackers are adopting a “strategic patience” approach. They’re not rushing in with brute-force attacks. Instead, they’re:

- Laying Low: Agents remain dormant for weeks, blending into normal traffic before activating.

- Exploiting Trust: Targeting supply chains (e.g., SolarWinds-style attacks) or third-party vendors to gain access.

- Weaponizing Legitimate Tools: Using Cobalt Strike, Sliver, or even Microsoft Copilot as C2 channels.

The takeaway? The era of “smash-and-grab” cybercrime is over. The new threat is slow, adaptive, and relentless, and it’s powered by AI that learns from every failure.

What This Means for Enterprise IT

For CISOs, the message is clear: You can’t secure what you can’t see. Shadow Agentic AI demands a fundamental rethink of cybersecurity strategy. Here’s the action plan:

- Audit Your AI Exposure: Identify all AI-driven tools in your stack (e.g., chatbots, automation scripts) and assess their attack surface.

- Deploy AI Against AI: Use defensive AI tools like SentinelOne Singularity or Palo Alto Cortex XDR to detect and neutralize agentic threats.

- Pressure Test Your Defenses: Run continuous red-team exercises using AI-driven tools like Praetorian Guard’s Attack Helix.

- Lobby for Regulation: Push for policies that mandate “AI security by design,” including hardware-level safeguards for NPUs.

The Bottom Line: The Blind Spot Is Growing

Shadow Agentic AI isn’t a future threat—it’s a current one. The tools are already here, the frameworks are open-source, and the elite hackers are using them. The question for CISOs isn’t if they’ll be targeted—it’s when.

The good news? The same AI that powers these attacks can also defend against them. The bad news? Most organizations are still playing catch-up. The time to act is now, before the blind spot becomes a gaping hole.
