DeepSeek’s API pricing undermines the economic viability of local LLM hosting by offering inference costs lower than the electricity required to power equivalent hardware. This shift disrupts the “sovereign AI” trend and puts immense pricing pressure on major cloud providers like Microsoft (NASDAQ: MSFT) and Google (NASDAQ: GOOGL).

For the past two years, the enterprise narrative centered on “data sovereignty”—the idea that companies must host their own models to ensure privacy and long-term cost stability. However, the emergence of hyper-efficient inference pricing from DeepSeek changes the calculus. When the cost of an API call is lower than the utility bill for the GPUs running the same model, the capital expenditure (CapEx) for private AI clusters becomes an unjustifiable liability on the balance sheet.

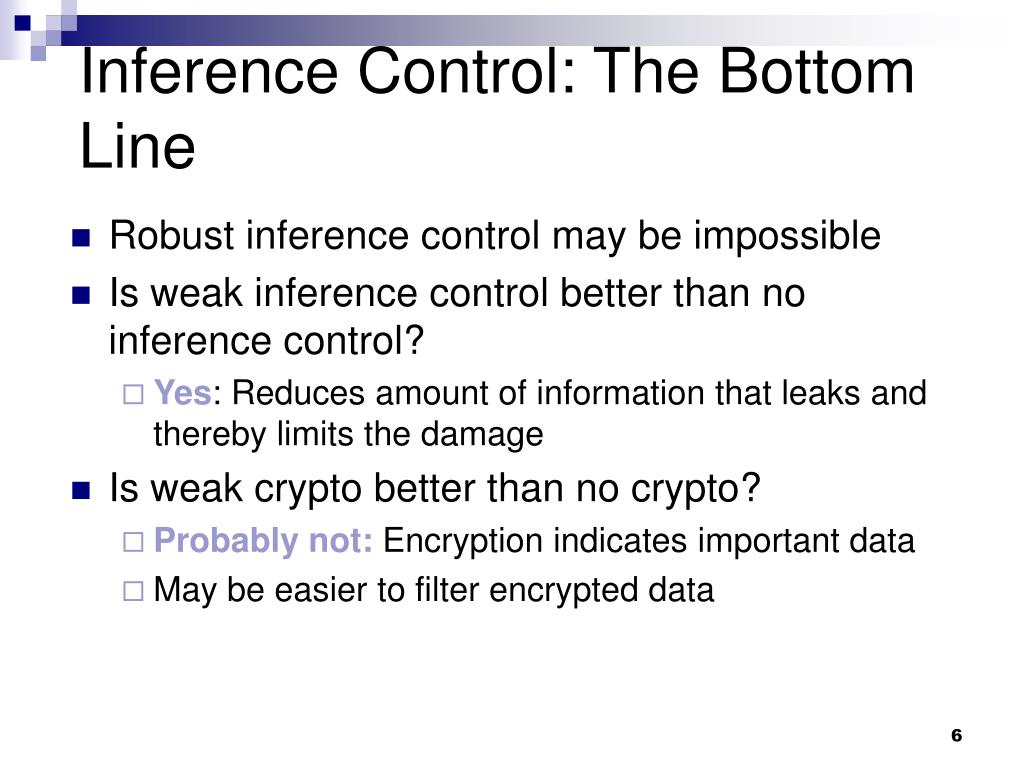

The Bottom Line

- Inference Commoditization: Intelligence is shifting from a high-margin premium service to a low-margin utility, mirroring the evolution of cloud storage.

- CapEx Risk: Enterprises that over-invested in proprietary H100 clusters face accelerated depreciation and stranded assets.

- Margin Compression: Hyperscalers are forced to choose between matching predatory pricing or losing market share in the developer ecosystem.

The Unit Economics of the Inference War

To understand the “cheaper than electricity” claim, we have to look at the Total Cost of Ownership (TCO). Running a frontier-class model locally requires high-density compute clusters, typically powered by NVIDIA (NASDAQ: NVDA) H100s. These chips have a Thermal Design Power (TDP) of up to 700W per GPU, excluding the energy required for cooling systems and networking fabric.

Here is the math.

For a mid-sized enterprise running a local 8-GPU node at 100% utilization, the electricity cost alone can exceed $1,200 per month per node, depending on regional kilowatt-hour (kWh) rates. When you factor in the amortization of the hardware (roughly $30,000 to $40,000 per GPU), the cost per million tokens rises significantly. DeepSeek’s pricing model, however, leverages massive economies of scale and highly optimized MoE (Mixture of Experts) architectures to drive the cost per token below the marginal cost of power for a small-to-medium enterprise.
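The electricity figure can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the 8 GPUs and 700W TDP come from the text, while the overhead multiplier for cooling and networking, the kWh rates, and the function name `monthly_electricity_cost` are assumptions made for this example.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12


def monthly_electricity_cost(
    gpus: int = 8,
    watts_per_gpu: float = 700.0,  # H100 TDP figure quoted in the text
    overhead: float = 1.5,         # ASSUMPTION: multiplier for cooling, CPUs, networking
    usd_per_kwh: float = 0.20,     # ASSUMPTION: regional commercial rate
    utilization: float = 1.0,      # 1.0 = running flat out, as in the text
) -> float:
    """Estimated monthly power bill in USD for one GPU node."""
    node_kw = gpus * watts_per_gpu / 1000 * overhead * utilization
    return node_kw * HOURS_PER_MONTH * usd_per_kwh


if __name__ == "__main__":
    for rate in (0.10, 0.15, 0.20):
        cost = monthly_electricity_cost(usd_per_kwh=rate)
        print(f"${rate:.2f}/kWh -> ${cost:,.0f} per month")
```

Under these assumed inputs, the bill passes $1,200 per month at roughly $0.20/kWh and stays below it at $0.15/kWh, which is why the regional rate matters so much to the comparison.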

But the balance sheet tells a different story for the provider.

By pricing below the “local electricity” threshold, DeepSeek is not just competing on product quality. It is engaging in a strategic market-share grab. By making it mathematically irrational to host locally, it centralizes data flow and dependency within its ecosystem. This is a classic disruption play designed to starve competitors of the telemetry data needed to refine future model iterations.

| Metric (Per 1M Tokens) | Local Hosting (Est. TCO) | DeepSeek API (Current) | Legacy Frontier Models |
|---|---|---|---|
| Electricity & Cooling | $0.15 – $0.40 | Included | Included |
| Hardware Amortization | $0.80 – $1.20 | Included | Included |
| Total Estimated Cost | $0.95 – $1.60 | $0.10 – $0.20 | $2.00 – $15.00 |
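One way to see how per-token figures like those in the table could arise is to divide hourly costs by hourly throughput. This is a rough sketch, not the method behind the table: the GPU price is the midpoint of the range quoted earlier, and the three-year lifetime, 8.4 kW node draw, $0.20/kWh rate, and 3,000 tokens per second throughput are all assumptions chosen for illustration.

```python
HOURS_PER_YEAR = 8760


def local_cost_per_million_tokens(
    gpus: int = 8,
    usd_per_gpu: float = 35_000.0,      # midpoint of the $30k-$40k range in the text
    lifetime_years: float = 3.0,        # ASSUMPTION: straight-line amortization period
    node_kw: float = 8.4,               # ASSUMPTION: 8 x 700W plus 50% overhead
    usd_per_kwh: float = 0.20,          # ASSUMPTION: regional commercial rate
    tokens_per_second: float = 3_000.0, # ASSUMPTION: sustained node throughput
) -> dict:
    """Split local cost per 1M tokens into electricity and hardware parts."""
    million_tokens_per_hour = tokens_per_second * 3600 / 1_000_000
    hardware_per_hour = gpus * usd_per_gpu / (lifetime_years * HOURS_PER_YEAR)
    power_per_hour = node_kw * usd_per_kwh
    electricity = power_per_hour / million_tokens_per_hour
    hardware = hardware_per_hour / million_tokens_per_hour
    return {
        "electricity": electricity,
        "hardware": hardware,
        "total": electricity + hardware,
    }


if __name__ == "__main__":
    for item, usd in local_cost_per_million_tokens().items():
        print(f"{item:>12}: ${usd:.2f} per 1M tokens")
```

With these inputs the result lands inside the table's local-hosting ranges, but it is highly sensitive to throughput: halving the assumed tokens per second doubles every figure, so a lightly used cluster looks far worse than one running at full load.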

The Hyperscaler Squeeze and the NVIDIA Paradox

This pricing aggression creates a precarious situation for Amazon (NASDAQ: AMZN) and Microsoft (NASDAQ: MSFT). These giants have spent billions building the infrastructure to sell AI as a service. If the market price for inference collapses, the Return on Invested Capital (ROIC) for these data centers declines. As we approach the close of Q2 2026, analysts are closely watching whether these firms will pivot toward “bundled” pricing to hide the margin erosion of their AI offerings.

Then there is the NVIDIA (NASDAQ: NVDA) factor. On the surface, cheaper API costs suggest a lower need for local hardware, which should hurt chip sales. However, the reality is more complex. To offer prices lower than local electricity, providers must achieve extreme efficiency, which requires the latest, most power-efficient architecture. The “race to the bottom” on price actually accelerates the upgrade cycle for Blackwell and subsequent chip generations.

“The shift from training-centric spending to inference-centric spending is the defining trend of 2026. When inference becomes a commodity, the value migrates from the model weights to the proprietary data layer.” — Analysis attributed to institutional strategy reports on AI infrastructure.

This shift is already visible in the equity markets. We are seeing a rotation away from “pure-play” AI infrastructure toward companies that can leverage cheap inference to automate high-cost labor processes. The goal is no longer to *own* the model, but to *orchestrate* the cheapest possible intelligence to drive EBITDA growth.

Geopolitical Arbitrage and the Energy Moat

The ability to price below the cost of local electricity is not just a software achievement; it is a result of geopolitical energy arbitrage. DeepSeek benefits from a different cost structure regarding power and land, allowing them to operate at a marginal cost that Western firms—burdened by higher regulatory costs and energy prices—cannot easily match.

This creates a “compute divide.” Western firms are attempting to solve this through nuclear energy investments and custom silicon (like Google’s TPU or Amazon’s Trainium) to decouple from the NVIDIA tax. But these are long-term plays. In the short term, the market is reacting to the immediate availability of near-zero-cost intelligence.

Let’s be clear about the risks.

For the business owner, the temptation to migrate all workloads to the cheapest API is high. However, this introduces systemic concentration risk. If a single provider controls the majority of the world’s inference due to predatory pricing, they hold an unprecedented lever over the global digital economy. This is likely to trigger scrutiny from antitrust regulators in the US (the FTC and DOJ) and the EU, who view “predatory pricing to kill local competition” as a violation of fair market principles.

The Strategic Pivot for 2026

As the industry moves forward, the “local vs. cloud” debate is being replaced by a “hybrid orchestration” model. Smart enterprises are no longer buying hardware to run models; they are buying hardware to cache models. By using local compute for high-security, low-latency tasks and bursting to hyper-cheap APIs for bulk processing, companies can optimize their OpEx.
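The routing rule behind hybrid orchestration can be sketched in a few lines. Everything here is hypothetical: the `Job` fields, the `route` function, and the 200 ms threshold are illustrative names and values, not part of any real orchestration product.

```python
from dataclasses import dataclass

# ASSUMPTION: latency budgets tighter than this are served on local hardware.
LOCAL_LATENCY_THRESHOLD_MS = 200


@dataclass(frozen=True)
class Job:
    name: str
    sensitive: bool      # True if the data must not leave the premises
    max_latency_ms: int  # latency budget for a single response


def route(job: Job) -> str:
    """Return "local" for high-security or low-latency work, else "api"."""
    if job.sensitive or job.max_latency_ms < LOCAL_LATENCY_THRESHOLD_MS:
        return "local"
    return "api"


if __name__ == "__main__":
    jobs = [
        Job("patient-record-summary", sensitive=True, max_latency_ms=5_000),
        Job("autocomplete", sensitive=False, max_latency_ms=100),
        Job("nightly-document-tagging", sensitive=False, max_latency_ms=60_000),
    ]
    for job in jobs:
        print(f"{job.name}: {route(job)}")
```

A real deployment would likely add cost ceilings, fallbacks when the API is unavailable, and audit logging, but the core decision is this two-way split between sensitive or latency-critical work and bulk processing.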

The broader macroeconomic implication is a deflationary shock to the knowledge economy. When the price of intelligence drops below the cost of the electricity needed to produce it locally, the value of “knowing how to prompt” or “knowing how to code” diminishes. The premium shifts entirely to domain expertise and the ability to verify the output of these low-cost systems.

For investors, the play is no longer the “picks and shovels” of the AI gold rush. The play is now in the “refineries”: the companies that can take this raw, cheap intelligence and turn it into a high-margin product. Keep a close eye on enterprise software valuations; the winners will be those who can integrate these APIs to slash their own internal costs while maintaining their subscription pricing.

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial advice.