Recent clinical evaluations indicate that Artificial Intelligence (AI) can match or exceed physicians in specific diagnostic pattern recognition tasks, yet it remains inferior in holistic clinical reasoning. This evolution positions AI as a powerful decision-support tool rather than a replacement for human practitioners within global healthcare systems.

The debate over whether AI has “beaten” doctors is not a matter of intelligence, but of utility. For patients, this shift represents a pivotal moment in medical history: the transition from the physician as the sole repository of medical knowledge to the physician as the expert curator of AI-generated insights. While a Large Language Model (LLM) can process millions of peer-reviewed papers in seconds, it cannot perform a physical examination or understand the socio-economic determinants of a patient’s health. The real victory is not AI replacing the doctor, but the reduction of diagnostic errors, which currently affect an estimated 10% to 15% of all patient encounters.

In Plain English: The Clinical Takeaway

- AI is a “Super-Librarian”: It is incredibly fast at finding patterns in data, but it doesn’t “understand” medicine the way a human does.

- Augmentation, Not Replacement: The goal is “Augmented Intelligence,” where a doctor uses AI to ensure no rare diagnosis is overlooked.

- The Human Filter: AI can suggest a list of possibilities, but only a licensed physician can safely confirm a diagnosis and prescribe treatment.

The Architecture of Inference: How LLMs “Diagnose”

To understand how AI competes with clinicians, we must examine its mechanism of action, a term borrowed from pharmacology for the specific process by which a drug produces its effect. In AI, this process is not biological but mathematical. Modern LLM-based diagnostic AI relies on the transformer architecture, which uses probabilistic inference to predict the most likely “next token” given the preceding text. A suggested diagnosis is the most probable continuation, based on patterns learned from vast datasets.
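The “next token” idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular clinical product works: the candidate labels and raw scores are invented, and a real model scores tens of thousands of possible tokens rather than three diagnoses. The `softmax` function shown here is the standard way such raw scores are turned into probabilities.

```python
import math

def softmax(scores):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

# Hypothetical raw scores for three candidate continuations.
# These numbers are invented for illustration, not taken from a real model.
logits = {
    "migraine": 2.0,
    "tension headache": 1.0,
    "subarachnoid hemorrhage": -1.0,
}

probs = softmax(logits)
top = max(probs, key=probs.get)  # the single most probable candidate

# Print candidates from most to least probable.
for label, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{label}: {p:.2f}")
print("Most likely:", top)
```

Note that the output is a ranking by probability, not a reasoned conclusion: the least likely candidate in this toy list is also the most dangerous one to miss, which is why a clinician still has to review the suggestions.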

Unlike a physician, who reasons deductively by starting with a working diagnosis and testing it through history and examination, AI uses inductive pattern recognition. It identifies correlations across millions of electronic health records (EHRs) that would be invisible to a human. For example, in diagnostic accuracy studies (where a tool’s output is compared against a reference standard, such as grading by specialists), AI systems have been reported to detect early-stage diabetic retinopathy from retinal scans more reliably than general practitioners.

However, this leads to the “black box” problem: the AI may arrive at the correct diagnosis without a transparent clinical pathway. Such an output lacks the “clinical correlation” required for medical safety, where a doctor must be able to explain why a specific diagnosis was reached in order to avoid catastrophic errors.

Regulatory Guardrails and the Global Access Gap

The integration of these tools varies wildly by geography, creating a “digital divide” in patient care. In the United States, the FDA regulates these tools under the Software as a Medical Device (SaMD) framework, requiring rigorous validation of clinical efficacy before a tool can be marketed for diagnosis.

In contrast, the UK’s National Health Service (NHS) has moved toward systemic integration, utilizing AI to triage radiology backlogs. While this increases speed, it raises concerns about “automation bias”—the tendency for human clinicians to over-rely on automated suggestions, potentially ignoring their own clinical intuition.

“The challenge is not the accuracy of the algorithm, but the integration of that accuracy into a human-centric workflow without eroding the clinician’s critical thinking skills.” — Dr. Eric Topol, Founder and Director of the Scripps Research Translational Institute.

The impact on patient access is profound. In low-resource settings, AI-driven diagnostic tools can provide a baseline of care where specialists are unavailable. However, without the oversight of a trained physician to manage contraindications—conditions or factors that serve as a reason to withhold a certain medical treatment—the risk of improper self-treatment increases.

The Funding Paradox: Profit vs. Patient Safety

Transparency regarding funding is critical to establishing journalistic trust. Much of the current “AI vs. Doctor” research is funded by venture capital firms and big-tech consortia (such as Google Health or Microsoft) rather than independent government grants. This creates a potential bias toward “performance benchmarks” (like passing the USMLE) rather than “clinical outcomes” (like reducing patient mortality).

When a study claims AI “beats” a doctor, it often refers to a closed-set test where the AI chooses from a multiple-choice list. In a real-world clinical setting, the “search space” is effectively open-ended: no one hands the clinician a short list of candidate answers. The following table summarizes the divergence between AI performance and human clinical practice:

| Metric | AI Diagnostic Tool | Human Physician | Clinical Significance |
|---|---|---|---|
| Data Processing Speed | Near-Instantaneous | Slow/Linear | AI wins on triage speed. |
| Pattern Recognition | Superior (High-Volume) | Variable (Experience-based) | AI excels in rare disease spotting. |
| Nuanced History | Poor (Text-dependent) | Superior (Empathetic) | Humans capture non-verbal cues. |
| Accountability | None (Algorithmic) | Legal/Ethical Responsibility | Physicians provide the safety net. |

Contraindications & When to Consult a Doctor

While AI tools can be helpful for preliminary health queries, they are strictly contraindicated for acute, life-threatening emergencies. AI cannot perform a physical triage or recognize the subtle signs of hemodynamic instability (dropping blood pressure and failing organ perfusion).

You must bypass AI and seek immediate professional medical intervention if you experience:

- Sudden onset of chest pain, shortness of breath, or numbness on one side of the body (potential myocardial infarction or stroke).

- Unexplained high fever accompanied by a stiff neck or altered mental state (potential meningitis).

- Severe allergic reactions (anaphylaxis) involving swelling of the throat or tongue.

- Acute psychiatric crises or thoughts of self-harm.

Patients with complex comorbidities (multiple overlapping health conditions) should also be wary of AI-generated advice, as these models often struggle with “polypharmacy” (the use of multiple drugs), which can lead to dangerous drug-drug interactions that a human pharmacist or physician would catch.

The Future Trajectory: Collaborative Intelligence

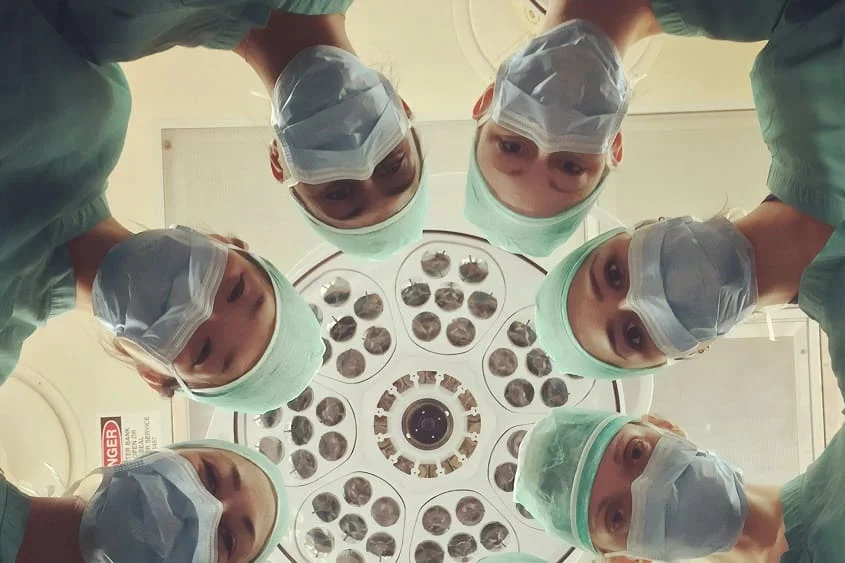

The narrative that AI is “beating” doctors is a simplification of a much more complex synergy. The future of medicine is not a competition, but a collaboration. We are moving toward a model of “Collaborative Intelligence,” where AI handles the data-heavy lifting—scanning thousands of images or cross-referencing genetic markers—while the physician focuses on the “last mile” of care: the ethical, emotional, and physical application of that data.

As we move further into 2026, the benchmark for success will not be whether the AI is “right” more often than the doctor, but whether the combination of the two leads to better patient outcomes, fewer misdiagnoses, and a more sustainable healthcare workload for our clinicians.