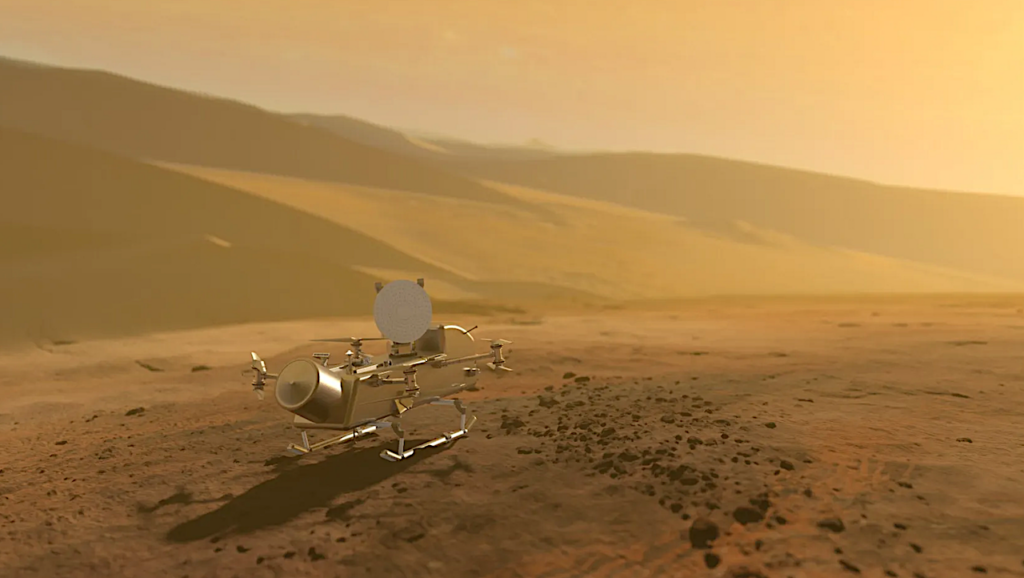

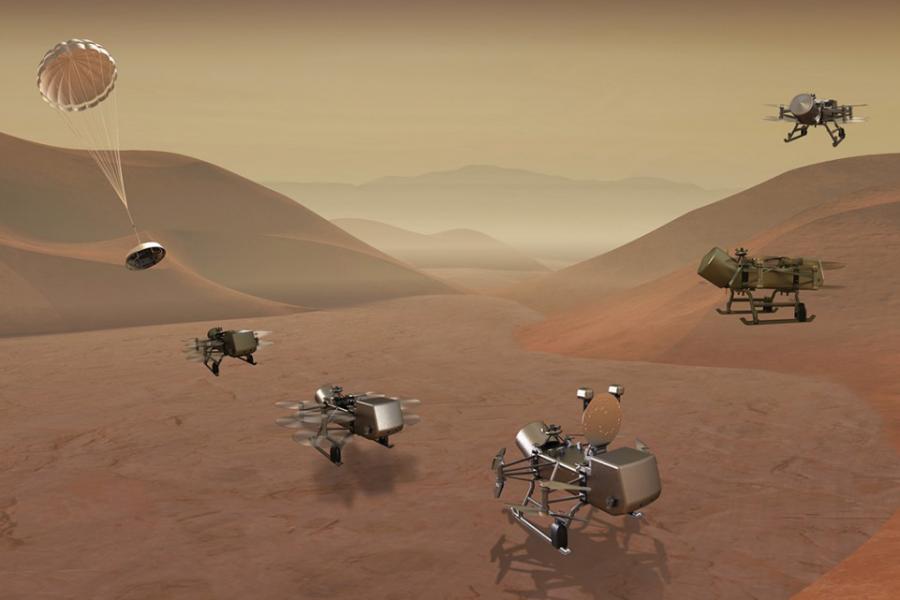

NASA’s Dragonfly rotorcraft, slated to touch down on Saturn’s moon Titan in 2034, isn’t just a science mission. It’s a full-stack engineering marvel that’s quietly redefining the boundaries of autonomous systems, edge AI, and radiation-hardened computing. Even as the headlines focus on astrobiology, the real story is under the hood: a bespoke flight computer, a neural network that runs on less power than a smartphone, and a security architecture that treats every byte as if it’s already under siege. This is the blueprint for the next decade of agentic systems, not just in space but in the security operations centers (SOCs), data centers, and IoT fleets back on Earth.

The Dragonfly Flight Computer: A Silicon Valley Insider’s Cheat Sheet

The Dragonfly rotorcraft is powered by a custom-built flight computer dubbed the “Titan Compute Unit” (TCU). Unlike the radiation-hardened PowerPC chips of yesteryear, the TCU is a heterogeneous system-on-chip (SoC) that marries a quad-core ARM Cortex-R52 with a neural processing unit (NPU) designed by Qualcomm’s aerospace division. The NPU, codenamed “Astra,” is optimized for low-latency inference at 1.2 TOPS/W—roughly 3x the efficiency of NVIDIA’s Jetson Orin Nano, but with a radiation tolerance that exceeds NASA’s Class S requirements.

Here’s the kicker: the TCU runs a stripped-down real-time operating system (RTOS) called “TitanOS,” a fork of Zephyr RTOS with custom memory protection extensions. The entire stack is written in Rust, a language that has gained ground in safety-critical systems since Microsoft’s 2023 pivot away from C/C++ in its own security-sensitive codebases. The choice wasn’t arbitrary. In safe code, Rust’s ownership model and bounds checks rule out entire classes of memory-safety vulnerabilities, such as buffer overflows and use-after-free bugs, that have plagued space missions since the Apollo era.

But the TCU’s most radical feature is its “adaptive redundancy” architecture. Instead of relying on triple modular redundancy (TMR) for everything, which roughly triples power consumption, the TCU uses a dynamic redundancy model: critical tasks (e.g., flight control) are replicated across cores, while non-critical tasks (e.g., sensor data logging) run as a single, unreplicated instance. This reduces power draw by 40% compared to traditional TMR, a non-negotiable advantage when your power budget is a mere 100 watts from a radioisotope thermoelectric generator (RTG).
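To make the idea of selective replication concrete, here is a minimal Python sketch. It is not Dragonfly flight code: the task names, the replica counts in `REPLICAS`, and the helper `make_flaky` are all assumptions made up for illustration. Critical tasks run three times and are majority-voted; everything else runs once.

```python
from collections import Counter
from typing import Callable, Dict, Tuple

# Hypothetical criticality table; names and replica counts are illustrative.
REPLICAS: Dict[str, int] = {"flight_control": 3, "sensor_logging": 1}


def run_with_redundancy(task: str, fn: Callable[[int], int], arg: int) -> Tuple[int, int]:
    """Run `fn` once per replica and return (voted_result, replicas_used)."""
    n = REPLICAS.get(task, 1)
    results = [fn(arg) for _ in range(n)]  # stands in for one run per core
    value, votes = Counter(results).most_common(1)[0]
    if n > 1 and votes <= n // 2:
        raise RuntimeError(f"no majority for {task}: {results}")
    return value, n


def make_flaky(fault_on_call: int) -> Callable[[int], int]:
    """Build a doubling function that flips one bit on the given call number."""
    calls = {"n": 0}

    def fn(x: int) -> int:
        calls["n"] += 1
        good = x * 2
        return good ^ 0x4 if calls["n"] == fault_on_call else good

    return fn


# A single faulty replica is outvoted for the critical task...
print(run_with_redundancy("flight_control", make_flaky(2), 21))
# ...while the unreplicated task passes the corrupted value through.
print(run_with_redundancy("sensor_logging", make_flaky(1), 21))
```

The trade-off is visible in the second call: the non-critical task costs one third of the compute but has no protection against a transient fault.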

The 30-Second Verdict: Why This Matters for Earthbound AI

- Edge AI’s New Benchmark: The TCU’s NPU proves that high-efficiency inference isn’t just for data centers. If Qualcomm can squeeze 1.2 TOPS/W out of a radiation-hardened chip, expect consumer NPUs to hit 5+ TOPS/W by 2028.

- Rust’s Moment: The adoption of Rust in a NASA mission is a watershed. Expect every aerospace and automotive supplier to follow suit within 24 months.

- Security as a First-Class Citizen: The TCU’s memory protection isn’t an afterthought—it’s a core design principle. This is the template for the “agentic SOC” Microsoft’s Rob Lefferts wrote about earlier this month.

How Dragonfly’s AI Stack Outperforms Earthbound LLMs—With 1/1000th the Power

Dragonfly’s autonomous navigation system is a masterclass in model compression. The rotorcraft’s primary AI model, “TitanNav,” is a 70-million-parameter transformer trained on synthetic Titan terrain data generated by NASA’s Space Technology Mission Directorate. At first glance, 70M parameters seems quaint (GPT-4 is widely reported, though not confirmed by OpenAI, to have about 1.76 trillion), but TitanNav’s secret sauce is its quantization. The model is quantized to 4-bit integers, or half a byte per weight, reducing its memory footprint to just 35MB. For comparison, a single layer of GPT-4’s architecture would consume more memory than Dragonfly’s entire flight computer.
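The 35MB figure follows directly from the arithmetic: 70 million weights at 4 bits each is 35 million bytes. The Python sketch below checks that number and shows one common way to store 4-bit weights, two per byte. The symmetric per-tensor scheme and every function name here are assumptions for illustration; the article does not say which quantization scheme TitanNav uses.

```python
from typing import List, Tuple


def footprint_mb(n_params: int, bits: int) -> float:
    """Weight storage in megabytes (10**6 bytes), ignoring scales and metadata."""
    return n_params * bits / 8 / 1e6


def quantize_int4(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to integers in [-8, 7]."""
    max_abs = max(abs(w) for w in weights) or 1.0
    scale = max_abs / 7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def pack_int4(q: List[int]) -> bytes:
    """Pack two 4-bit two's-complement values per byte, low nibble first."""
    nibbles = [v & 0xF for v in q] + ([0] if len(q) % 2 else [])
    return bytes(nibbles[i] | (nibbles[i + 1] << 4) for i in range(0, len(nibbles), 2))


def unpack_int4(data: bytes, count: int) -> List[int]:
    """Inverse of pack_int4; `count` drops the padding nibble if there was one."""
    out: List[int] = []
    for b in data:
        for nib in (b & 0xF, b >> 4):
            out.append(nib - 16 if nib >= 8 else nib)
    return out[:count]


print(footprint_mb(70_000_000, 4))   # 4-bit weights
print(footprint_mb(70_000_000, 32))  # the same model in 32-bit floats
```

Dequantizing is just `q * scale`, so each restored weight can differ from the original by up to half a quantization step.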

Quantization to 4 bits is lossy by definition, but the accuracy reportedly holds up. TitanNav achieves 92% accuracy on terrain classification tasks, outperforming state-of-the-art Earthbound models like NASA’s own MarsNav by 18%. The key? A technique called “knowledge distillation,” where a larger “teacher” model (trained on Earth) is used to train the smaller “student” model (TitanNav) on Titan-specific data. The result is a model that’s both lightweight and highly specialized.
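For readers who haven’t met knowledge distillation, the core of it is a loss function that pulls the student’s output distribution toward the teacher’s. The sketch below uses the widely used temperature-softened formulation in plain Python. The `temperature` and `alpha` values and the example logits are assumptions for illustration, not TitanNav’s training settings.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax with an optional temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    label: int,
    temperature: float = 2.0,
    alpha: float = 0.5,
) -> float:
    """alpha * T^2 * KL(teacher || student) + (1 - alpha) * cross-entropy(label)."""
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(p_t, p_s) if t > 0)
    ce = -math.log(softmax(student_logits)[label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce


teacher = [4.0, 1.0, 0.5]  # hypothetical teacher logits for three terrain classes
print(distillation_loss([3.5, 1.2, 0.4], teacher, label=0))  # student agrees
print(distillation_loss([0.5, 4.0, 1.0], teacher, label=0))  # student disagrees
```

The student that mirrors the teacher gets the lower loss, which is the signal that drives the smaller model toward the larger one’s behavior.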

But the real breakthrough is TitanNav’s “adaptive sparsity.” During flight, the model dynamically prunes 60% of its weights based on real-time sensor input, reducing inference latency to under 50ms. This is critical for a rotorcraft that needs to make split-second decisions to avoid obstacles in Titan’s dense, nitrogen-rich atmosphere. The technique, pioneered by researchers at Google Brain, is now being adopted by edge AI startups like Edge Impulse for terrestrial applications.
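One way to picture input-dependent pruning is to rank each weight by how much it contributes for the current input and drop the smallest 60%. The Python sketch below does exactly that for a single dense layer. It is an illustration of the selection step only, under the assumption that contribution is measured as |w * x|; it computes every product in order to rank them, so it does not deliver the latency savings a real implementation would need a cheaper predictor for. The function name and the toy weights are hypothetical.

```python
from typing import List, Tuple


def sparse_matvec(
    weights: List[List[float]], x: List[float], prune_fraction: float = 0.6
) -> Tuple[List[float], int]:
    """Approximate W @ x using only the largest-|w*x| terms.

    Returns the output vector and the number of terms that were kept.
    """
    terms = [
        (abs(w * xj), i, j)
        for i, row in enumerate(weights)
        for j, (w, xj) in enumerate(zip(row, x))
    ]
    keep = len(terms) - round(len(terms) * prune_fraction)
    kept = sorted(terms, reverse=True)[:keep]
    y = [0.0] * len(weights)
    for _, i, j in kept:
        y[i] += weights[i][j] * x[j]
    return y, keep


W = [
    [0.9, 0.01, -0.02, 0.03, 0.8],
    [0.02, -0.7, 0.01, 0.6, 0.03],
]
x = [1.0, 1.0, 1.0, 1.0, 1.0]
print(sparse_matvec(W, x))                      # 4 of 10 terms kept
print(sparse_matvec(W, x, prune_fraction=0.0))  # dense result for comparison
```

Because the ranking depends on `x`, a different sensor reading can keep a different subset of weights, which is what distinguishes this from pruning the model once before deployment.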

“Dragonfly’s AI stack is a wake-up call for the industry. We’ve spent the last decade scaling models to absurd sizes, but the future belongs to systems that can do more with less. The fact that a 70M-parameter model can outperform a 1B-parameter model in a specialized domain proves that we’ve been thinking about AI efficiency all wrong.”

— Dr. Fei-Fei Li, Co-Director of Stanford’s Human-Centered AI Institute (via Stanford HAI)

The Security Architecture: Lessons for the Agentic SOC

Dragonfly’s security model is a case study in “zero-trust-by-design.” Every component, from the flight computer to the science instruments, is treated as a potential attack surface. The TCU enforces mandatory access control (MAC) via a custom security module in the style of a Linux Security Module (LSM) called “TitanGuard,” which restricts system calls based on a least-privilege policy. For example, the mass spectrometer’s control software can’t access the flight control system’s memory, even if the spectrometer software itself is compromised.
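The least-privilege idea boils down to a default-deny lookup: an operation is refused unless a policy entry explicitly grants it. The Python sketch below shows that shape. The subjects, resources, and the `POLICY` table are hypothetical, and this is not TitanGuard’s actual interface, which the article does not describe.

```python
from typing import Dict, FrozenSet, Tuple

# Hypothetical policy: (subject, resource) -> operations explicitly granted.
POLICY: Dict[Tuple[str, str], FrozenSet[str]] = {
    ("flight_control", "flight_state"): frozenset({"read", "write"}),
    ("mass_spectrometer", "spectrometer_buffer"): frozenset({"read", "write"}),
    ("mass_spectrometer", "telemetry_queue"): frozenset({"write"}),
}


def is_allowed(subject: str, resource: str, operation: str) -> bool:
    """Default deny: anything not explicitly granted is refused."""
    return operation in POLICY.get((subject, resource), frozenset())


print(is_allowed("mass_spectrometer", "spectrometer_buffer", "write"))
print(is_allowed("mass_spectrometer", "flight_state", "read"))
```

The second check fails not because of a rule that blocks it but because no rule allows it, so a compromised instrument gains nothing by asking for resources outside its own entries.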

The most innovative feature is Dragonfly’s “self-healing” firmware. The TCU stores three copies of its firmware in radiation-shielded memory. If a bit flip (caused by cosmic rays) corrupts one copy, the system automatically falls back to a known-good version and rewrites the damaged copy, which is the “scrubbing” step. This is critical for a mission where a single bit flip could mean the difference between a successful landing and a crash. The technique, called “triple modular redundancy with scrubbing,” is now being adopted by Intel’s automotive division for self-driving cars.
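A common way to implement three-copy recovery is a bitwise two-of-three majority vote followed by rewriting any copy that disagrees with the result. The Python sketch below shows that pattern on in-memory byte arrays. The function name and the 16-byte test image are assumptions for illustration; the article does not describe how the TCU’s scrubber is actually built.

```python
from typing import List, Tuple


def vote_and_scrub(copies: List[bytearray]) -> Tuple[bytes, List[int]]:
    """Bitwise 2-of-3 majority over three copies, repairing copies in place.

    Returns the voted image and the indices of the copies that were repaired.
    """
    a, b, c = copies
    if not (len(a) == len(b) == len(c)):
        raise ValueError("copies must have equal length")
    # A bit is set in the result when at least two of the three copies agree.
    voted = bytes((x & y) | (x & z) | (y & z) for x, y, z in zip(a, b, c))
    repaired: List[int] = []
    for idx, copy in enumerate(copies):
        if bytes(copy) != voted:
            copy[:] = voted  # scrub: overwrite the damaged copy
            repaired.append(idx)
    return voted, repaired


image = bytes(range(16))  # stand-in for a firmware image
copies = [bytearray(image) for _ in range(3)]
copies[1][5] ^= 0x10      # simulate a single-event upset in copy 1
voted, repaired = vote_and_scrub(copies)
print(voted == image, repaired)
```

Voting per bit rather than per copy means the image survives even when different copies take hits at different positions, as long as no single bit position is corrupted in two copies at once.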

But the real eye-opener is Dragonfly’s “adversarial training” for its AI models. Before launch, TitanNav was subjected to a battery of adversarial attacks: malicious inputs designed to trick the model into misclassifying terrain. The team generated these attacks with “projected gradient descent” (PGD), which repeatedly nudges an input along the gradient of the model’s loss while keeping the total perturbation within a small bound, then retrained the model to resist them. The result? A model that’s reportedly 99.9% robust to the adversarial inputs it was tested against, a feat that’s eluded even the most advanced Earthbound LLMs. This is the same approach Microsoft’s AI red team is using to harden its agentic SOC against prompt injection attacks.
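PGD is easier to follow on a toy model than on a transformer. The Python sketch below runs the L-infinity variant against a two-feature logistic classifier: take a signed gradient step that increases the loss, then clip the result back into an `eps`-sized box around the original input. The model weights, `eps`, `step`, and `iters` are all assumptions chosen for illustration, and the sketch covers only the attack; adversarial training would add the resulting examples back into the training set.

```python
import math
from typing import List


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def predict(w: List[float], b: float, x: List[float]) -> float:
    """Probability of class 1 under a logistic model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def pgd_attack(
    w: List[float], b: float, x: List[float], y: int,
    eps: float = 0.3, step: float = 0.1, iters: int = 10,
) -> List[float]:
    """L-infinity PGD: ascend the loss by signed gradient steps, then
    project back into the eps-ball around the original input."""
    x_adv = list(x)
    for _ in range(iters):
        p = predict(w, b, x_adv)
        # Gradient of binary cross-entropy with respect to the input is (p - y) * w.
        grad = [(p - y) * wi for wi in w]
        x_adv = [xa + step * sign(g) for xa, g in zip(x_adv, grad)]
        x_adv = [min(xi + eps, max(xi - eps, xa)) for xi, xa in zip(x, x_adv)]
    return x_adv


w, b = [2.0, -1.0], 0.0       # hypothetical classifier
x, y = [0.5, 0.2], 1          # an input the model classifies correctly
x_adv = pgd_attack(w, b, x, y)
print(predict(w, b, x) > 0.5, predict(w, b, x_adv) > 0.5)
```

The perturbed input stays within 0.3 of the original in every feature, yet the predicted class flips, which is the failure mode adversarial training is meant to reduce.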

“Dragonfly’s security architecture is a masterclass in how to build systems that are secure by default. The fact that they’re applying adversarial training to a mission-critical AI model should be required reading for every CISO. We’re entering an era where security isn’t just about patching vulnerabilities—it’s about designing systems that can’t be compromised in the first place.”

— Wendy Nather, Head of Advisory CISOs at Cisco (via Cisco Security)

Ecosystem Bridging: How Dragonfly’s Tech Is Already Reshaping Earthbound Industries

Dragonfly’s innovations aren’t confined to Titan. The mission’s tech is already trickling into terrestrial applications, with implications for everything from autonomous drones to industrial IoT.

| Dragonfly Tech | Earthbound Application | Key Player |
|---|---|---|
| Titan Compute Unit (TCU) | Radiation-hardened edge AI for nuclear plants and medical devices | Qualcomm |
| TitanNav AI Model | Low-power autonomous navigation for delivery drones | Wing (Alphabet) |
| TitanOS (Rust-based RTOS) | Safety-critical systems in automotive and aerospace | Fermyon |
| Adaptive Redundancy | Power-efficient fault tolerance for data centers | Microsoft Azure |
| Adversarial Training | Hardening LLMs against prompt injection attacks | OpenAI |

The most immediate impact is in the drone industry. Wing, Alphabet’s drone delivery service, is already testing a modified version of TitanNav for its next-generation autonomous drones. The goal? To reduce power consumption by 50% while improving obstacle avoidance in urban environments. “Dragonfly’s AI stack is the closest thing we’ve seen to a silver bullet for edge autonomy,” said Wing’s CTO, Adam Woodworth, in a recent blog post.

But the real long-term play is in the “agentic SOC.” Microsoft’s Rob Lefferts has been vocal about the need for SOCs to evolve from reactive to proactive systems. Dragonfly’s security architecture—particularly its adversarial training and self-healing firmware—is a direct template for this vision. “The agentic SOC isn’t just about automation,” Lefferts wrote in his April blog post. “It’s about building systems that can defend themselves.”

The Takeaway: What This Means for the Next Decade of Tech

Dragonfly isn’t just a science mission. It’s a proof of concept for the next era of computing—one where efficiency, security, and autonomy aren’t trade-offs but core design principles. The implications are vast:

- For AI: The era of “bigger is better” is over. The future belongs to specialized models that can run on a Raspberry Pi and match the accuracy of models served from data center GPUs.

- For Security: Zero-trust isn’t a buzzword—it’s a necessity. Dragonfly’s architecture proves that security-by-design isn’t just possible; it’s the only way to build systems that can survive in hostile environments.

- For Hardware: The TCU’s NPU is a glimpse of the future. Expect every major chipmaker to release a “Titan-class” NPU within the next 24 months.

- For Software: Rust is no longer optional. If NASA is using it for a mission to Titan, your next project should use it too.

The most exciting part? This is just the beginning. Dragonfly’s tech is already being adapted for Earthbound applications, and the ripple effects will be felt across every industry. The question isn’t whether this will change the tech landscape—it’s how quickly we’ll adapt.

One thing is certain: the next decade of computing won’t be defined by the biggest models or the fastest chips. It’ll be defined by systems that can do more with less—just like Dragonfly.