Dune Analytics is slashing 25% of its workforce to pivot toward AI-driven agents and institutional-grade on-chain data infrastructure. This strategic realignment shifts the platform from a community-centric dashboarding tool to a high-performance data engine aimed at hedge funds and enterprises requiring automated, real-time blockchain intelligence and streamlined data pipelines.

The “Community Era” of Web3 data is officially hitting a ceiling. For years, Dune thrived as the digital town square for crypto-native analysts—a place where a clever SQL query could uncover a whale’s movements or a protocol’s hidden leakage. But the market has matured. The curiosity of the retail trader has been replaced by the rigorous requirements of the institutional asset manager.

This isn’t just a cost-cutting exercise. It is a fundamental architectural pivot.

The Shift from Visual Dashboards to Agentic Workflows

The core of this transition lies in the move toward AI agents. For the uninitiated, we aren’t talking about a simple chatbot that summarizes a chart. We are talking about Agentic Workflows—autonomous LLM-driven systems capable of iterating through complex data retrieval tasks without human intervention.

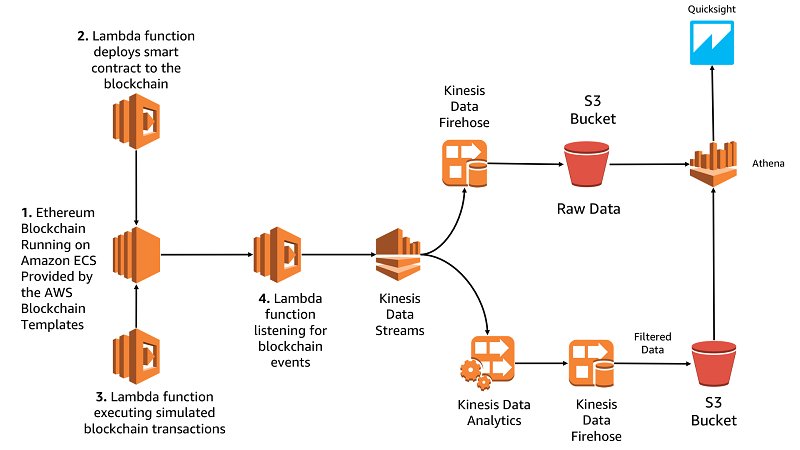

The technical bottleneck for on-chain data has always been the “translation layer.” Raw blockchain data is a nightmare of hex strings and opaque event logs. Historically, you needed a human who spoke both SQL and EVM (Ethereum Virtual Machine) to make sense of it. By integrating large language models (LLMs) tuned specifically for SQL generation, Dune is attempting to collapse that gap.
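To make the “translation layer” concrete, here is a minimal Python sketch (not Dune’s code) that decodes one raw ERC-20 `Transfer` event log into readable fields. The topic hash is the standard keccak256 of `Transfer(address,address,uint256)`; the `Transfer` dataclass, the `decode_transfer` helper, and the sample addresses are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

# keccak256("Transfer(address,address,uint256)"), the standard ERC-20 Transfer topic.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


@dataclass
class Transfer:
    sender: str
    recipient: str
    amount: int  # raw integer units, before applying the token's decimals


def _topic_to_address(topic: str) -> str:
    # Indexed address parameters are left-padded to 32 bytes;
    # the address itself is the last 20 bytes (40 hex characters).
    return "0x" + topic[-40:].lower()


def decode_transfer(topics: list[str], data: str) -> Transfer:
    """Turn a raw ERC-20 Transfer log (topics + data) into a readable record."""
    # ERC-721 reuses the same topic0 but indexes the token ID as a fourth topic,
    # so requiring exactly three topics filters those logs out.
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        raise ValueError("not an ERC-20 Transfer log")
    # The non-indexed uint256 value sits in `data` as one 32-byte big-endian word.
    amount = int(data, 16)
    return Transfer(_topic_to_address(topics[1]), _topic_to_address(topics[2]), amount)


# Usage with a synthetic log (placeholder addresses, not real on-chain data).
example = decode_transfer(
    [TRANSFER_TOPIC, "0x" + "0" * 24 + "11" * 20, "0x" + "0" * 24 + "22" * 20],
    "0x" + format(1_500_000, "064x"),
)
print(example.amount)  # 1500000
```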

The goal is a system where an institutional analyst can ask, “Identify all liquidity pools on Arbitrum with a TVL drop of 20% in the last hour and cross-reference them with recent governance votes,” and the AI agent doesn’t just find the data: it writes the query, executes it, validates the result for hallucinations, and pushes the alert to a Bloomberg Terminal.
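The write, execute, validate, alert loop can be sketched in a few lines. This is a hedged Python illustration, not Dune’s agent: `generate_sql` stands in for an LLM call (here a scripted stub), an in-memory SQLite table named `pools` stands in for on-chain data, and `alert` stands in for a delivery channel such as a terminal feed. All of these names are hypothetical.

```python
import sqlite3
from typing import Callable, Optional

Rows = list[tuple]


def run_agent(
    question: str,
    generate_sql: Callable[[str, Optional[str]], str],  # hypothetical LLM call
    conn: sqlite3.Connection,
    validate: Callable[[Rows], Optional[str]],  # returns a problem description, or None
    alert: Callable[[Rows], None],  # hypothetical delivery channel
    max_attempts: int = 3,
) -> Optional[Rows]:
    """Write -> execute -> validate -> alert, feeding each failure back to the model."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        sql = generate_sql(question, feedback)
        try:
            rows = conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            feedback = f"SQL error: {exc}"  # let the model repair its own query
            continue
        problem = validate(rows)
        if problem is None:
            alert(rows)
            return rows
        feedback = f"validation failed: {problem}"
    return None  # out of attempts: escalate to a human


# Demo with a scripted stand-in for the LLM and toy data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pools (pool TEXT, tvl_1h_ago REAL, tvl_now REAL)")
conn.executemany("INSERT INTO pools VALUES (?, ?, ?)", [("A", 100.0, 70.0), ("B", 100.0, 95.0)])

drafts = iter([
    "SELECT pool FROM pool_tvl",  # first draft names a table that does not exist
    "SELECT pool, 1 - tvl_now / tvl_1h_ago AS drop_pct FROM pools "
    "WHERE tvl_now <= 0.8 * tvl_1h_ago",
])
alerts: list[Rows] = []
rows = run_agent(
    "Which pools lost at least 20% of TVL in the last hour?",
    lambda question, feedback: next(drafts),
    conn,
    validate=lambda rs: None if all(r[1] >= 0.2 for r in rs) else "drop below 20%",
    alert=alerts.append,
)
print(rows)
```

The validator here is only a sanity check that results honor the question’s own threshold; catching subtler hallucinations would require cross-checking against independent data.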

The Technical Stack: dbt and the Semantic Layer

The recent release of the dbt (data build tool) Connector is the smoking gun here. By allowing teams to integrate Dune’s data into their own dbt pipelines, Dune is effectively moving “upstream.” They are no longer just the destination (the dashboard); they are becoming the infrastructure (the data warehouse).

This allows for the creation of a Semantic Layer. In data engineering, a semantic layer acts as a translation map between complex data tables and business terms. When an AI agent queries “Revenue,” it doesn’t have to guess which table to use; it refers to the semantic layer defined via dbt. This shortens the path from question to query and sharply reduces, though it cannot fully eliminate, the “hallucination” problem common in general-purpose LLMs.
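The idea can be illustrated without dbt at all. The Python sketch below is a simplified stand-in, not the syntax of dbt’s own semantic layer (MetricFlow): each business term maps to exactly one table and aggregate, plus an allowlist of dimensions it may be grouped by. The `protocol_fees` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    table: str
    expression: str  # the one agreed-upon SQL definition of the business term
    dimensions: tuple[str, ...]  # columns this metric may be grouped by


# Hypothetical definitions; a real team would keep these under version control.
SEMANTIC_LAYER = {
    "revenue": Metric("protocol_fees", "SUM(fee_usd)", ("chain", "day")),
}


def compile_metric(term: str, group_by: Optional[str] = None) -> str:
    """Resolve a business term to SQL so the agent never has to guess a table."""
    metric = SEMANTIC_LAYER.get(term.lower())
    if metric is None:
        raise KeyError(f"{term!r} is not defined in the semantic layer")
    if group_by is None:
        return f"SELECT {metric.expression} FROM {metric.table}"
    if group_by not in metric.dimensions:  # also keeps free-form text out of the SQL
        raise ValueError(f"{term!r} cannot be grouped by {group_by!r}")
    return (f"SELECT {group_by}, {metric.expression} FROM {metric.table} "
            f"GROUP BY {group_by} ORDER BY {group_by}")


# Toy data, not real protocol fees.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE protocol_fees (chain TEXT, day TEXT, fee_usd REAL)")
conn.executemany(
    "INSERT INTO protocol_fees VALUES (?, ?, ?)",
    [("arbitrum", "2024-01-01", 120.0), ("ethereum", "2024-01-01", 300.0),
     ("arbitrum", "2024-01-02", 80.0)],
)
print(conn.execute(compile_metric("Revenue", group_by="chain")).fetchall())
# [('arbitrum', 200.0), ('ethereum', 300.0)]
```

An unknown term fails loudly instead of letting the model improvise a definition, which is the property that curbs hallucinated metrics.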

“The transition from static dashboards to agentic data retrieval is the only way to scale Web3 intelligence. We are moving away from ‘looking at data’ toward ‘interacting with data’ in real-time. The bottleneck is no longer the availability of data, but the latency of the insight.”

Institutional On-Chain Data: The War for the “Single Source of Truth”

Why the 25% layoff? Because maintaining a community-facing product is a different business model than building enterprise software. Community products require high-touch support and constant UI iteration. Institutional products require 99.99% uptime, rigorous security compliance, and deep API integration.

Institutions don’t want a pretty chart; they want a clean, idempotent data feed they can plug into their existing risk management software. They need “Institutional On-Chain Data”—which is code for data that has been cleaned, verified, and indexed with a level of precision that exceeds the “best effort” nature of community queries.
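“Idempotent” has a precise meaning here: replaying the same batch of events, for example after a network retry, must not create duplicates. A minimal sketch, assuming a hypothetical `transfers` table keyed on transaction hash plus log index (which together identify one log on a chain), uses SQLite’s `INSERT OR IGNORE`:

```python
import sqlite3


def load_batch(conn: sqlite3.Connection, events: list[tuple[str, int, int]]) -> None:
    """Idempotent load: rows whose (tx_hash, log_index) key already exists are skipped."""
    with conn:  # commit the whole batch atomically, or roll it back on error
        conn.executemany(
            "INSERT OR IGNORE INTO transfers (tx_hash, log_index, amount) VALUES (?, ?, ?)",
            events,
        )


conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE transfers (
           tx_hash   TEXT    NOT NULL,
           log_index INTEGER NOT NULL,
           amount    INTEGER NOT NULL,
           PRIMARY KEY (tx_hash, log_index)
       )"""
)
# Placeholder hashes, not real transactions.
batch = [("0xaaa", 0, 100), ("0xaaa", 1, 250), ("0xbbb", 0, 75)]
load_batch(conn, batch)
load_batch(conn, batch)  # simulated retry of the same batch
print(conn.execute("SELECT COUNT(*) FROM transfers").fetchone()[0])  # 3
```

Ignoring conflicts is the simplest policy; an indexer that must survive chain reorganizations would instead need to delete and reload, or upsert, the affected blocks.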

This puts Dune in direct competition with the likes of The Graph and Flipside Crypto, but with a distinct advantage: the historical moat of their community-generated query library. Dune isn’t starting from scratch; they are training their AI agents on the largest repository of human-written blockchain SQL in existence.

The Enterprise Pivot: A Comparative Analysis

| Feature | Community-Centric (Old Dune) | Institutional/Agentic (New Dune) |
|---|---|---|
| Primary Interface | Visual Dashboards / Web UI | API / AI Agents / dbt Pipelines |
| Query Logic | Manual SQL by Analysts | Natural Language → SQL (LLM) |
| Data Velocity | Near Real-Time / Batch | Low-Latency / Event-Driven |
| User Persona | Crypto-Native Researchers | Quant Funds / Compliance Officers |
| Value Prop | Information Discovery | Operational Intelligence |

The Macro Risk: Platform Lock-in and the Open-Source Dilemma

There is a tension here that the PR doesn’t mention. Dune’s rise was fueled by an open, collaborative spirit. By pivoting toward institutional silos and proprietary AI agents, they risk alienating the very community that built their data moat. If the “power users” feel the platform is becoming a walled garden for hedge funds, they may migrate to more open alternatives.

Moreover, the reliance on LLMs for data retrieval introduces a new attack vector. “Prompt injection” in a data context could let a malicious actor trick an AI agent into leaking sensitive institutional query patterns, or manipulate the agent’s view of a protocol’s state to trigger automated trades.
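One common mitigation is to treat model-written SQL as untrusted input and enforce permissions in the database engine rather than in the prompt. The sketch below uses Python’s standard `sqlite3.Connection.set_authorizer` hook to allow only reads from an allowlist of tables; the table names are hypothetical, and a production warehouse would rely on its own role and permission system instead. This limits what an injected query can read or change, but it does not stop an attacker from feeding the agent misleading data.

```python
import sqlite3

ALLOWED_TABLES = {"pools"}  # hypothetical allowlist of tables the agent may read


def read_only_authorizer(action, arg1, arg2, db_name, trigger_or_view):
    """Deny by default; permit only SELECTs that read allowlisted tables."""
    if action in (sqlite3.SQLITE_SELECT, sqlite3.SQLITE_FUNCTION):
        return sqlite3.SQLITE_OK  # FUNCTION covers calls such as SUM() or COUNT()
    if action == sqlite3.SQLITE_READ:  # arg1 = table name, arg2 = column name
        return sqlite3.SQLITE_OK if arg1 in ALLOWED_TABLES else sqlite3.SQLITE_DENY
    return sqlite3.SQLITE_DENY  # INSERT, UPDATE, DELETE, DROP, ATTACH, PRAGMA, ...


def run_untrusted(conn: sqlite3.Connection, sql: str):
    """Execute model-generated SQL; return rows, or a rejection message."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.DatabaseError as exc:
        return f"rejected: {exc}"


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pools (pool TEXT, tvl REAL)")
conn.execute("CREATE TABLE client_positions (client TEXT, size REAL)")
conn.execute("INSERT INTO pools VALUES ('A', 100.0)")
conn.commit()
conn.set_authorizer(read_only_authorizer)  # installed only after setup is done

print(run_untrusted(conn, "SELECT pool, tvl FROM pools"))
print(run_untrusted(conn, "SELECT client, size FROM client_positions"))
print(run_untrusted(conn, "DELETE FROM pools"))
```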

We are seeing a broader trend across Silicon Valley: the “Efficiency Era.” Companies are stripping away the “growth-at-all-costs” headcount and replacing manual workflows with autonomous agents. Dune is simply the first major Web3 data player to do this with ruthless transparency.

The 30-Second Verdict

Dune is betting that the future of blockchain analysis isn’t a human staring at a screen, but an AI agent monitoring a stream. By shedding 25% of its staff, it is trading human versatility for algorithmic scale. For the institutional client, this is a win. For the community analyst, the era of the “free and open” dashboard is likely drawing to a close.

The move is logically sound, technically ambitious, and culturally risky. But in the current market, the “geek-chic” appeal of community dashboards doesn’t pay the bills—institutional API contracts do.