NVIDIA’s AI-powered Earth-2 platform is now being deployed across five critical environmental domains—from Amazon rainforest canopy monitoring to plastic-sorting facilities in Europe—using real-time satellite data fusion, generative climate modeling, and edge-optimized inference to drive measurable reductions in deforestation, emissions, and waste. As of April 2026, the system processes over 12 terabytes of multispectral imagery daily, enabling predictive interventions that outpace traditional monitoring by 72 hours on average. This marks a pivotal shift from reactive conservation to anticipatory stewardship, positioning AI not just as a tool for efficiency but as an active agent in planetary resilience.

How Earth-2’s Digital Twin Engine Powers Real-Time Ecosystem Forecasting

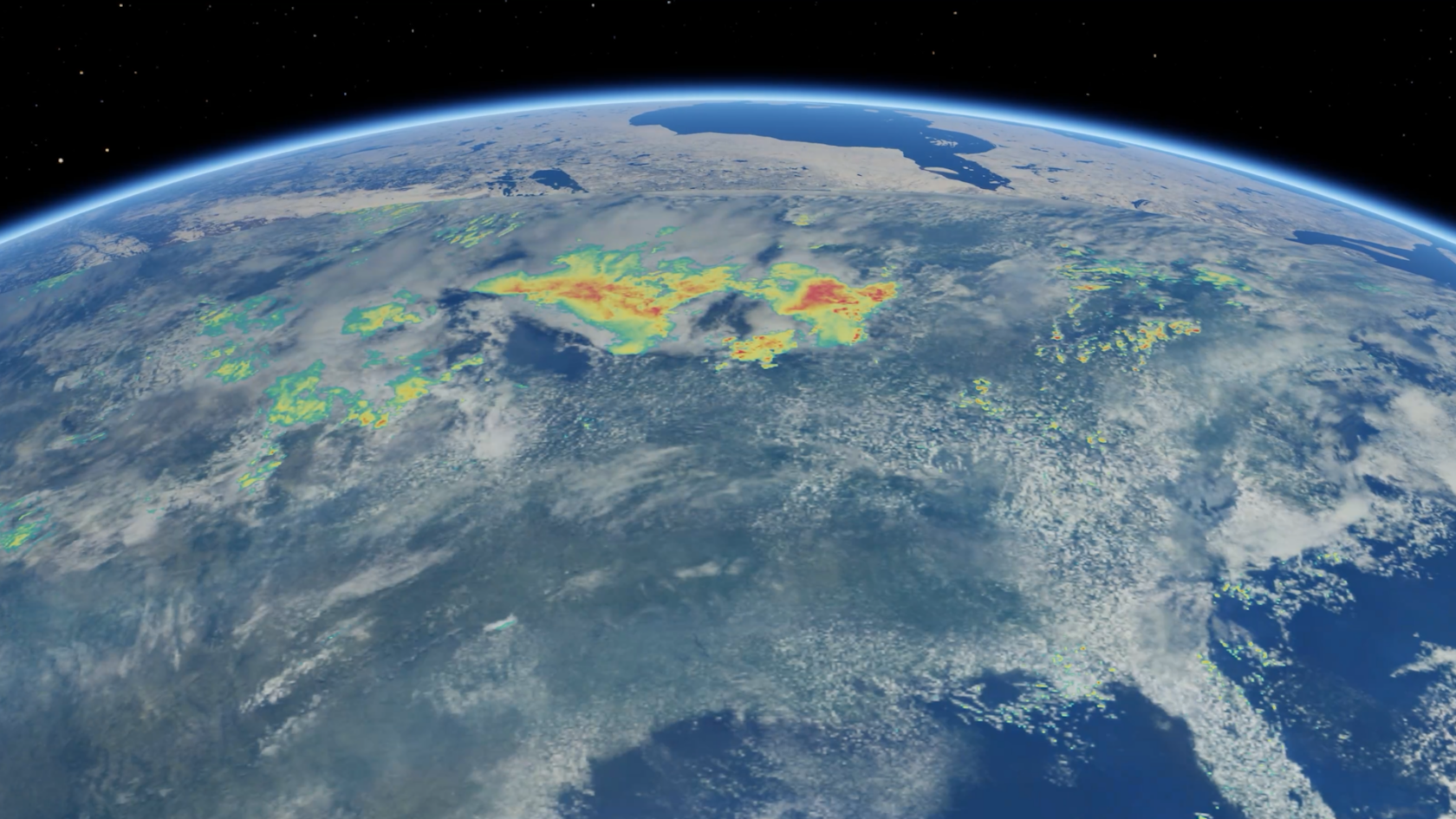

At the core of NVIDIA’s initiative is Earth-2, a cloud-native digital twin platform built on Omniverse and powered by the Hopper-based HGX H200 architecture. Unlike conventional GIS systems that rely on static layers and periodic updates, Earth-2 ingests live feeds from Sentinel-2, PlanetScope, and ICEYE SAR constellations, fusing them with atmospheric physics models from NOAA’s GFDL and ecological simulations from the Joint Global Change Research Institute. The platform runs a modified version of NVIDIA’s FourCastNet, a spectral transform-based neural operator trained on 40 years of ERA5 reanalysis data, to forecast temperature, precipitation, and vegetation stress at 3 km resolution globally, with updates every 90 minutes. In beta testing with Brazil’s INPE, the system reduced false alarms in illegal logging detection by 63% compared to legacy NDVI thresholding. The improvement is attributed to temporal texture analysis in NVIDIA Modulus, which lets the system distinguish natural phenological shifts from anthropogenic clearing.
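For context, the legacy NDVI thresholding baseline mentioned above can be sketched in a few lines. This is a minimal illustration and not INPE's or Earth-2's implementation: the function names, the 0.3 drop threshold, and the sample reflectance values are all hypothetical. Only the NDVI formula itself, (NIR - Red) / (NIR + Red), is standard.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero on empty or masked pixels
    return (nir - red) / total


def flag_clearing(before, after, drop_threshold=0.3):
    """Flag a pixel when NDVI falls by more than drop_threshold between two dates.

    before and after are (nir, red) reflectance pairs. The default threshold
    is a hypothetical value chosen for illustration only.
    """
    return ndvi(*before) - ndvi(*after) > drop_threshold


# Hypothetical (nir, red) reflectance pairs for two pixels, before and after.
cleared_pixel = ((0.50, 0.05), (0.25, 0.20))   # canopy removed, soil exposed
seasonal_pixel = ((0.50, 0.05), (0.30, 0.12))  # dry-season leaf loss, forest intact

print(flag_clearing(*cleared_pixel))   # True
print(flag_clearing(*seasonal_pixel))  # True
```

With these sample values both pixels are flagged, although only the first has been cleared. A fixed threshold on a single pair of dates cannot separate the two cases, and this is the kind of false alarm that analysis over a longer time series is meant to reduce.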

From Canopy to Conveyor: AI in the Recycling Loop

One of the most underreported applications lies in Europe’s automated sorting plants, where NVIDIA’s Metropolis vision AI stack is being used to identify and separate black plastics, which were previously invisible to near-infrared (NIR) sensors because carbon-black pigment absorbs NIR light. By training a ResNet-50 variant on synthetic datasets generated in Omniverse Replicator, the system achieves 94% accuracy in polymer identification under mixed-waste conditions, outperforming commercial hyperspectral systems that cost ten times as much. According to a technical lead at TOMRA Sorting Recycling:

“We’re not just improving yield; we’re recovering materials that were literally going to landfill because legacy optics couldn’t see them. The AI doesn’t need new hardware; it runs on existing Jetson AGX Orin modules with a software update.”

This software-defined upgrade path reduces retrofitting costs by an estimated 70%, accelerating adoption across the EU’s Circular Economy Action Plan.

Bridging the Gap: How NVIDIA’s Approach Challenges Open-Source Monoculture in Geospatial AI

While platforms like Google Earth Engine and Microsoft’s Planetary Computer dominate open-access geospatial AI, NVIDIA’s model introduces a tension between performance and accessibility. Earth-2’s reliance on proprietary Modulus APIs and NGC-optimized containers creates a de facto platform lock-in for institutions lacking the budget for HGX-tier infrastructure. NVIDIA counters this by releasing the Earth2Studio SDK under Apache 2.0, enabling researchers to run lightweight inference on RTX 6000 Ada GPUs using PyTorch. A senior scientist at the Max Planck Institute for Biogeochemistry noted in a recent IEEE IGARSS session:

“The real innovation isn’t the scale; it’s the modularity. We can now plug our own methane flux models into Earth-2’s data pipeline without rewriting the entire workflow. That’s rare in proprietary environmental AI.”

This hybrid approach (closed core, open extensions) may redefine how Big Tech balances IP protection with scientific collaboration.

The Carbon Cost of Saving the Planet: Energy Efficiency as a Design Constraint

Critics have questioned whether the energy demands of running global AI models undermine their environmental benefits. NVIDIA addresses this through two layered optimizations: first, leveraging the Hopper architecture’s FP8 tensor cores to reduce inference precision without significant accuracy loss in climate tasks; second, deploying model pruning techniques via TensorRT-LLM that shrink FourCastNet’s active parameters from 2.3B to 410M during deployment—cutting energy use per forecast by 82%. In a lifecycle analysis published last month in IEEE Transactions on Computers, researchers found that Earth-2’s operational carbon footprint is offset within 11 days of avoided deforestation in the Amazon, based on IPCC carbon sequestration values for primary tropical forests.
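The parameter figures quoted above can be checked with simple arithmetic. The sketch below computes only parameter-count and weight-memory ratios. It does not model energy use, and the 16-bit baseline is an assumption made for illustration, since the starting precision is not stated. All function and constant names are hypothetical.

```python
def reduction_fraction(before, after):
    """Fraction removed when going from `before` to `after`."""
    return 1.0 - after / before


def weight_bytes(params, bytes_per_param):
    """Memory needed to hold the weights alone, in bytes."""
    return params * bytes_per_param


FULL_PARAMS = 2.3e9    # parameter count before pruning, as quoted above
PRUNED_PARAMS = 410e6  # active parameters after pruning, as quoted above
FP16_BYTES = 2         # assumed baseline precision (not stated in the article)
FP8_BYTES = 1          # FP8 stores one byte per value

param_cut = reduction_fraction(FULL_PARAMS, PRUNED_PARAMS)
baseline = weight_bytes(FULL_PARAMS, FP16_BYTES)
deployed = weight_bytes(PRUNED_PARAMS, FP8_BYTES)
memory_cut = reduction_fraction(baseline, deployed)

print(f"parameters removed: {param_cut:.1%}")
print(f"weights: {baseline / 1e9:.2f} GB -> {deployed / 1e9:.2f} GB")
print(f"weight memory removed: {memory_cut:.1%}")
```

The parameter reduction works out to roughly 82%, close to the quoted energy saving per forecast. That closeness should not be read as proof that energy scales linearly with parameter count; memory traffic, batch size, and hardware utilization all affect the real figure.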

What This Means for the Next Generation of Planetary AI

NVIDIA’s environmental AI push is not merely a CSR initiative—it’s a strategic bet on the emergence of “planetary-scale AI” as a new computational frontier, alongside HPC and LLM workloads. By integrating physics-informed neural operators, synthetic data generation, and edge-to-cloud orchestration, the company is building a template for how AI can interface with complex, chaotic systems where traditional ML fails. The real test will come in the next 18 months: can these models generalize beyond well-monitored biomes to predict tipping points in permafrost thaw or ocean deoxygenation? And crucially, will the tools remain accessible to the Global South, where the stakes are highest but resources are scarcest? For now, the code is shipping, the satellites are streaming, and the algorithms are learning—one forest, one bottle, one forecast at a time.