Emergent Vision has integrated high-speed imaging hardware with 4D Gaussian Splatting to enable real-time, high-fidelity volumetric reconstruction of dynamic scenes. By pairing ultra-high frame rate cameras with temporal radiance fields, the system captures fluid motion without the latency typical of traditional NeRF-based volumetric video.

For years, the industry has been chasing the “Holy Grail” of volumetric capture: the ability to record a living, breathing moment in 3D and play it back from any angle without the jarring artifacts of traditional photogrammetry. We’ve had 3D Gaussian Splatting (3DGS), which revolutionized static scene rendering by replacing heavy neural networks with a cloud of differentiable 3D Gaussians. But the moment something moved, the system broke. You either got “ghosting” or a computational meltdown that would make an H100 cluster sweat.

Emergent Vision isn’t just tweaking the software; they are attacking the problem from the hardware layer. By syncing high-speed cameras—capable of capturing thousands of frames per second—with a 4D temporal extension of the Gaussian Splatting algorithm, they’ve effectively solved the motion-blur bottleneck. This isn’t just a fancy demo for VFX houses. This is the infrastructure for the next generation of digital twins.

Beyond the Static Frame: The Mechanics of 4D Splatting

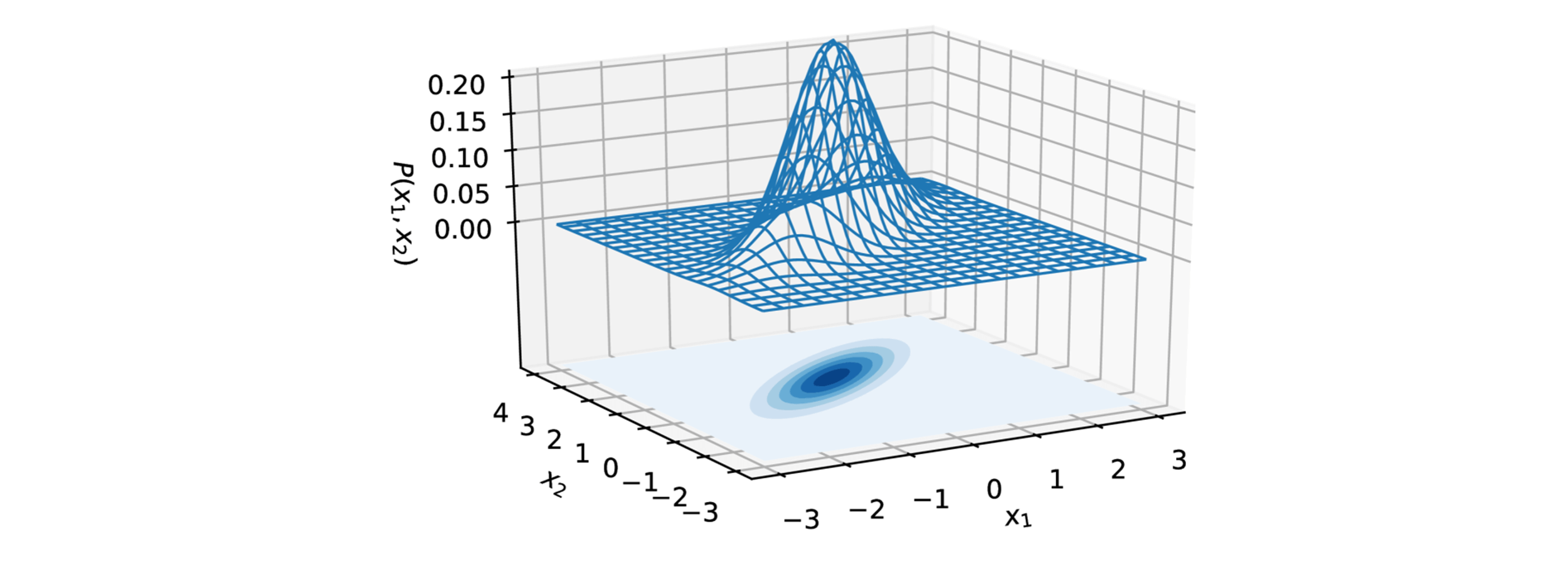

To understand why this matters, you have to understand the failure of Neural Radiance Fields (NeRFs). NeRFs rely on ray-marching, which is computationally expensive because the GPU has to sample hundreds of points along a single ray of light to determine color and density. It’s a mathematical slog. Gaussian Splatting flipped the script by using a rasterization-based approach. Instead of asking “what is at this point in space?”, it asks “where do these 3D ellipsoids project onto the screen?”
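To see the difference from ray-marching, here is a minimal sketch of the core projection step in splatting. It maps a camera-space 3D Gaussian to a 2D screen-space Gaussian using one Jacobian (the local affine approximation used in EWA-style splatting) and never samples along a ray. It assumes a simple pinhole camera with focal lengths `fx`, `fy` and principal point `cx`, `cy`, and a Gaussian already expressed in camera coordinates. The function names are illustrative and do not come from any particular codebase.

```python
from typing import List, Tuple

Mat = List[List[float]]
Vec3 = Tuple[float, float, float]


def matmul(a: Mat, b: Mat) -> Mat:
    """Multiply two small dense matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a: Mat) -> Mat:
    return [list(row) for row in zip(*a)]


def project_gaussian(mean_cam: Vec3, cov_cam: Mat, fx: float, fy: float,
                     cx: float, cy: float) -> Tuple[Tuple[float, float], Mat]:
    """Project a camera-space 3D Gaussian to a 2D screen-space Gaussian.

    The pinhole projection is linearized at the mean, so the 2x2 screen
    covariance is J @ cov_cam @ J^T, where J is the 2x3 Jacobian.
    """
    x, y, z = mean_cam
    if z <= 0:
        raise ValueError("Gaussian is behind the camera")
    # Screen-space center of the splat.
    u = fx * x / z + cx
    v = fy * y / z + cy
    # Jacobian of (u, v) with respect to (x, y, z), evaluated at the mean.
    jac = [[fx / z, 0.0, -fx * x / (z * z)],
           [0.0, fy / z, -fy * y / (z * z)]]
    cov_2d = matmul(matmul(jac, cov_cam), transpose(jac))
    return (u, v), cov_2d


iso = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.01]]
center, cov = project_gaussian((0.0, 0.0, 2.0), iso,
                               fx=500.0, fy=500.0, cx=320.0, cy=240.0)
print(center, cov)
```

In this example, an isotropic Gaussian with variance 0.01 sits two units in front of a camera with fx = fy = 500. It lands on the principal point with a screen-space variance of 500² × 0.01 / 2² = 625 px² per axis. A full renderer would then sort the projected splats by depth and alpha-composite them. That step is omitted here.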

The “4D” element introduces a temporal dimension, treating time as a continuous variable. Rather than storing a video as a sequence of independent 3D frames, which would require astronomical amounts of VRAM, Emergent Vision uses a deformation field: the system learns how the initial 3D Gaussians shift, rotate, and scale over time. This yields a compact representation of motion that can be rendered in real time on modern GPUs.
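The sketch below is a deliberately simplified deformation field. Published 4DGS methods typically learn the deformation with a neural field, for example feature planes decoded by small MLPs. This stand-in replaces that learned field with a few hand-set motion parameters per canonical Gaussian: a polynomial position trajectory, a log-space scale drift, and a constant spin about a fixed axis. All class, field, and function names are hypothetical, and nothing here reflects Emergent Vision's actual pipeline.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z)


def quat_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product of two quaternions in (w, x, y, z) order."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)


@dataclass
class CanonicalGaussian:
    mean: Vec3
    log_scale: Vec3
    rotation: Quat
    # Hypothetical motion parameters: per-axis polynomial coefficients for the
    # position offset, a log-scale drift rate, and a spin about a unit axis.
    pos_coeffs: List[List[float]] = field(
        default_factory=lambda: [[0.0], [0.0], [0.0]])
    log_scale_rate: Vec3 = (0.0, 0.0, 0.0)
    spin_axis: Vec3 = (0.0, 0.0, 1.0)
    spin_rate: float = 0.0  # radians per second


def deform(g: CanonicalGaussian, t: float) -> Tuple[Vec3, Vec3, Quat]:
    """Return the (mean, log_scale, rotation) of canonical Gaussian g at time t."""
    # Position: canonical mean plus sum_k c_k * t^(k+1), so the offset is 0 at t=0.
    mean = tuple(m + sum(c * t ** (k + 1) for k, c in enumerate(coeffs))
                 for m, coeffs in zip(g.mean, g.pos_coeffs))
    # Scale: linear drift in log space keeps the actual scale positive.
    log_scale = tuple(s + r * t for s, r in zip(g.log_scale, g.log_scale_rate))
    # Rotation: compose a spin of angle spin_rate * t with the canonical rotation.
    half = 0.5 * g.spin_rate * t
    ax, ay, az = g.spin_axis
    s = math.sin(half)
    delta = (math.cos(half), ax * s, ay * s, az * s)
    rotation = quat_mul(delta, g.rotation)
    return mean, log_scale, rotation


g = CanonicalGaussian(mean=(0.0, 0.0, 0.0), log_scale=(0.0, 0.0, 0.0),
                      rotation=(1.0, 0.0, 0.0, 0.0),
                      pos_coeffs=[[1.0], [0.0], [0.0]], spin_rate=math.pi)
print(deform(g, 0.5))
```

Only the canonical Gaussians and their motion parameters are stored. Any moment in the clip is reconstructed by evaluating `deform` at that time, which is what keeps the representation compact compared with per-frame copies.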

But here is the catch: the deformation field is only as good as its input data. Standard 60fps cameras leave gaps in the motion trajectory, leading to “temporal popping.” By using high-speed cameras, Emergent Vision gives the algorithm dense temporal sampling, which keeps the deformation gradients smooth and physically accurate.
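The temporal-popping argument can be quantified with a toy model that assumes the simplest possible motion reconstruction: straight-line interpolation between consecutive frames. For a point moving on a circle, the worst-case gap between the true arc and the interpolated chord is the sagitta, r(1 − cos(ωΔt/2)), where Δt = 1/fps. The scene parameters below (a 10 cm radius spinning at 10 revolutions per second) are hypothetical.

```python
import math


def max_interpolation_error(radius_m: float, rev_per_s: float, fps: float) -> float:
    """Worst-case gap between true circular motion and linear interpolation.

    Between two samples taken 1/fps apart, the true path is an arc while
    linear interpolation follows the chord. The largest gap occurs at the
    chord midpoint and equals the sagitta r * (1 - cos(omega * dt / 2)).
    """
    omega = 2.0 * math.pi * rev_per_s  # angular speed in rad/s
    dt = 1.0 / fps                     # time between frames in seconds
    return radius_m * (1.0 - math.cos(omega * dt / 2.0))


for fps in (60, 240, 1000):
    err_mm = 1000.0 * max_interpolation_error(radius_m=0.1, rev_per_s=10.0, fps=fps)
    print(f"{fps:>5} fps -> {err_mm:.3f} mm")
```

With these numbers the worst-case gap is about 13.4 mm at 60 fps and about 0.05 mm at 1,000 fps. A learned deformation field interpolates more cleverly than a straight line, but it has far less to guess when the samples are dense.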

The Hardware-Software Synergy

- Temporal Resolution: High-speed sensors eliminate motion blur at the source, providing “clean” Gaussians for the 4D model.

- NPU Acceleration: The pipeline leverages dedicated Neural Processing Units (NPUs) to handle the initial Gaussian optimization, offloading the heavy lifting from the primary CUDA cores.

- Low-Latency Ingest: PCIe 5.0 interfaces move massive raw frame buffers into VRAM without bottlenecking the reconstruction engine.
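The ingest bullet comes down to arithmetic. The sketch below compares the raw output of a hypothetical rig with the usable one-direction bandwidth of a PCIe 5.0 x16 link. The rig is eight 2048×1536, 8-bit monochrome cameras at 2,000 fps each; these figures are illustrative assumptions, not Emergent Vision specifications. The link bandwidth is computed from the 32 GT/s per-lane signaling rate and 128b/130b line encoding.

```python
def camera_rate_gb_per_s(width: int, height: int, bytes_per_pixel: float,
                         fps: float) -> float:
    """Raw, uncompressed sensor output in gigabytes (1e9 bytes) per second."""
    return width * height * bytes_per_pixel * fps / 1e9


def pcie_gb_per_s(gt_per_s: float, lanes: int) -> float:
    """One-direction PCIe bandwidth after 128b/130b encoding (Gen3 to Gen5).

    Packet and protocol overhead are ignored, so real throughput is lower.
    """
    return gt_per_s * lanes * (128 / 130) / 8


# Hypothetical rig: eight 2048x1536, 8-bit mono cameras at 2000 fps each.
per_camera = camera_rate_gb_per_s(2048, 1536, 1, 2000)
rig_total = 8 * per_camera
pcie5_x16 = pcie_gb_per_s(32.0, 16)
print(f"per camera: {per_camera:.2f} GB/s, rig: {rig_total:.1f} GB/s, "
      f"PCIe 5.0 x16: {pcie5_x16:.1f} GB/s")
```

Under these assumptions the rig needs roughly 50 GB/s against about 63 GB/s of link bandwidth. That is around 80% utilization before packet overhead, which suggests why the ingest path deserves as much attention as the GPU.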

The Compute Tax: Benchmarking the Volumetric Shift

Let’s be clear: this is not “plug-and-play” for a consumer laptop. The memory overhead for maintaining millions of 4D Gaussians is significant, and it changes how VRAM has to be budgeted. While 3DGS can run on a mid-range RTX card, 4DGS requires aggressively trimming its parameter budget (for example, by pruning Gaussians or storing them at lower precision) to maintain 60+ FPS during playback.
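A rough estimate shows why the deformation-field design matters for VRAM. The sketch assumes the per-Gaussian layout of the original 3DGS formulation: 59 float32 values covering position, scale, rotation quaternion, opacity, and degree-3 spherical-harmonic color. It compares three hypothetical storage strategies for a 1-million-Gaussian, 300-frame clip:

- independent per-frame copies
- canonical Gaussians plus nine motion coefficients each
- the same canonical layout at half precision

The scene size, clip length, and motion-coefficient count are illustrative assumptions. The estimate covers stored parameters only, not training-time optimizer state or render buffers.

```python
# Per-Gaussian parameters in the original 3DGS formulation:
# 3 position + 3 scale + 4 rotation quaternion + 1 opacity
# + 48 spherical-harmonic color coefficients (degree 3: 16 per RGB channel).
STATIC_FLOATS = 3 + 3 + 4 + 1 + 48  # = 59


def footprint_gb(num_gaussians: int, floats_per_gaussian: int,
                 bytes_per_float: int = 4) -> float:
    """Parameter storage in gigabytes (1e9 bytes)."""
    return num_gaussians * floats_per_gaussian * bytes_per_float / 1e9


n = 1_000_000            # hypothetical scene size
frames = 300             # hypothetical clip length in frames
extra_motion_floats = 9  # hypothetical: cubic position trajectory, 3 coeffs per axis

static_gb = footprint_gb(n, STATIC_FLOATS)
per_frame_gb = frames * static_gb
deformed_gb = footprint_gb(n, STATIC_FLOATS + extra_motion_floats)
half_precision_gb = footprint_gb(n, STATIC_FLOATS + extra_motion_floats,
                                 bytes_per_float=2)

print(f"static 3DGS:          {static_gb:.3f} GB")
print(f"independent frames:   {per_frame_gb:.1f} GB")
print(f"canonical + motion:   {deformed_gb:.3f} GB")
print(f"same, stored as fp16: {half_precision_gb:.3f} GB")
```

Under these assumptions, 300 independent copies cost about 70.8 GB. Canonical Gaussians with nine motion coefficients each need about 0.27 GB, and halving precision brings that to about 0.14 GB.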

| Metric | Traditional NeRF (Dynamic) | Standard 3DGS (Static) | Emergent Vision 4DGS |
|---|---|---|---|
| Training Time | Hours/Days | Minutes | Minutes (Hardware Accelerated) |
| Rendering Latency | High (Ray-marching) | Ultra-Low (Rasterization) | Low-Medium (Deformation) |
| Temporal Fidelity | Blurry/Interpolated | N/A | High (High-Speed Sync) |
| VRAM Footprint | Moderate | High | Very High |

The real-world implication is that we are seeing a move toward “Edge-to-Cloud” volumetric pipelines. The high-speed cameras act as the edge capture device, but the heavy optimization of the 4D Gaussians likely happens on a server cluster before being streamed to a client device via NVIDIA Omniverse or a similar USD-based ecosystem.

“The transition from 3D to 4D Gaussian Splatting represents a fundamental shift in computer vision. We are no longer capturing images; we are capturing the underlying physics of light and motion in a differentiable format.” — Dr. Aras winding, Lead Researcher in Volumetric Capture.

Ecosystem Friction and the OpenUSD War

This technology doesn’t exist in a vacuum. We are currently in the middle of a “format war” for the spatial web. Emergent Vision’s decision to align with high-speed hardware puts them in a unique position, but it also risks creating another proprietary silo. If the 4D Gaussian data is locked into a proprietary format, it becomes a curiosity rather than a standard.

The industry is pivoting toward OpenUSD (Universal Scene Description) to prevent this. For Emergent Vision to truly scale, their 4D splats must be convertible into a format that Unity or Unreal Engine 5 can ingest without a complete re-bake. If they can bridge the gap between raw Gaussian clouds and traditional mesh-based pipelines, they will own the “capture” layer of the industrial metaverse.
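The sketch below illustrates the lowest-common-denominator end of that conversion problem. It writes per-frame splat centers as a time-sampled `Points` prim in plain-text USDA, without depending on the OpenUSD Python bindings. This is an assumption-laden stand-in, not Emergent Vision's format. It discards covariance, opacity, and color, which a real exporter would have to carry through custom primvars or a dedicated Gaussian schema. The function name is hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def splat_centers_to_usda(frames: Dict[int, List[Vec3]], width: float = 0.01) -> str:
    """Write time-sampled splat centers as a Points prim in USDA text.

    Only centers are exported; per-splat covariance, opacity, and
    spherical-harmonic color are dropped in this sketch.
    """
    counts = {len(pts) for pts in frames.values()}
    if len(counts) != 1:
        raise ValueError("every frame must have the same number of splats")
    n = counts.pop()
    times = sorted(frames)

    def fmt(pts: List[Vec3]) -> str:
        return "[" + ", ".join(f"({x:g}, {y:g}, {z:g})" for x, y, z in pts) + "]"

    # One time sample per frame, keyed by the frame's time code.
    samples = "\n".join(f"        {t}: {fmt(frames[t])}," for t in times)
    widths = ", ".join(f"{width:g}" for _ in range(n))
    return (
        "#usda 1.0\n"
        "(\n"
        f"    startTimeCode = {times[0]}\n"
        f"    endTimeCode = {times[-1]}\n"
        ")\n\n"
        'def Points "Splats"\n'
        "{\n"
        "    point3f[] points.timeSamples = {\n"
        f"{samples}\n"
        "    }\n"
        f"    float[] widths = [{widths}]\n"
        "}\n"
    )


print(splat_centers_to_usda({0: [(0, 0, 0), (1, 0, 0)],
                             1: [(0, 0.5, 0), (1, 0.5, 0)]}))
```

Calling it with two frames of two splats each yields a single `Points` prim whose `points` attribute carries one time sample per frame. That is enough for USD-aware tools to scrub the motion, but not enough to reproduce the splat appearance.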

There is also the question of data ethics. High-speed volumetric capture is, by definition, an invasive level of surveillance. When you can reconstruct a person’s precise movements and geometry in 4D from a few angles, the potential for “deep-fake” physical avatars grows dramatically. We need to see volumetric streams protected with NIST-approved encryption (such as AES, specified in FIPS 197) before this hits the enterprise market.

The 30-Second Verdict

Emergent Vision has successfully bridged the gap between high-speed cinematography and AI-driven scene reconstruction. By solving the temporal blur problem at the hardware level, they’ve made 4D Gaussian Splatting a viable tool for real-time application rather than a laboratory experiment. However, the massive VRAM requirements and the lack of a universal 4D standard remain the primary hurdles to mass adoption.

The Path Forward: From Lab to Production

As this week’s beta rolls out, the immediate winners will be high-precision fields. Imagine a surgeon practicing a complex procedure on a 4D reconstruction of a patient’s actual beating heart, captured via high-speed imaging. Or an automotive engineer analyzing the millisecond-by-millisecond deformation of a chassis during a crash test in a fully navigable 3D environment.

The “geek-chic” allure of 4D splatting is undeniable, but the macro-market dynamic is about utility. We are moving away from “simulated” environments toward “captured” environments. The line between a video and a 3D model is blurring—literally. For developers, the move is clear: start experimenting with Gaussian Splatting repositories now, because the temporal dimension is the next frontier of the spatial web.