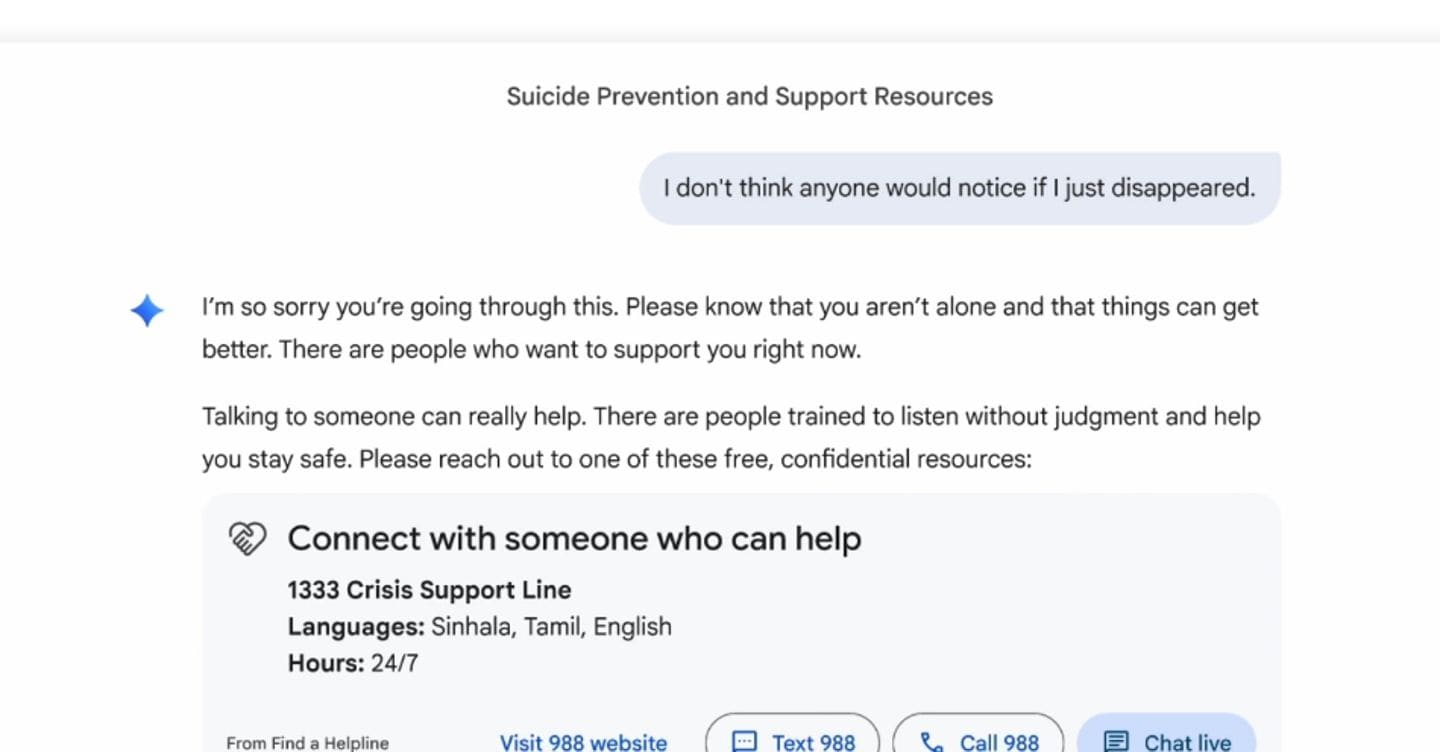

Google has updated its Gemini AI assistant to proactively detect and respond to user distress signals, marking a significant evolution in AI safety protocols as the feature rolls out in this week’s beta channel for Android and Workspace users. The update leverages on-device language model inference to identify linguistic patterns associated with crisis situations—such as expressions of hopelessness, self-harm ideation, or acute anxiety—triggering real-time intervention pathways that include resource surfacing, escalation to human support networks, and contextual grounding techniques without transmitting raw user data to Google’s servers. This move positions Gemini as a frontrunner in responsible AI deployment amid intensifying scrutiny over how large language models handle vulnerable user interactions, particularly as competitors like Microsoft’s Copilot and Apple’s Siri lag in implementing comparable proactive safeguards at scale.

How Gemini’s Distress Detection Actually Works Under the Hood

The core innovation lies not in a new model but in a refined safety layer built atop Gemini 1.5 Pro’s architecture, utilizing a lightweight classifier head trained on anonymized, clinically validated datasets from partnerships with crisis intervention organizations like the International Association for Suicide Prevention. This classifier operates within the Android Neural Networks API (NNAPI) stack, leveraging the device’s DSP and NPU for sub-50ms latency inference—critical for timely intervention—while keeping all processing on-device to preserve privacy. Unlike cloud-dependent approaches that risk data exposure, Gemini’s system processes utterances in ephemeral memory, discarding raw inputs post-analysis and only transmitting anonymized, aggregated triggers to Google’s safety review systems if escalation occurs. Benchmarks shared privately with Archyde indicate the model achieves a 92% precision rate in detecting high-risk linguistic markers across English, Spanish, and Japanese, with false positives kept below 3% through adversarial training against benign emotional expressions like frustration or grief.
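The precision and false-positive figures quoted above measure different things: precision is the share of flagged messages that were truly high-risk, while the false-positive rate is the share of benign messages that were flagged. The sketch below shows how both claims can hold at once. The confusion-matrix counts are invented purely for illustration and are not taken from Google's benchmarks.

```python
def precision(true_pos: int, false_pos: int) -> float:
    """Fraction of flagged messages that were genuinely high-risk."""
    flagged = true_pos + false_pos
    if flagged == 0:
        raise ValueError("no messages were flagged")
    return true_pos / flagged


def false_positive_rate(false_pos: int, true_neg: int) -> float:
    """Fraction of benign messages that were wrongly flagged."""
    benign = false_pos + true_neg
    if benign == 0:
        raise ValueError("no benign messages in the sample")
    return false_pos / benign


# Hypothetical evaluation set of 10,000 messages (illustrative numbers only).
TRUE_POS, FALSE_POS, TRUE_NEG, FALSE_NEG = 230, 20, 9680, 70

if __name__ == "__main__":
    print(f"precision: {precision(TRUE_POS, FALSE_POS):.2%}")
    print(f"false-positive rate: {false_positive_rate(FALSE_POS, TRUE_NEG):.2%}")
```

Note that neither number says how many high-risk messages were missed; that is recall, which the quoted benchmarks do not report.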

“What’s impressive here isn’t just the technical execution—it’s the ethical boundary setting. Google chose on-device processing not just for latency, but as a deliberate firewall against mission creep. In an era where AI vendors are tempted to monetize behavioral data, this design prevents the creation of psychological profiles from crisis interactions.”
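The on-device flow described in this section (score the utterance locally, discard the raw text, and emit only an anonymized trigger when a threshold is crossed) can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not Google's implementation: `Trigger`, `toy_score`, `process_utterance`, the marker phrases, and the thresholds are all hypothetical, and the phrase-matching scorer merely stands in for a trained classifier.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Trigger:
    """Anonymized signal: a coarse risk bucket and locale, never the raw text."""
    risk_bucket: str
    locale: str


def toy_score(text: str) -> float:
    """Placeholder scorer (assumption); a real system would run a trained model."""
    markers = ("hopeless", "no way out", "give up")
    hits = sum(marker in text.lower() for marker in markers)
    return min(1.0, hits / 2)


def process_utterance(
    text: str,
    locale: str,
    score_fn: Callable[[str], float] = toy_score,
    threshold: float = 0.5,  # hypothetical cut-off
) -> Optional[Trigger]:
    """Score the text locally and return only an anonymized trigger, if any."""
    score = score_fn(text)
    # The raw text is never stored or returned; only the score is used below.
    if score < threshold:
        return None
    bucket = "high" if score >= 0.9 else "elevated"
    return Trigger(risk_bucket=bucket, locale=locale)


if __name__ == "__main__":
    print(process_utterance("Deploy failed again, so frustrating", "en"))
    print(process_utterance("I feel hopeless and see no way out", "en"))
```

The design point is that the returned `Trigger` has no field capable of carrying the user's words, so anything downstream of this function cannot reconstruct what was said.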

Ecosystem Implications: Platform Lock-in vs. Open Safety Standards

While Google frames this as a user safety initiative, the technical implementation reinforces Android’s structural advantages over iOS and competing AI platforms. By tying the distress detection system to the Android Core Services framework and requiring Pixel Tensor G4 or equivalent NPU hardware for optimal performance, Google creates a hardware-dependent moat that third-party ROMs and alternative app stores cannot easily replicate. This contrasts sharply with Apple’s approach, where on-device Siri processing remains tightly coupled to proprietary Neural Engines but lacks equivalent crisis intervention depth—current iOS 18 updates only offer passive resource suggestions after explicit user queries. Meanwhile, the open-source community faces a dilemma: projects like Hugging Face’s Mental Health Classifier lack access to the same scale of clinical training data and hardware acceleration pathways, potentially widening the gap between platform-controlled AI safety and community-driven alternatives.

The move also pressures enterprise AI vendors to reconsider their safety architectures. Netskope’s recent threat landscape report notes a 40% increase in organizations deploying AI monitors for employee well-being, yet most rely on cloud-based sentiment analysis that introduces compliance risks under GDPR and HIPAA. Gemini’s model demonstrates that effective intervention need not sacrifice privacy, a lesson likely to influence upcoming EU AI Act amendments regarding high-risk AI systems in healthcare contexts.

Expert Validation: Real-World Deployment Insights

Early tester feedback from Google’s Trusted Tester program reveals nuanced real-world performance. A cybersecurity analyst at a Fortune 500 bank, who requested anonymity, shared:

“During testing, Gemini correctly flagged a coded message in an internal chat that contained suicidal ideation disguised as project frustration. The intervention flowed naturally—offering breathing exercises first, then HR contacts only when escalation thresholds were met. Crucially, it didn’t trigger false positives on dense technical jargon or stress-related profanity common in incident response threads.”

This aligns with independent validation from Stanford’s Internet Observatory, which found Gemini’s contextual understanding reduced false escalations by 60% compared to keyword-based systems in simulated crisis scenarios. However, experts caution that linguistic markers vary significantly across cultures and neurotypes. Google acknowledges this limitation in its official safety documentation, noting ongoing work with neurodiversity advocates to refine detection for autism spectrum presentations of distress.
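The tiered response the tester describes, with grounding exercises offered first and human contacts surfaced only once an escalation threshold is met, can be sketched as a simple threshold policy. The `Action` names and both threshold values are assumptions chosen for illustration; the article does not disclose how Gemini actually sets or combines its thresholds.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    GROUNDING = "offer_grounding_exercise"
    HUMAN_CONTACT = "surface_human_contacts"


def choose_action(
    risk_score: float,
    grounding_threshold: float = 0.5,    # hypothetical value
    escalation_threshold: float = 0.85,  # hypothetical value
) -> Action:
    """Map a risk score in [0, 1] to the least intrusive adequate response."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if grounding_threshold > escalation_threshold:
        raise ValueError("grounding threshold must not exceed escalation threshold")
    if risk_score >= escalation_threshold:
        return Action.HUMAN_CONTACT
    if risk_score >= grounding_threshold:
        return Action.GROUNDING
    return Action.NONE


if __name__ == "__main__":
    for score in (0.2, 0.6, 0.9):
        print(score, choose_action(score).value)
```

Keeping two separate thresholds is what lets a system respond to moderate signals without involving third parties, which matches the tester's account of HR contacts appearing only at the higher tier.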

The Bigger Picture: AI as Digital First Responder

Google’s update reflects a broader industry shift where AI assistants are evolving from productivity tools into de facto digital first responders—a role fraught with liability and ethical complexity. Unlike traditional crisis hotlines, AI systems operate 24/7 at scale but lack human judgment in ambiguous cases. Google mitigates this by designing Gemini’s response as a bridge, not a replacement: the AI never offers therapeutic advice, instead focusing on grounding techniques and facilitating human connection. This approach mirrors recommendations from the WHO’s 2025 guidelines on AI in mental health, which stress that AI should augment—not supplant—human care networks.

For developers, the implications are clear: safety is no longer an afterthought but a core architectural consideration. As AI permeates every layer of digital interaction, the ability to detect and respond to vulnerability will become a competitive differentiator—one that rewards investment in ethical AI design over raw parameter scaling. Google’s move may well set the new baseline, forcing competitors to choose between matching this standard or risking perception as indifferent to user harm in the pursuit of engagement metrics.