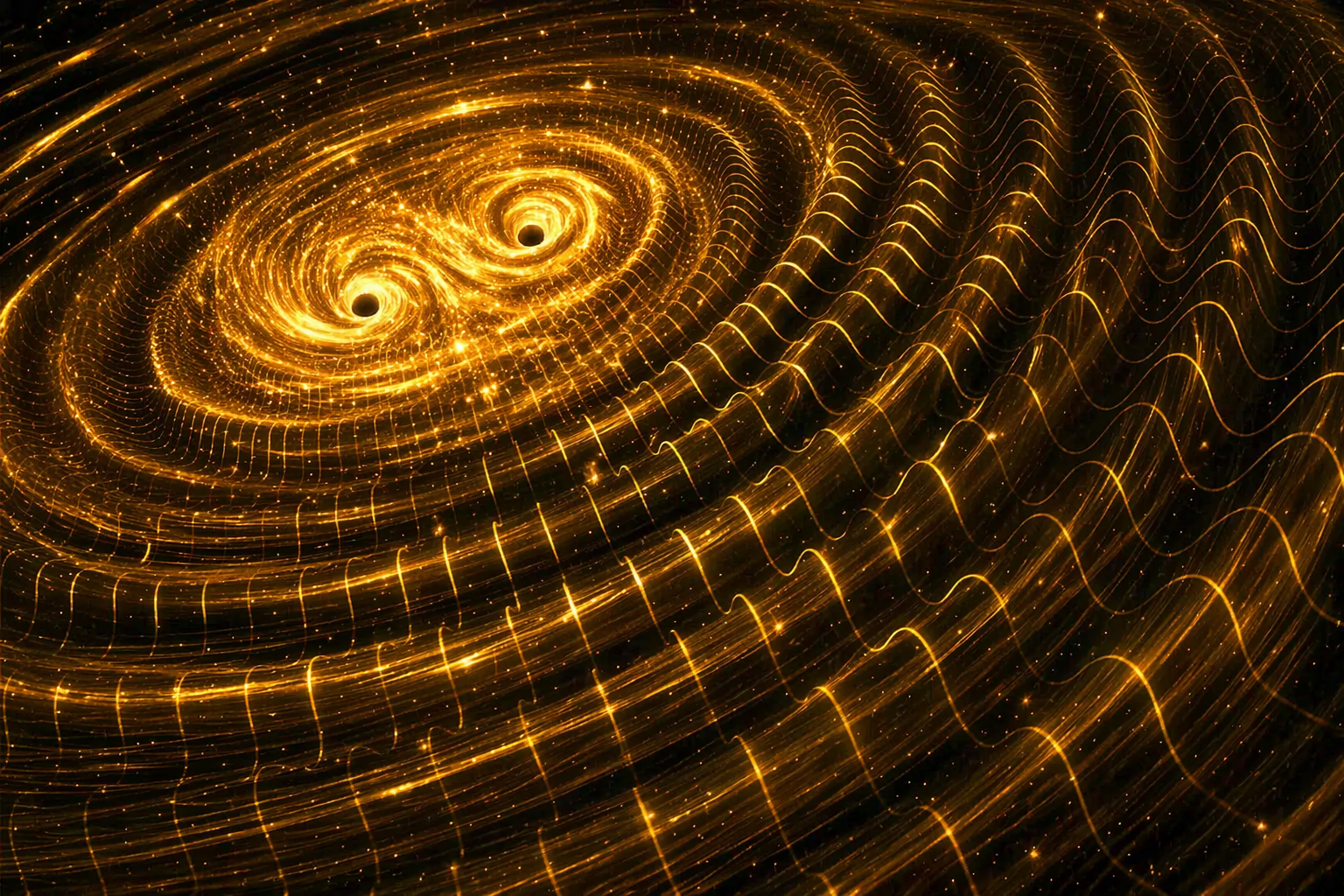

Novel astrophysical models suggest gravitational waves constitute dark matter, reshaping cosmological standards. This discovery relies on high-performance computing clusters to validate signal integrity against noise. For the tech sector, it demands unprecedented AI-driven data verification and secure HPC architectures to prevent simulation spoofing.

The implication is not merely cosmological; it is computational. Validating these waveforms requires exascale processing power that pushes the limits of current IEEE standards for scientific computing. As we move through Q2 2026, the intersection of astrophysics and enterprise security has never been tighter. The data streams involved are massive, vulnerable to injection attacks, and require the kind of strategic patience usually reserved for elite cybersecurity operations.

The Compute Cost of Cosmological Verification

Understanding dark matter through gravitational waves is not a passive observation task. It is an active data engineering challenge. The signal-to-noise ratio in these detections is extremely low, requiring neural processing units (NPUs) optimized for temporal sequence analysis rather than standard image recognition. Traditional GPU clusters are hitting thermal throttling limits when processing continuous-wave data for quarters at a time.
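To make the signal-to-noise point concrete: the classic way to pull a known waveform shape out of noise is matched filtering, which correlates a template against the data and reads each correlation as an SNR. The sketch below is a toy illustration under stated assumptions, not a detection pipeline. The chirp template, the white Gaussian noise model, the sample counts, and the injected amplitude are all hypothetical, and it runs in pure Python on a CPU rather than on specialized hardware.

```python
import math
import random


def chirp_template(n: int, f0: float = 0.02, f1: float = 0.2) -> list[float]:
    """Toy 'chirp' whose frequency sweeps linearly from f0 to f1 (cycles per
    sample), tapered by a Hann window and scaled to unit energy."""
    raw = []
    for i in range(n):
        t = i / n
        # Linear-chirp phase: instantaneous frequency rises from f0 to f1 as t goes 0 -> 1
        phase = 2 * math.pi * n * (f0 * t + 0.5 * (f1 - f0) * t * t)
        window = 0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1))
        raw.append(window * math.sin(phase))
    norm = math.sqrt(sum(x * x for x in raw))
    return [x / norm for x in raw]


def matched_filter_snr(data: list[float], template: list[float], sigma: float) -> list[float]:
    """Correlate the unit-energy template against every offset in the data.
    For white Gaussian noise with standard deviation sigma, pure-noise outputs
    have unit variance, so each value reads directly as an SNR."""
    m = len(template)
    return [
        sum(d * h for d, h in zip(data[k:k + m], template)) / sigma
        for k in range(len(data) - m + 1)
    ]


# Hypothetical demo: bury a scaled template in white noise, then recover it.
rng = random.Random(42)
sigma = 1.0
template = chirp_template(128)
data = [rng.gauss(0.0, sigma) for _ in range(1024)]
true_offset, amplitude = 400, 15.0  # amplitude / sigma sets the expected peak SNR
for i, h in enumerate(template):
    data[true_offset + i] += amplitude * h

snr = matched_filter_snr(data, template, sigma)
peak = max(range(len(snr)), key=snr.__getitem__)
print(f"peak at offset {peak}, SNR ~ {snr[peak]:.1f}")
```

Each output projects the data onto a unit-energy template, so a buried signal of amplitude A shows up near A/σ even though no individual sample stands out from the noise. Production searches typically correlate against large template banks in the frequency domain, and that repetition is where the compute bill comes from.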

Those thermal limits are where the hardware landscape shifts. We are seeing a migration toward specialized architectures capable of sustained floating-point operations without degradation. Energy efficiency, measured as sustained FLOPS per watt, becomes the critical metric, not just raw speed. If the industry cannot sustain the power load required to simulate these waveforms, the theory remains unverified vaporware. We need shipping silicon, not roadmaps.
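The efficiency-versus-speed trade-off is simple arithmetic: energy is power multiplied by runtime. The sketch below compares two hypothetical clusters on a fixed workload. The cluster names, throughput and power figures, and the 1e24-operation campaign are invented for illustration, and `Cluster` is not any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    name: str
    sustained_tflops: float  # sustained (not peak) throughput in TFLOP/s
    power_kw: float          # average power draw under that load, in kW

    def gflops_per_watt(self) -> float:
        # (TFLOP/s * 1e3) GFLOP/s divided by (kW * 1e3) W: the 1e3 factors cancel
        return self.sustained_tflops / self.power_kw

    def runtime_hours(self, workload_flop: float) -> float:
        return workload_flop / (self.sustained_tflops * 1e12) / 3600.0

    def energy_kwh(self, workload_flop: float) -> float:
        # Energy = power x time
        return self.power_kw * self.runtime_hours(workload_flop)


# Hypothetical clusters and workload, chosen only to illustrate the trade-off
fast = Cluster("fast", sustained_tflops=1_000_000, power_kw=25_000)
efficient = Cluster("efficient", sustained_tflops=600_000, power_kw=10_000)
workload = 1e24  # total floating-point operations for one simulation campaign

for c in (fast, efficient):
    print(f"{c.name}: {c.gflops_per_watt():.0f} GFLOPS/W, "
          f"{c.runtime_hours(workload):.0f} h, {c.energy_kwh(workload):,.0f} kWh")
```

With these made-up numbers the faster cluster finishes sooner, but the more efficient one completes the same campaign with about a third less energy. That is the sense in which FLOPS per watt, rather than peak speed, decides whether a simulation campaign is sustainable.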

Infrastructure Requirements for Waveform Analysis

- Latency: Sub-millisecond processing required for real-time noise cancellation.

- Encryption: End-to-end encryption for data in transit between observatories and processing centers.

- Storage: Immutable ledger systems to prevent historical data tampering.
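For the storage requirement, the core property of an immutable ledger can be shown with a hash chain: each record stores the hash of its predecessor, so rewriting history means recomputing every later hash. This minimal sketch uses only Python's `hashlib` and `json`. The record fields (`segment`, `site`, `data_sha256`) and the helper names are hypothetical, and it omits the replication and consensus a real ledger system would add.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical payloads hash identically
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(chain: list[dict], payload: dict) -> None:
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev": prev, "hash": record_hash(prev, payload)})


def verify(chain: list[dict]) -> bool:
    """Recompute every link. Edits, reorderings, or removals in the middle break
    the chain; truncating the end does not, unless the latest hash is anchored elsewhere."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != record_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


# Hypothetical records: one entry per archived data segment
ledger: list[dict] = []
for seg in (1, 2):
    digest = hashlib.sha256(f"segment-{seg}".encode()).hexdigest()
    append(ledger, {"segment": seg, "site": "observatory-A", "data_sha256": digest})
print(verify(ledger))  # True

ledger[0]["payload"]["site"] = "observatory-B"  # simulated tampering
print(verify(ledger))  # False
```

A hash chain is tamper-evident, not tamper-proof: an attacker who can rewrite the entire chain can recompute every hash. Publishing or countersigning the latest hash somewhere outside the attacker's reach is what closes that gap.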

The security implications are profound. If gravitational waves are indeed dark matter, the data becomes a foundational asset for global physics models. Tampering with this dataset could skew economic models reliant on commodity forecasting or even navigation systems dependent on precise celestial mapping. This elevates astrophysical data to the status of critical national infrastructure.

AI Model Integrity and the Security Perimeter

Machine learning models trained on this data must be robust against adversarial examples. In the cybersecurity domain, we worry about prompt injection or data poisoning. In astrophysics, the stakes are universal constants. A corrupted training set could lead to incorrect mass calculations for galactic structures. The open-source community is already debating the verification protocols for these models.
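One crude line of defense against poisoned training data is a robust outlier screen run before training. The sketch below swaps in a median absolute deviation (MAD) test; it is an illustrative assumption, not a verification protocol the open-source community has settled on, and the threshold `k=5.0` and the sample mass values are hypothetical.

```python
import statistics


def robust_outliers(values: list[float], k: float = 5.0) -> list[int]:
    """Indices of values more than k robust standard deviations from the median.

    The median and MAD barely move when a handful of points are poisoned,
    unlike the mean and standard deviation, which outliers themselves inflate.
    """
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        # At least half the values equal the median; flag anything that differs
        return [i for i, v in enumerate(values) if v != med]
    scale = 1.4826 * mad  # makes MAD comparable to a Gaussian standard deviation
    return [i for i, v in enumerate(values) if abs(v - med) / scale > k]


# Hypothetical training feature (inferred masses, arbitrary units) with one injected point
masses = [10.0, 10.2, 9.9, 10.1, 9.8, 10.0, 55.0]
print(robust_outliers(masses))  # [6]
```

This only catches points that are wildly out of distribution. A careful attacker poisons with plausible-looking values, which is why a screen like this complements, rather than replaces, provenance checks on where the data came from.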

We are witnessing a convergence of roles, and the job market reflects this shift. Positions such as “Distinguished Technologist, HPC & AI Security Architect” are no longer niche; they are central to validating scientific breakthroughs. Companies like Hewlett Packard Enterprise are actively hiring for remote roles that bridge high-performance computing with security architecture, signaling that the industry recognizes the risk profile of this data.

“The integration of AI into scientific discovery requires a security-first mindset. We cannot treat experimental data as immutable truth without verifying the integrity of the pipeline that delivered it.” — Industry Consensus on AI for Science, 2025

This quote underscores the necessity of treating scientific pipelines with the same rigor as financial transaction ledgers. The “Elite Hacker” persona often discussed in security circles applies here. Strategic patience is required to monitor these data streams for anomalies that might indicate either natural phenomena or artificial interference.
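Monitoring a stream with strategic patience can be sketched as a detector that first watches quietly to learn a baseline, then flags samples that stray too far from it. This assumes a single numeric stream with roughly stationary noise. The class name, the 100-sample warm-up, and the 6-sigma threshold are hypothetical choices, and Welford's online mean/variance update is a swapped-in standard technique, not something the source specifies.

```python
import math
import random


class StreamingAnomalyDetector:
    """Flags samples that sit far from a running baseline.

    The baseline mean and variance use Welford's online algorithm, so memory
    stays constant however long the stream runs.
    """

    def __init__(self, warmup: int = 100, threshold: float = 6.0):
        if warmup < 2:
            raise ValueError("warmup must be at least 2 samples")
        self.warmup = warmup        # samples observed before any alert can fire
        self.threshold = threshold  # alert distance, in baseline standard deviations
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations from the mean

    def update(self, x: float) -> bool:
        flagged = False
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))
            flagged = std > 0 and abs(x - self.mean) / std > self.threshold
        if not flagged:
            # Only unflagged samples update the baseline, so a burst of injected
            # values cannot quietly drag the statistics toward itself
            self.n += 1
            delta = x - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (x - self.mean)
        return flagged


# Hypothetical demo: unit-variance noise followed by one large spike
rng = random.Random(7)
detector = StreamingAnomalyDetector()
noise_alerts = sum(detector.update(rng.gauss(0.0, 1.0)) for _ in range(1000))
spike_alert = detector.update(50.0)
print(noise_alerts, spike_alert)
```

Because flagged samples never enter the baseline, a genuine and lasting change, natural or artificial, keeps alerting until an analyst reviews it and resets the detector. That is deliberate: the detector only decides that something is unusual, and a human decides what it is.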

Enterprise Mitigation and Data Sovereignty

For enterprise IT leaders, the lesson is clear: data sovereignty extends beyond customer records. It encompasses any data used to train foundational models. If your organization relies on cosmological data for timing synchronization or navigation, you must audit the source. The supply chain for data is as vulnerable as the supply chain for chips.
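Auditing the source of a dataset can start with a signed manifest: the provider publishes a digest for every file plus a signature over the list, and the consumer checks both before anything reaches a model. This sketch uses HMAC-SHA256 from the Python standard library, which assumes a shared secret between provider and consumer. The file names, key, and helper functions are hypothetical, and production supply chains more commonly use asymmetric signatures so that auditors never hold the signing key.

```python
import hashlib
import hmac


def file_digest(blob: bytes) -> str:
    return hashlib.sha256(blob).hexdigest()


def sign_manifest(key: bytes, manifest: dict[str, str]) -> str:
    # Serialize entries in a fixed order so signer and auditor MAC the same bytes
    body = "\n".join(f"{name}:{digest}" for name, digest in sorted(manifest.items()))
    return hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()


def audit(key: bytes, manifest: dict[str, str], signature: str,
          files: dict[str, bytes]) -> list[str]:
    """Return a list of problems; an empty list means the delivery matches the manifest."""
    if not hmac.compare_digest(sign_manifest(key, manifest), signature):
        return ["manifest signature mismatch"]
    problems = []
    for name, digest in sorted(manifest.items()):
        if name not in files:
            problems.append(f"missing: {name}")
        elif not hmac.compare_digest(file_digest(files[name]), digest):
            problems.append(f"digest mismatch: {name}")
    for name in sorted(files.keys() - manifest.keys()):
        problems.append(f"unlisted: {name}")
    return problems


# Hypothetical delivery from an upstream data provider
key = b"hypothetical-shared-secret"
files = {"segment-0001.dat": b"\x00\x01\x02", "segment-0002.dat": b"\x03\x04"}
manifest = {name: file_digest(blob) for name, blob in files.items()}
signature = sign_manifest(key, manifest)

print(audit(key, manifest, signature, files))  # []
tampered = {**files, "segment-0002.dat": b"\x03\x05"}
print(audit(key, manifest, signature, tampered))  # ['digest mismatch: segment-0002.dat']
```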

We are seeing a rise in zero-trust architectures applied to scientific research networks. Access controls are tightening around observatory data lakes. NASA and partner institutions are implementing stricter clearance types, similar to the Secret clearance required for certain cybersecurity subject matter experts in the defense sector. This is not bureaucracy; it is necessity.
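The core zero-trust rule is to deny by default and re-check every request, rather than trusting anything simply because it is already inside the network. The sketch below encodes that rule for a hypothetical observatory data lake. The `Clearance` tiers are made-up labels, not real government classification levels, and the dataset names, users, and grants are invented for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Clearance(IntEnum):
    # Hypothetical tiers; IntEnum makes them comparable with < and >=
    PUBLIC = 0
    CONTROLLED = 1
    RESTRICTED = 2


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_verified: bool      # identity freshly verified for this session
    device_compliant: bool  # device posture check passed
    clearance: Clearance
    dataset: str


# Hypothetical classification of data-lake datasets; anything unlisted is denied
DATASET_LEVEL = {
    "public-alerts": Clearance.PUBLIC,
    "calibrated-strain": Clearance.CONTROLLED,
    "raw-strain": Clearance.RESTRICTED,
}
# Explicit per-user grants for non-public datasets
GRANTS = {("alice", "raw-strain"), ("bob", "calibrated-strain")}


def authorize(req: AccessRequest) -> bool:
    level = DATASET_LEVEL.get(req.dataset)
    if level is None:
        return False  # unknown dataset: deny by default
    if not (req.mfa_verified and req.device_compliant):
        return False  # identity and device are re-checked on every request
    if req.clearance < level:
        return False  # clearance must meet the dataset's classification
    # Clearance alone is not enough for non-public data: an explicit grant is also required
    return level == Clearance.PUBLIC or (req.user, req.dataset) in GRANTS


print(authorize(AccessRequest("alice", True, True, Clearance.RESTRICTED, "raw-strain")))  # True
print(authorize(AccessRequest("bob", True, True, Clearance.RESTRICTED, "raw-strain")))    # False
```

Every condition must pass on every request, and an unknown dataset or missing grant fails closed. A real deployment would pull identity, device posture, and grants from dedicated services rather than hard-coded tables.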

The 30-Second Verdict

Gravitational waves as dark matter is a massive scientific claim. For the tech sector, it is a massive compute claim. Verify the hardware. Secure the pipeline. Audit the model. Do not trust the roadmap.

The broader ecosystem is watching. Open-source communities are demanding transparency in the algorithms used to filter noise from signal. Proprietary locks on these algorithms would create a platform lock-in scenario where only specific cloud providers could validate reality. This is unacceptable. The code used to detect dark matter must be open for peer review, much like the GitHub repositories used for critical security tools.

Future-Proofing the Scientific Stack

As we progress through 2026, expect to see more collaborations between cybersecurity firms and research institutions. The skills required to protect a bank are increasingly similar to those required to protect a particle accelerator. Network segmentation, anomaly detection, and identity management are universal.

The demand for Cybersecurity Subject Matter Experts with knowledge of scientific workflows will spike. These professionals must understand both the exploit mechanism of a network intrusion and the statistical variance of a gravitational wave signal. The overlap is where the value lies.

This discovery forces us to confront the fragility of our digital understanding of the physical world. If the data is compromised, our map of the universe is wrong. In the AI era, ensuring the integrity of that map is the ultimate security challenge. We must build systems that are not only fast but trustworthy. The universe is watching, and so are the hackers.