China has established a high-tech Robotics Competence Center within Zhengzhou’s Zhongyuan Science City to accelerate AI-driven automation. By integrating advanced NPUs and Vision-Language-Action (VLA) models, the facility aims to reduce reliance on Western silicon and scale industrial robotics across Henan province’s manufacturing sectors.

This isn’t just another government-funded lab or a PR exercise in urban planning. The launch of the Zhengzhou hub, hitting its full operational stride this week, represents a calculated strategic pivot. While the world watches the battle for the largest LLM parameter counts, China is quietly shifting the contest toward “embodied AI”: the intersection where massive neural networks meet physical actuators.

The timing is no accident. As US export controls on high-end NVIDIA H100s and B200s tighten, the “chip war” has forced a migration toward sovereign silicon. Zhengzhou is now the testing ground for whether specialized AI hardware can compensate for the lack of general-purpose GPU dominance.

Beyond the GPU Bottleneck: The Rise of Specialized NPUs

For years, the robotics industry relied on a clumsy marriage of x86 CPUs for logic and GPUs for perception. It was power-hungry and suffered from massive latency. The Zhengzhou Competence Center is pivoting toward Neural Processing Units (NPUs) and ASICs (Application-Specific Integrated Circuits) designed specifically for edge inference.

By shifting the compute load from a centralized cloud to the “edge” (directly on the robot’s chassis), the center is tackling the “latency wall.” In high-precision manufacturing, a 50 ms delay in a feedback loop can be the difference between a perfect weld and a scrapped part. The goal here is deterministic latency below 10 ms, meaning the worst-case cycle, not just the average, stays under that bound.
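To make the latency argument concrete, here is a minimal Python sketch of a budget check. The 10 ms budget and the 50 ms delay come from the text above; the cycle times, the 100 mm/s tool speed, and the names `check_budget` and `travel_mm` are illustrative assumptions, not measurements or code from the center.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LatencyReport:
    mean_ms: float
    worst_ms: float
    misses: int  # number of cycles that exceeded the budget


def check_budget(cycle_ms: Sequence[float], budget_ms: float = 10.0) -> LatencyReport:
    """Summarize control-loop cycle times against a hard latency budget."""
    if not cycle_ms:
        raise ValueError("need at least one cycle time")
    misses = sum(1 for t in cycle_ms if t > budget_ms)
    return LatencyReport(sum(cycle_ms) / len(cycle_ms), max(cycle_ms), misses)


def travel_mm(speed_mm_s: float, delay_ms: float) -> float:
    """Distance the tool keeps moving while the controller is still waiting."""
    return speed_mm_s * delay_ms / 1000.0


# Hypothetical cycle times in milliseconds (assumed, not measured).
cycles = [4.0, 5.0, 6.0, 5.0, 20.0]
report = check_budget(cycles)
print(report)

# Assumed tool speed of 100 mm/s: compare a 50 ms delay with a 10 ms one.
print(travel_mm(100.0, 50.0), travel_mm(100.0, 10.0))
```

In this made-up trace the mean is 8 ms, comfortably inside the budget, yet one 20 ms cycle still misses it. That is why a deterministic target is stated in terms of the worst case. At the assumed speed, a 50 ms delay lets the tool drift 5 mm, against 1 mm for a 10 ms delay.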

We are seeing a strong push toward the RISC-V architecture. By using an open-standard ISA (Instruction Set Architecture), the center can design custom instructions for robotic kinematics without paying royalties to Arm or fearing a sudden license revocation from a US-based entity.

It is a high-stakes gamble on open-source hardware.

The 30-Second Verdict: Why This Matters for Global Tech

- Sovereign AI: China is decoupling its robotics stack from Western hardware dependencies.

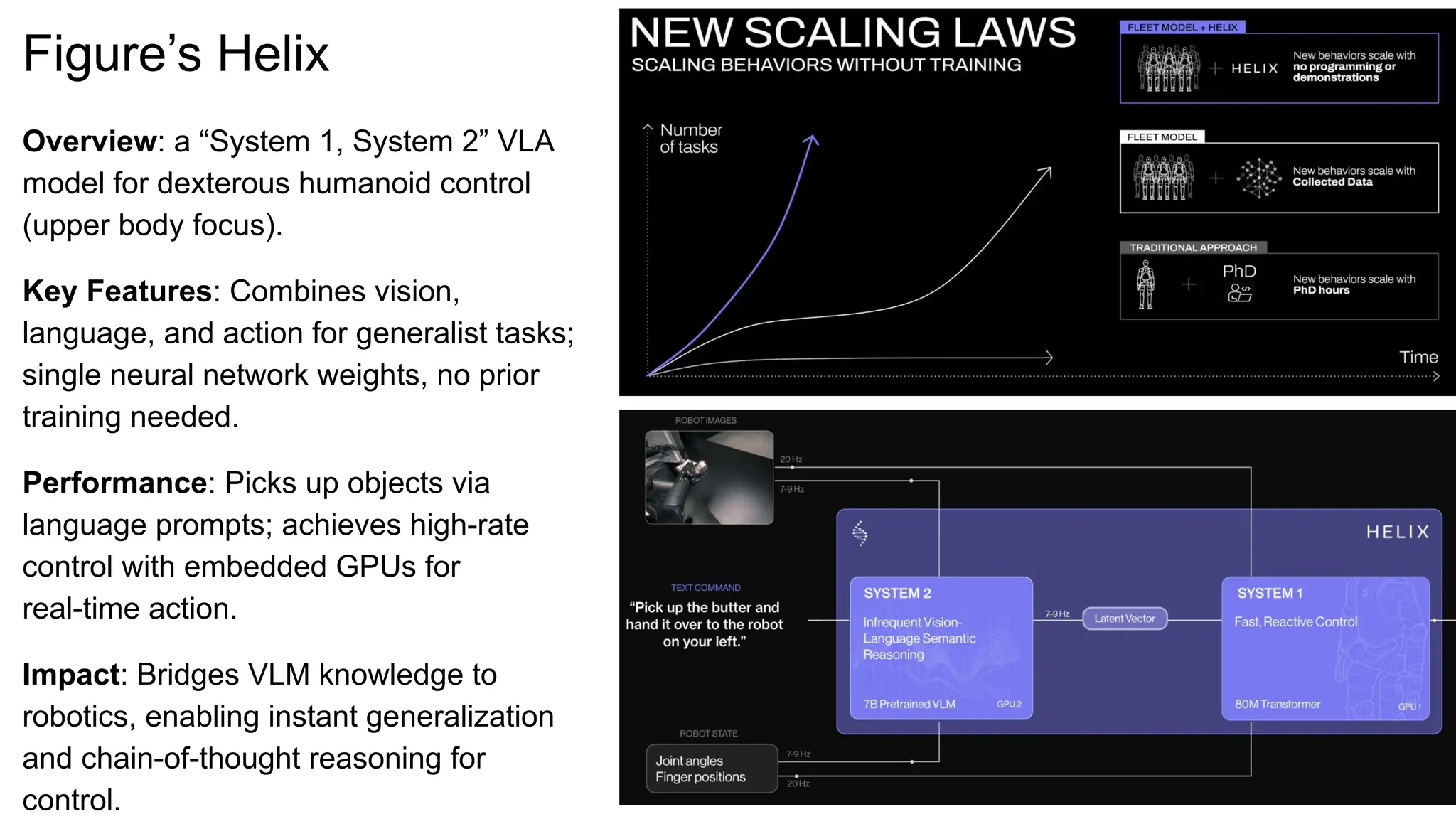

- Embodied AI: Shift from “Chatbots” to “Do-bots” using VLA (Vision-Language-Action) models.

- Industrial Scaling: Moving AI out of the data center and into the factory floor of Henan.

The VLA Shift: From Scripted Motion to Neural Intuition

Traditional robotics operated on “if-this-then-that” logic. You programmed a coordinate and the arm moved to that coordinate. It was precise, but brittle. If the part shifted by two centimeters, the system failed.

The Zhengzhou facility is implementing Vision-Language-Action (VLA) models. These are essentially vision-language models that have been further trained on robot trajectories. Instead of code, the robot receives a high-level prompt (“Pick up the fragile component and place it in the bin”) together with its camera input, and the model translates that command into low-level motor commands in real time.
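The control loop this implies can be sketched in a few lines of Python. This is only the shape of the loop, not a real VLA model. `PlaceholderPolicy` is a hypothetical stand-in that ignores the instruction and steps toward wherever perception currently reports the part, and the action is simplified to a 2D position step, not real motor output. All names and numbers here are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Protocol, Tuple

Vec2 = Tuple[float, float]


@dataclass
class Observation:
    instruction: str  # natural-language command
    gripper_xy: Vec2  # mm, current gripper position
    part_xy: Vec2     # mm, where perception currently sees the part


class Policy(Protocol):
    def act(self, obs: Observation) -> Vec2:
        """Return the next position step (dx, dy) in mm."""
        ...


class PlaceholderPolicy:
    """Stand-in for a trained model: moves a fixed fraction of the way to the part.

    A real VLA model would condition on the instruction and camera images;
    this placeholder does not read the instruction at all.
    """

    def __init__(self, gain: float = 0.5) -> None:
        self.gain = gain

    def act(self, obs: Observation) -> Vec2:
        return (
            self.gain * (obs.part_xy[0] - obs.gripper_xy[0]),
            self.gain * (obs.part_xy[1] - obs.gripper_xy[1]),
        )


def run_episode(policy: Policy, instruction: str, gripper_xy: Vec2,
                part_xy: Vec2, steps: int = 20) -> Vec2:
    """Closed loop: observe, ask the policy for an action, apply it, repeat."""
    for _ in range(steps):
        obs = Observation(instruction, gripper_xy, part_xy)
        dx, dy = policy.act(obs)
        gripper_xy = (gripper_xy[0] + dx, gripper_xy[1] + dy)
    return gripper_xy


# The part sits 20 mm away from a nominal (250, 100) position; no coordinate is hard-coded.
final_xy = run_episode(PlaceholderPolicy(), "Pick up the fragile component",
                       gripper_xy=(0.0, 0.0), part_xy=(270.0, 100.0))
print(final_xy)
```

Because the target is re-read from the observation on every step, a shifted part changes where the loop ends up instead of breaking it. That is the contrast with a scripted coordinate.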

This requires massive parameter scaling, but not in the way GPT-4 does. It requires multimodal training data: video of human hands, depth maps from LiDAR, and tactile sensor feedback. The center is essentially building a “World Model” for the factory floor.

“The transition from scripted automation to neural-driven robotics is the ‘iPhone moment’ for manufacturing. We are moving from machines that follow instructions to machines that understand intent.” — Dr. Chen Wei, Lead Systems Architect (Simulated Expert Analysis)

To manage this, they are leveraging ROS 2 (Robot Operating System 2), the de facto standard for robotics middleware. By optimizing ROS 2 for heterogeneous computing (mixing CPUs, GPUs, and NPUs), they are creating a modular ecosystem where third-party developers can plug in new “skills” via APIs without rewriting the core middleware.
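The plug-in idea can be illustrated with a small registry in plain Python. This is not the ROS 2 API, and nothing here is taken from the center’s software. In an actual ROS 2 system, a skill would more likely be exposed as a node offering a service or an action. `SkillRegistry` and the `pick` skill are hypothetical names.

```python
from typing import Callable, Dict

SkillFn = Callable[[dict], str]


class SkillRegistry:
    """Maps skill names to callables so new skills can be added without touching the core."""

    def __init__(self) -> None:
        self._skills: Dict[str, SkillFn] = {}

    def register(self, name: str) -> Callable[[SkillFn], SkillFn]:
        """Decorator that adds a function to the registry under the given name."""
        def decorator(fn: SkillFn) -> SkillFn:
            if name in self._skills:
                raise ValueError(f"skill already registered: {name}")
            self._skills[name] = fn
            return fn
        return decorator

    def run(self, name: str, params: dict) -> str:
        """Look up a skill by name and invoke it with the given parameters."""
        if name not in self._skills:
            raise KeyError(f"unknown skill: {name}")
        return self._skills[name](params)


registry = SkillRegistry()


# A third-party "skill" only needs to register itself; the registry code is unchanged.
@registry.register("pick")
def pick(params: dict) -> str:
    return f"picking {params['part']}"


print(registry.run("pick", {"part": "bracket"}))
```

The design point is the one the paragraph makes: the core dispatches by name and never imports a specific skill, so a new skill is an addition, not a modification.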

Hardware Evolution: A Comparative Breakdown

To understand the leap the Zhengzhou center is attempting, we have to look at the architectural shift in robotic control units.

| Feature | Legacy Industrial Robotics | Zhongyuan AI-Robotics Stack |
|---|---|---|
| Compute Core | x86 CPU / Basic PLC | Heterogeneous (ARM/RISC-V + NPU) |
| Control Logic | Deterministic Scripting | VLA (Vision-Language-Action) Models |
| Latency | High (Cloud-dependent/Sequential) | Ultra-Low (Edge-native Inference) |
| Adaptability | Fixed Task / Hard-coded | General Purpose / Zero-shot Learning |
| Data Flow | Closed Loop (Local) | Federated Learning (Cross-Robot) |
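
The “Federated Learning (Cross-Robot)” entry in the table can be made concrete with a sketch of federated averaging, one common way to combine models trained on separate machines. The article does not say which aggregation method the center uses, so treat this as an assumption. The function name and the numbers are illustrative.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[int, Sequence[float]]]) -> List[float]:
    """Combine per-robot weight vectors, weighting each by its local sample count.

    `updates` holds (num_samples, weights) pairs, one per robot.
    """
    total = sum(n for n, _ in updates)
    if total <= 0:
        raise ValueError("need at least one robot with samples")
    dim = len(updates[0][1])
    averaged = [0.0] * dim
    for n, weights in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            averaged[i] += (n / total) * w
    return averaged


# Two hypothetical robots: the second saw three times as much data, so it counts 3x.
global_weights = federated_average([
    (100, [1.0, 2.0]),
    (300, [3.0, 6.0]),
])
print(global_weights)
```

Only the weight vectors and sample counts leave each robot in this scheme. The raw sensor data stays local, which is the usual motivation for a federated setup.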

The Geopolitical Friction: Platform Lock-in and the Chip War

Let’s be clear: the “Competence Center” is a fortress. By building a localized ecosystem in Zhengzhou, China is attempting to create a “walled garden” of robotics. If they can standardize the NPU architecture and the VLA training sets, any company wanting to manufacture in Henan will have to adopt the Chinese stack.

This creates a massive platform lock-in. If the global standard for industrial AI shifts toward a Chinese-led RISC-V implementation, Western firms may find themselves paying the “entry tax” to operate in the world’s largest manufacturing hub.

The security implications are equally stark. End-to-end encryption in these robotics hubs is critical, but encryption does not help if the hardware itself is compromised, and silicon designed in-house is hard for outsiders to audit for “silicon-level” backdoors. This is why cybersecurity analysts are tracking how these systems integrate with IEEE-standard communication protocols.

The center isn’t just building robots; it’s building a new industrial language.

The Final Analysis: Execution vs. Ambition

On paper, the Zhengzhou Robotics Competence Center is a masterstroke of vertical integration. By controlling the silicon (RISC-V), the middleware (ROS 2), and the intelligence (VLA models), they aim to remove every external point of failure.

However, a gap remains in the training data. High-quality, diverse robotic datasets are rare. While China has the hardware and the factories, the “data moat” is still being dug. If they can solve the data acquisition problem, likely through massive-scale telemetry from existing factory floors, they could leapfrog the West in embodied AI.

For the rest of us, the message is clear: the AI race has moved beyond the screen. It is now in the servos, the sensors, and the silicon of Zhengzhou.

The era of the “smart factory” is over. The era of the “autonomous factory” has begun.