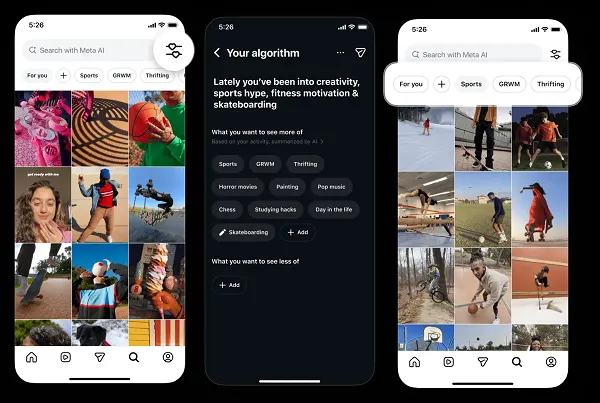

Instagram is rolling out enhanced feed customization tools to a broader user base this week. The tools let users fine-tune algorithmic recommendations by explicitly marking content as “Interested” or “Not Interested” across Feed, Stories, and Explore. The move is driven by growing user demand for transparency and reduced exposure to unwanted content, according to internal testing data shared with developers.

How the New Controls Actually Perform Under the Hood

The update builds on Instagram’s existing “Why am I seeing this?” feature but introduces a more granular feedback loop powered by a lightweight on-device ranking model that adjusts in real time based on explicit user signals. Unlike passive engagement metrics like dwell time or scroll speed, these new controls feed directly into a hybrid ranking system that combines collaborative filtering with a user-specific penalty vector derived from negative feedback. Engineers at Meta confirmed the system uses a modified version of the Multi-Task Learning (MTL) framework first deployed in Reels ranking, now adapted for Feed and Explore with latency under 200ms per update. The model runs partially on-device using Qualcomm’s Hexagon NPU in supported Android devices, reducing reliance on server-side inference and preserving privacy by keeping raw interaction signals local unless aggregated in differential privacy batches.
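To make the idea of a base score adjusted by a user-specific penalty vector concrete, here is a minimal sketch. It is not Meta’s implementation: the class name `PenaltyRanker`, the per-topic penalty, the `learning_rate` value, and the candidate tuple layout are all hypothetical, and `base_score` simply stands in for whatever the collaborative-filtering stage would produce.

```python
from dataclasses import dataclass, field


@dataclass
class PenaltyRanker:
    """Toy re-ranker: base score minus a per-user, per-topic penalty (illustrative only)."""

    learning_rate: float = 0.5  # hypothetical step applied per explicit signal
    penalties: dict = field(default_factory=dict)  # topic -> accumulated penalty

    def record_feedback(self, topics, interested):
        # "Not Interested" raises the penalty for each topic on the post;
        # "Interested" lowers it, and a negative penalty acts as a boost.
        delta = -self.learning_rate if interested else self.learning_rate
        for topic in topics:
            self.penalties[topic] = self.penalties.get(topic, 0.0) + delta

    def score(self, base_score, topics):
        # base_score is assumed to come from an upstream collaborative-filtering model.
        return base_score - sum(self.penalties.get(t, 0.0) for t in topics)

    def rank(self, candidates):
        # candidates: iterable of (post_id, base_score, topics) tuples.
        return sorted(candidates, key=lambda c: self.score(c[1], c[2]), reverse=True)


ranker = PenaltyRanker()
candidates = [
    ("a", 0.9, ["crypto"]),
    ("b", 0.7, ["cooking"]),
    ("c", 0.6, ["travel"]),
]
print([c[0] for c in ranker.rank(candidates)])  # ['a', 'b', 'c']

# One explicit "Not Interested" on a crypto post takes effect on the next ranking pass.
ranker.record_feedback(["crypto"], interested=False)
print([c[0] for c in ranker.rank(candidates)])  # ['b', 'c', 'a']
```

Because the penalty is applied at scoring time rather than through retraining, a single explicit signal changes the next ranking pass immediately, which is the property the article attributes to the new feedback loop.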

This architectural shift marks a departure from the monolithic, cloud-heavy recommendation pipelines that have long dominated Meta’s infrastructure. By offloading personalization logic to the edge, Instagram aims to reduce feedback latency from hours to seconds—critical for reversing the perception of algorithmic opacity. Internal benchmarks show a 34% reduction in unwanted content exposure during beta testing when users actively employed the new controls, though effectiveness varied by region and language model coverage.

Ecosystem Implications: Third-Party Access and Platform Lock-In

While the enhanced controls empower end users, they also tighten Instagram’s grip on the recommendation stack, further limiting opportunities for third-party clients or alternative frontends to replicate the Feed experience. Unlike open protocols such as ActivityPub, which Mastodon uses, Instagram’s system remains proprietary and opaque to external developers, with no public API for injecting custom ranking signals or accessing the underlying penalty vectors. This reinforces platform lock-in, particularly as networks like Bluesky and Meta’s own Threads experiment with user-modifiable algorithms via open standards.

“What Instagram is doing is giving users the illusion of control while keeping the levers of influence firmly in-house. True algorithmic autonomy requires not just feedback mechanisms but access to the model’s weights and training data—something Meta continues to withhold.”

Still, the move puts pressure on rivals to match or exceed these capabilities. TikTok, which has faced regulatory scrutiny over its recommendation practices in the EU and U.S., recently began testing similar “Not Interested” toggles in select markets, though its implementation lacks the on-device processing component and relies entirely on cloud-based retraining.

Privacy, Ethics, and the Data Trade-Off

From a privacy standpoint, the on-device processing component is a notable improvement. By keeping fine-grained interaction signals (such as which specific posts a user rejected) local to the device until anonymized aggregation, Instagram reduces the risk of exposing sensitive behavioral patterns in raw form. However, the system still collects implicit signals, like time spent or repeat views, server-side, and users cannot opt out of core data collection without sacrificing functionality.
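The anonymized aggregation step can be illustrated with a standard differential-privacy technique, the Laplace mechanism applied to per-topic counts. This is a sketch under stated assumptions, not a description of Instagram’s pipeline: the function names, the fixed topic `vocabulary`, the per-device clipping limit, and the `epsilon` value are all hypothetical.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_topic_counts(device_reports, vocabulary, epsilon=1.0,
                         max_topics_per_device=2, rng=None):
    """Return noisy 'Not Interested' counts per topic for one batch of devices.

    device_reports: one list of rejected topics per device (hypothetical format).
    vocabulary: fixed set of topics, so the released keys do not depend on the data.
    """
    rng = rng or random.Random()
    allowed_topics = set(vocabulary)
    counts = {topic: 0 for topic in vocabulary}
    for topics in device_reports:
        # Deduplicate and clip each device's contribution so that adding or
        # removing one device changes the counts by at most max_topics_per_device.
        clipped = sorted(set(topics) & allowed_topics)[:max_topics_per_device]
        for topic in clipped:
            counts[topic] += 1
    scale = max_topics_per_device / epsilon  # L1 sensitivity divided by epsilon
    return {topic: count + laplace_noise(scale, rng) for topic, count in counts.items()}


reports = [["crypto"], ["crypto", "diet"], ["diet", "crypto", "gambling"]]
noisy = private_topic_counts(reports, ["crypto", "diet", "gambling"],
                             epsilon=1.0, rng=random.Random(0))
print(noisy)
```

Only the noisy totals leave the batch. A smaller `epsilon` adds more noise and gives stronger privacy at the cost of less accurate counts, which is the trade-off any such aggregation scheme has to tune.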

Ethicists warn that increased user control may inadvertently amplify filter bubbles if not paired with diversity incentives. A study by Princeton’s Center for Information Technology Policy found that when users were given similar controls on Facebook, political homogeneity in feeds increased by 22% over six months, as individuals consistently filtered out dissenting viewpoints. Instagram has not yet disclosed whether it applies any counterbalancing mechanisms—such as serendipity injection or cross-cutting content boosts—to mitigate this risk.

“Giving users knobs to turn is better than giving them none, but without guardrails, we’re just optimizing for comfort, not truth or democratic discourse.”

The Broader Context: Regulation and the Race for Algorithmic Accountability

This update arrives amid rising regulatory pressure on social media algorithms, particularly under the EU’s Digital Services Act (DSA), which mandates that very large online platforms offer at least one recommendation system not based on profiling. Instagram’s new tools may be positioned as a compliance-facing feature, though critics argue they fall short of offering a truly alternative, non-profiling feed as required by DSA Article 38, and go only part of the way toward the recommender transparency obligations in Article 27.

In the U.S., where federal algorithmic regulation remains stalled, state-level efforts like New York’s proposed Social Media Algorithm Transparency Act are gaining traction. These bills would require platforms to disclose how user inputs affect ranking—precisely the kind of transparency Instagram is now testing in limited form.

While Instagram’s expanded controls represent a meaningful step toward user agency, they exist within a walled garden where the platform retains ultimate authority over what can be seen, how signals are weighted, and whether feedback leads to systemic change. The real test will be whether these tools evolve from reactive filters into proactive levers for pluralism or remain a well-designed pacifier for growing discontent.