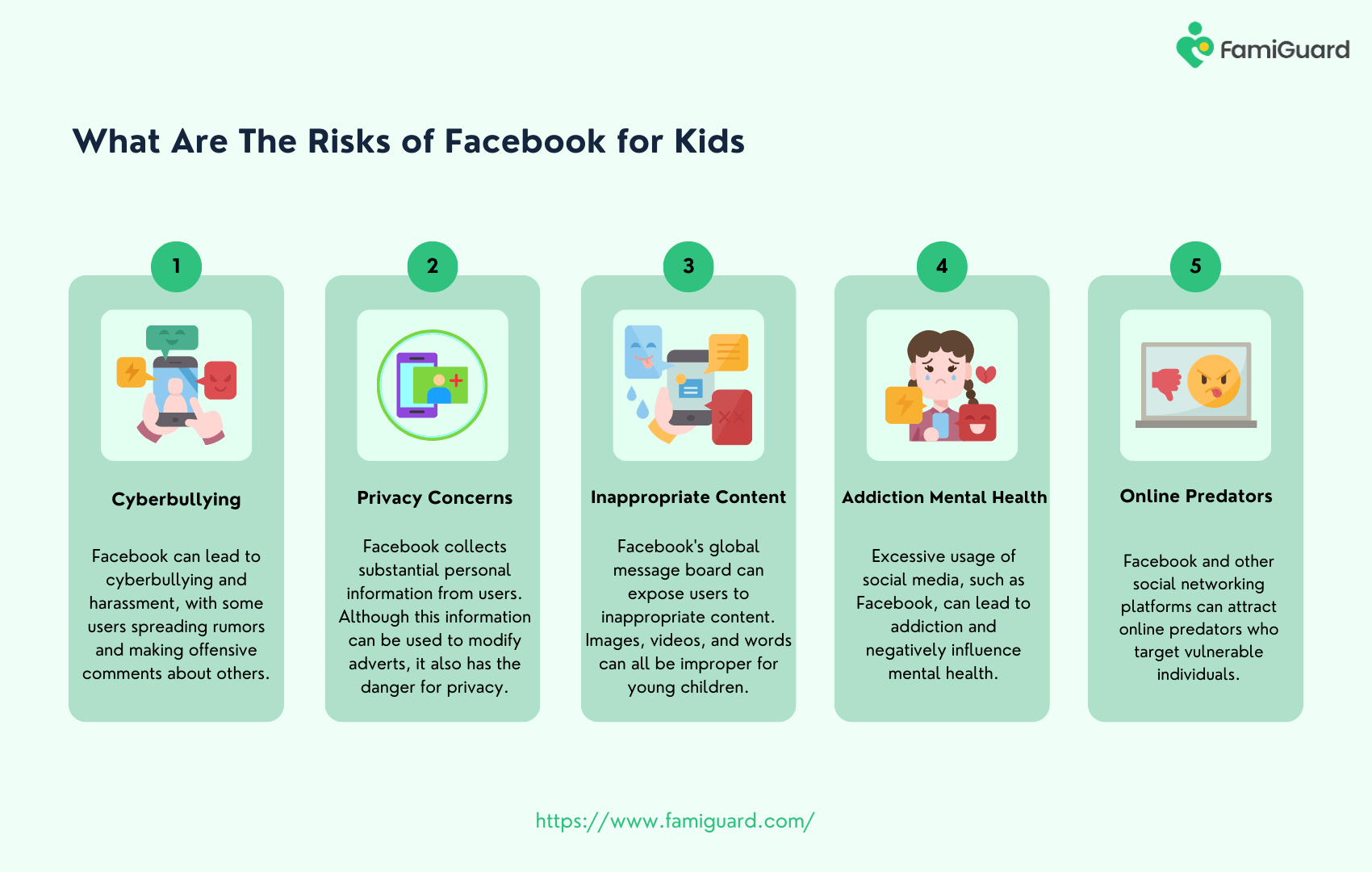

European regulators in Brussels are escalating pressure on Meta, alleging systemic failures in age verification that allow children under 13 to access Instagram and Facebook. This isn’t a new concern, but the severity of the accusations, and of the potential fines, signals a shift towards more aggressive enforcement of digital age compliance. The core issue is Meta’s reliance on self-reported ages and insufficient technical safeguards.

The Illusion of Consent: How Meta’s Age Gates Fail

The problem isn’t simply that young children *are* on these platforms; it’s that Meta’s current methods for preventing underage access are demonstrably ineffective. The company relies primarily on users self-declaring their age during account creation. This is, frankly, a joke: a determined pre-teen can bypass the check simply by entering a false birth date. More concerning is the lack of robust backend verification. Meta has explored various solutions, including AI-powered age estimation, but these have consistently fallen short of reliable accuracy, and the current systems can be spoofed with minimal technical skill. We’ve seen similar vulnerabilities exploited on other social media platforms, but the scale of Meta’s user base amplifies the risk considerably.
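To make the weakness concrete, here is a minimal Python sketch of a purely self-declared age gate. It is an illustration, not Meta’s actual code, and `age_on`, `self_declared_age_gate`, and `MINIMUM_AGE` are hypothetical names. Every input to the decision comes from the user, so changing the birth year is all it takes to pass.

```python
from datetime import date

MINIMUM_AGE = 13  # hypothetical constant for the under-13 threshold


def age_on(birth_date: date, today: date) -> int:
    """Whole years between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def self_declared_age_gate(claimed_birth_date: date, today: date) -> bool:
    """Admit the user if the *claimed* birth date makes them old enough.

    Nothing here checks that the claim is true: the gate is only as
    accurate as the user's honesty.
    """
    return age_on(claimed_birth_date, today) >= MINIMUM_AGE


today = date(2024, 6, 1)
print(self_declared_age_gate(date(2014, 3, 10), today))  # honest 10-year-old: False
print(self_declared_age_gate(date(2004, 3, 10), today))  # same child, birth year changed: True
```

Any real safeguard has to add evidence the user cannot simply type in, which is exactly the backend verification that is missing.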

What This Means for Enterprise IT

Although it appears focused on consumer privacy, this regulatory action has ripple effects for enterprise IT. The principles of data minimization and responsible AI that run through EU digital regulation, from the GDPR to the Digital Services Act (DSA), apply directly to how companies handle user data, including age verification data. Organizations deploying AI-powered customer engagement tools must demonstrate a similar level of diligence in protecting vulnerable populations.
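As an illustration of data minimization applied to age checks, the sketch below assumes some upstream step (not shown) has already produced a verified birth date, then reduces it to the few yes/no answers a product actually needs, so the birth date itself is never stored. `AgeAttestation`, `minimize`, and the threshold values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

AGE_THRESHOLDS = (13, 16, 18)  # hypothetical policy thresholds


@dataclass
class AgeAttestation:
    """The only thing persisted: threshold flags, never the birth date."""
    user_id: str
    over: dict[int, bool]
    checked_on: date


def whole_years(birth: date, today: date) -> int:
    # Subtract one if this year's birthday is still ahead.
    return today.year - birth.year - ((today.month, today.day) < (birth.month, birth.day))


def minimize(user_id: str, verified_birth_date: date, today: date) -> AgeAttestation:
    """Reduce a verified birth date to the yes/no answers the product needs."""
    age = whole_years(verified_birth_date, today)
    # The birth date is used only transiently and is not part of the record.
    return AgeAttestation(user_id, {t: age >= t for t in AGE_THRESHOLDS}, today)


record = minimize("u-123", date(2010, 9, 1), date(2024, 6, 1))
print(record.over)  # {13: True, 16: False, 18: False}
```

The design choice is that downstream systems can answer "is this user over 13?" without ever being able to answer "when was this user born?".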

The core technical challenge lies in balancing privacy with verification. Traditional methods, such as requiring government-issued ID, are a non-starter because of privacy concerns and logistical hurdles. Meta’s attempts at AI-based age estimation have been criticized for bias and inaccuracy. The company has experimented with “privacy-enhancing technologies” (PETs) such as differential privacy, but these often trade away some data utility. The ideal solution would involve a federated learning approach, in which age verification models are trained across decentralized data sources without sensitive user data ever being collected centrally. However, this requires industry-wide collaboration, something Meta has historically resisted.
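A minimal sketch of the federated idea, using federated averaging (FedAvg) on a toy linear model rather than a real age-estimation model. Each hypothetical client trains on data that never leaves it, and the server only averages the returned weights, weighted by how many examples each client holds. All names and data are illustrative assumptions; a production system would also add protections such as secure aggregation or differentially private noise on the updates, which are omitted here.

```python
Weights = tuple[float, float]  # (slope, intercept) of a toy linear model


def local_update(weights: Weights, data: list[tuple[float, float]],
                 lr: float = 0.1, epochs: int = 5) -> Weights:
    """Run a few epochs of gradient descent on one client's private data."""
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # squared-error gradient factor
            w -= lr * err * x
            b -= lr * err
    return w, b


def federated_average(updates: list[tuple[Weights, int]]) -> Weights:
    """Server step: average client weights, weighted by client dataset size."""
    total = sum(n for _, n in updates)
    w = sum(wb[0] * n for wb, n in updates) / total
    b = sum(wb[1] * n for wb, n in updates) / total
    return w, b


def train(clients: list[list[tuple[float, float]]], rounds: int = 50) -> Weights:
    global_weights: Weights = (0.0, 0.0)
    for _ in range(rounds):
        # Each client trains locally; only weights (not data) reach the server.
        updates = [(local_update(global_weights, data), len(data)) for data in clients]
        global_weights = federated_average(updates)
    return global_weights


# Three hypothetical clients holding disjoint slices of toy data (y = 2x + 1).
clients = [[(i / 100, 2 * (i / 100) + 1) for i in range(k, 100, 3)] for k in range(3)]
w, b = train(clients)
print(round(w, 3), round(b, 3))  # converges towards slope 2, intercept 1
```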

The Regulatory Landscape: DSA and Beyond

This isn’t an isolated incident. The EU’s Digital Services Act (DSA), which became fully applicable in February 2024, mandates stricter content moderation and user safety standards for online platforms. The DSA empowers regulators to impose fines of up to 6% of a company’s global annual revenue for non-compliance, and the current investigation into Meta falls squarely under its purview. The DSA’s companion regulation, the Digital Markets Act (DMA), is already in force and designates Meta as a “gatekeeper” platform. Its interoperability obligations currently focus on messaging services such as WhatsApp and Messenger; if they are extended to social networking, they could force Meta to interoperate with smaller networks, potentially reducing its control over user data and age verification processes.

The situation is further complicated by the evolving legal definitions of “child” and “consent” in the digital age. The concept of “digital maturity” – a child’s ability to understand the risks and consequences of online interactions – is gaining traction in legal circles. This could lead to a more nuanced approach to age verification, where access to certain features is restricted based on a user’s demonstrated level of digital literacy. However, implementing such a system would require sophisticated AI-powered assessment tools, raising further privacy concerns.
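A rough sketch of what “digital maturity” gating could look like, assuming some assessment (not shown, and itself the privacy-sensitive part) produces a literacy score between 0 and 1. The feature names, thresholds, and `UserProfile` type are hypothetical; the point is only that age becomes one input among several rather than a single on/off switch.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    age: int
    digital_literacy: float  # hypothetical assessment score in [0, 1]


# Hypothetical policy: each feature has a minimum age and a minimum
# literacy score; a user must meet both to unlock it.
FEATURE_POLICY: dict[str, tuple[int, float]] = {
    "public_profile": (13, 0.0),
    "direct_messages_from_strangers": (16, 0.6),
    "live_streaming": (16, 0.8),
}


def allowed_features(user: UserProfile) -> set[str]:
    """Return the features whose age and literacy requirements the user meets."""
    return {
        feature
        for feature, (min_age, min_literacy) in FEATURE_POLICY.items()
        if user.age >= min_age and user.digital_literacy >= min_literacy
    }


print(sorted(allowed_features(UserProfile(age=16, digital_literacy=0.7))))
# ['direct_messages_from_strangers', 'public_profile']
```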

“The fundamental problem is that Meta has built a business model predicated on maximizing user engagement, even at the expense of user safety. Age verification is not a priority; it’s a compliance hurdle. Until that mindset shifts, these issues will persist.”

– Dr. Anya Sharma, Cybersecurity Analyst, SecureFuture Labs.

The Technical Arms Race: Age Verification Technologies

Several technologies are vying to become the standard for age verification. One promising approach involves “privacy-preserving biometrics,” where facial or voice recognition is used to estimate age without storing personally identifiable information. However, these technologies are still in their early stages of development and are vulnerable to spoofing attacks. Another approach involves analyzing a user’s online behavior (their language, interests, and social connections) to infer their age. This technique, known as “behavioral biometrics,” is harder to spoof but raises concerns about profiling and discrimination. A third approach, gaining traction in the decentralized web (Web3) space, uses zero-knowledge proofs (ZKPs) to verify age without revealing the user’s actual date of birth. ZKPs offer strong privacy guarantees but require specialized cryptographic infrastructure.
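True zero-knowledge range proofs need specialized cryptographic libraries, but the core idea (proving “at least N years old” without revealing the birth date) can be sketched with a simpler hash-chain commitment. This is a swapped-in technique, not a formal ZKP: the issuer still learns the age, the same commitment is linkable across verifiers, and the issuer’s signature on the commitment is omitted. The function names are hypothetical.

```python
import hashlib
import secrets


def h(data: bytes, times: int) -> bytes:
    """Apply SHA-256 `times` times (walk a hash chain)."""
    for _ in range(times):
        data = hashlib.sha256(data).digest()
    return data


def issue(age: int) -> tuple[bytes, bytes]:
    """Issuer (a hypothetical ID provider that knows the true age).

    Returns (seed, commitment). The seed goes privately to the user; in a
    real deployment the commitment would be signed by the issuer.
    """
    seed = secrets.token_bytes(32)
    return seed, h(seed, age)


def prove(seed: bytes, age: int, threshold: int) -> bytes:
    """User: derive a proof that age >= threshold without revealing age."""
    if threshold > age:
        raise ValueError("cannot prove a threshold above the committed age")
    return h(seed, age - threshold)


def verify(commitment: bytes, proof: bytes, threshold: int) -> bool:
    """Verifier: hash the proof `threshold` more times and compare."""
    return secrets.compare_digest(h(proof, threshold), commitment)


seed, commitment = issue(age=15)
proof = prove(seed, age=15, threshold=13)
print(verify(commitment, proof, threshold=13))  # True
print(verify(commitment, proof, threshold=16))  # False
```

Because SHA-256 is one-way, a user below the threshold would need to find a hash preimage of their own seed to forge a passing proof, and the verifier cannot tell how far down the chain the proof value sits.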

Here’s a comparative look at some emerging technologies:

| Technology | Privacy Level | Spoofing Resistance | Implementation Complexity |
|---|---|---|---|
| Privacy-Preserving Biometrics | Medium | Low | High |
| Behavioral Biometrics | Low | Medium | Medium |
| Zero-Knowledge Proofs (ZKPs) | High | High | Very High |

Meta has also been exploring verifiable credentials (VCs): digital certificates that can prove age without revealing other sensitive information. VCs are defined by the W3C Verifiable Credentials Data Model standard and are gaining traction in the identity management space. However, widespread adoption of VCs requires a trusted ecosystem of issuers and verifiers, something that is currently lacking.
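To show what a verifier in such an ecosystem has to check, here is a heavily simplified Python sketch. The credential’s overall shape (`@context`, `type`, `issuer`, `credentialSubject`) follows the W3C data model, but the `ageOver` claim, the issuer identifier, the trusted-issuer registry, and the HMAC-based “proof” are hypothetical stand-ins: real VCs are secured with public-key proofs, so verifiers never hold the issuer’s secret.

```python
import hashlib
import hmac
import json

# Hypothetical trusted-issuer registry (the "trusted ecosystem").
# HMAC with a shared key is a stdlib stand-in for a public-key signature.
TRUSTED_ISSUERS = {"did:example:age-issuer": b"demo-shared-secret"}


def canonical(credential: dict) -> bytes:
    """Serialize everything except the proof deterministically."""
    body = {k: v for k, v in credential.items() if k != "proof"}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign(credential: dict, issuer_key: bytes) -> dict:
    mac = hmac.new(issuer_key, canonical(credential), hashlib.sha256).hexdigest()
    return {**credential, "proof": {"type": "DemoHmac", "value": mac}}


def accepts(credential: dict, min_age: int) -> bool:
    key = TRUSTED_ISSUERS.get(credential.get("issuer"))
    if key is None:
        return False  # unknown issuer: no trust anchor
    expected = hmac.new(key, canonical(credential), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, credential.get("proof", {}).get("value", "")):
        return False  # tampered or forged credential
    return credential.get("credentialSubject", {}).get("ageOver", 0) >= min_age


unsigned = {
    "@context": ["https://www.w3.org/ns/credentials/v2"],
    "type": ["VerifiableCredential"],
    "issuer": "did:example:age-issuer",
    "credentialSubject": {"id": "did:example:user-42", "ageOver": 13},
}
vc = sign(unsigned, TRUSTED_ISSUERS["did:example:age-issuer"])
print(accepts(vc, 13))  # True: trusted issuer, intact proof, claim satisfied
```

Note that the verifier learns only the `ageOver` claim, never a birth date, but the whole scheme depends on the registry of trusted issuers existing in the first place.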

The 30-Second Verdict

Meta’s age verification failures aren’t a bug; they’re a feature of a system designed for growth at all costs. Expect increased regulatory scrutiny and potentially crippling fines. The future of age verification lies in privacy-enhancing technologies, but widespread adoption requires industry collaboration and a fundamental shift in business priorities.

Ecosystem Lock-In and the Open-Source Alternative

Meta’s dominance in the social media landscape creates a significant barrier to entry for competitors. The company’s vast network effects and control over user data make it difficult for new platforms to gain traction. This ecosystem lock-in exacerbates the age verification problem, because users are less likely to switch to platforms with stricter age restrictions. The rise of decentralized social networks built on open protocols such as ActivityPub, a W3C standard, offers a potential alternative. These platforms allow users to control their own data and choose their own verification methods. However, decentralized networks face challenges in scalability, moderation, and user experience. The Mastodon network, for example, has struggled to attract mainstream users despite its commitment to privacy and decentralization.

The current situation highlights the tension between centralized control and decentralized autonomy in the digital world. Meta’s closed ecosystem prioritizes user engagement and data monetization, while open-source alternatives prioritize privacy and user empowerment. The outcome of this struggle will have profound implications for the future of the internet.

“The core issue isn’t just Meta’s technology, it’s their incentive structure. They profit from eyeballs, and that includes the eyeballs of children. Until regulators fundamentally alter that equation, we’ll continue to see these kinds of failures.”

– Ben Thompson, CTO, PrivacyFirst Solutions.

The ongoing investigation in Brussels is a critical test case for the DSA and the EU’s broader efforts to regulate Big Tech. The outcome will likely set a precedent for age verification standards across the industry and could pave the way for a more privacy-respecting and child-safe online environment. However, achieving this goal will require a concerted effort from regulators, technology companies, and the open-source community.