Nvidia has become the first company in history to breach a $5 trillion market capitalization, cementing its dominance in the AI semiconductor landscape as Microsoft, Amazon, and Meta double down on custom silicon strategies to reduce dependency on its GPUs. This milestone, reached amid soaring demand for Blackwell-based systems and surging enterprise adoption of NVIDIA AI Enterprise software, reflects not just hardware supremacy but the irreplaceable role of CUDA as the de facto operating system for accelerated computing. While rivals scramble to close the gap with alternative architectures and open software stacks, Nvidia’s vertical integration—from silicon to systems to cloud—creates a moat that extends far beyond raw FLOPS.

The CUDA Moat: Why Performance Alone Doesn’t Tell the Whole Story

Nvidia’s lead isn’t merely a function of transistor density or memory bandwidth; it’s rooted in two decades of software investment that transformed CUDA from a parallel computing API into a full-stack platform. Today, over 4 million developers rely on CUDA for everything from large language model training to real-time ray tracing, creating a network effect that makes switching costs prohibitively high. Unlike x86 or ARM, where a shared instruction set lets multiple vendors ship compatible chips, CUDA ties users to Nvidia’s ecosystem through the PTX (Parallel Thread Execution) virtual ISA, driver-level optimizations, and libraries like cuDNN, TensorRT, and NCCL, each tuned across architecture generations from Volta to Blackwell.

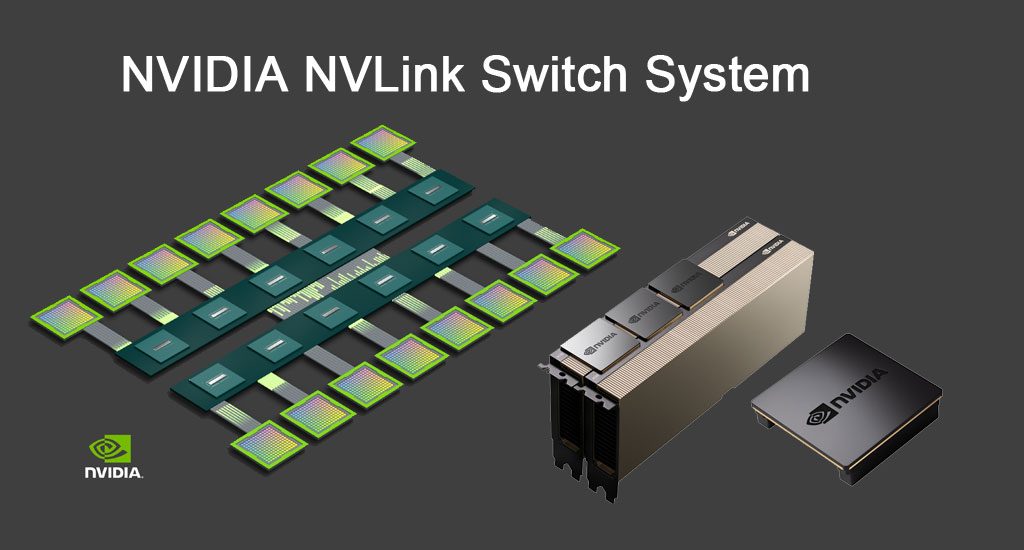

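This depth becomes critical when scaling beyond single-node experimentation. Consider the difference between training a Llama 3.1 405B model on a cluster of AMD Instinct MI300X GPUs versus Nvidia’s Blackwell-based HGX B200 and GB200 NVL72 systems. While raw TFLOPS may appear comparable on paper, fifth-generation NVLink gives each Blackwell GPU 1.8 TB/s of bidirectional GPU-to-GPU bandwidth at low latency, and the GB200 NVL72 rack uses NVLink Switch chips to join all 72 GPUs in a single NVLink domain; the NVLink Switch System can extend such a domain to as many as 576 GPUs, enabling near-linear scaling. AMD’s Infinity Fabric, though improved, tops out at 896 GB/s of aggregate peak bandwidth per MI300X, links only the eight GPUs inside a node, and shows higher latency under mixed workloads. Beyond eight GPUs, traffic must cross a slower Ethernet or InfiniBand network, a gap that widens in transformer-heavy training, where gradient all-reduce and tensor-parallel collectives consume a growing share of every step.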
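Why interconnect bandwidth and latency, rather than peak FLOPS, bound scaling can be seen with the standard alpha-beta cost model for a ring all-reduce, one of the collective algorithms NCCL implements. For S bytes across N GPUs, a per-step latency α, and a per-direction link bandwidth B, the estimate is T(N) = 2(N−1)·α + 2(N−1)/N · S/B. The snippet is a simplified sketch, assuming a single flat ring with uniform links; real libraries also use tree and hierarchical algorithms, and the helper name and inputs in the usage block are hypothetical.

```python
def ring_allreduce_seconds(size_bytes: float, n_gpus: int,
                           bw_bytes_per_s: float, latency_s: float) -> float:
    """Estimate ring all-reduce time with the alpha-beta model.

    A ring all-reduce runs a reduce-scatter followed by an all-gather.
    Each phase takes (n_gpus - 1) steps, and in every step each GPU sends
    one chunk of size_bytes / n_gpus to its neighbor.
    """
    if n_gpus < 2:
        return 0.0  # a single GPU has nothing to exchange
    steps = 2 * (n_gpus - 1)
    chunk_bytes = size_bytes / n_gpus
    # Each step pays a fixed latency plus the time to push one chunk over the link.
    return steps * (latency_s + chunk_bytes / bw_bytes_per_s)


if __name__ == "__main__":
    # Hypothetical inputs: 1 GB of gradients, 900 GB/s per direction
    # (half of a 1.8 TB/s bidirectional link), 1 microsecond per step.
    grad_bytes = 1e9
    for n in (8, 72, 576):
        t = ring_allreduce_seconds(grad_bytes, n, bw_bytes_per_s=900e9, latency_s=1e-6)
        print(f"{n:4d} GPUs: {t * 1e3:.3f} ms")
```

As N grows, the bandwidth term approaches 2S/B and stops shrinking, while the latency term grows linearly with N. Halving B roughly doubles the bandwidth-bound part of every synchronization, which is why a drop from in-domain NVLink to a scale-out network shows up directly in step time.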

Ecosystem Lock-In and the Open-Source Counterpush

Nvidia’s dominance has sparked a quiet but growing rebellion in the open-source community, particularly around projects seeking to decouple AI workloads from proprietary CUDA dependencies. Initiatives like Intel’s oneAPI and AMD’s ROCm aim to provide open alternatives, but adoption remains fragmented. A 2025 survey by the Linux Foundation Foundry found that while 68% of AI researchers experiment with alternative backends, only 12% use them for production training, citing immature tooling and kernel-level incompatibilities.
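At the framework level, some portability already exists: ROCm builds of PyTorch expose AMD GPUs through the familiar `torch.cuda` namespace, and recent releases add an `xpu` device for Intel GPUs. This is a minimal sketch, assuming PyTorch 2.4 or later (`describe_backend` is a hypothetical helper, not a PyTorch API), that reports which backend a given build exposes. Code that stays within stock PyTorch operators can often move between these backends this way; custom CUDA kernels and CUDA-only libraries are where kernel-level incompatibilities tend to surface.

```python
def describe_backend() -> tuple[str, str]:
    """Return (device string, human-readable backend) for this PyTorch build."""
    try:
        import torch
    except ImportError:
        return ("cpu", "PyTorch not installed")

    if torch.cuda.is_available():
        # ROCm builds reuse the torch.cuda API; torch.version.hip is None on CUDA builds.
        if torch.version.hip is not None:
            return ("cuda", f"AMD ROCm/HIP {torch.version.hip}")
        return ("cuda", f"Nvidia CUDA {torch.version.cuda}")

    # Intel GPUs appear as "xpu"; hasattr guards older builds without the module.
    if hasattr(torch, "xpu") and torch.xpu.is_available():
        return ("xpu", "Intel XPU")

    return ("cpu", "no supported accelerator found")


if __name__ == "__main__":
    device, backend = describe_backend()
    print(f"device={device!r} backend={backend}")
```

The returned device string can be passed to `model.to(device)` or used as `device=` when creating tensors, so the same training script runs on whichever backend is present.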

Meanwhile, cloud providers are hedging their bets. Amazon’s Trainium2 chips, now powering select EC2 Trn2 instances, offer compelling price-performance for specific workloads like BERT-based inference, yet still lack broad framework support. Microsoft’s Maia 100, deployed internally for Azure AI services, remains unavailable to external customers—a strategic limitation that underscores the difficulty of breaking into Nvidia’s stronghold. As one senior engineer at a major hyperscaler noted off the record: “We can design silicon that matches Blackwell in peak throughput, but replicating Nvidia’s software maturity? That’s a decade-long bet we’re not sure the market will wait for.”

What This Means for the Chip Wars and Enterprise Strategy

The $5 trillion valuation isn’t just a financial milestone—it’s a signal that the AI industrial complex has fully aligned around Nvidia as the central nervous system of modern computing. For enterprises, this means evaluating AI infrastructure isn’t just about upfront GPU cost but long-term software portability, vendor roadmap alignment, and access to pre-optimized models via NVIDIA NGC. Companies building sovereign AI clouds or pursuing data center diversification must now weigh the performance gains of alternative hardware against the risk of fragmented toolchains and delayed framework updates.

Regulatory scrutiny is also intensifying. With Nvidia controlling an estimated 80–85% of the data center GPU market, antitrust authorities in the EU and Japan have launched preliminary inquiries into whether its bundling of hardware, software, and cloud services constitutes abusive dominance. Nvidia argues that competition remains vigorous, citing custom ASICs from Google and Amazon, but critics point out that those chips are rarely sold as standalone hardware and reach outside customers mainly as rented cloud capacity, which limits true market competition. As IEEE Spectrum recently highlighted, the real concern isn’t just market share but whether Nvidia’s platform becomes so entrenched that alternatives struggle to gain traction even when technically superior.

The 30-Second Verdict: Dominance Earned, Not Given

Nvidia’s ascent to $5 trillion isn’t the result of speculative hype or temporary AI frenzy—it’s the payoff of a relentless, vertically integrated strategy that began when Jensen Huang bet the company on GPUs for general-purpose computing in 2006. Today, that bet has paid off in spades: CUDA is to AI what x86 was to the PC era, a foundational layer so deeply embedded that displacing it requires not just better silicon, but a generational shift in how the world builds and deploys software. Until then, the empire holds.