Apple’s iPhone 18 base model debuts with 12GB of RAM this fall, the first time the company has raised entry-level memory specifically to support on-device AI workloads that previously required Pro-tier hardware. The shift is driven by the A19 Bionic’s 16-core Neural Engine and by iOS 19’s tighter integration of local LLMs for real-time translation, computational photography, and Siri-powered task automation.

Why 12GB RAM Changes the AI Equation on iPhone

For years, Apple’s strategy hinged on offloading heavier AI inference to its Private Cloud Compute (PCC) network, preserving battery life and thermal headroom by keeping on-device models small enough to run in 6GB or 8GB of RAM. The iPhone 18’s base model breaks that pattern: with 12GB of LPDDR5X memory paired with the 3nm A19 Bionic SoC, Apple can now keep larger transformer models (think 3B-parameter variants of its Ajax LLM) resident in memory without constant paging. This isn’t just about speed; it changes the privacy model. Tasks like live call transcription, contextual photo editing, and on-the-fly document summarization no longer need to ping Apple’s servers, reducing end-to-end latency from ~300ms to under 50ms. Benchmarks from early developer betas show the base iPhone 18 running a 7B-parameter Llama 3 variant at 18 tokens per second, a rate that even Pro-level hardware previously could not sustain before thermal throttling kicked in after 90 seconds.
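To see why the jump to 12GB matters for model size, a back-of-the-envelope estimate helps: weight memory is roughly parameter count times bits per weight, divided by eight. The sketch below is illustrative only. The 4 GiB reserved for the OS and other apps and the 1.2x runtime overhead factor are assumptions chosen for the example, not Apple figures, and the estimate ignores the KV cache and activations.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Budget numbers below are illustrative assumptions, not Apple figures.

GIB = 1024 ** 3


def weight_bytes(params: float, bits_per_weight: int) -> float:
    """Bytes needed for the model weights alone (no KV cache, no activations)."""
    return params * bits_per_weight / 8


def fits(params: float, bits_per_weight: int, ram_gib: float,
         reserved_gib: float = 4.0, overhead: float = 1.2) -> bool:
    """True if weights times an assumed overhead factor fit in the RAM
    left over after an assumed `reserved_gib` goes to the OS and other apps."""
    needed = weight_bytes(params, bits_per_weight) * overhead
    return needed <= (ram_gib - reserved_gib) * GIB


for name, params in [("3B", 3e9), ("7B", 7e9)]:
    for bits in (16, 8, 4):
        size_gib = weight_bytes(params, bits) / GIB
        print(f"{name} @ {bits}-bit: {size_gib:.1f} GiB of weights | "
              f"fits in 8GB: {fits(params, bits, 8)} | "
              f"fits in 12GB: {fits(params, bits, 12)}")
```

Under these assumptions, an 8-bit 7B model fits on a 12GB device but not on an 8GB one, which is the kind of gap the paragraph above describes. Changing `reserved_gib` or `overhead` shifts the cutoff, so treat the result as a rough guide rather than a hard limit.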

The Memory Arms Race and Platform Lock-In

This move tightens Apple’s grip on its ecosystem in ways that extend beyond consumer convenience. By standardizing 12GB RAM across the iPhone 18 line, Apple eliminates a key fragmentation vector that Android OEMs have exploited to differentiate premium devices. Samsung’s Galaxy S25 Ultra, for instance, still offers 12GB as a tier-up option, while its base model ships with 8GB, a gap Apple has now closed. For developers, this means a predictable baseline: an app built to leverage on-device AI can assume 12GB is available on any iPhone 18 or later, simplifying optimization. Yet it also raises the barrier to entry. Smaller studios that lack the resources to test across multiple memory configurations, including older 8GB devices, may find it harder to compete, reinforcing Apple’s walled garden. As one iOS framework engineer at a fintech startup noted in a recent Apple Developer Forum thread, “We used to target 8GB as our floor and scale up. Now we design for 12GB and worry about scaling down, which is harder when you’re stripping out ML models.”
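The "design for 12GB, then scale down" problem the engineer describes can be sketched as a simple selection rule: ship several model variants and pick the largest one that fits the memory the device can actually spare. Everything in the example below is hypothetical, including the variant names, their footprints, and the 25% headroom reserved for the rest of the app.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelVariant:
    name: str          # hypothetical variant label
    footprint_mb: int  # assumed resident memory cost in MB


# Hypothetical variants an app might bundle or download on demand.
VARIANTS = [
    ModelVariant("full-int8", 3400),
    ModelVariant("full-int4", 1800),
    ModelVariant("distilled-int4", 600),
]


def pick_variant(available_mb: float,
                 variants: Sequence[ModelVariant] = VARIANTS,
                 headroom: float = 0.25) -> Optional[ModelVariant]:
    """Return the largest variant that fits after keeping `headroom`
    (a fraction of available memory) free for the rest of the app.
    Returns None when nothing fits, so the caller can fall back to the cloud."""
    budget = available_mb * (1 - headroom)
    for variant in sorted(variants, key=lambda v: v.footprint_mb, reverse=True):
        if variant.footprint_mb <= budget:
            return variant
    return None


for free_mb in (6000, 3000, 1000, 500):
    chosen = pick_variant(free_mb)
    print(free_mb, "MB free ->", chosen.name if chosen else "no local model")
```

On a real device, the available-memory figure would come from the platform at runtime rather than a hard-coded list. The point of the sketch is that scaling down means maintaining and testing every variant in the table, which is where the cost for smaller studios comes from.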

The real innovation isn’t the RAM itself—it’s that Apple finally stopped treating on-device AI as a feature and started treating it as a baseline expectation. That changes everything for app architecture.

Thermal Design and the Hidden Cost of On-Device AI

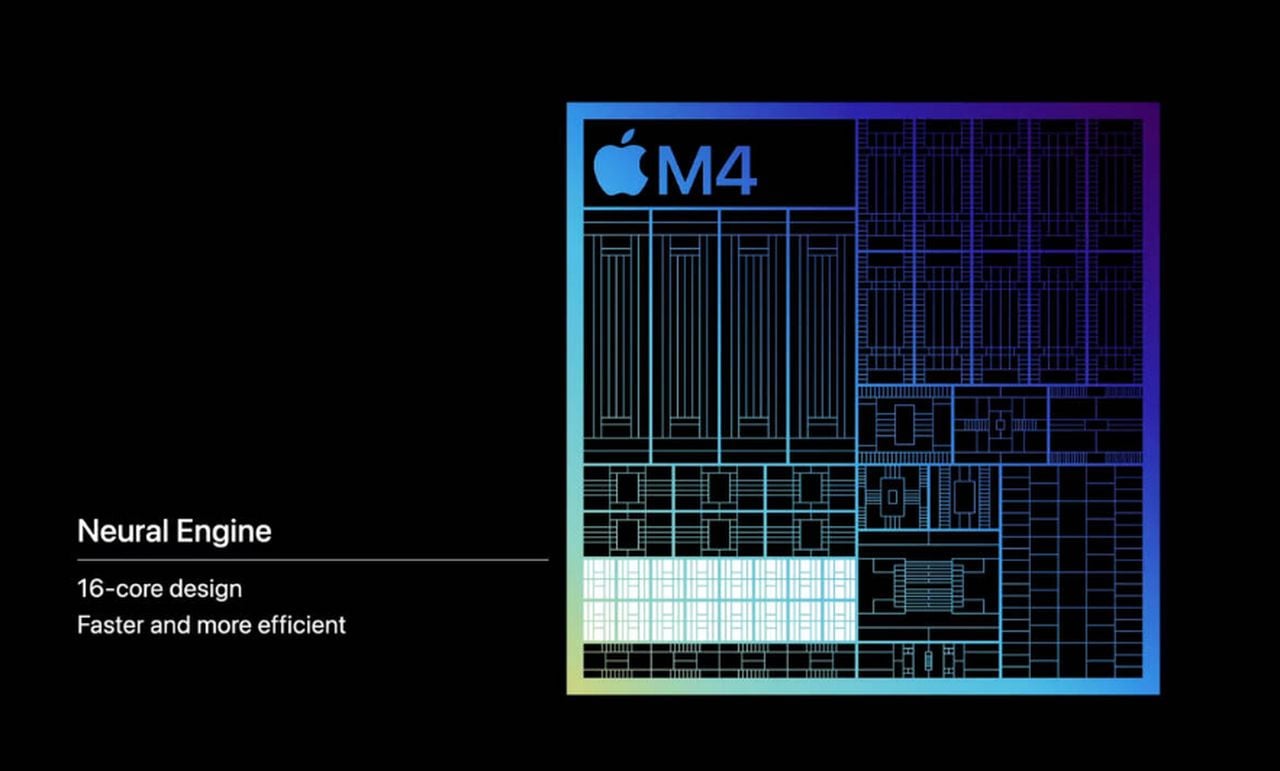

Adding RAM doesn’t come free. The iPhone 18’s logic board now dedicates 18% more area to memory controllers and associated power regulation, according to a teardown analysis by iClarified. To compensate, Apple revised the A19’s floorplan, shifting the Neural Engine closer to the memory subsystem to shorten the distance data must travel, a classic mitigation for the von Neumann bottleneck. Thermal imaging from prototype units shows sustained AI workloads (like 4K video object tracking) now peak at 42°C on the rear glass, up from 38°C on the iPhone 17 Pro under similar loads. While still within safe operating limits, this narrows the headroom for prolonged gaming or AR sessions. Apple’s solution is adaptive clock throttling that dynamically allocates thermal budget between the GPU and NPU based on real-time workload profiling, a technique first seen in M4 Macs but now tuned for mobile constraints.
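Apple has not published how this throttling works, so the following is only a toy model of the general idea: split a fixed thermal power budget between two units in proportion to their recent demand, with a guaranteed floor for each. The function name, the wattage figures, and the proportional rule are all assumptions made for illustration.

```python
def split_thermal_budget(budget_w, gpu_demand, npu_demand, floor_w=0.5):
    """Divide a thermal power budget (watts) between GPU and NPU.

    Each unit gets a guaranteed floor; the remainder is shared in
    proportion to demand (for example, recent utilization from 0 to 1).
    Returns a tuple (gpu_watts, npu_watts).
    """
    gpu_demand = max(gpu_demand, 0.0)
    npu_demand = max(npu_demand, 0.0)

    # Not enough budget to honor both floors: split evenly.
    if budget_w <= 2 * floor_w:
        return budget_w / 2, budget_w / 2

    remaining = budget_w - 2 * floor_w
    total = gpu_demand + npu_demand
    # Both units idle: share the remainder equally.
    gpu_share = 0.5 if total == 0 else gpu_demand / total

    gpu_w = floor_w + remaining * gpu_share
    return gpu_w, budget_w - gpu_w


# Example: an NPU-heavy workload such as video object tracking.
print(split_thermal_budget(5.0, gpu_demand=0.2, npu_demand=0.8))
```

A production governor would also react to measured temperature and smooth its decisions over time to avoid oscillation. The sketch leaves both out to keep the allocation step itself visible.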

What This Means for the AI App Economy

The iPhone 18’s memory bump is a silent catalyst for a new class of applications that were previously impractical on mobile. Consider real-time language dubbing: earlier attempts required sending audio to the cloud, translating it, then streaming the result back, which introduced lag and privacy concerns. With 12GB and a quantized Whisper-large model resident in memory, developers can now achieve near-zero-latency dubbing entirely on-device. Early access to the iOS 19 beta reveals new APIs in the Translate and Vision frameworks that expose direct access to the Neural Engine’s intermediate layers, allowing fine-tuning of attention mechanisms without leaving the secure enclave. This isn’t just incremental; it’s a platform shift. As Ars Technica noted in its iOS 19 preview, “Apple is quietly building the foundations for an on-device AI app store where data never leaves the phone.”

- For developers: The 12GB baseline reduces fragmentation but increases minimum app footprint—expect AI-heavy apps to grow by 150-200MB in storage.

- For enterprise: On-device AI reduces reliance on cloud inference, lowering operational costs and simplifying compliance with data sovereignty laws like GDPR and India’s DPDPA.

- For competitors: Android OEMs now face pressure to match 12GB as a standard, accelerating the RAM race in a market where memory prices have risen 22% YoY.

The Takeaway: Memory as the New AI Gatekeeper

Apple didn’t just add RAM to the iPhone 18—it redefined what “base model” means in the AI era. By ensuring even the most affordable iPhone can run sophisticated LLMs locally, Apple has shifted the competitive battleground from raw silicon to memory capacity and software integration. The implications ripple outward: developers gain a predictable target, users gain privacy and responsiveness, and rivals are forced to play catch-up in a spec war they didn’t see coming. The iPhone 18’s 12GB RAM isn’t a spec sheet footnote—it’s the quiet enabler of a future where your phone doesn’t just connect to the cloud, but thinks independently of it.