Apple’s new leadership is grappling with a systemic memory crunch as the shift toward on-device generative AI demands a massive increase in RAM capacity and bandwidth. With memory costs projected to rise fourfold and potentially account for 45% of total production costs by 2027, iPhone retail prices look set for a significant increase.

This isn’t a simple case of inflation or supply chain hiccups. We are witnessing a fundamental collision between the physics of Large Language Model (LLM) parameter scaling and the economics of consumer electronics. For years, Apple managed to keep the Bill of Materials (BOM) lean by optimizing software to run on modest hardware. That era is dead. To move “Apple Intelligence” from a cloud-tethered novelty to a truly local, private execution environment, the hardware must evolve—and that evolution is expensive.

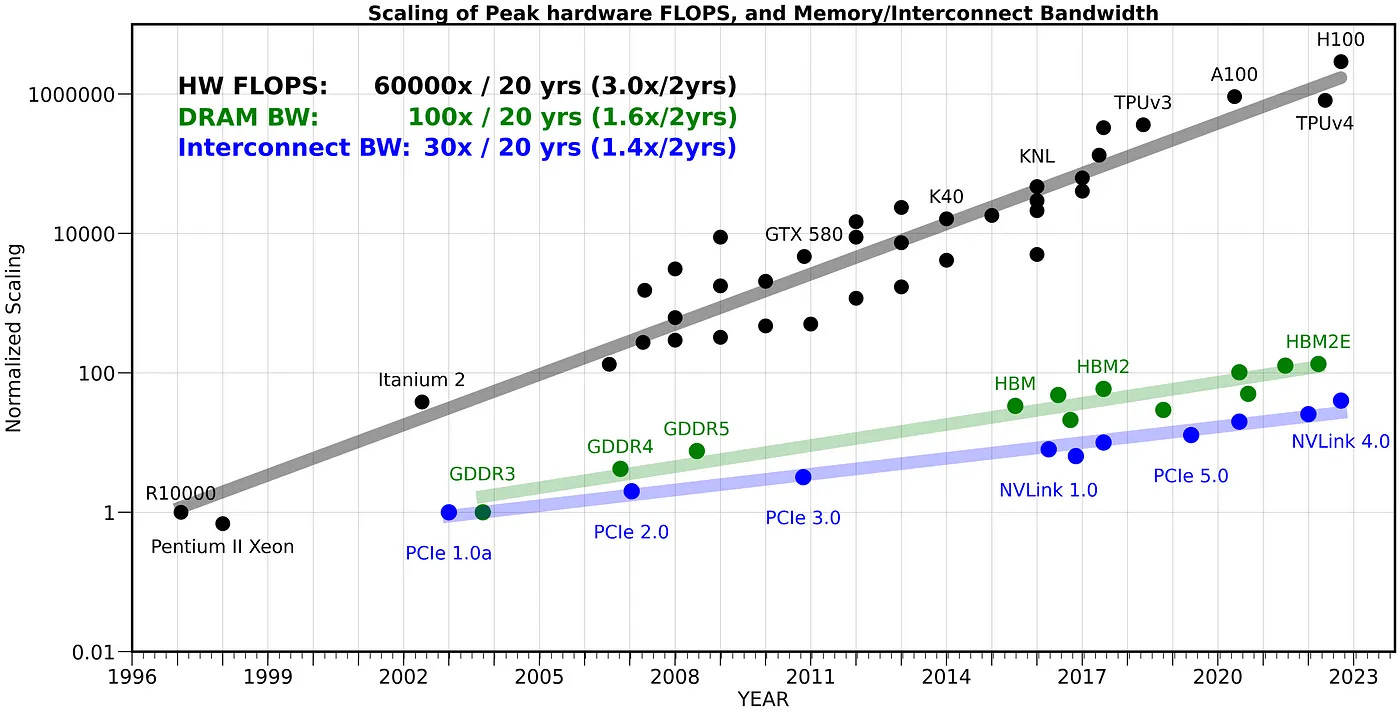

The bottleneck is the “memory wall.”

The LLM Tax: Why RAM is the New Gold

To understand why a phone’s price might jump by hundreds of dollars, you have to understand how LLMs occupy space. A model’s “intelligence” is essentially a massive matrix of weights. Even with aggressive quantization techniques—which shrink these weights from 16-bit floating point (FP16) to 4-bit or even 2-bit integers—the memory footprint remains enormous. If Apple wants to run a sophisticated 7B or 13B parameter model locally, the device needs enough LPDDR5X (Low Power Double Data Rate 5X) memory to hold those weights in a state of constant readiness.
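The arithmetic behind that footprint is simple enough to sketch. The snippet below is a rough estimate only: it counts the raw weights (parameters times bits per weight) and ignores the per-block scale factors that real quantization formats add, so actual files run somewhat larger. The function name is illustrative, not taken from any Apple tooling.

```python
def weight_footprint_gib(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory needed just to hold the weights, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# The model sizes and precisions named in the text above.
for params in (7, 13):
    for bits in (16, 4, 2):
        gib = weight_footprint_gib(params, bits)
        print(f"{params}B model at {bits}-bit: {gib:.1f} GiB")
```

By this estimate a 7B model needs roughly 13 GiB at FP16 and still about 3.3 GiB at 4-bit, before the operating system, apps, or any conversation context claim their share.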

Current iPhones are already pushing the limits. When you run a local model, the system doesn’t just need space for the model itself; it needs space for the KV (Key-Value) cache, which stores the context of your current conversation. As the context window grows, the memory pressure increases linearly. If the OS runs out of RAM, it swaps to the NAND flash storage, which is orders of magnitude slower, leading to the dreaded “token stutter” where the AI pauses mid-sentence.
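To put a number on that linear growth, here is a back-of-the-envelope KV cache estimate. The layer count, head count, and head dimension are assumptions chosen to resemble a typical 7B-class transformer without grouped-query attention; they are not figures for any Apple model.

```python
def kv_cache_bytes(context_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2: each layer stores one key vector and one value vector per token.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


# Assumed configuration (hypothetical): 32 layers, 32 KV heads, head_dim 128, FP16 values.
for tokens in (1024, 4096, 8192):
    gib = kv_cache_bytes(tokens, 32, 32, 128) / 2**30
    print(f"{tokens} tokens of context: {gib:.2f} GiB of KV cache")
```

Under these assumptions each token of context costs 0.5 MiB, so doubling the context window doubles the cache. Grouped-query attention or a quantized cache would shrink the constant, but not the linear shape.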

Apple is now competing for the same high-grade silicon wafers as NVIDIA and the cloud hyperscalers. When Microsoft and Google are buying up every available HBM3 (High Bandwidth Memory) module for their data centers, the ripple effect hits the consumer supply chain. The result? A fourfold increase in the cost of the memory modules that sit next to the A-series SoC.

The 30-Second Verdict: The BOM Shift

- The Driver: Local LLM execution requires massive RAM for weight storage and KV caching.

- The Conflict: Apple is fighting AI giants for limited memory supply and silicon capacity.

- The Result: Memory is shifting from a secondary component to the primary cost driver of the iPhone.

- The Price: Expect a divergence where “Base” models remain stagnant while “Pro” models see aggressive price hikes to offset the LPDDR5X/LPDDR6 costs.

The Architecture War: Unified Memory vs. The Market

Apple’s secret weapon has always been its Unified Memory Architecture (UMA). By integrating the CPU, GPU, and NPU (Neural Processing Unit) into a single package with a shared pool of memory, Apple eliminates the latency involved in moving data across a PCIe bus. This is why an M-series chip can feel snappier than a PC with more raw RAM.

However, UMA only works if the pool is large enough. If the NPU is starved for data because memory bandwidth is capped, the processor’s TFLOPS (teraflops) become irrelevant. We are reaching a point where the NPU can compute faster than the memory can feed it. To solve this, Apple must either increase the memory bus width, which requires a physical redesign of the logic board, or move to more expensive, higher-density memory stacks.
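A rough way to see why bandwidth caps throughput: during token-by-token generation, essentially every weight has to be read from memory once per token, so memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below applies that simplified model. The bandwidth values are illustrative placeholders, not measured figures for any iPhone, and the estimate ignores KV cache reads and compute time.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float, params_billions: float,
                            bits_per_weight: int) -> float:
    """Upper bound on decode speed when every weight is read once per token."""
    weight_gb = params_billions * bits_per_weight / 8  # GB, since params are in billions
    return bandwidth_gb_s / weight_gb


# Hypothetical bandwidth tiers, for illustration only.
for bandwidth in (50, 100):
    rate = max_decode_tokens_per_s(bandwidth, params_billions=7, bits_per_weight=4)
    print(f"{bandwidth} GB/s feeding a 7B 4-bit model: at most {rate:.1f} tokens/s")
```

Note that nothing in this bound depends on how many TFLOPS the NPU offers. Under this model, doubling compute changes nothing, while doubling bandwidth doubles the ceiling.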

“The industry is hitting a wall where compute is no longer the bottleneck; it’s the energy cost of moving a single bit of data from memory to the processor. We are seeing a pivot toward ‘memory-centric computing’ where the cost of the RAM literally dictates the capability of the AI.”

This shift creates a dangerous platform lock-in. By tying advanced AI features to specific high-memory hardware tiers, Apple isn’t just selling a phone; they are selling a ticket to a functional AI ecosystem. If you don’t buy the 12GB or 16GB RAM model, your “Apple Intelligence” will be a lobotomized version that relies on the cloud, sacrificing the very privacy Apple uses as a marketing shield.

Projected Cost Distribution (2024 vs 2027)

The shift in the Bill of Materials is staggering. We are moving from a world where the SoC (System on Chip) was the undisputed king of the cost sheet to one where the memory subsystem rivals it.

| Component | 2024 BOM % (Estimated) | 2027 BOM % (Projected) | Trend |
|---|---|---|---|
| A-Series SoC | 25% – 30% | 20% – 25% | Relative Decrease |
| Memory (RAM/Storage) | 10% – 15% | 40% – 45% | Aggressive Increase |
| Display (OLED) | 15% – 20% | 12% – 15% | Optimization/Scaling |
| Housing/Camera/Misc | 35% – 40% | 20% – 25% | Stabilizing |
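As a sanity check on how a fourfold memory cost increase relates to these shares, the sketch below computes the new share of a component whose cost is multiplied while everything else stays flat. That flat-cost assumption is ours, not something the projections state, so treat the output as a rough cross-check rather than a forecast.

```python
def share_after_cost_multiple(initial_share: float, multiple: float) -> float:
    """New BOM share of one component after its cost is multiplied,
    assuming (hypothetically) that all other component costs stay flat."""
    new_component = initial_share * multiple
    new_total = new_component + (1 - initial_share)
    return new_component / new_total


# The 2024 memory range from the table, with the fourfold increase applied.
for share in (0.10, 0.15):
    new_share = share_after_cost_multiple(share, 4)
    print(f"Memory at {share:.0%} of BOM, cost x4: {new_share:.1%} of the new BOM")
```

Starting from 10% to 15%, a fourfold increase alone yields roughly 31% to 41% of the new total. Reaching the projected 40% to 45% therefore also leans on the other components getting relatively cheaper, which is what the SoC and display rows suggest.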

The Geopolitical Silicon Gamble

This isn’t just an internal Apple problem; it’s a symptom of the broader “Chip Wars.” The reliance on TSMC for the 3nm and 2nm nodes is well-documented, but the memory side is equally precarious. Most of the world’s high-end LPDDR is controlled by a handful of players in South Korea and the US. When demand for AI servers spikes, these manufacturers prioritize the high-margin HBM (High Bandwidth Memory) used in NVIDIA H100s over the LPDDR used in iPhones.

Apple’s new CEO is now forced to make a choice: absorb the costs and accept a hit to the industry-leading margins, or pass the cost to the consumer. Given Apple’s history, the latter is almost certain. We will likely see the introduction of an “Ultra” tier or a significant price jump for the Pro Max models, justified by “AI-ready” hardware.

For developers, this means a fragmented landscape. If you’re building apps using Core ML or Swift, you’ll have to optimize for a wide variance in memory availability. The “baseline” iPhone will become a legacy device much faster than in previous cycles.
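In practice, that optimization often comes down to choosing a model variant at runtime based on the memory actually available. The sketch below shows the selection logic in Python for brevity; the variant names, footprints, and headroom value are all hypothetical. A real app would implement the equivalent in Swift and ask the operating system how much memory it can use.

```python
from typing import Optional

# Hypothetical model variants: (name, approximate resident footprint in GiB),
# ordered from most to least capable.
VARIANTS = [("7B-4bit", 3.3), ("3B-4bit", 1.4), ("1B-4bit", 0.5)]


def pick_variant(available_gib: float, headroom_gib: float = 1.0) -> Optional[str]:
    """Return the most capable variant that fits, or None if nothing does."""
    # Reserve headroom for the KV cache and the rest of the app.
    budget = available_gib - headroom_gib
    for name, footprint in VARIANTS:
        if footprint <= budget:
            return name
    return None  # Nothing fits locally; the caller would fall back to the cloud.


for available in (6.0, 3.0, 1.2):
    print(f"{available} GiB available -> {pick_variant(available)}")
```

The `None` branch is where the fragmentation bites: on low-memory devices the local path disappears entirely and the feature becomes a cloud feature.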

The era of the “commodity smartphone” is over. We are entering the era of the “AI Appliance,” where the price of admission is determined by how many gigabytes of RAM you can afford to carry in your pocket. If you’re planning an upgrade, do it now. The 2027 price tags will reflect a world where memory is the most valuable real estate in Silicon Valley.