The Agentic SOC: How AI Is Rewriting the Rules of Cybersecurity—And Why Elite Hackers Are Playing the Long Game

In brief: The James Webb Space Telescope’s latest anomaly, a mysterious obstruction near a potential “second Earth” moon, has sent ripples through the astrophysics community. But on Earth, a quieter revolution is unfolding: the rise of the agentic SOC, where AI-driven security operations centers are outmaneuvering elite hackers by adopting the attackers’ own strategic patience. Here’s why this shift matters.

The discovery of an unexplained obstruction in the orbit of a distant exomoon, dubbed “Second Earth” by tabloids, has dominated headlines this week. Yet while astronomers debate the implications of this cosmic anomaly, a far more terrestrial (but no less profound) shift is underway in cybersecurity. The agentic SOC, a concept Microsoft’s Rob Lefferts and David Weston examine in a recent Microsoft Security Blog deep dive, is redefining how organizations detect, respond to, and preempt cyber threats. And it’s doing so by borrowing a page from the playbook of the very adversaries it seeks to defeat: elite hackers.

The Strategic Patience Paradox

Elite hackers, as dissected in a CrossIdentity analysis, operate with a level of strategic patience that borders on the pathological. They don’t just exploit vulnerabilities; they cultivate them, often lying dormant for months or even years before striking. This isn’t mere stealth. It’s a calculated game of psychological and technical attrition, designed to exhaust defenders while maximizing the value of their eventual payload.

Traditional SOCs, by contrast, have been built for speed. Alert-driven, reactive, and optimized for mean time to detect (MTTD) and mean time to respond (MTTR), they’ve treated cybersecurity as a sprint. But in the AI era, that model is collapsing. The agentic SOC flips the script: it doesn’t just respond to threats; it anticipates them, using autonomous AI agents to model attacker behavior, simulate attack paths, and even preemptively harden defenses before an intrusion occurs.
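MTTD and MTTR are simple averages over closed incidents. The sketch below computes both from a hypothetical pair of incidents, assuming MTTD runs from compromise to detection and MTTR from detection to resolution; organizations define these endpoints differently, so treat this as one common convention rather than a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    occurred: datetime  # when malicious activity began (often estimated after the fact)
    detected: datetime  # when the SOC raised an alert
    resolved: datetime  # when containment and remediation finished


def mttd(incidents: list[Incident]) -> timedelta:
    """Mean time to detect: average gap between compromise and detection."""
    return timedelta(seconds=mean((i.detected - i.occurred).total_seconds() for i in incidents))


def mttr(incidents: list[Incident]) -> timedelta:
    """Mean time to respond: average gap between detection and resolution."""
    return timedelta(seconds=mean((i.resolved - i.detected).total_seconds() for i in incidents))


# Hypothetical incidents: detected after 2h and 4h, resolved 4h and 1h after detection.
t0 = datetime(2024, 1, 1, 9, 0)
incidents = [
    Incident(t0, t0 + timedelta(hours=2), t0 + timedelta(hours=6)),
    Incident(t0, t0 + timedelta(hours=4), t0 + timedelta(hours=5)),
]
print(mttd(incidents), mttr(incidents))  # 3:00:00 2:30:00
```

Both averages only cover incidents that were eventually detected. An intruder who stays dormant and unnoticed never enters the sample, which is one reason speed-centric metrics can flatter a reactive SOC facing a patient adversary.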

This isn’t theoretical. Microsoft’s Principal Security Engineer roles now explicitly require expertise in “autonomous security agents,” while Netskope’s Distinguished Engineer for AI-Powered Security Analytics is tasked with architecting systems that “think like an attacker.” The message is clear: defenders who can’t outpace the attackers’ patience must learn to outlast them.

Under the Hood: How Agentic SOCs Work

The agentic SOC isn’t just a buzzword—it’s a fundamental rearchitecture of security operations. At its core, it relies on three technical pillars:

- Autonomous AI Agents: These aren’t your grandfather’s SIEM rules. We’re talking about multi-agent reinforcement learning (MARL) systems that dynamically adjust their behavior based on real-time threat intelligence. Think of them as digital “hunter-killer” teams, where each agent specializes in a specific phase of the attack lifecycle—reconnaissance, lateral movement, exfiltration—and collaborates to predict and neutralize threats before they escalate.

- Behavioral Graph Analysis: Traditional SOCs rely on signature-based detection or anomaly thresholds. Agentic SOCs, however, build behavioral graphs—dynamic models of how users, devices, and applications interact within a network. These graphs are updated in real time, allowing the system to detect deviations that would slip past rule-based systems. For example, if a normally dormant service account suddenly starts querying Active Directory at 3 AM, the agentic SOC doesn’t just flag it—it investigates it, cross-referencing with historical patterns and external threat feeds to determine if this is a legitimate anomaly or a precursor to a larger attack.

- Preemptive Hardening: This is where the agentic SOC truly diverges from traditional models. Instead of waiting for an alert to trigger a response, these systems use predictive modeling to proactively harden defenses. For instance, if an agent detects a pattern of brute-force attempts against a set of credentials, it might automatically rotate those credentials, enforce stricter MFA policies, or even isolate the affected systems before the attacker gains a foothold. It’s the cybersecurity equivalent of a chess grandmaster sacrificing a pawn to trap the opponent’s queen.
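The division of labor in the autonomous-agents pillar can be sketched in a few lines. This is deliberately not MARL: each hypothetical `PhaseAgent` applies a fixed rule rather than a learned policy, and the event types are invented labels. What it does show is the collaboration step, where findings from phase-specialized agents are pooled per host and a host is escalated only when evidence spans several lifecycle phases.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    host: str
    kind: str  # hypothetical event type, e.g. "port_scan" or "large_upload"


class PhaseAgent:
    """Watches one attack-lifecycle phase using a fixed rule (a stand-in for a learned policy)."""

    def __init__(self, phase: str, indicators: set[str]) -> None:
        self.phase = phase
        self.indicators = indicators

    def observe(self, event: Event) -> Optional[str]:
        # Report this agent's phase if the event matches one of its indicators.
        return self.phase if event.kind in self.indicators else None


AGENTS = [
    PhaseAgent("reconnaissance", {"port_scan", "ldap_enumeration"}),
    PhaseAgent("lateral_movement", {"remote_login", "smb_exec"}),
    PhaseAgent("exfiltration", {"large_upload", "dns_tunnel"}),
]


def coordinate(events: list[Event], min_phases: int = 2) -> dict[str, set[str]]:
    """Pool every agent's findings per host; escalate hosts seen in several phases."""
    phases_by_host: dict[str, set[str]] = defaultdict(set)
    for event in events:
        for agent in AGENTS:
            phase = agent.observe(event)
            if phase is not None:
                phases_by_host[event.host].add(phase)
    return {host: phases for host, phases in phases_by_host.items() if len(phases) >= min_phases}


events = [
    Event("ws-17", "port_scan"),
    Event("ws-17", "remote_login"),
    Event("db-02", "large_upload"),
]
print(coordinate(events))
```

Here `ws-17` is escalated because reconnaissance and lateral-movement evidence co-occur, while the lone upload from `db-02` stays below the bar. In an actual MARL system, both the per-agent behavior and the escalation policy would be learned rather than hand-written.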
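The behavioral-graph pillar can be approximated with little more than a dictionary of edges. The sketch below uses hypothetical names (`svc-reporting`, `sql-01`, and `dc-01` standing in for a domain controller that serves Active Directory) and learns which resources each account touches and at which hours. It reproduces only the flagging step of the 3 AM example; the investigation that follows (historical lookups, threat-feed enrichment) is out of scope.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Interaction:
    source: str  # user, device, or service account
    target: str  # host or application it touched
    action: str  # hypothetical label, e.g. "sql_query" or "ldap_query"
    when: datetime


class BehavioralGraph:
    """Directed graph of who touches what, with the hours of day each edge was observed."""

    def __init__(self) -> None:
        # (source, target, action) -> set of hours (0-23) in which that edge appeared
        self.edges: dict[tuple[str, str, str], set[int]] = defaultdict(set)

    def learn(self, event: Interaction) -> None:
        self.edges[(event.source, event.target, event.action)].add(event.when.hour)

    def active_hours(self, source: str) -> set[int]:
        # Union of hours across every edge leaving this source.
        return {h for (src, _, _), hours in self.edges.items() if src == source for h in hours}

    def deviations(self, event: Interaction) -> list[str]:
        """Explain how an event departs from the baseline; an empty list means it looks normal."""
        reasons = []
        if (event.source, event.target, event.action) not in self.edges:
            reasons.append(f"new edge: {event.source} -[{event.action}]-> {event.target}")
        if event.when.hour not in self.active_hours(event.source):
            reasons.append(f"{event.source} never seen active at {event.when.hour:02d}:00")
        return reasons


graph = BehavioralGraph()
# Baseline: a reporting service account queries its database during business hours for a week.
for day in range(1, 8):
    for hour in range(9, 18):
        graph.learn(Interaction("svc-reporting", "sql-01", "sql_query", datetime(2024, 3, day, hour)))

routine = Interaction("svc-reporting", "sql-01", "sql_query", datetime(2024, 3, 8, 10))
suspicious = Interaction("svc-reporting", "dc-01", "ldap_query", datetime(2024, 3, 8, 3))
print(graph.deviations(routine))  # []
print(graph.deviations(suspicious))
```

The suspicious query yields two reasons: the edge to `dc-01` has never been seen, and the account has never been active at 03:00. Returning explanations rather than a bare score is a deliberate choice, since those reasons are exactly what an investigating agent or analyst would cross-reference next.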
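The brute-force example from the preemptive-hardening pillar can be expressed as a sliding-window counter wired to a response playbook. In the sketch below, the threshold and window are assumed values, and `harden` is a hypothetical placeholder that returns descriptive strings where a real deployment would call an identity provider or EDR API.

```python
from collections import deque
from dataclasses import dataclass, field


def harden(account: str) -> list[str]:
    """Hypothetical playbook; each string stands in for a call to an IdP or EDR API."""
    return [
        f"rotate_credentials({account})",
        f"enforce_stricter_mfa({account})",
        f"isolate_hosts_used_by({account})",
    ]


@dataclass
class BruteForceWatcher:
    threshold: int = 5             # failed logins tolerated per window (assumed value)
    window_seconds: float = 300.0  # sliding-window length (assumed value)
    failures: dict[str, deque] = field(default_factory=dict)

    def record_failure(self, account: str, ts: float) -> list[str]:
        """Record a failed login at time `ts`; return hardening actions if the threshold is hit."""
        recent = self.failures.setdefault(account, deque())
        recent.append(ts)
        # Drop failures that have slid out of the window.
        while recent and ts - recent[0] > self.window_seconds:
            recent.popleft()
        if len(recent) >= self.threshold:
            recent.clear()  # fire once per burst instead of on every further failure
            return harden(account)
        return []


watcher = BruteForceWatcher()
for t in (0, 30, 60, 90, 120):
    actions = watcher.record_failure("alice", t)
print(actions)
```

Five failures in two minutes trigger the playbook, but the same attempts spaced ten minutes apart never do, and an attacker who knows the threshold could also trigger rotations and isolations deliberately. Both weaknesses argue for gating the disruptive steps on additional signals rather than a single counter.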

But here’s the catch: these systems aren’t plug-and-play. They require massive computational resources, particularly for real-time behavioral graph analysis. Hewlett Packard Enterprise’s Distinguished Technologist for HPC & AI Security role, for example, specifies a need for expertise in “high-performance computing (HPC) architectures optimized for AI workloads,” including GPU-accelerated graph processing and neuromorphic computing. This isn’t just about throwing more hardware at the problem; it’s about rethinking how security workloads are distributed across CPUs, GPUs, and emerging NPUs (Neural Processing Units).

The Elite Hacker’s Counterplay

Of course, elite hackers aren’t sitting idly by. The same AI tools that power agentic SOCs are also being weaponized by adversaries. The CrossIdentity analysis highlights a disturbing trend: hackers are now using adversarial AI to probe defenses, crafting attacks that mimic legitimate behavior so closely that even behavioral graphs struggle to detect them. One particularly insidious tactic involves “slow-drip” attacks, where malware is designed to operate at such a low intensity that it avoids triggering anomaly thresholds—think of it as the cybersecurity equivalent of water torture.
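Why slow-drip attacks slip past per-window thresholds, and one classical way to catch them, fits in a short sketch. The data is synthetic (hourly outbound megabytes from one host), and the detector is Page’s cumulative-sum (CUSUM) change test, a standard statistical technique swapped in here for illustration; the CrossIdentity analysis does not prescribe it, and the baseline, slack, and decision values are assumptions.

```python
from typing import Optional, Sequence


def threshold_alarms(series: Sequence[float], limit: float) -> list[int]:
    """Per-window detector: flag each hour whose volume alone exceeds the limit."""
    return [i for i, x in enumerate(series) if x > limit]


def cusum_alarm(series: Sequence[float], baseline: float, slack: float,
                decision: float) -> Optional[int]:
    """One-sided CUSUM: accumulate excess over (baseline + slack); alarm once it passes `decision`."""
    s = 0.0
    for i, x in enumerate(series):
        s = max(0.0, s + x - baseline - slack)
        if s > decision:
            return i
    return None


# Synthetic: 24 normal hours at 10 MB, then a 5 MB/hour drip on top for 48 hours.
hourly_mb = [10.0] * 24 + [15.0] * 48

print(threshold_alarms(hourly_mb, limit=50.0))                          # []
print(cusum_alarm(hourly_mb, baseline=10.0, slack=2.0, decision=40.0))  # 37
```

No single hour comes close to the 50 MB limit, yet the cumulative excess crosses the decision boundary 14 hours into the drip (index 37). The catch is the slack term: a drip kept at or below 2 MB/hour never accumulates, so a patient attacker who learns the parameters can still hide underneath them.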

This has led to a new arms race: agentic SOCs are now being trained to detect themselves being probed. Microsoft’s latest agentic SOC framework includes a module called “Meta-Defense,” which uses generative AI to simulate how an attacker might try to evade its own detection mechanisms. It’s a recursive game of cat-and-mouse, where the defender is constantly trying to outthink its own blind spots.
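The self-probing idea can be illustrated without any generative model. The sketch below asks one narrow question of a defender’s own detectors: what is the highest constant extra exfiltration rate an attacker could sustain for 30 days without an alarm? It uses a plain binary search over that single parameter as a stand-in for whatever the “Meta-Defense” module actually does; both detectors and all numbers are hypothetical.

```python
from typing import Callable, Sequence

# A detector takes an hourly series of outbound MB and returns True if it would alarm.
Detector = Callable[[Sequence[float]], bool]


def window_detector(limit: float) -> Detector:
    """Alarm if any single hour exceeds `limit` MB."""
    return lambda series: any(x > limit for x in series)


def cumulative_detector(baseline: float, budget: float) -> Detector:
    """Alarm if total volume above `baseline` exceeds `budget` MB over the whole series."""
    return lambda series: sum(x - baseline for x in series) > budget


def max_evading_rate(detector: Detector, baseline: float, hours: int,
                     upper: float = 1000.0, tol: float = 0.01) -> float:
    """Binary-search the largest extra MB/hour that stays silent for `hours` hours.

    Assumes the detector is monotone: if a rate triggers it, any higher rate does too.
    """
    lo, hi = 0.0, upper
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if detector([baseline + mid] * hours):
            hi = mid
        else:
            lo = mid
    return lo


HOURS = 24 * 30
for name, det in [("per-hour limit", window_detector(50.0)),
                  ("cumulative budget", cumulative_detector(10.0, 500.0))]:
    rate = max_evading_rate(det, baseline=10.0, hours=HOURS)
    print(f"{name}: {rate:.2f} MB/h undetected, about {rate * HOURS:,.0f} MB over 30 days")
```

The per-hour limit leaves a blind spot of roughly 40 MB/hour, close to 28.8 GB over the month, while the cumulative budget shrinks it to about 0.69 MB/hour (roughly the 500 MB budget in total). Quantifying the gap this way is the point of self-probing: it turns “could an attacker evade us?” into a number the defender can tune against.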

“The biggest misconception about agentic SOCs is that they’re a silver bullet. They’re not. They’re a force multiplier—but only if you understand their limitations. The real breakthrough isn’t the AI itself; it’s the shift from reactive to predictive security. That requires a fundamental change in how we think about risk.”

— Dr. Elena Vasquez, CTO of Darktrace and former DARPA researcher

The Ecosystem Fallout: Who Wins, Who Loses?

The rise of the agentic SOC isn’t just a technical evolution—it’s a market disruptor. Here’s how the landscape is shifting:

| Stakeholder | Opportunity | Threat |
|---|---|---|
| Cloud Providers (Azure, AWS, GCP) | Agentic SOCs are inherently cloud-native, requiring massive, scalable compute. Microsoft’s Azure AI and AWS’s Security Hub are already positioning themselves as the backbone of these systems. | Open-source alternatives (e.g., Sigma, Elastic Detection Rules) could commoditize parts of the stack, eroding margins. |
| SIEM Vendors (Splunk, IBM QRadar) | Legacy SIEMs can pivot to become “data layers” for agentic SOCs, feeding behavioral graphs and threat intelligence into AI agents. | If they fail to adapt, they risk being relegated to “dumb pipes” for more advanced platforms. |
| MSSPs (Managed Security Service Providers) | Agentic SOCs reduce the need for manual triage, allowing MSSPs to scale without proportional headcount increases. | Autonomous agents could disintermediate MSSPs entirely, as enterprises bring security operations in-house. |
| Elite Hackers | Adversarial AI tools are becoming more accessible, lowering the barrier to entry for sophisticated attacks. | Agentic SOCs raise the cost of failure, making it harder to monetize breaches. |

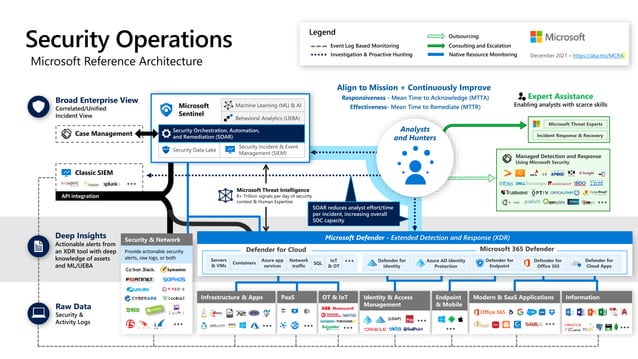

One of the most contentious debates in this space is whether agentic SOCs will accelerate platform lock-in. Microsoft’s approach, for example, tightly integrates its agentic SOC framework with Microsoft Sentinel (formerly Azure Sentinel) and Microsoft Defender, creating a walled garden that enterprises may find hard to escape. This has drawn criticism from open-source advocates, who argue that proprietary agentic SOCs could stifle innovation.

“The danger isn’t just that agentic SOCs will be expensive; it’s that they’ll be opaque. If you can’t audit the AI’s decision-making process, how do you know it’s not introducing new vulnerabilities? We’ve seen this movie before with black-box algorithms in finance and healthcare. The security industry can’t afford to repeat those mistakes.”

— Bruce Schneier, Security Technologist and Author of Schneier on Security

The 30-Second Verdict: What This Means for You

If you’re an enterprise CISO, the agentic SOC is both an opportunity and a threat. Here’s what you need to know:

- For Large Enterprises: Start piloting agentic SOC components now. Focus on use cases where AI can augment (not replace) human analysts, such as threat hunting and incident response. Microsoft’s agentic SOC framework is a great starting point, but don’t neglect open-source tools like Elastic’s detection rules for behavioral analysis.

- For MSSPs: Your value proposition is about to shift. Instead of selling “eyes on glass,” you’ll need to differentiate on AI-driven insights. Invest in training your team to work alongside autonomous agents, not compete with them.

- For Developers: The agentic SOC is creating demand for new skill sets. If you’re a security engineer, upskill in TensorFlow or PyTorch for custom AI model development. If you’re a data scientist, learn how to build and tune behavioral graphs. And if you’re a red teamer, start studying adversarial AI—because the attackers certainly are.

- For Regulators: The agentic SOC raises thorny questions about accountability. If an AI agent makes a wrong call—say, isolating a critical system during a false positive—who’s liable? The vendor? The enterprise? The human analyst who trained the model? These questions need answers before agentic SOCs become ubiquitous.

The Long Game: Why Strategic Patience Wins

The James Webb Telescope’s discovery of an obstruction near a “second Earth” moon is a reminder that some mysteries unfold on cosmic timescales. Cybersecurity, too, is entering an era where the most effective strategies are those that play the long game. Elite hackers have always understood this; now, the defenders are catching up.

The agentic SOC isn’t just a new tool—it’s a philosophical shift. It’s the recognition that cybersecurity isn’t a series of sprints; it’s a marathon, where the winner isn’t the fastest responder, but the most patient strategist. And in this new era, the best defense isn’t just a wall—it’s a mirror, reflecting the attacker’s own tactics back at them.

One thing is certain: the SOC of 2030 won’t look anything like the SOC of 2020. And if you’re not preparing for that future now, you’re already behind.