Large Language Models (LLMs) have evolved beyond content generation into sophisticated tools for text-in-text steganography, enabling the embedding of encrypted messages within seemingly mundane prose. By manipulating token selection probabilities, these models create covert channels that are virtually indistinguishable from natural language, bypassing traditional Data Loss Prevention (DLP) systems and AI safety filters.

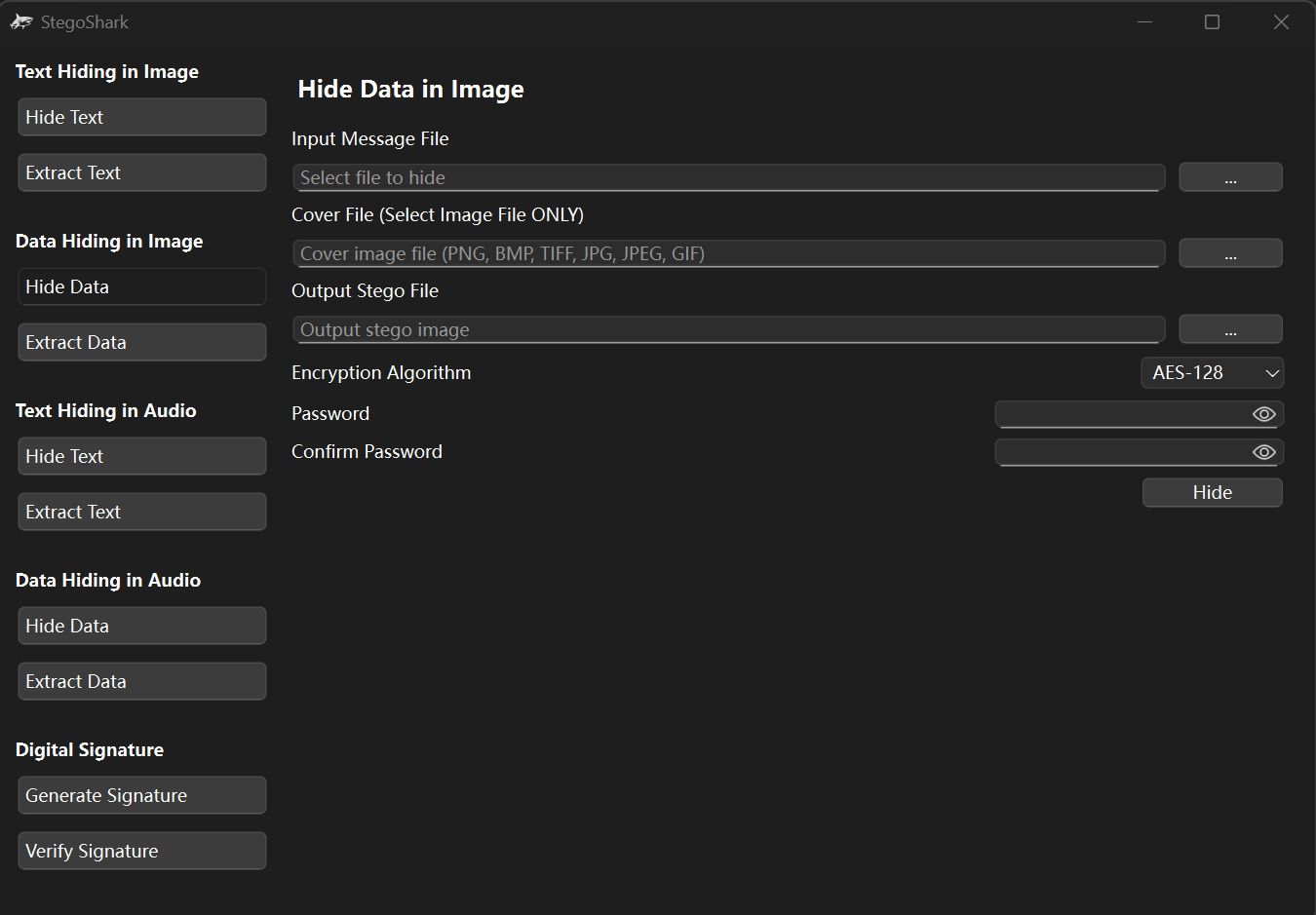

For years, steganography was the domain of image LSB (Least Significant Bit) manipulation or audio frequency masking. But the shift to transformer-based architectures has opened a new flank. We aren’t talking about simple ciphers or “leetspeak” that a basic regex filter can catch. We are talking about linguistic camouflage executed at the level of the model’s next-token probability distribution.

This isn’t theoretical. The latest research, specifically the findings detailed in arXiv:2510.20075, demonstrates that LLMs can hide high-entropy data within low-perplexity text. Essentially, the model doesn’t just write a story; it uses the choice between “happy,” “joyful,” and “cheerful” to encode binary data.

The Logit Game: How LLMs Hide Data in Plain Sight

To understand the exploit, you have to look at the logits—the raw, unnormalized predictions the model makes for the next token in a sequence. In a standard generation task, the model typically samples from the top-p (nucleus) or top-k distribution to ensure coherence. Steganography hijacks this sampling process.
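To make the sampling step concrete, here is a minimal sketch of how raw logits become a nucleus (top-p) candidate set. The logit values and the `toy_logits` vocabulary are invented for illustration; a real model would produce scores over tens of thousands of tokens.

```python
import math

def softmax(logits):
    """Convert raw logits into a probability distribution."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - peak) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def top_p_filter(probs, p=0.9):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    kept, cumulative = {}, 0.0
    for tok, prob in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[tok] = prob
        cumulative += prob
        if cumulative >= p:
            break
    norm = sum(kept.values())  # renormalize the surviving tokens
    return {tok: prob / norm for tok, prob in kept.items()}

# Hypothetical logits for the token following "She felt ..."
toy_logits = {"happy": 2.0, "joyful": 1.6, "cheerful": 1.5, "purple": -3.0}
nucleus = top_p_filter(softmax(toy_logits), p=0.9)
print(sorted(nucleus))
```

With these made-up numbers the implausible token falls outside the nucleus, while the three near-synonyms survive. That surviving set is the room a steganographic sampler has to work with.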

Instead of picking the most probable token to maximize fluency, the steganographic algorithm partitions the available high-probability tokens into bins. If the secret bit to be transmitted is a ‘0’, the model selects a token from Bin A; if it’s a ‘1’, it selects from Bin B. Because both bins contain tokens that are statistically plausible in that context, the resulting text remains grammatically correct and contextually relevant. The perplexity—the measure of how “surprised” a language model is by a sequence—remains low enough to evade detection by automated monitors.
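The binning idea can be sketched with a toy example. This is not the method from the cited paper, only an illustration under simplifying assumptions: the candidate lists in `steps` are hard-coded stand-ins for a model's ranked high-probability tokens, bins are assigned by rank parity, and each token carries exactly one bit. In a real scheme the candidates at each position would depend on the text generated so far, and sender and receiver would need the same model and prompt to reproduce them.

```python
def split_into_bins(candidates):
    """Assign ranked candidates alternately to bin 0 and bin 1."""
    return {tok: rank % 2 for rank, tok in enumerate(candidates)}

def encode(bits, steps):
    """For each secret bit, emit the best-ranked token from the matching bin."""
    out = []
    for bit, candidates in zip(bits, steps):
        bins = split_into_bins(candidates)
        out.append(next(tok for tok in candidates if bins[tok] == bit))
    return out

def decode(tokens, steps):
    """Recover each bit by looking up which bin the observed token fell into."""
    return [split_into_bins(cands)[tok] for tok, cands in zip(tokens, steps)]

# Hypothetical ranked candidates at three positions (most probable first)
steps = [
    ["happy", "joyful", "cheerful"],
    ["walked", "strolled"],
    ["home", "back"],
]
secret_bits = [1, 0, 1]
cover_tokens = encode(secret_bits, steps)
recovered = decode(cover_tokens, steps)
print(cover_tokens, recovered)
```

Because every emitted word comes from the plausible set, the output reads naturally. The hidden bits live only in which plausible word was chosen.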

This is where LLM parameter scaling becomes a liability. Larger models with more parameters generally exhibit a “flatter” probability distribution for synonyms. In plain English: a massive model like GPT-5 or a scaled-up Llama iteration sees more ways to say the same thing without sounding weird. This increased linguistic flexibility directly correlates to higher steganographic capacity.
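One way to see why a flatter distribution means more capacity is to compare Shannon entropies. Entropy is the standard measure of how many bits of choice a distribution offers, so it serves here as a rough per-token ceiling on what can be hidden without distorting the distribution. The two distributions below are invented for illustration and are not measurements from any model.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical next-token distributions over four candidate synonyms
peaked = [0.90, 0.05, 0.03, 0.02]  # one word dominates
flat = [0.25, 0.25, 0.25, 0.25]    # four equally plausible words

peaked_bits = entropy_bits(peaked)
flat_bits = entropy_bits(flat)
print(f"peaked: {peaked_bits:.2f} bits, flat: {flat_bits:.2f} bits")
```

In this sketch the flat distribution offers 2 bits of choice per token, while the peaked one offers well under 1 bit.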

The Capacity vs. Stealth Trade-off

| Metric | Standard Generation | High-Capacity Steganography | Stealth-Optimized Steganography |
|---|---|---|---|
| Token Selection | Greedy/Nucleus Sampling | Wide Bin Partitioning | Tight Logit Constraints |
| Perplexity | Very Low (Natural) | Moderate (Slightly “Off”) | Low (Indistinguishable) |
| Bits per Token | 0 | 2.5 – 4.0 bits | 0.5 – 1.2 bits |
| Detection Risk | Zero | High (Statistical Analysis) | Minimal |
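To put the bits-per-token figures in perspective, the following back-of-the-envelope sketch estimates how much cover text a payload would need at each rate. The 256-bit payload size is an assumed example, and `tokens_needed` is a hypothetical helper, not part of any library.

```python
import math

def tokens_needed(payload_bits, bits_per_token):
    """Minimum number of cover tokens required to carry the payload."""
    return math.ceil(payload_bits / bits_per_token)

PAYLOAD_BITS = 256  # assumed example payload, e.g. a 256-bit key

# Rates taken from the table's "Bits per Token" row
rates = {
    "stealth (low end)": 0.5,
    "stealth (high end)": 1.2,
    "high-capacity (low end)": 2.5,
    "high-capacity (high end)": 4.0,
}
estimates = {name: tokens_needed(PAYLOAD_BITS, rate) for name, rate in rates.items()}
for name, count in estimates.items():
    print(f"{name}: {count} tokens")
```

Under these assumptions, the stealth-optimized setting needs several hundred tokens for a payload that the high-capacity setting could carry in about a hundred or fewer. That gap is the trade-off the table describes.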

The danger here is the “stealth-optimized” approach. When the bit-rate is kept low, the delta between the steganographic text and natural text is smaller than the natural variance of human writing styles. It is a ghost in the machine.

Weaponizing the Covert Channel: The Cybersecurity Nightmare

From a security perspective, this is a catastrophic failure of the “inspect the payload” philosophy. Most enterprise security stacks rely on keyword scanning or sentiment analysis to prevent data exfiltration. But how do you flag a “benign” email about a quarterly marketing strategy that is actually transmitting an encrypted SSH key to a remote server?

We are seeing a fundamental shift in the threat model. This isn’t just about spies; it’s about bypassing the very guardrails that AI companies spend billions to implement. If a user can wrap a malicious prompt inside a steganographic layer, they can potentially trigger “jailbroken” behaviors in a target LLM that is programmed to decode such messages, creating a hidden command-and-control (C2) infrastructure mediated by AI.

“The ability of LLMs to act as a high-bandwidth, low-visibility transport layer for encrypted data renders current DLP strategies obsolete. We are moving from a world of ‘searching for the needle’ to a world where the needle is made of the same hay as the haystack.”

The quote above, from a lead security architect at a major cloud provider, underscores the urgency. This is no longer a research curiosity; it is a viable vector for advanced persistent threats (APTs).

The Ecosystem War: Open-Source vs. Closed Gardens

This capability creates a fascinating, if terrifying, divergence between closed-source models and open-weight models. Companies like OpenAI and Google are racing to implement “steganalysis” filters—models designed specifically to detect the statistical anomalies associated with token-binning. They want to lock down the API to ensure their models aren’t used as covert messengers.

Meanwhile, the open-source community, leveraging platforms like GitHub and Hugging Face, is inadvertently providing the blueprints for these tools. When a model’s weights are public, an attacker can calculate the exact logits for any given prompt, allowing them to optimize the steganographic bins for maximum efficiency without ever alerting a central authority.

This reinforces the “chip war” dynamics. The more powerful the NPU (Neural Processing Unit) in a local device, the faster these encoding/decoding processes happen. We are heading toward a future where edge devices can perform real-time, AI-driven covert communication that is invisible to the network layer.

The 30-Second Verdict for Enterprise IT

- The Risk: Your employees (or attackers) can exfiltrate sensitive data via LLMs using text that looks perfectly normal to your security filters.

- The Mitigation: Stop relying on keyword filters. Move toward behavioral analysis and strict API egress monitoring.

- The Reality: Detection is currently an uphill battle because the “signal” is mathematically blended into the “noise” of natural language.

Searching for the Signal: The Future of Steganalysis

Can we stop this? The answer lies in steganalysis, the art of detecting hidden messages. Researchers are currently exploring the use of Kullback–Leibler (KL) divergence to measure how far the token distribution of a suspected text drifts from the distribution a baseline model would expect.
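A minimal sketch of the KL-divergence idea follows. It is deliberately simplified: it compares overall word frequencies against a fixed `baseline` distribution, whereas a practical detector would more likely compare per-position conditional probabilities from a reference model. The baseline values and both sample texts are invented for illustration.

```python
import math
from collections import Counter

def empirical(tokens):
    """Relative frequency of each token in an observed sample."""
    counts = Counter(tokens)
    total = len(tokens)
    return {tok: c / total for tok, c in counts.items()}

def kl_divergence(p, q):
    """D_KL(P || Q) in bits; assumes q[tok] > 0 wherever p[tok] > 0."""
    return sum(pv * math.log2(pv / q[tok]) for tok, pv in p.items() if pv > 0)

# Hypothetical baseline: how often a reference model would pick each synonym
baseline = {"happy": 0.60, "joyful": 0.25, "cheerful": 0.15}

# Invented samples: one matches the baseline, one spreads choices almost evenly
natural = ["happy"] * 12 + ["joyful"] * 5 + ["cheerful"] * 3
suspect = ["happy"] * 7 + ["joyful"] * 7 + ["cheerful"] * 6

natural_score = kl_divergence(empirical(natural), baseline)
suspect_score = kl_divergence(empirical(suspect), baseline)
print(f"natural: {natural_score:.3f} bits, suspect: {suspect_score:.3f} bits")
```

The near-uniform sample scores noticeably higher than the one that tracks the baseline. Any flagging threshold would need to be calibrated against the natural variance of real writing.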

If a text is “too perfect” or follows a suspiciously consistent distribution of synonyms, it may be flagged. However, as LLMs get better, the “natural” distribution becomes broader, giving the hidden messages more room to hide. It is a classic cryptographic arms race.

For further reading on the mathematical foundations of these attacks, the IEEE Xplore digital library provides extensive documentation on linguistic entropy and its role in covert communication. The industry must move toward a zero-trust architecture for AI-generated content, treating every “benign” string as a potential carrier.

The very thing that makes LLMs brilliant, their ability to understand and mimic the nuance of human language, is exactly what makes them the perfect tool for deception. We’ve taught machines to speak; now we’re discovering they can lie with absolute statistical precision.