Snapchat is leveraging advanced computer vision and generative AI to synchronize Bitmoji avatars with real-world attire in real time. This convergence of digital twins and fashion-tech allows users to mirror their physical “fit” instantly, transforming static avatars into dynamic, AI-driven extensions of personal identity through multimodal embedding and garment segmentation.

When a user claims their Bitmoji “is a vibe” because it matches their physical outfit—down to the specific sneakers—they aren’t just talking about a coincidence of digital dress-up. They are interacting with a sophisticated pipeline of computer vision (CV) and latent space mapping. For the uninitiated, this looks like a fun mirror effect. For those of us tracking the silicon, it is a masterful execution of edge-computing and real-time asset generation.

The “vibe” is the result of a shift from manual selection to automated inference. We have moved past the era of scrolling through a menu of 50 generic t-shirts. In the current 2026 build, Snap is utilizing on-device NPUs (Neural Processing Units) to handle the heavy lifting of image segmentation without sending raw biometric data to the cloud, reducing latency and increasing privacy.

The Latent Space of Fashion: How CV Maps the “Fit”

To achieve a 1:1 match between a human wearing “Js” (Air Jordans) and a digital avatar, the app must solve the “semantic gap”—the difference between raw pixels and the conceptual meaning of “streetwear.” This process begins with Instance Segmentation. The system identifies specific boundaries of clothing items, separating the shoe from the pavement and the pants from the skin.
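To make the segmentation step concrete, here is a minimal sketch of one small piece of it: splitting a per-pixel class mask into separate garment instances with connected-component labeling. This is a simplified stand-in, not Snap's pipeline. A production system would get the mask from a neural network, and the class ids, the toy mask, and the `label_instances` helper are all assumptions made for illustration.

```python
from collections import deque


def label_instances(mask):
    """Split a 2D class mask into connected regions (4-connectivity).

    mask: list of rows; 0 is background, any other int is a garment class id.
    Returns one dict per region with its class id, pixel area, and bounding box
    as (min_row, min_col, max_row, max_col).
    """
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    instances = []
    for r in range(height):
        for c in range(width):
            if mask[r][c] == 0 or seen[r][c]:
                continue
            cls = mask[r][c]
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            # Breadth-first flood fill over neighbours of the same class.
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and not seen[ny][nx] and mask[ny][nx] == cls:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            rows = [p[0] for p in pixels]
            cols = [p[1] for p in pixels]
            instances.append({
                "class_id": cls,
                "area": len(pixels),
                "bbox": (min(rows), min(cols), max(rows), max(cols)),
            })
    return instances


# Hypothetical class ids for this toy example: 1 = pants, 2 = shoe.
TOY_MASK = [
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
    [2, 2, 0, 2, 2],
]
INSTANCES = label_instances(TOY_MASK)
```

The two shoe regions share a class id but come back as separate instances, which is the distinction between semantic segmentation (what class is this pixel?) and instance segmentation (which individual object does it belong to?).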

Once the items are segmented, the AI employs a multimodal LLM (Large Language Model) architecture to categorize the aesthetic. It doesn’t just see “red shoe”; it recognizes the specific silhouette, color blocking, and brand markers of a Jordan 1. This is achieved through Contrastive Language-Image Pre-training (CLIP), which trains an image encoder and a text encoder to map into a shared embedding space, so that the image of the shoe sits close to the text description of that specific model.
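The shared-embedding idea is easier to see with numbers. The sketch below assumes the image and the candidate text labels have already been encoded into vectors, then picks the label whose vector points in the most similar direction (cosine similarity). The 4-dimensional vectors and label strings are made up for illustration; real encoders emit hundreds of dimensions, and nothing here reflects Snap's actual models.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_text_match(image_embedding, text_embeddings):
    """Return the label closest to the image, plus all similarity scores."""
    scores = {
        label: cosine_similarity(image_embedding, vector)
        for label, vector in text_embeddings.items()
    }
    best_label = max(scores, key=scores.get)
    return best_label, scores


# Hypothetical, hand-written embeddings (not produced by any real encoder).
TEXT_EMBEDDINGS = {
    "red high-top sneaker": [0.9, 0.1, 0.3, 0.0],
    "white running shoe": [0.1, 0.9, 0.2, 0.1],
    "black leather boot": [0.0, 0.2, 0.1, 0.9],
}
IMAGE_EMBEDDING = [0.8, 0.2, 0.4, 0.1]

BEST_LABEL, SCORES = best_text_match(IMAGE_EMBEDDING, TEXT_EMBEDDINGS)
```

Because the comparison is a nearest-neighbour lookup against text vectors, supporting a new sneaker model would in principle only require encoding a new description rather than retraining a classifier.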

The final step is the translation of these embeddings into a 3D mesh. Instead of static textures, Snap is increasingly using generative shaders that can adapt the Bitmoji’s clothing geometry in real time. This isn’t just a skin; it’s a procedural generation of clothing that mimics the drape and fold of the physical fabric captured by the camera.

The Tech Stack: From Pixels to Polygons

- Edge Inference: Leveraging ARM-based architectures to run quantized models locally, ensuring the “fit” updates in milliseconds.

- Mesh Deformation: Using linear blend skinning to ensure the digital clothing moves naturally with the avatar’s rigging.

- Vector Quantization: Reducing the complexity of the garment data so it can be transmitted across the network without lagging the chat interface.
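Two of the bullets above are compact enough to sketch directly. The first function shows linear blend skinning in 2D: each vertex is transformed by every bone that influences it, and the results are averaged by weight. The second shows the core of vector quantization: replacing a vector with the index of its nearest codebook entry, so only a small integer needs to cross the network. The bone transforms, weights, and codebook are hypothetical values chosen for illustration, not anything taken from Snap's SDKs.

```python
import math


def transform_point(point, angle_rad, translation):
    """Rotate a 2D point about the origin, then translate it."""
    x, y = point
    cos_a, sin_a = math.cos(angle_rad), math.sin(angle_rad)
    return (
        cos_a * x - sin_a * y + translation[0],
        sin_a * x + cos_a * y + translation[1],
    )


def linear_blend_skin(vertex, bones, weights):
    """Weighted average of the vertex as moved by each bone.

    bones: list of (angle_rad, (tx, ty)) transforms.
    weights: one weight per bone, expected to sum to 1.
    """
    out_x = out_y = 0.0
    for (angle, translation), weight in zip(bones, weights):
        px, py = transform_point(vertex, angle, translation)
        out_x += weight * px
        out_y += weight * py
    return (out_x, out_y)


def quantize(vector, codebook):
    """Index of the codebook entry nearest to vector (squared Euclidean)."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(
        range(len(codebook)),
        key=lambda i: squared_distance(vector, codebook[i]),
    )


# A sleeve vertex influenced equally by a resting bone and one bent 90 degrees.
BONES = [(0.0, (0.0, 0.0)), (math.pi / 2, (0.0, 0.0))]
SKINNED = linear_blend_skin((1.0, 0.0), BONES, [0.5, 0.5])

# Hypothetical 2D codebook; the garment feature is sent as a single index.
CODEBOOK = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
CODE_INDEX = quantize([0.9, 1.2], CODEBOOK)
```

In this toy case the skinned vertex lands at roughly (0.5, 0.5), closer to the joint than either bone alone would place it. That shrinkage is the well-known volume-loss artifact of linear blend skinning, and it is one reason the technique is valued for being cheap rather than physically exact.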

“The transition from curated avatars to generative digital twins represents a fundamental shift in how we perceive identity online. We are no longer choosing a mask; we are projecting a synchronized version of our physical presence into a digital layer.”

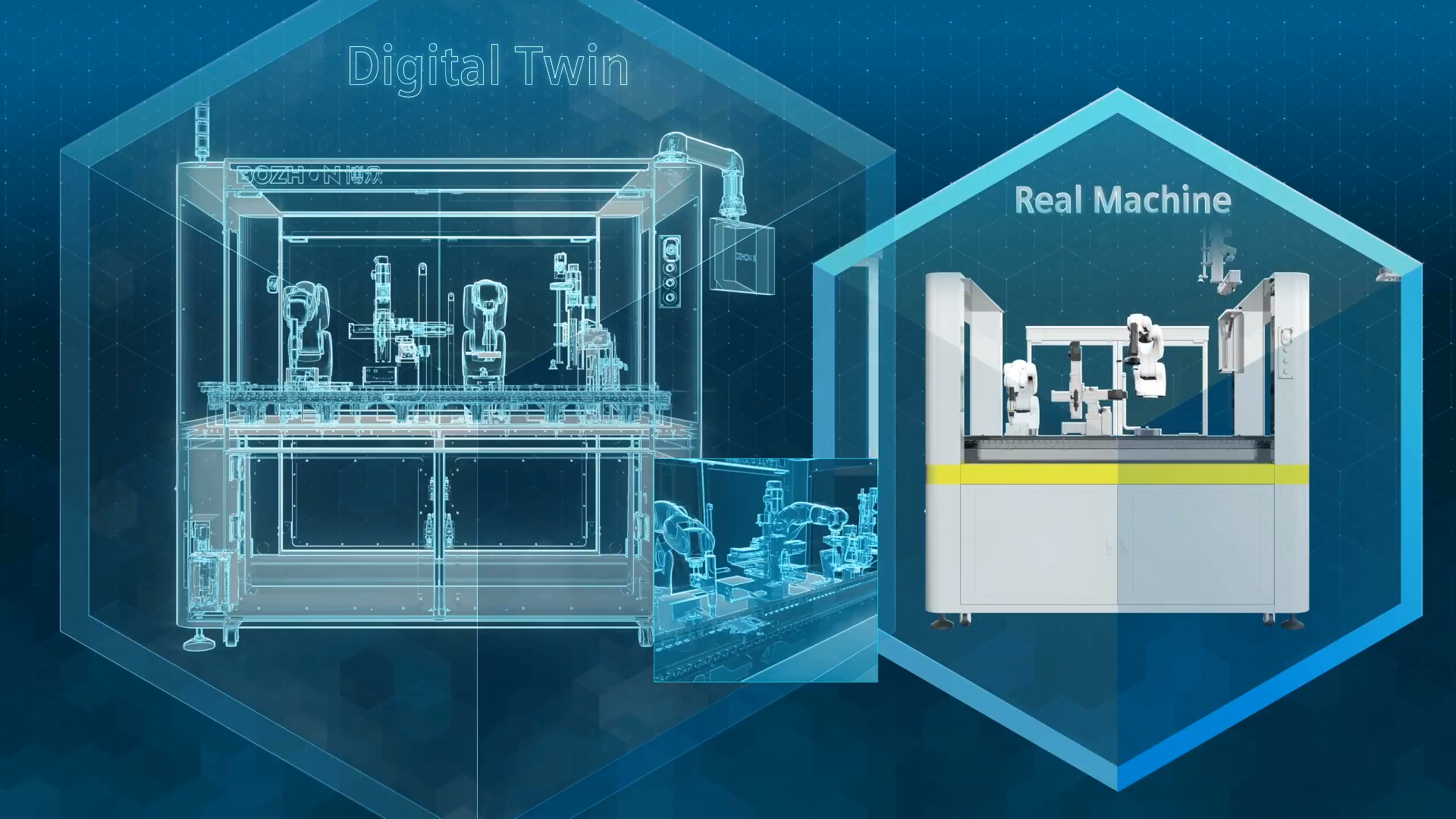

This synchronization is a critical piece of the broader “Digital Twin” war. While Apple’s Memoji remains largely a curated, high-fidelity tool for communication, and Meta’s avatars strive for VR immersion, Snap is winning on contextual relevance. By anchoring the digital self to the physical “fit,” Snap creates a tighter feedback loop between the user’s real-world spending habits and their digital persona.

The Data Harvest: Why Your Shoes Matter to Snap

Let’s strip away the “vibe” and look at the macro-market dynamics. This feature isn’t just about user delight; it is a massive data ingestion engine. Every time the AI identifies a specific pair of sneakers or a brand of hoodie, Snap is essentially building a real-time, global map of fashion trends and consumer behavior.

This creates a powerful API opportunity for third-party developers and brands. Imagine a world where a brand can push a “Digital Twin” version of a limited-edition drop directly to your Bitmoji the moment you purchase the physical item. This is the ultimate platform lock-in: your digital identity becomes a living ledger of your physical acquisitions.

To understand the complexity of this mapping, we can compare the current state of avatar synchronization across the major players:

| Feature | Snap Bitmoji (2026) | Meta Avatars | Apple Memoji |
|---|---|---|---|
| Input Method | Real-time CV / Generative AI | Manual / Template-based | Curated Manual |
| Synchronization | High (Dynamic “Fits”) | Medium (Preset Outfits) | Low (Static Assets) |
| Processing | On-device NPU / Edge | Cloud-Hybrid | On-device (Neural Engine) |
| E-commerce Link | Direct Asset Integration | Storefront Integration | None/Minimal |

The Privacy Paradox of Hyper-Realistic Avatars

As we push toward a world where our avatars are perfect mirrors of our physical selves, the cybersecurity implications become non-trivial. We are moving toward a state of biometric synchronization. If an attacker can intercept the embeddings used to generate your “vibe,” they aren’t just stealing a picture; they are stealing a mathematical representation of your physical appearance and style.

The industry is currently debating the implementation of end-to-end encryption for biometric embeddings. If the “fit” is processed on-device, the risk is mitigated. However, the moment that data is used to suggest a product or sync across devices, it enters the realm of potential vulnerability. We are seeing a rise in “identity spoofing” where generative AI is used to create fake “fits” to bypass visual verification systems.

For those interested in the underlying framework of this technology, exploring the OpenCV library provides a window into how image segmentation and feature detection actually function at the code level. The jump from a library like OpenCV to a consumer-facing feature like Bitmoji sync is largely a matter of scaling and optimization via Snap’s proprietary SDKs.

“The danger isn’t in the avatar itself, but in the metadata attached to it. When your digital twin knows exactly what you’re wearing, it knows where you’ve been, how much you earn, and who you’re trying to impress.”

The “vibe” is a triumph of engineering over aesthetics. By blending generative adversarial networks (GANs) with real-time computer vision, Snap has turned the act of getting dressed into a data point. It is geek-chic at its finest: a seamless user experience powered by a ruthless amount of math.

The 30-Second Verdict

Snapchat’s real-time outfit synchronization is a masterclass in edge AI. By moving from static assets to generative embeddings, they’ve turned the Bitmoji into a functional digital twin. While the user sees a “vibe,” the industry sees a sophisticated pipeline for consumer data and a new frontier in digital identity. The tech is impressive; the privacy implications are a minefield.