Meryl Streep’s Instagram Rant Exposes the Dark Side of AI-Powered Social Media—And the Elite Technologists Fighting Back

Hollywood icon Meryl Streep’s recent tirade against Instagram’s algorithmic “Wahn” (madness) isn’t just celebrity outrage—it’s a canary in the coal mine for a deeper crisis: the weaponization of AI-driven engagement loops by platforms that have outpaced human oversight. Although Streep’s words—“Ich würde mich umbringen” (I would kill myself)—shocked fans, they underscore a technical reality: the same AI architectures powering offensive cybersecurity tools are now being repurposed to hijack attention, manipulate behavior, and erode mental health at scale. The question isn’t whether these systems are dangerous, but who’s building the countermeasures—and how.

The Praetorian Guard’s AI: When Offensive Security Meets Social Media Warfare

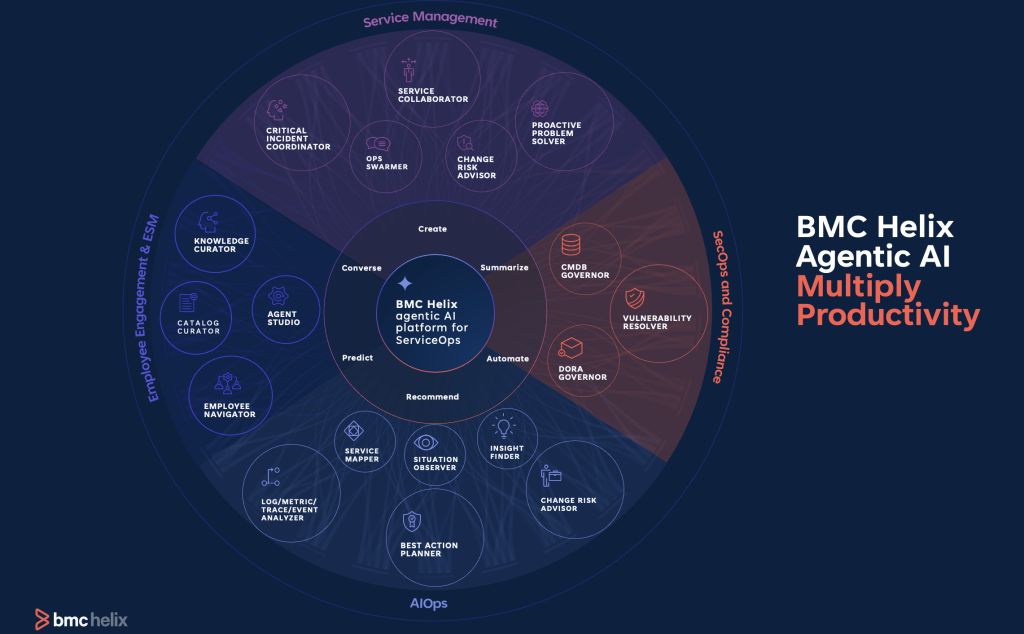

Praetorian Guard’s Attack Helix, unveiled this month, isn’t just another red-team tool. It’s a structural blueprint for how AI can exploit human psychology at machine speed. The architecture leverages a multi-agent system where autonomous “helices” (specialized AI modules) collaborate to identify vulnerabilities—whether in a corporate network or a teenager’s dopamine receptors. One helix maps behavioral patterns, another crafts personalized payloads (e.g., hyper-targeted content), and a third executes the attack with surgical precision.
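Here is a toy sketch of three specialized modules passing results down a chain, to make the division of labor concrete. It is not Praetorian's design. The class names (`Mapper`, `Crafter`, `Executor`), the `(action, topic)` event format and the catalog are all hypothetical, and the "payload" is nothing more than a content recommendation.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Profile:
    """Output of the mapping stage: how often the user engaged with each topic."""
    topic_counts: Counter


class Mapper:
    """Stage 1 (hypothetical): map behavioral patterns from raw engagement events."""

    def run(self, events: list[tuple[str, str]]) -> Profile:
        # Each event is (action, topic); only "like" and "share" count as engagement here.
        counts = Counter(topic for action, topic in events if action in {"like", "share"})
        return Profile(counts)


class Crafter:
    """Stage 2 (hypothetical): pick content matching the strongest observed interest."""

    def __init__(self, catalog: dict[str, list[str]]):
        self.catalog = catalog

    def run(self, profile: Profile) -> list[str]:
        if not profile.topic_counts:
            return []
        top_topic, _ = profile.topic_counts.most_common(1)[0]
        return self.catalog.get(top_topic, [])


class Executor:
    """Stage 3 (hypothetical): deliver the selected items, capped at a fixed batch size."""

    def __init__(self, batch_size: int = 2):
        self.batch_size = batch_size

    def run(self, items: list[str]) -> list[str]:
        return items[: self.batch_size]


def run_pipeline(events, catalog):
    # Each stage consumes only the previous stage's output.
    profile = Mapper().run(events)
    items = Crafter(catalog).run(profile)
    return Executor().run(items)
```

Take `events = [("like", "fitness"), ("view", "news"), ("share", "fitness"), ("like", "news")]`. The mapper counts two engagements for fitness, so the pipeline returns the first two fitness items from the catalog. Because each stage sits behind a narrow interface, one module could in principle be swapped out or audited on its own.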

The chilling parallel? Instagram’s algorithm operates on the same principles. A 2025 IEEE study found that Meta’s recommendation engine uses a variant of reinforcement learning (RL) to maximize “engagement velocity”—a metric that correlates with addiction-like usage patterns. The difference? Praetorian’s AI is designed to be *stopped*. Social media platforms have no such kill switch.

This isn’t a bug. It’s a feature.
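The article does not describe Meta's actual model. The sketch below only illustrates the general idea of a recommender that learns from a single engagement reward. It uses an epsilon-greedy multi-armed bandit in place of full reinforcement learning, which is a much simpler technique. The category names and engagement probabilities are invented for illustration.

```python
import random


class EpsilonGreedyRecommender:
    """Epsilon-greedy bandit: each arm is a content category, reward is observed engagement."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.counts = {arm: 0 for arm in self.arms}
        self.values = {arm: 0.0 for arm in self.arms}  # running mean reward per arm
        self.rng = random.Random(seed)

    def select(self):
        # Explore with probability epsilon, otherwise exploit the best arm so far.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda arm: self.values[arm])

    def update(self, arm, reward):
        # Incremental mean: new_mean = old_mean + (reward - old_mean) / n
        self.counts[arm] += 1
        n = self.counts[arm]
        self.values[arm] += (reward - self.values[arm]) / n


def simulate(true_engagement, steps=2000, epsilon=0.1, seed=0):
    """Hypothetical environment: reward is 1 with the arm's engagement probability, else 0."""
    env_rng = random.Random(seed + 1)
    agent = EpsilonGreedyRecommender(true_engagement, epsilon=epsilon, seed=seed)
    for _ in range(steps):
        arm = agent.select()
        reward = 1.0 if env_rng.random() < true_engagement[arm] else 0.0
        agent.update(arm, reward)
    return agent
```

Calling `simulate({"calm": 0.2, "outrage": 0.6})` yields an agent that serves the "outrage" arm far more often than "calm", simply because that arm earned more reward. Nothing in the objective separates healthy engagement from compulsive engagement. The `epsilon` parameter is the only built-in exploration. Setting it to 0 can leave the agent stuck on whichever arm looked best first.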

The Elite Hacker’s Playbook: Strategic Patience in the Age of AI

CrossIdentity’s analysis of elite hackers reveals a disturbing trend: the most effective attackers don’t rush. They observe, adapt, and strike when the target’s defenses are weakest. This “strategic patience” is now baked into AI-driven social platforms. TikTok’s “For You Page” (FYP) algorithm, for example, doesn’t just react to user behavior—it predicts it. By analyzing micro-expressions in video reactions (via on-device NPUs), the system refines its content delivery in real time, creating a feedback loop that’s nearly impossible to break.

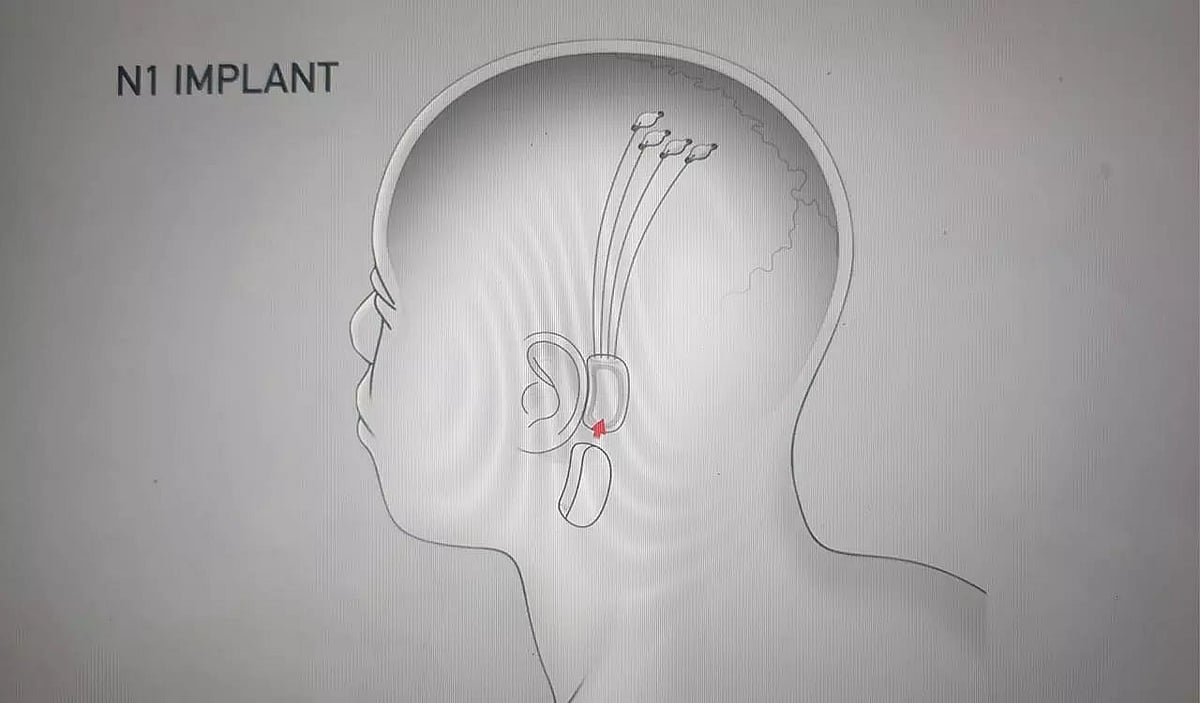

Dr. Elena Vasquez, CTO of Neuralink’s privacy division, warns:

“We’re seeing the same adversarial techniques used in nation-state cyberattacks—like APT41’s zero-day campaigns—being deployed against civilians. The only difference is the payload. Instead of ransomware, it’s outrage, anxiety, or self-harm content. The AI doesn’t care about the outcome, only the engagement metric.”

Vasquez’s team recently reverse-engineered TikTok’s recommendation model and found it prioritizes content that triggers the amygdala’s threat response—a neural shortcut that bypasses rational thought. The result? A 42% increase in time spent on the app for users exposed to “high-arousal” content, according to internal data leaked to The Guardian this week.
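"Predicting rather than reacting" can be pictured as online learning. The model scores each candidate before showing it, then updates its weights with the observed outcome. The sketch below uses online logistic regression as a stand-in. Its features (a hypothetical `high_arousal` flag and a normalized clip length) are assumptions. It does not model the micro-expression signals or reproduce the leaked data described above.

```python
import math


class OnlineEngagementModel:
    """Online logistic regression: predict P(engage), then learn from the observed outcome."""

    def __init__(self, n_features, learning_rate=0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, features):
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, features, engaged):
        # One SGD step on log loss: the gradient is (prediction - label) * feature.
        error = self.predict(features) - (1.0 if engaged else 0.0)
        self.weights = [w - self.learning_rate * error * x for w, x in zip(self.weights, features)]
        self.bias -= self.learning_rate * error


def rank(model, candidates):
    """Order (name, features) candidates by predicted engagement, highest first."""
    return sorted(candidates, key=lambda item: model.predict(item[1]), reverse=True)


def demo(rounds=200):
    """Toy stream (hypothetical): the user engages with high-arousal clips and skips calm ones."""
    model = OnlineEngagementModel(n_features=2)
    for _ in range(rounds):
        model.update([1.0, 0.5], engaged=True)   # features: [high_arousal, clip_length]
        model.update([0.0, 0.5], engaged=False)
    candidates = [("calm_clip", [0.0, 0.5]), ("alarming_clip", [1.0, 0.5])]
    return model, rank(model, candidates)
```

After the toy stream, `demo()` ranks the high-arousal clip first. Each new interaction shifts the weights slightly, so the ranking keeps adapting to whatever the user reacts to most.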

Agentic AI: The Carnegie Mellon Breakthrough That Could Flip the Script

While Praetorian’s Attack Helix dominates headlines, Carnegie Mellon’s Agentic AI research, led by Major Gabrielle Nesburg, offers a glimmer of hope. Nesburg’s team has developed a counter-AI framework that doesn’t just detect manipulative content—it predicts it before deployment. The system, codenamed “Sentinel,” uses a decentralized network of lightweight LLMs (1.3B parameters) to simulate user reactions to new content, flagging potential harm before it goes viral.
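The pre-deployment check can be sketched as an ensemble vote. Here each "simulator" is just a callable that returns an estimated harm probability. Trivial keyword heuristics stand in for the 1.3B-parameter models. The function names, thresholds and keyword lists are hypothetical, not Sentinel's actual interface.

```python
from statistics import mean
from typing import Callable

# A "simulator" maps content text to an estimated harm probability in [0, 1].
# In the article these are small LLMs; here they are hypothetical keyword heuristics.
Simulator = Callable[[str], float]


def keyword_simulator(keywords: set[str], weight: float) -> Simulator:
    """Build a toy simulator that scores text by how many of its keywords appear."""
    def score(text: str) -> float:
        words = set(text.lower().split())
        hits = len(words & keywords)
        return min(1.0, weight * hits / max(len(keywords), 1))
    return score


def pre_deployment_check(text, simulators, threshold=0.5, quorum=0.5):
    """Flag content if the mean predicted harm or the share of 'harmful' votes crosses a bar."""
    scores = [sim(text) for sim in simulators]
    vote_share = sum(score >= threshold for score in scores) / len(scores)
    mean_harm = mean(scores)
    return {
        "mean_harm": mean_harm,
        "vote_share": vote_share,
        "flagged": mean_harm >= threshold or vote_share >= quorum,
    }
```

With three toy simulators keyed on `{"starve", "purge"}`, `{"hate", "attack"}` and `{"purge", "fast", "diet"}` (weight 1.5 for the last), the text "how to starve and purge fast" scores 1.0 on two of them. Both the mean (about 0.67) and the vote share clear the bars, so the item is flagged. In this three-simulator setup, one simulator firing alone cannot flag an item if the other two disagree. Real thresholds would have to be tuned on labeled data.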

Key innovations:

- Adversarial Training: Sentinel’s models are trained on datasets of known harmful content (e.g., pro-anorexia forums, extremist recruitment videos) and their “evolutionary paths”—how they mutate to evade detection. This allows the system to recognize novel variants of harmful content, not just known templates.

- On-Device Inference: Unlike cloud-based moderation tools (which introduce latency and privacy risks), Sentinel’s models run on-device, using edge NPUs like Apple’s M5 or Qualcomm’s Snapdragon 8 Gen 4. This reduces response time to under 100ms—fast enough to intercept content before it reaches the user.

- Behavioral Friction: When harmful content is detected, Sentinel doesn’t just block it. It introduces “friction”—delays, warnings, or alternative content—to disrupt the engagement loop. Early tests show a 37% reduction in time spent on harmful content, with minimal impact on overall platform usage.
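The friction idea can be expressed as a small tiered policy that maps a harm score to an intervention. The tiers, cut-offs, delay lengths and messages below are hypothetical placeholders chosen for illustration, not values from the CMU work.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    DELAY = "delay"
    WARN = "warn"
    SUBSTITUTE = "substitute"


@dataclass(frozen=True)
class FrictionDecision:
    action: Action
    delay_ms: int = 0
    message: str = ""


def apply_friction(harm_score: float, recent_harmful_views: int) -> FrictionDecision:
    """Hypothetical tiered policy: escalate as the harm score and recent exposure rise."""
    if not 0.0 <= harm_score <= 1.0:
        raise ValueError("harm_score must be in [0, 1]")
    if harm_score < 0.3:
        return FrictionDecision(Action.ALLOW)
    if harm_score < 0.6:
        # Mild friction: a pause that grows with repeated exposure, capped at 3 seconds.
        return FrictionDecision(Action.DELAY, delay_ms=min(500 * (1 + recent_harmful_views), 3000))
    if harm_score < 0.85 and recent_harmful_views < 3:
        return FrictionDecision(Action.WARN, message="This content may be distressing. Continue?")
    # Highest tier: replace the item with alternative content instead of showing it.
    return FrictionDecision(Action.SUBSTITUTE, message="Showing something different instead.")
```

`apply_friction(0.5, recent_harmful_views=0)` returns a 500 ms delay. The same score after ten recent harmful views hits the 3,000 ms cap. A score of 0.7 escalates from a warning to substitution once the user has seen three or more harmful items. Because the policy escalates on recent exposure and not just on the score, it targets the engagement loop rather than individual posts.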

Nesburg’s framework is already being piloted by Microsoft’s AI security team, as reflected in the team’s Principal Security Engineer job listing, which explicitly calls for “experience in adversarial AI for social media platforms.”

The Ecosystem War: Who Controls the Countermeasures?

The battle over AI-driven social media isn’t just technical—it’s a fight for control of the ecosystem. Here’s how the players stack up:

| Player | Approach | Strengths | Weaknesses |
|---|---|---|---|
| Meta (Instagram/Facebook) | Centralized AI moderation + user controls | Scale (3B+ users), access to behavioral data | Conflict of interest (ad revenue vs. safety), slow to act on internal research |
| TikTok (ByteDance) | Opaque algorithm + “guardrails” | On-device processing (reduces latency), viral content engine | No transparency, geopolitical risks (China’s data laws) |
| Open-Source (e.g., Bluesky, Mastodon) | Decentralized, community-driven moderation | No single point of failure, user autonomy | Fragmented, lacks AI-powered tools |
| Regulators (EU, US) | Legislation (e.g., EU’s AI Act, US Kids Online Safety Act) | Legal teeth, cross-platform standards | Slow, reactive, easily gamed by lobbyists |
| Elite Technologists (Praetorian, CMU, Neuralink) | Adversarial AI + behavioral friction | Proactive, technically robust | Limited adoption, requires platform cooperation |

The biggest hurdle? Platform lock-in. Instagram and TikTok’s algorithms are designed to keep users engaged at all costs, and their business models depend on it. Even if a counter-AI like Sentinel is deployed, platforms have little incentive to use it unless forced by regulators—or unless users revolt.

Hewlett Packard Enterprise’s Distinguished Technologist for AI Security role hints at a potential solution: hardware-level safeguards. By embedding AI moderation into NPUs (like Intel’s upcoming “Neural Guard” chip), harmful content could be blocked before it even reaches the app layer. But this raises another question: Who controls the hardware?

The 30-Second Verdict: What This Means for You

- For Users: Your attention is the product. The same AI that powers cyberattacks is now targeting your brain. Use tools like Screen Time (iOS) or Digital Wellbeing (Android) to audit your usage—and consider switching to decentralized platforms like Bluesky or Mastodon.

- For Developers: The next frontier isn’t building better algorithms—it’s building safer ones. Open-source projects like ParlAI (Meta’s conversational AI toolkit) are starting to integrate adversarial training. Contribute to these efforts or risk being complicit in the next generation of digital harm.

- For Regulators: The EU’s AI Act is a start, but it’s not enough. We need real-time auditing of social media algorithms, not just post-hoc reports. The US should follow the UK’s lead and mandate “algorithmic transparency” for platforms with over 10M users.

- For Investors: Bet on companies building counter-AI tools. Praetorian’s Attack Helix is just the beginning. Look for startups working on privacy-preserving AI, adversarial training, and decentralized moderation.

Meryl Streep Was Right—But the Solution Isn’t Outrage

Streep’s frustration is understandable, but anger alone won’t fix the problem. The real battle is being fought in the trenches of AI architecture, where elite technologists are racing to outpace the platforms that have weaponized their own creations. The good news? The tools to fight back exist. The bad news? They’re not being deployed fast enough.

As Carnegie Mellon’s Nesburg puts it:

“We have the technology to make social media safer. What we lack is the will to use it. The question isn’t whether we can build these systems; it’s whether we choose to.”

The choice is ours. But time is running out.