As of May 2026, **Meta Platforms (NASDAQ: META)** is facing regulatory scrutiny in the European Union for deploying AI-driven employee surveillance tools. The move tests the EU AI Act’s “high-risk” classification for workplace AI and could expose the company to significant fines and labor disputes across its European workforce.

This is more than a conflict between management and staff; it is a high-stakes stress test for the global AI regulatory framework. While Mark Zuckerberg views algorithmic monitoring as a tool to optimize a “high-talent” workforce, the European Commission views it as a potential violation of fundamental labor rights. For the market, the concern is not merely a one-time fine but the systemic risk to Meta’s talent acquisition pipeline and its operational freedom within the EU.

The Bottom Line

- Regulatory Exposure: Meta faces potential penalties of up to 7% of its global annual turnover under the EU AI Act if its tools are deemed “prohibited” AI practices; breaches of the obligations for high-risk systems carry fines of up to 3%.

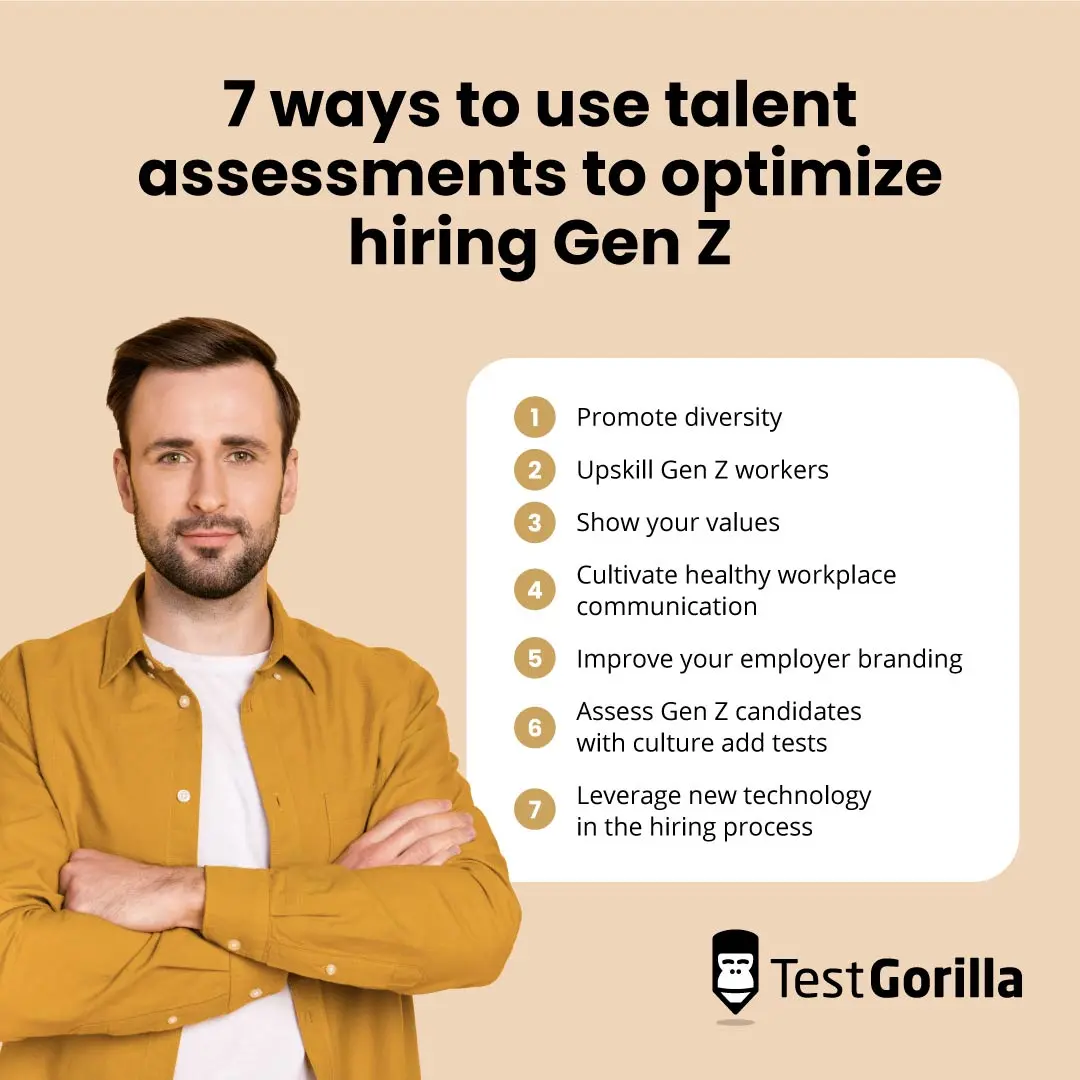

- Talent Attrition: Internal friction, particularly among Gen-Z engineers, threatens the R&D velocity required to maintain Llama’s competitive edge against Alphabet (NASDAQ: GOOGL).

- Operational Risk: The shift toward “algorithmic management” sets a precedent that may trigger broader labor-union interventions across the EU.

The Collision of Algorithmic Management and EU Law

The core of the dispute lies in the EU AI Act’s classification of AI systems used in employment, worker management, and access to self-employment as “high-risk” (Annex III of the Act). Under these rules, systems that monitor worker behavior or evaluate performance must meet strict transparency, human-oversight, and data-governance standards, and employers deploying them must inform affected workers and their representatives beforehand. Meta’s current tests, which track granular activity patterns to “optimize” productivity, appear to bypass these safeguards.
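To make the three safeguards concrete, here is a simplified, hypothetical pre-deployment checklist in Python. The class `WorkplaceAIDeployment`, its fields, and the function `missing_safeguards` are illustrative names, not part of the AI Act or any Meta system, and the check reduces the Act’s obligations to three yes/no flags (transparency, human oversight, data governance). It is a sketch of “compliance by design”, not a complete reading of the regulation.

```python
from dataclasses import dataclass


@dataclass
class WorkplaceAIDeployment:
    """Hypothetical record describing a workplace AI system before roll-out."""
    name: str
    monitors_or_evaluates_workers: bool  # simplified stand-in for the Annex III employment trigger
    human_oversight_assigned: bool       # a named person can review and override outputs
    workers_informed: bool               # workers and their representatives notified in advance
    data_governance_documented: bool     # data sources and quality checks are documented


def missing_safeguards(d: WorkplaceAIDeployment) -> list[str]:
    """Return the safeguards still missing; an empty list means none were flagged."""
    if not d.monitors_or_evaluates_workers:
        # Outside the employment high-risk category in this simplified model.
        return []
    checks = {
        "transparency": d.workers_informed,
        "human oversight": d.human_oversight_assigned,
        "data governance": d.data_governance_documented,
    }
    # Keep the names of every check that failed, in the order listed above.
    return [name for name, ok in checks.items() if not ok]


tracker = WorkplaceAIDeployment(
    name="activity tracker",
    monitors_or_evaluates_workers=True,
    human_oversight_assigned=False,
    workers_informed=False,
    data_governance_documented=True,
)
print(missing_safeguards(tracker))  # ['transparency', 'human oversight']
```

A granular activity tracker rolled out without worker notification or an accountable reviewer fails two of the three checks, which is the kind of gap the paragraph above describes.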

But Meta’s recent cost strategy explains its motivation. After the “Year of Efficiency” in 2023, the company has shifted from broad headcount reductions to a philosophy of hyper-optimization. By using AI to identify “underperformers” or “bottlenecks” in real time, Meta aims to squeeze higher output from a leaner workforce. However, the European Data Protection Board (EDPB) has historically maintained that “constant monitoring” is disproportionate and violates the GDPR’s principle of data minimization.

Here is the math on the potential liability. On an annual revenue base of roughly $160 billion, a maximum fine for a prohibited practice (7%) could theoretically reach $11.2 billion; if the tools are instead treated as non-compliant high-risk systems, the 3% cap implies roughly $4.8 billion. While Meta maintains a massive cash reserve, the recurring nature of these regulatory battles creates a persistent drag on the stock’s multiple.
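The arithmetic can be sketched in a few lines of Python. The sketch assumes the fine tiers of Article 99 of the AI Act (a fixed cap or a share of worldwide annual turnover, whichever is higher for large undertakings) and reuses the article’s illustrative $160 billion revenue base. The tier labels and the `max_fine` helper are hypothetical names, and the sketch ignores the EUR/USD conversion of the fixed caps, since at Meta’s scale the percentage cap dominates either way.

```python
# Fine tiers under Article 99 of the EU AI Act: (fixed cap, share of turnover).
# Simplification: fixed caps are treated as if they were in the same currency as turnover.
AI_ACT_TIERS = {
    "prohibited_practice": (35_000_000, 0.07),
    "operator_obligations": (15_000_000, 0.03),   # includes high-risk and transparency duties
    "incorrect_information": (7_500_000, 0.01),
}


def max_fine(turnover: float, tier: str) -> float:
    """Upper bound of the fine for a large undertaking: whichever amount is higher."""
    fixed_cap, share = AI_ACT_TIERS[tier]
    return max(fixed_cap, share * turnover)


revenue = 160e9  # illustrative revenue base used in the article
for tier in AI_ACT_TIERS:
    print(f"{tier}: ${max_fine(revenue, tier) / 1e9:.1f}B")
# prohibited_practice: $11.2B
# operator_obligations: $4.8B
# incorrect_information: $1.6B
```

Taking the higher of the two amounts is what makes the percentage cap, not the fixed euro figure, the relevant number for a company of Meta’s size.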

The Gen-Z Talent Moat is Leaking

While C-suite executives such as CTO Andrew Bosworth have suggested that employees may be “shocked” but must comply, the labor market dynamics of 2026 point to a different outcome. Gen-Z workers, who now make up a growing share of the AI engineering workforce, prioritize autonomy and psychological safety over traditional corporate loyalty. Reports indicate that Meta’s surveillance experiments are driving talent toward leaner, more flexible competitors or stealth-stage startups.

This attrition is a strategic liability. In the race toward artificial general intelligence (AGI), the scarcest asset is not GPU clusters but the specialized engineers who can optimize them. If Meta becomes known as the “digital panopticon” of Big Tech, its talent-acquisition costs will rise, forcing higher compensation packages to offset the perceived loss of autonomy.

“The transition from human-led management to algorithmic oversight is a precarious pivot. When high-skill workers feel they are being managed by a black-box metric rather than a mentor, the institutional knowledge begins to evaporate.” — Dr. Elena Rossi, Senior Fellow at the European Labor Institute.

Quantifying the Regulatory Risk Landscape

To understand the scale of the threat, consider how the EU AI Act tiers these risks and the penalties attached to each tier. Meta is not operating in a vacuum; its peers are watching closely to see whether the EU grants “innovation exemptions” or enforces the letter of the law.

| AI Risk Category | Application in Workplace | Maximum Penalty (share of global turnover or fixed cap, whichever is higher) | Compliance Requirement |
|---|---|---|---|
| Unacceptable Risk | Biometric categorization / Social scoring | 7% or €35 million | Total Ban |
| High Risk | Recruitment / Performance Monitoring | 3% or €15 million | Strict Audit & Human Oversight |
| Limited Risk | Chatbots / Generative AI (General) | 3% or €15 million (transparency breaches) | Transparency Disclosure |
| Minimal Risk | Spam filters / AI-enabled scheduling | N/A | No specific obligations |

Market-Bridging: The Ripple Effect on Big Tech

If the EU rules against Meta, the implications would extend immediately to Microsoft (NASDAQ: MSFT) and Amazon (NASDAQ: AMZN). Amazon, in particular, has a long history of algorithmic management in its fulfillment centers. A victory for EU labor regulators in the Meta case would provide a legal blueprint for dismantling automated firing and monitoring systems across the logistics and tech sectors.

This regulatory friction also affects Meta’s forward guidance on AI integration. As the company embeds AI more deeply into its business operations, the need for “compliance by design” will raise costs. We are seeing a trend in which regulatory compliance is becoming a significant cost in the SEC filings of major tech firms, often reported under “general and administrative expenses.”

But is this a deal-breaker for investors? Not necessarily. The market has largely priced in “the cost of doing business in Europe.” However, the risk profile changes when the conflict moves from “privacy” (which is abstract) to “labor rights” (which is political). A coordinated strike or a mass exodus of EU-based engineers would be a tangible hit to operational capacity.

The Trajectory: From Optimization to Litigation

Looking ahead to the close of the current fiscal year, Meta’s strategy appears to be one of “calculated defiance.” By deploying these tools and waiting for a regulatory challenge, Meta is attempting to define the boundaries of the EU AI Act through litigation rather than compliance. This is a classic Silicon Valley play, echoing Facebook’s old motto: move fast, break things, and settle the fines later.

However, the environment has changed since 2012. The EU’s appetite for “Big Tech” settlements has diminished, replaced by a desire for structural behavioral changes. If Meta cannot reconcile its internal culture of surveillance with the legal requirements of the European market, it may be forced to bifurcate its management practices—running a “surveillance-light” model in Europe and a “hyper-optimized” model in the US.

For the institutional investor, the metric to watch is not today’s stock price but the employee retention rate within the FAIR (Fundamental AI Research) team. If the brain drain accelerates, the “efficiency” gained from AI surveillance will be outweighed by the loss of intellectual capital.
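Retention rate has no single official definition. The sketch below uses one common formula, (end headcount − new hires still present at the end) ÷ start headcount, and the `retention_rate` function and the team headcounts in the usage line are hypothetical, chosen only to show the calculation. It is not based on any figures Meta has reported for FAIR.

```python
def retention_rate(start_headcount: int, end_headcount: int, new_hires_remaining: int) -> float:
    """Share of employees present at the start of a period who are still there at the end.

    Assumes new_hires_remaining counts only people who joined during the period
    and are still employed at its end, so they can be excluded from end_headcount.
    """
    if start_headcount <= 0:
        raise ValueError("start_headcount must be positive")
    retained = end_headcount - new_hires_remaining
    if not 0 <= retained <= start_headcount:
        raise ValueError("headcounts are inconsistent")
    return retained / start_headcount


# Hypothetical team: 200 people at the start, 190 at the end, 20 of whom joined mid-period.
print(f"{retention_rate(200, 190, 20):.0%}")  # 85%
```

Excluding new hires matters here: a team can hold its headcount flat through aggressive hiring while still losing a large share of its original researchers, which is exactly the brain drain the metric is meant to expose.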

For further analysis on tech regulation, refer to recent updates from Reuters Technology and Bloomberg Technology.

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial advice.