Microsoft’s Q3 Surge: Azure, AI and the Quiet Consolidation of Power

Microsoft’s latest earnings report, released this week, shows a 40% revenue increase in Azure and other cloud services, signaling continued strength in the cloud infrastructure market. This growth isn’t simply about more servers; it reflects Microsoft’s aggressive integration of AI across its entire stack, from foundational models to developer tools, and a strategic positioning that is subtly reshaping the competitive landscape. The More Personal Computing segment also contributed $13.2 billion, demonstrating the continued strength of Windows and Surface devices, but the cloud and AI narrative is the driving force.

The headline number of 40% growth is impressive, but it obscures a more nuanced reality. Azure’s growth, while substantial, is slowing slightly from previous quarters. However, the key isn’t just the growth rate but *where* that growth is coming from. Microsoft is successfully monetizing its AI services, particularly through Azure OpenAI Service, and is attracting enterprise customers seeking a fully managed AI platform. This is a critical differentiator against competitors like AWS, which often require more hands-on management of AI infrastructure.

The NPU Advantage: Why Microsoft is Winning the AI Inference Game

Much of Azure’s AI prowess stems from Microsoft’s early and aggressive investment in Neural Processing Units (NPUs). While GPUs remain the workhorse for AI training, NPUs are rapidly becoming essential for efficient AI *inference* – the process of actually using a trained model to produce predictions. Microsoft’s custom-designed NPUs, integrated into its Azure infrastructure and increasingly into its Surface devices, offer a significant performance-per-watt advantage over traditional CPUs and even some GPUs for specific AI workloads. This translates to lower operating costs for Microsoft and faster response times for its customers. The company isn’t publishing detailed NPU specs, but independent benchmarks suggest their architecture prioritizes INT8 and FP16 precision, ideal for many real-world AI applications. AnandTech’s recent analysis of the Snapdragon X Elite, which shares architectural similarities with Microsoft’s NPU designs, highlights the potential for substantial performance gains in AI-accelerated tasks.

This isn’t just about hardware. Microsoft’s software stack – particularly its integration of the ONNX Runtime – is crucial. ONNX (Open Neural Network Exchange) allows developers to train models in one framework (like PyTorch or TensorFlow) and deploy them on a variety of hardware platforms, including those with NPUs. Microsoft’s optimized ONNX Runtime for Azure ensures that these models run efficiently on its infrastructure.

Beyond the Cloud: Microsoft’s Ecosystem Lock-In Strategy

The success of Azure and its AI services isn’t happening in a vacuum. It’s deeply intertwined with Microsoft’s broader ecosystem strategy. By tightly integrating AI into its Office 365 suite, Windows operating system, and developer tools (like Visual Studio and GitHub), Microsoft is creating powerful incentives for customers to stay within its walled garden. This is a classic example of platform lock-in, but it’s being executed with a level of sophistication that’s proving highly effective.

Consider Copilot, Microsoft’s AI assistant. It’s not just a standalone product; it’s deeply embedded in Word, Excel, PowerPoint, and Outlook. Users who become reliant on Copilot for their daily tasks are less likely to switch to competing productivity suites, even if those suites offer comparable features. This raises switching costs and feeds a network effect, where the value of the Microsoft ecosystem increases as more users join it.

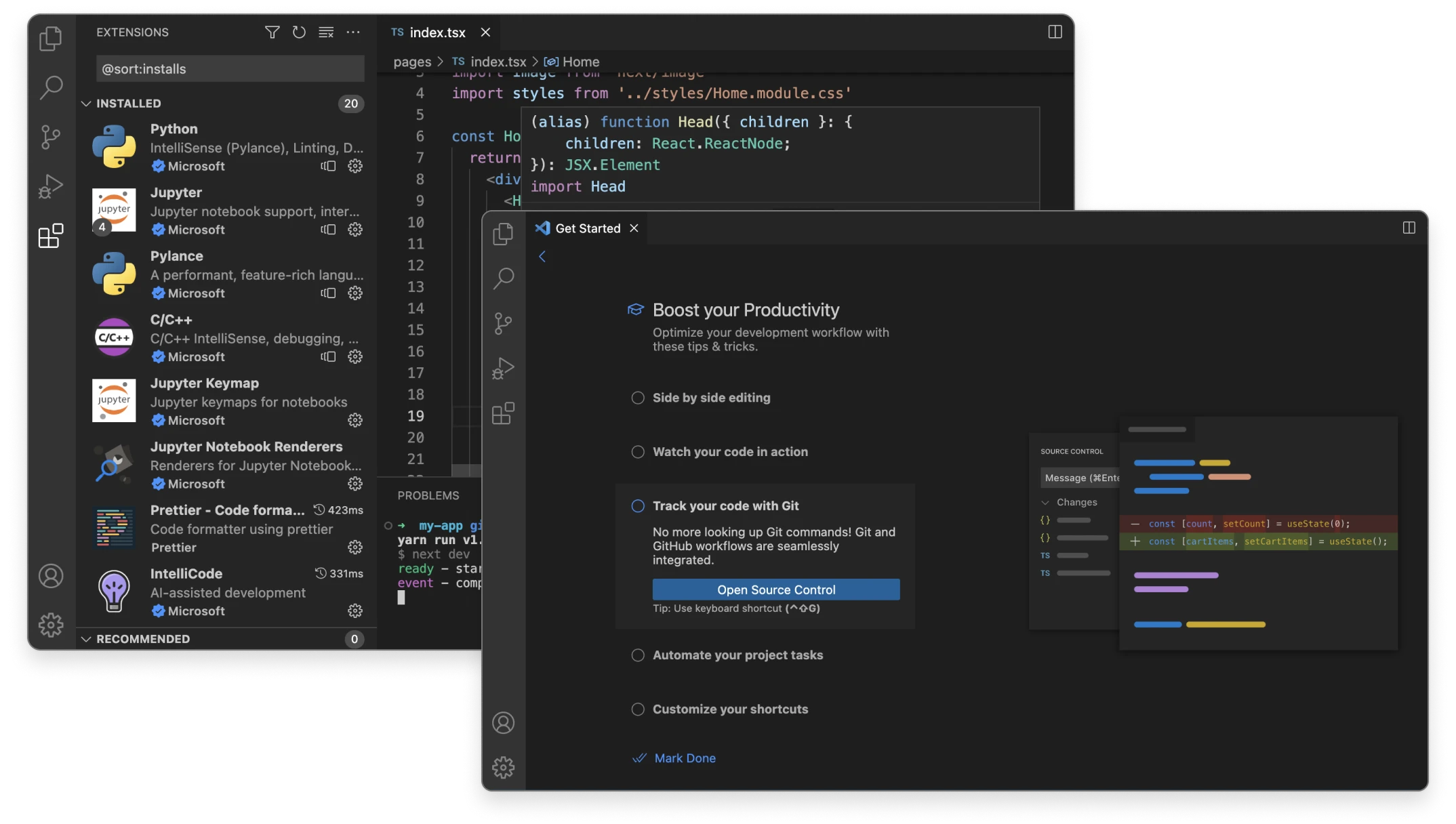

The Developer Angle: GitHub and the Rise of AI-Powered Coding

GitHub, acquired by Microsoft in 2018, is playing a critical role in this strategy. GitHub Copilot, an AI-powered code completion tool, is rapidly becoming indispensable for many developers. It leverages OpenAI’s Codex model to suggest code snippets, entire functions, and even complete programs. This not only increases developer productivity but also subtly steers developers toward Microsoft’s tools and platforms. The API for Copilot is becoming increasingly open, allowing developers to integrate it into their own IDEs and workflows, further expanding its reach. GitHub’s official Copilot page details the API capabilities and integration options.

“The integration of AI into the developer workflow is no longer a ‘nice-to-have’ – it’s a fundamental requirement. Microsoft is uniquely positioned to capitalize on this trend, given its control over both the infrastructure (Azure) and the tools (GitHub, Visual Studio).”

– Dr. Anya Sharma, CTO, Stellar Dynamics

The Cybersecurity Implications: AI as Both Threat and Defense

The rise of AI also presents significant cybersecurity challenges. AI-powered tools can be used to automate attacks, create more sophisticated phishing campaigns, and even bypass traditional security defenses. However, AI can also be used to enhance cybersecurity, by detecting anomalies, identifying malware, and automating incident response. Microsoft is investing heavily in both areas.

Microsoft Sentinel (formerly Azure Sentinel), Microsoft’s cloud-native SIEM (Security Information and Event Management) platform, is increasingly leveraging AI to detect and respond to threats. It uses machine learning algorithms to identify suspicious patterns and prioritize alerts, reducing the burden on security analysts. However, the effectiveness of these AI-powered defenses depends on the quality of the training data and the ability to adapt to evolving threats. The ongoing “AI arms race” in cybersecurity will likely see attackers and defenders constantly trying to outsmart each other.
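To make the idea of "identifying suspicious patterns and prioritizing alerts" concrete, here is a deliberately simple sketch that scores events by how far they deviate from a baseline (a z-score) and ranks the outliers. It is not how Microsoft's SIEM works internally; the function names, host names, and the threshold of 3.0 are hypothetical choices made for illustration.

```python
from statistics import mean, pstdev


def z_score(value, baseline):
    """Return how many standard deviations `value` lies from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A flat baseline: any deviation at all is treated as maximally anomalous.
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma


def prioritize_alerts(events, baseline, threshold=3.0):
    """Keep events whose score exceeds `threshold`, most anomalous first.

    `events` is a list of (name, value) pairs, e.g. failed logins per hour.
    The threshold of 3.0 is an assumed, illustrative cutoff.
    """
    scored = [(name, z_score(value, baseline)) for name, value in events]
    flagged = [(name, score) for name, score in scored if score > threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical baseline: failed logins per hour during normal operation.
    normal_hours = [10, 12, 11, 9, 10, 11, 12, 10]
    todays_events = [("host-a", 11), ("host-b", 40), ("host-c", 25)]
    for name, score in prioritize_alerts(todays_events, normal_hours):
        print(f"{name}: score {score:.1f}")
```

Production systems rely on far richer features and learned models, but the dependence on a representative baseline is the same, which is why the quality of the training data matters so much.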

The potential for AI-generated deepfakes and disinformation campaigns is also a growing concern. Microsoft is working on technologies to detect and mitigate these threats, but it’s a challenging problem with no easy solutions. Microsoft’s Security AI page outlines their approach to leveraging AI for cybersecurity.

API Pricing and LLM Parameter Scaling: A Competitive Look

Microsoft’s Azure OpenAI Service offers access to a range of large language models (LLMs), including GPT-4 and various open-source models. The pricing structure is complex, based on the number of tokens processed (both input and output). Here’s a simplified comparison (as of late April 2026):

| Model | Input price (USD per 1K tokens) | Output price (USD per 1K tokens) |
|---|---|---|
| GPT-4 Turbo | $0.010 | $0.030 |
| GPT-3.5 Turbo | $0.0005 | $0.0015 |
| Llama 3 8B | $0.008 | $0.020 |
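Because billing is per token on both input and output, the cost of a request is a two-term sum. The sketch below works through that arithmetic using the rates from the table; the `TokenPrice` class, the `estimate_cost` helper, and the example token counts are hypothetical, and the rates should be treated as illustrative rather than as a current price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    input_per_1k: float   # USD per 1,000 input tokens
    output_per_1k: float  # USD per 1,000 output tokens


# Rates copied from the comparison table; illustrative only.
PRICES = {
    "GPT-4 Turbo": TokenPrice(0.010, 0.030),
    "GPT-3.5 Turbo": TokenPrice(0.0005, 0.0015),
    "Llama 3 8B": TokenPrice(0.008, 0.020),
}


def estimate_cost(model, input_tokens, output_tokens):
    """Estimate the USD cost of one request from its token counts."""
    price = PRICES[model]
    input_cost = (input_tokens / 1000) * price.input_per_1k
    output_cost = (output_tokens / 1000) * price.output_per_1k
    return input_cost + output_cost


if __name__ == "__main__":
    # Assumed workload: a 2,000-token prompt with a 500-token completion.
    for model in PRICES:
        print(f"{model}: ${estimate_cost(model, 2000, 500):.5f}")
```

At these rates, output tokens cost two and a half to three times as much as input tokens, so workloads that generate long completions are affected by model choice more than prompt-heavy ones.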

The trend toward ever-larger parameter counts continues, but Microsoft is also focusing on model efficiency. It has demonstrated significant improvements in inference speed and cost using techniques like quantization (storing weights at lower numeric precision) and pruning (removing weights that contribute little). This allows it to offer competitive pricing while maintaining high performance. The availability of open-source models like Llama 3 on Azure also gives customers more flexibility and control.
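As a rough illustration of the two techniques named above, the following sketch applies symmetric INT8 quantization and magnitude pruning to a plain list of weights. It is a textbook-style toy rather than Microsoft's implementation; the function names and sample values are hypothetical, and real toolchains operate on tensors with per-channel scales and calibration data.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127].

    Returns the integer values and the scale needed to recover approximate floats.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from their INT8 representation."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, threshold):
    """Magnitude pruning: zero out weights whose absolute value is below `threshold`."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]


if __name__ == "__main__":
    weights = [0.82, -1.0, 0.031, -0.47, 0.004]  # hypothetical layer weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("worst-case rounding error:", worst)
    print("pruned:", prune_by_magnitude(weights, 0.05))
```

Each weight now fits in one byte instead of four, at the price of a rounding error bounded by half the scale. Pruning trades accuracy for sparsity in a similar way, and both reduce the memory traffic that dominates inference cost.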

“Microsoft’s strategy isn’t just about building the biggest models; it’s about building the *most useful* models, and making them accessible to a wide range of developers and enterprises. The focus on NPU acceleration and optimized inference is a key differentiator.”

– Ben Carter, Lead AI Engineer, DataForge Solutions

Microsoft’s Q3 results are a clear indication that its cloud and AI strategy is working. The company is not only growing its revenue but also solidifying its position as a leader in the next wave of computing. The key takeaway for enterprise IT is that ignoring Microsoft’s AI offerings is no longer an option. The integration of AI into core business processes is inevitable, and Microsoft is making it increasingly easy – and compelling – to adopt its platform. The quiet consolidation of power is well underway.