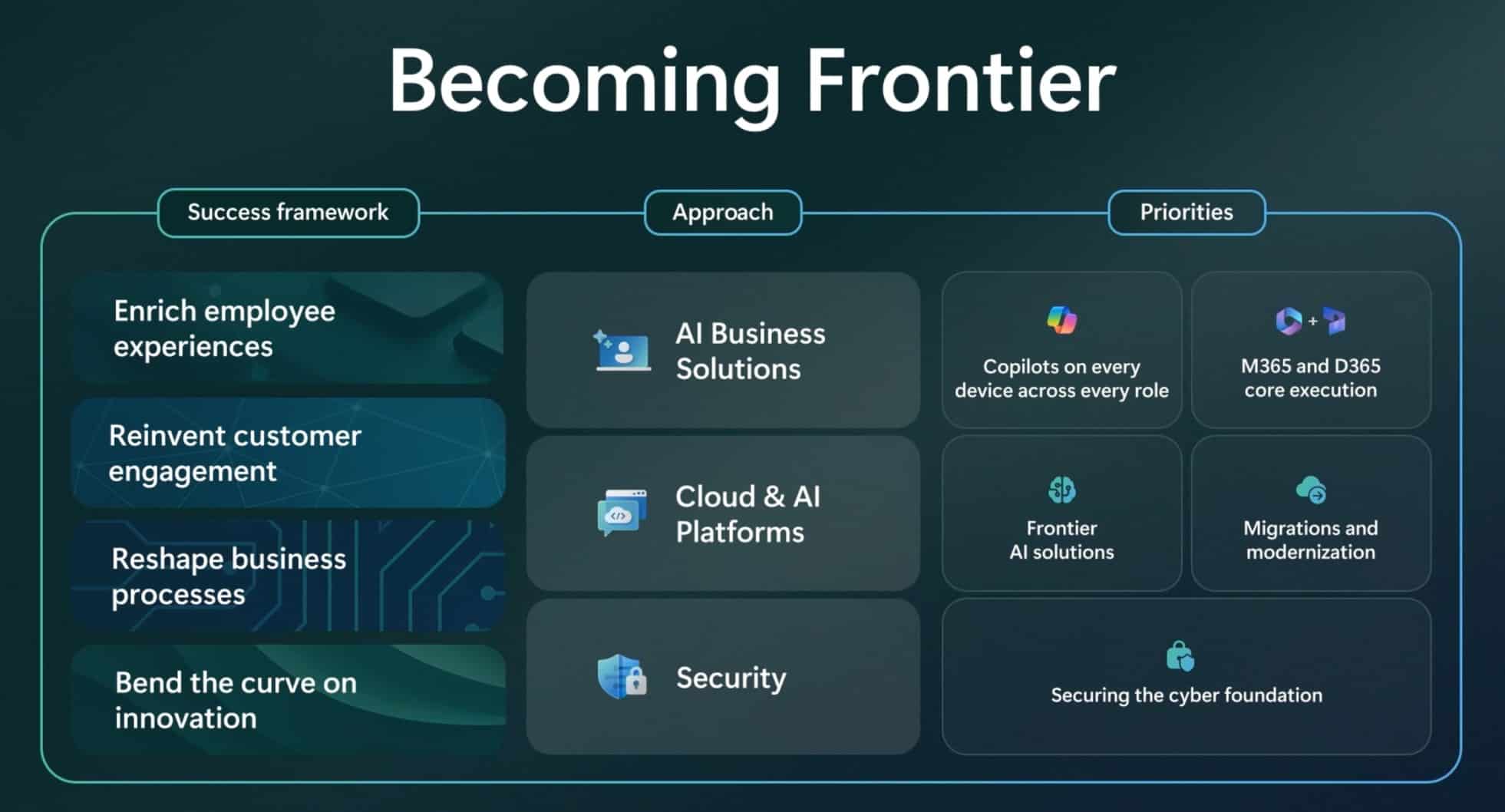

Microsoft’s newly unveiled AI Cloud Partner Programme, featuring the Frontier Suite and Frontier Engineer specialisations, marks a strategic pivot toward industrialising generative AI workloads through tightly coupled Azure infrastructure and partner enablement. The aim is to reduce time-to-production for enterprise AI deployments by standardising model fine-tuning, governance, and deployment pipelines across independent software vendors (ISVs) and systems integrators (SIs) globally.

From Pilot Purgatory to Production Pipelines: The Frontier Suite Architecture

The Frontier Suite is not merely a rebrand of existing Azure AI tools; it represents a layered architectural shift designed to eliminate the chasm between proof-of-concept and scalable deployment. At its core lies the Azure AI Foundry, now augmented with model distillation pipelines that compress large language models (LLMs) into smaller, latency-optimised variants without significant accuracy loss. According to Microsoft’s internal benchmarks, the technique can reduce inference costs by up to 60% on Azure ND H100 v5 VMs while maintaining >90% performance on MMLU and GSM8K evaluations. Crucially, the Suite introduces version-controlled model registries backed by Microsoft Purview (formerly Azure Purview), enabling partners to track data lineage, drift, and compliance across hybrid environments, a direct response to growing enterprise anxiety over AI auditability under impending EU AI Act classifications.
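To make the distillation idea concrete, here is a minimal sketch of one common formulation: the student is trained to match the teacher's temperature-softened output distribution. The article does not say which distillation objective the Foundry pipelines use, so the function names, the temperature value, and the choice of a KL-divergence loss are all assumptions for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Hypothetical helper: a real pipeline would compute this per token
    inside a training framework and usually mix it with a hard-label loss.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss([4.0, 1.0, 0.5], teacher))  # identical logits give zero loss
    print(distillation_loss([0.5, 1.0, 4.0], teacher))  # mismatched logits give a positive loss
```

A higher temperature flattens both distributions, so the student also learns from the relative ranking of the teacher's less likely outputs rather than only its top choice.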

What distinguishes this from competing offerings like Google’s Vertex AI Partner Program or AWS’s ML Elevate is the deep integration with GitHub Advanced Security and Azure DevOps, allowing continuous model retraining triggered by data drift detection via Azure Monitor. Partners gain access to pre-validated LoRA (Low-Rank Adaptation) adapters for domain-specific fine-tuning in sectors like healthcare and finance, reducing custom model development cycles from weeks to days. This isn’t theoretical: early adopters like C3.ai and UiPath report cutting model validation time by 40% using the Suite’s automated red-teaming framework, which simulates adversarial prompts against LLMs using NVIDIA’s NeMo Guardrails.
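The drift-triggered retraining loop can be pictured with a small sketch. The article does not describe how drift is actually measured, so this example swaps in the Population Stability Index (PSI), a widely used drift statistic; the function names, the ten-bin histogram, and the 0.2 threshold (a common rule of thumb) are assumptions, not details of Azure Monitor.

```python
import math

def psi(reference, current, bins=10):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # a small floor avoids log(0) for empty bins
        return [max(c / len(sample), 1e-6) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))

def should_retrain(reference, current, threshold=0.2):
    """Hypothetical trigger: request retraining when drift exceeds the threshold."""
    return psi(reference, current) > threshold

if __name__ == "__main__":
    training_feature = [float(x) for x in range(100)]
    shifted_feature = [x + 50.0 for x in training_feature]
    print(should_retrain(training_feature, training_feature))
    print(should_retrain(training_feature, shifted_feature))
```

In a real pipeline the boolean would be wired to whatever kicks off the retraining job; here it simply reports whether the live feature distribution has moved away from the training one.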

Frontier Engineer: Certifying the New AI Artisan Class

The Frontier Engineer specialisation targets a growing skills gap: the need for practitioners who can bridge MLOps, prompt engineering, and responsible AI governance. Unlike generic Azure certifications, this track requires hands-on assessment in constructing retrieval-augmented generation (RAG) pipelines using Azure AI Search, implementing semantic caching to cut token costs, and deploying models with dynamic batching via Triton Inference Server — all assessed through a live lab environment graded against ISO/IEC 42001 AI management system standards.
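Semantic caching, one of the assessed skills, is straightforward to sketch: before calling the model, look for a previously answered prompt that is close enough in embedding space and reuse its response. The example below is a toy under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, and `SemanticCache`, `answer`, and the 0.8 similarity threshold are hypothetical names and values, not part of any Azure API.

```python
import math
from collections import Counter

def embed(text):
    """Toy bag-of-words embedding; a real pipeline would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def get(self, prompt):
        """Return the cached response for the most similar prompt, if similar enough."""
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt, response):
        self.entries.append((embed(prompt), response))

def answer(prompt, cache, call_model):
    """Serve from the cache when possible; otherwise pay for a model call."""
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    response = call_model(prompt)
    cache.put(prompt, response)
    return response
```

Every cache hit avoids a full model call, which is where the token savings come from; the threshold controls the trade-off between savings and the risk of returning an answer to a subtly different question.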

As one Azure Partner Network architect noted during a closed-door briefing last week, “We’re not just certifying button-clickers; we’re validating engineers who can optimise a Llama 3 70B model for sub-200ms p99 latency on Azure NDv5 while keeping token costs under $0.0003 per 1K tokens — that’s the bar.” This focus on measurable efficiency aligns with Microsoft’s internal goal to drive partner-led AI consumption to 30% of Azure AI revenue by FY27, up from an estimated 12% in FY24.
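The two numbers in that quote can be checked with simple arithmetic. The sketch below computes a nearest-rank p99 from measured latencies and derives cost per 1K tokens from an hourly VM price and sustained throughput. The function names are hypothetical, and the price and throughput in the usage lines are illustrative placeholders, not Azure list prices or measured Llama 3 70B figures.

```python
import math

def p99(latencies_ms):
    """Nearest-rank 99th percentile of a list of latencies."""
    ordered = sorted(latencies_ms)
    rank = math.ceil(len(ordered) * 99 / 100)
    return ordered[rank - 1]

def cost_per_1k_tokens(hourly_vm_cost_usd, tokens_per_second):
    """Infrastructure cost per 1,000 tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_vm_cost_usd / tokens_per_hour * 1000

def meets_bar(latencies_ms, hourly_vm_cost_usd, tokens_per_second,
              max_p99_ms=200.0, max_cost_usd=0.0003):
    """Check both targets from the quote: p99 latency and cost per 1K tokens."""
    return (p99(latencies_ms) < max_p99_ms
            and cost_per_1k_tokens(hourly_vm_cost_usd, tokens_per_second) < max_cost_usd)

if __name__ == "__main__":
    latencies = [100.0] * 99 + [500.0]  # one slow outlier in 100 requests
    # Illustrative inputs only: 10 USD per hour and 10,000 tokens per second.
    print(p99(latencies))
    print(cost_per_1k_tokens(10.0, 10_000))
    print(meets_bar(latencies, 10.0, 10_000))
```

The cost formula makes the dependency explicit: at a fixed hourly price, the only way under the cost ceiling is higher sustained throughput, which is why batching and caching matter to the certification.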

Ecosystem Implications: Lock-in, Leverage, and the Open-Source Tension

While Microsoft positions the Frontier Suite as an open enablement layer, its technical dependencies reveal a nuanced strategy. The Suite’s model optimisation tools are tightly coupled to Azure Machine Learning’s managed pipelines, which currently lack parity with open-source alternatives like Kubeflow Pipelines when deployed on non-Azure infrastructure. The pre-validated LoRA adapters are distributed via Azure Model Catalog, creating a potential gravity well that could discourage partners from exporting fine-tuned models to rival clouds — a concern echoed by several open-source AI advocates.

“Microsoft is building a very compelling on-ramp, but the off-ramps are narrow. If you build your AI IP inside their Foundry, extracting it without rework becomes a non-trivial engineering project — that’s by design, not accident.”

— Dr. Elena Rossi, Chief AI Architect, Red Hat

This dynamic mirrors broader cloud AI trends where providers differentiate through proprietary tooling rather than raw model access. Yet, Microsoft has made concessions: the Frontier Suite supports ONNX Runtime for model export, and partners can bring their own LLMs (including Mistral and Llama variants) into the Foundry for optimisation. Still, the real lock-in may come not from technology but from economics — partners achieving Frontier Engineer status gain access to co-sell incentives and Azure consumption credits that can offset up to 40% of infrastructure costs during the first year of deployment.

Cybersecurity and Governance: The Hidden Layer

Beneath the enablement narrative lies a robust security framework often overlooked in partner announcements. The Frontier Suite integrates Microsoft’s AI Security Risk Assessment framework, which automatically scans fine-tuned models for prompt injection vulnerabilities, data leakage risks, and bias amplification using counterfactual fairness testing. These scans feed into Azure Policy, enabling enforcement of organisational guardrails — for example, blocking model deployment if toxicity scores exceed thresholds set in Azure AI Content Safety.
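The guardrail example amounts to a gate between scanning and deployment. A minimal sketch of that logic follows; `ScanResult`, `Guardrails`, and their fields and default thresholds are hypothetical and do not reflect the actual schema of Azure Policy or Azure AI Content Safety.

```python
from dataclasses import dataclass

@dataclass
class ScanResult:
    """Hypothetical summary of a model scan; field names are illustrative."""
    toxicity: float
    prompt_injection_findings: int
    data_leakage_findings: int

@dataclass
class Guardrails:
    """Hypothetical organisational thresholds."""
    max_toxicity: float = 0.2
    max_prompt_injection_findings: int = 0
    max_data_leakage_findings: int = 0

def violations(scan, guardrails):
    """Return human-readable reasons to block deployment (empty if none)."""
    found = []
    if scan.toxicity > guardrails.max_toxicity:
        found.append(f"toxicity {scan.toxicity:.2f} exceeds {guardrails.max_toxicity:.2f}")
    if scan.prompt_injection_findings > guardrails.max_prompt_injection_findings:
        found.append(f"{scan.prompt_injection_findings} prompt injection finding(s)")
    if scan.data_leakage_findings > guardrails.max_data_leakage_findings:
        found.append(f"{scan.data_leakage_findings} data leakage finding(s)")
    return found

def can_deploy(scan, guardrails):
    """Deployment is allowed only when no guardrail is violated."""
    return not violations(scan, guardrails)

if __name__ == "__main__":
    policy = Guardrails()
    print(can_deploy(ScanResult(0.05, 0, 0), policy))
    print(violations(ScanResult(0.50, 1, 0), policy))
```

Returning the list of reasons rather than a bare boolean keeps the gate auditable, which matters when a blocked deployment has to be explained to a compliance team.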

This is particularly relevant given recent findings from the Praetorian Guard’s Attack Helix architecture, which demonstrated how adversarial fine-tuning can embed stealthy backdoors in LLMs that evade standard perplexity-based detection. Microsoft’s response — embedding adversarial robustness checks directly into the model registration pipeline — represents a proactive shift from reactive patching to preemptive hardening, a practice still rare among cloud AI providers.

The Takeaway: Industrial AI, Partnered

Microsoft’s AI Cloud Partner Programme update is less about flashy new features and more about operationalising AI at scale through partner empowerment. By combining architectural rigour in model optimisation, a specialised certification track for AI engineers, and embedded governance controls, the company is attempting to shift the narrative from AI experimentation to AI industrialisation, a move that could reshape how enterprises source, deploy, and trust AI solutions in the coming years. For partners, the incentive is clear: master the Frontier Suite, and you’re not just building models; you’re building repeatable, secure, and profitable AI businesses on Azure.