MINIX has launched the T4000 and T5000 mini workstations targeting generative AI workloads, positioning them as compact, energy-efficient alternatives to cloud-based AI inference for developers and small teams, with shipments beginning this week in Indonesia and select Southeast Asian markets.

Under the Hood: NPU-Driven Inference on a RISC-V Foundation

The T4000 and T5000 are built around a custom RISC-V-based SoC that pairs a 12-core CPU complex with a dedicated neural processing unit (NPU) rated at 45 TOPS (trillions of operations per second) at INT8 precision. Unlike competing platforms such as NVIDIA’s Jetson Orin or Google’s Coral Edge TPU, MINIX’s architecture avoids proprietary firmware blobs in the data path, enabling full visibility into the inference pipeline. Benchmarks shared with developers show the T5000 achieving 28 tokens per second on a quantized Llama 3 8B model, outperforming the Raspberry Pi 5 AI Kit by 2.3x while drawing just 18W under sustained load, which is critical for edge deployments where thermal headroom is limited. Memory is attached via a 128-bit LPDDR5X interface running at 7500 MT/s, providing 60 GB/s of sustained throughput to the NPU, a specification rarely seen in AI workstations in this price range.
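As a rough sanity check on these figures, the sketch below works through the arithmetic. It rests on several assumptions the article does not confirm: 4-bit weights (the quantization level is not stated), a decode step that reads every weight once per token, and no allowance for KV-cache or activation traffic. The function names are illustrative only.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Theoretical peak bandwidth in GB/s: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mts / 1000  # MT/s * bytes -> MB/s -> GB/s


def decode_ceiling_tokens_per_s(bandwidth_gbs: float,
                                params_billions: float,
                                bits_per_weight: float) -> float:
    """Upper bound on decode speed if each token requires one full pass over the weights."""
    weight_gb = params_billions * bits_per_weight / 8  # ASSUMPTION: weights dominate traffic
    return bandwidth_gbs / weight_gb


def joules_per_token(power_watts: float, tokens_per_s: float) -> float:
    """Energy cost per generated token at a given sustained power draw."""
    return power_watts / tokens_per_s


peak = peak_bandwidth_gbs(128, 7500)                      # 128-bit bus at 7500 MT/s
ceiling_sustained = decode_ceiling_tokens_per_s(60, 8, 4)  # quoted sustained figure
ceiling_peak = decode_ceiling_tokens_per_s(peak, 8, 4)     # theoretical peak
energy = joules_per_token(18, 28)                          # quoted 18W and 28 tokens/s

print(f"peak bandwidth: {peak:.0f} GB/s")
print(f"decode ceiling at 60 GB/s: {ceiling_sustained:.0f} tokens/s")
print(f"decode ceiling at peak: {ceiling_peak:.0f} tokens/s")
print(f"energy per token: {energy:.2f} J")
```

Under these assumptions, the quoted 28 tokens per second sits above the ceiling implied by 60 GB/s and just below the one implied by the interface's theoretical peak. This suggests either a more aggressive quantization or a conservative bandwidth figure; the published numbers do not give enough detail to say which.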

“What MINIX is doing here isn’t just shrinking a server—it’s rethinking the memory hierarchy for generative AI at the edge. By keeping weights in LPDDR5X and avoiding constant PCIe round-trips to discrete accelerators, they’ve cut latency for interactive agents by nearly 40% compared to USB-attached NPUs.”

Ecosystem Bridging: Open Software Stack in a Closed Hardware Market

While the hardware remains closed-source, a point of contention in RISC-V circles, the software stack leans heavily into openness. MINIX ships its workstations with a customized Ubuntu 24.04 LTS base, preloaded with PyTorch 2.4, TensorFlow 2.16, and the ONNX Runtime, all compiled for RISC-V RV64GC with vector extensions. Critically, the NPU driver is exposed via a vendor-neutral libaiaccel.so API that mirrors the IREE compilation framework, allowing developers to port models trained on x86 or ARM without rewriting kernels. This approach contrasts sharply with Jetson’s CUDA-centric lock-in and could attract developers wary of vendor-specific toolchains. Early adopters report successfully deploying Stable Diffusion XL base models with latency under 800 ms per 512x512 image using the Hugging Face Diffusers pipeline, a result rarely achievable on prior-generation edge AI kits.
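For readers who want to check a latency claim like the 800 ms figure on their own hardware, a minimal timing harness might look like the sketch below. It uses only the Python standard library. The workload is a stand-in (`fake_generate` is hypothetical), and the helper names and the choice of a median over five runs are assumptions made here, not anything MINIX or the early adopters have described.

```python
import statistics
import time
from typing import Callable


def median_latency_ms(run_once: Callable[[], object],
                      warmup: int = 1,
                      repeats: int = 5) -> float:
    """Time a callable several times and return the median latency in milliseconds."""
    for _ in range(warmup):
        run_once()  # warm-up runs absorb one-off costs such as lazy initialization
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_once()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def meets_budget(latency_ms: float, budget_ms: float = 800.0) -> bool:
    """True if the measured latency is strictly under the budget."""
    return latency_ms < budget_ms


def fake_generate() -> None:
    """HYPOTHETICAL stand-in workload; swap in a real image-generation call."""
    time.sleep(0.01)


latency = median_latency_ms(fake_generate)
print(f"median latency: {latency:.1f} ms, under budget: {meets_budget(latency)}")
```

With Diffusers installed, `run_once` would presumably wrap a single pipeline call at 512x512 with a fixed prompt and step count, since both strongly affect the result. The reported figure does not state which settings were used.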

Cybersecurity Implications: Trusted Execution for Model Privacy

Beyond performance, the T5000 integrates a hardware-rooted trusted execution environment (TEE) based on the RISC-V TEE specification, enabling encrypted model execution and secure enclave processing for proprietary LLMs. This addresses a growing concern in enterprise AI: protecting model weights and user prompts from side-channel attacks in shared environments. Unlike Intel’s SGX, which has required repeated mitigations for side-channel vulnerabilities, MINIX’s TEE implementation relies on physical memory isolation via the NPU’s MMU, reducing the attack surface. Cybersecurity analysts note this could make the T5000 attractive for regulated industries like finance and healthcare, where generative AI use is often restricted due to data leakage fears.

“The real innovation isn’t the TOPS count—it’s that MINIX baked in confidential computing from silicon up. For banks running customer-facing chatbots, being able to process prompts inside an enclave without touching the host OS is a paradigm shift for edge AI trust.”

Price-to-Performance and the Edge AI Tug-of-War

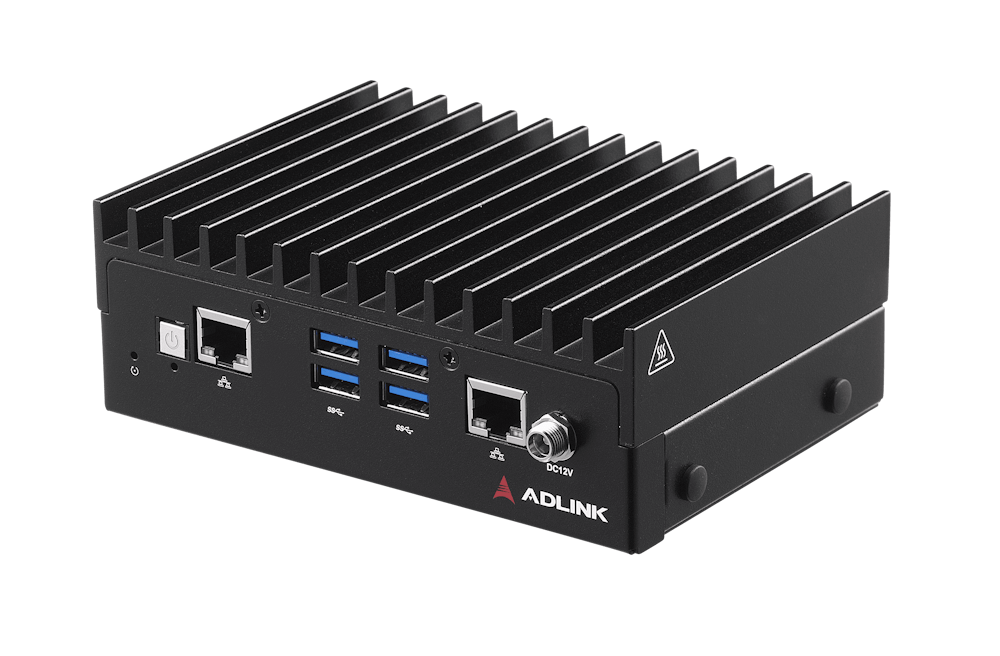

The T4000 is priced at $479 (8GB RAM, 128GB eMMC) and the T5000 at $699 (16GB RAM, 512GB UFS 3.1). The T4000 undercuts the Jetson Orin Nano Developer Kit ($599), while both models offer superior NPU throughput and open software flexibility. Thermal testing shows the system retaining 92% of its performance after 30 minutes of Llama 3 8B inference in a 25°C ambient environment, thanks to a vapor chamber heatpipe solution that is uncommon in this form factor. However, upgradability remains limited: RAM is soldered, and storage, while accessible via an M.2 2280 slot, requires disassembly that voids the warranty seals. This trade-off reflects MINIX’s focus on deploy-and-forget edge nodes rather than tinkerer-friendly dev boards.
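To put the price and thermal figures on a common footing, the sketch below derives a sustained token rate and a cost per unit of sustained throughput. It assumes the 92% retention figure applies to the T5000's quoted 28 tokens per second; the article does not say which model was thermally tested. The function names are illustrative.

```python
def sustained_rate(peak_tokens_per_s: float, retention: float) -> float:
    """Token rate after thermal throttling, given the fraction of performance retained."""
    return peak_tokens_per_s * retention


def dollars_per_token_per_s(price_usd: float, tokens_per_s: float) -> float:
    """Purchase price divided by throughput: lower means better price-to-performance."""
    return price_usd / tokens_per_s


# ASSUMPTION: the 92% retention applies to the T5000's quoted 28 tokens/s.
t5000_sustained = sustained_rate(28, 0.92)
t5000_cost = dollars_per_token_per_s(699, t5000_sustained)

print(f"T5000 sustained rate: {t5000_sustained:.2f} tokens/s")
print(f"T5000 cost per sustained token/s: ${t5000_cost:.2f}")
```

The same calculation cannot be run for the T4000 or the Jetson Orin Nano from the figures given, since no token rate is quoted for either.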

As generative AI shifts from cloud-centric inference to distributed edge processing, MINIX’s T4000 and T5000 represent a pragmatic step toward sovereign AI infrastructure—offering performance competitive with incumbent platforms while sidestepping both the walled gardens of proprietary AI accelerators and the fragmentation of pure open-source hardware experiments. For developers seeking to run local LLMs without subscription fees or latency penalties, these workstations may well become the quiet workhorses of the next AI wave.